MOSS-Audio-Tokenizer: Scaling Audio Tokenizers for Future Audio Foundation Models

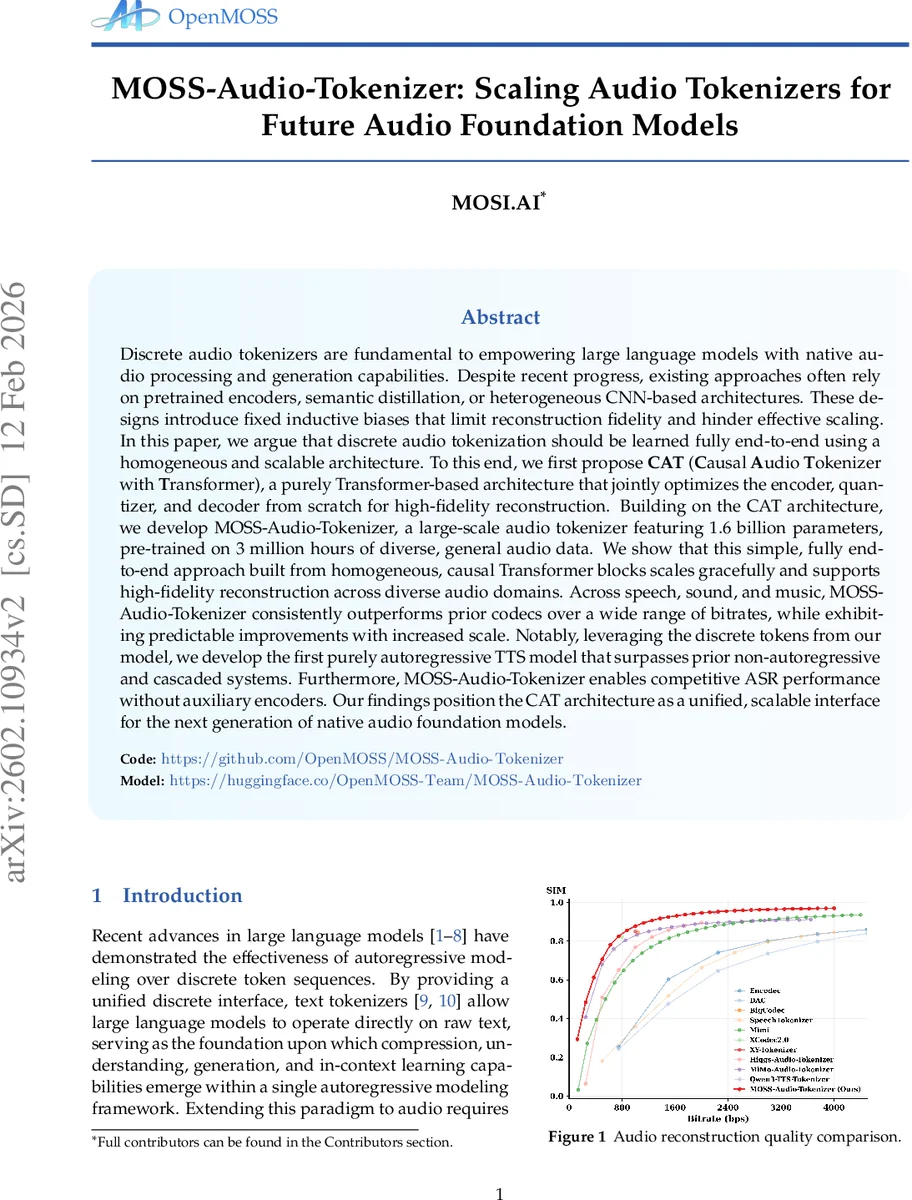

Discrete audio tokenizers are fundamental to empowering large language models with native audio processing and generation capabilities. Despite recent progress, existing approaches often rely on pretrained encoders, semantic distillation, or heterogeneous CNN-based architectures. These designs introduce fixed inductive biases that limit reconstruction fidelity and hinder effective scaling. In this paper, we argue that discrete audio tokenization should be learned fully end-to-end using a homogeneous and scalable architecture. To this end, we first propose CAT (Causal Audio Tokenizer with Transformer), a purely Transformer-based architecture that jointly optimizes the encoder, quantizer, and decoder from scratch for high-fidelity reconstruction. Building on the CAT architecture, we develop MOSS-Audio-Tokenizer, a large-scale audio tokenizer featuring 1.6 billion parameters, pre-trained on 3 million hours of diverse, general audio data. We show that this simple, fully end-to-end approach built from homogeneous, causal Transformer blocks scales gracefully and supports high-fidelity reconstruction across diverse audio domains. Across speech, sound, and music, MOSS-Audio-Tokenizer consistently outperforms prior codecs over a wide range of bitrates, while exhibiting predictable improvements with increased scale. Notably, leveraging the discrete tokens from our model, we develop the first purely autoregressive TTS model that surpasses prior non-autoregressive and cascaded systems. Furthermore, MOSS-Audio-Tokenizer enables competitive ASR performance without auxiliary encoders. Our findings position the CAT architecture as a unified, scalable interface for the next generation of native audio foundation models.

💡 Research Summary

The paper introduces MOSS‑Audio‑Tokenizer, a large‑scale, fully end‑to‑end audio tokenization system built exclusively from causal Transformer blocks (the CAT architecture). Unlike prior neural audio codecs that rely on convolutional front‑ends, pretrained encoders, or multi‑stage pipelines, CAT jointly optimizes the encoder, residual vector quantizer (RVQ), decoder, and adversarial discriminators in a single training loop. The model operates on raw 24 kHz waveforms, patches them into fixed‑dimensional vectors, and progressively reduces temporal resolution through “patchify” layers, yielding a token stream at 12.5 Hz. Quantization is performed with 32 RVQ layers; during training a quantizer‑dropout mechanism randomly disables some layers, enabling robust performance across a wide bitrate range (0.125 kbps to 4 kbps).

Training is performed on an unprecedented 3 million hours of diverse audio (speech, music, environmental sounds) paired with textual annotations (ASR transcripts, captions). In addition to a multi‑scale mel‑spectrogram L1 reconstruction loss, the objective includes commitment and codebook losses for the quantizer, adversarial and feature‑matching losses from multiple discriminators, and a semantic loss where a 0.5 B‑parameter language model is conditioned on the quantized representations to predict text. This multi‑task setup forces the discrete tokens to be both acoustically faithful and semantically rich, turning them into a true “audio vocabulary” for downstream language models.

Scaling experiments show that increasing model parameters (up to 1.6 B) and batch size yields near‑linear improvements in reconstruction quality, confirming the scalability of the homogeneous design. Compared against state‑of‑the‑art codecs such as EnCodec, Hifi‑Codec, and X‑Codec, MOSS‑Audio‑Tokenizer consistently achieves higher PESQ, STOI, and ViSQOL scores across all evaluated bitrates, with especially large gains at low bitrates where traditional codecs suffer severe quality loss. Subjective MOS evaluations corroborate these findings for speech, music, and environmental audio.

The authors further demonstrate two downstream applications. First, they build CAT‑TTS, a purely autoregressive text‑to‑speech model that directly generates RVQ tokens conditioned on text and speaker prompts. To allow a single model to operate at multiple bitrates, they propose Progressive Sequence Dropout: during training, deeper RVQ layers are progressively masked, teaching the model to synthesize coherent audio even when only a subset of quantization levels is used at inference. CAT‑TTS outperforms the best non‑autoregressive and cascaded TTS systems in MOS, particularly excelling at very low bitrates (≤0.5 kbps). Second, they feed the tokenizer’s discrete tokens directly into a language model for automatic speech recognition, achieving 4.9 % WER on LibriSpeech test‑clean and 12.3 % on test‑other without any separate acoustic front‑end. This result highlights that the tokens retain sufficient linguistic information and temporal continuity for high‑performing ASR.

Overall, the work establishes that a Transformer‑only, causal, end‑to‑end architecture can serve as a universal, scalable interface between raw audio and large language models. By removing architectural heterogeneity and pretrained dependencies, the system scales gracefully with data and compute, while delivering state‑of‑the‑art reconstruction and enabling competitive generative and discriminative downstream tasks. The authors suggest that such tokenizers will become the foundational “audio embedding” layer for future multimodal foundation models, supporting real‑time streaming, low‑bitrate transmission, and a wide array of interactive AI applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment