Anagent For Enhancing Scientific Table & Figure Analysis

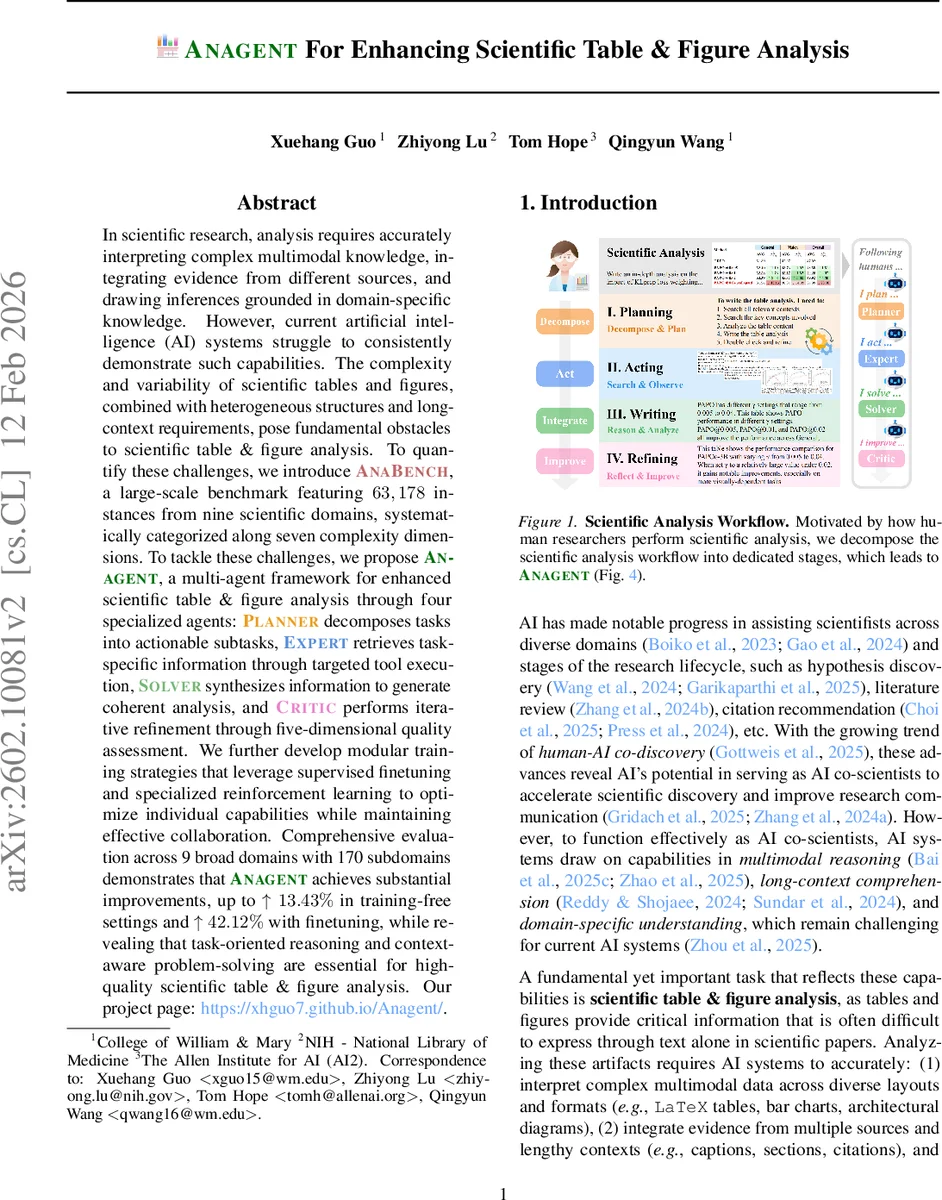

In scientific research, analysis requires accurately interpreting complex multimodal knowledge, integrating evidence from different sources, and drawing inferences grounded in domain-specific knowledge. However, current artificial intelligence (AI) systems struggle to consistently demonstrate such capabilities. The complexity and variability of scientific tables and figures, combined with heterogeneous structures and long-context requirements, pose fundamental obstacles to scientific table & figure analysis. To quantify these challenges, we introduce AnaBench, a large-scale benchmark featuring $63,178$ instances from nine scientific domains, systematically categorized along seven complexity dimensions. To tackle these challenges, we propose Anagent, a multi-agent framework for enhanced scientific table & figure analysis through four specialized agents: Planner decomposes tasks into actionable subtasks, Expert retrieves task-specific information through targeted tool execution, Solver synthesizes information to generate coherent analysis, and Critic performs iterative refinement through five-dimensional quality assessment. We further develop modular training strategies that leverage supervised finetuning and specialized reinforcement learning to optimize individual capabilities while maintaining effective collaboration. Comprehensive evaluation across 9 broad domains with 170 subdomains demonstrates that Anagent achieves substantial improvements, up to $\uparrow 13.43%$ in training-free settings and $\uparrow 42.12%$ with finetuning, while revealing that task-oriented reasoning and context-aware problem-solving are essential for high-quality scientific table & figure analysis. Our project page: https://xhguo7.github.io/Anagent/.

💡 Research Summary

The paper addresses the long‑standing challenge of automatically analyzing scientific tables and figures—a task that requires multimodal perception, long‑context reasoning, and domain‑specific knowledge integration. To quantify the difficulty, the authors introduce AnaBench, a large‑scale benchmark comprising 63,178 instances drawn from nine broad scientific domains (computer science, electrical & electronic engineering, mathematics & physics, economics, biology, finance, statistics, and biomedical science) and 170 fine‑grained sub‑disciplines. Each instance contains one or more tables and/or figures, their surrounding textual context (captions, sections, citations), metadata, and a natural‑language query that specifies the analysis goal. The benchmark categorizes data complexity along four dimensions—type (table, figure, both), domain, format (LaTeX or XML), and source (research article vs. review)—and analysis complexity along three dimensions—width (self‑contained, internal, external, mixed), depth (shallow vs. in‑depth), and objective (methodology vs. experiment). This taxonomy captures the heterogeneity of scientific literature that prior QA‑oriented benchmarks overlook.

Building on insights from how human scientists conduct analysis, the authors propose Anagent, a multi‑agent framework consisting of four specialized agents:

- Planner – parses the input and decomposes the overall task into a set of actionable subtasks τ₁…τ_Mₚ.

- Expert – for each subtask, iteratively invokes a toolbox of 16 specialized tools (e.g., OCR, numeric extraction, literature search, domain knowledge retrieval) to expand a knowledge base Kₑ.

- Solver – synthesizes the accumulated knowledge Kₙ with the original input and any feedback fᵢ₋₁ to generate a candidate analysis yᵢ.

- Critic – evaluates yᵢ across five quality dimensions—consistency, query‑analysis alignment, knowledge utilization, format correctness, and grounding accuracy—and returns refined feedback fᵢ to guide the next Solver iteration.

The interaction between Solver and Critic forms an iterative refinement loop that mirrors peer‑review style feedback, enabling the system to correct logical gaps, reduce hallucinations, and improve factual grounding.

Training and optimization are handled modularly. Each agent can be equipped with few‑shot exemplars for test‑time adaptation, and supervised fine‑tuning is applied separately to improve Planner’s task decomposition, Expert’s tool‑selection policy, Solver’s generation quality, and Critic’s evaluation accuracy. A reinforcement‑learning stage uses the Critic’s multi‑dimensional scores as reward signals to further align the agents’ collaborative behavior. Moreover, the authors introduce “Agent‑Level Capability Augmentation,” allowing any agent to be swapped with a more powerful language model (e.g., GPT‑4) without retraining the whole system, thereby scaling performance.

Experiments evaluate eight base models (GPT‑4.1‑mini, Gemini‑2.5‑Flash, InternVL‑3.5, Qwen2.5‑VL, Qwen3‑VL, etc.) under three settings: zero‑shot, one‑shot (single example), and fine‑tuned. Evaluation metrics include lexical measures (ROUGE‑L, BLEU, word overlap), semantic similarity (cosine similarity, SciBERT‑Score, METEOR), and a model‑as‑judge protocol where Gemini‑2.5‑Flash and GPT‑4.1‑mini grade each output on the five Critic dimensions. Results show that Anagent consistently outperforms baseline models: in zero‑shot mode it gains 6–9 percentage points in overall accuracy; with one‑shot prompting it adds another 5–7 points; and after fine‑tuning it achieves up to a 30 % absolute improvement over the best baseline. The gains are especially pronounced on instances requiring deep analytical reasoning and mixed‑width context integration, confirming the importance of the Critic‑guided refinement. Ablation studies demonstrate that removing any agent degrades performance, and that Agent‑Level Capability Augmentation yields an extra 2–3 % boost.

In summary, the paper makes three major contributions: (1) a comprehensive, multi‑dimensional benchmark (AnaBench) that captures the full spectrum of challenges in scientific table and figure analysis; (2) a novel multi‑agent architecture (Anagent) that emulates the human scientific workflow through task decomposition, targeted knowledge retrieval, generation, and iterative quality assessment; and (3) a modular training strategy that enables both zero‑shot adaptability and substantial fine‑tuned gains. By delivering high‑quality, context‑aware analyses of complex scientific visualizations, Anagent moves AI closer to being a reliable co‑scientist, capable of accelerating discovery and improving scientific communication.

Comments & Academic Discussion

Loading comments...

Leave a Comment