Efficient Remote Prefix Fetching with GPU-native Media ASICs

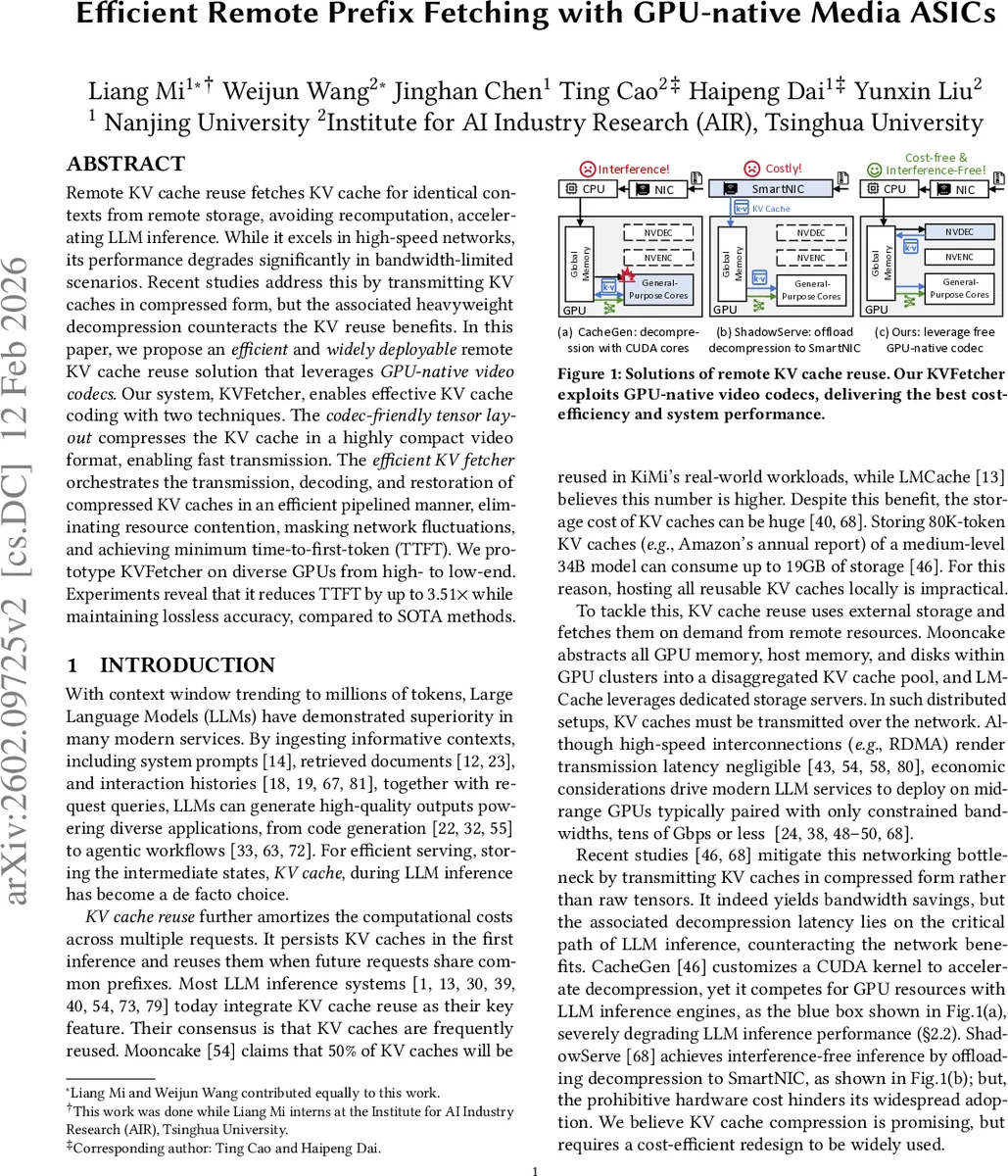

Remote KV cache reuse fetches KV cache for identical contexts from remote storage, avoiding recomputation, accelerating LLM inference. While it excels in high-speed networks, its performance degrades significantly in bandwidth-limited scenarios. Recent studies address this by transmitting KV caches in compressed form, but the associated heavyweight decompression counteracts the KV reuse benefits. In this paper, we propose an efficient and widely deployable remote KV cache reuse solution that leverages GPU-native video codecs. Our system, KVFetcher, enables effective KV cache coding with two techniques. The codec-friendly tensor layout compresses the KV cache in a highly compact video format, enabling fast transmission. The efficient KV fetcher orchestrates the transmission, decoding, and restoration of compressed KV caches in an efficient pipelined manner, eliminating resource contention, masking network fluctuations, and achieving minimum time-to-first-token (TTFT). We prototype KVFetcher on diverse GPUs from high- to low-end. Experiments reveal that it reduces TTFT by up to 3.51 times while maintaining lossless accuracy, compared to SOTA methods.

💡 Research Summary

The paper addresses the latency bottleneck that arises when large‑scale language models (LLMs) reuse key‑value (KV) caches stored remotely. While KV‑cache reuse can eliminate the costly pre‑fill phase for requests that share a common prefix, transmitting raw KV tensors over bandwidth‑constrained networks is prohibitive. Prior work (CacheGen, ShadowServe) compresses KV caches but either incurs heavy GPU‑side decompression overhead that interferes with inference or requires expensive SmartNIC hardware, limiting practical deployment.

KVFetcher proposes a fundamentally different approach: leverage the video encoding/decoding ASICs that modern GPUs already contain (e.g., NVIDIA NVENC/NVDEC). These units are independent of the general‑purpose cores, idle during LLM inference, and capable of high‑throughput, low‑latency, lossless compression. The system consists of two core innovations.

-

Codec‑friendly Tensor Layout – KV tensors (layer × head × token × dimension) are sliced along the token dimension and scattered across consecutive video frames. Multiple resolutions of the same data are generated, enabling adaptive bandwidth usage. Crucially, the layout skips the lossy DCT and quantization steps of typical video codecs and relies only on intra‑frame and inter‑frame prediction, which are lossless. By analyzing the statistical distribution of K and V values (mostly near‑zero with limited variance), the authors achieve a tenfold compression ratio without any degradation in generation quality.

-

Efficient KV Fetcher – This component orchestrates transmission, decoding, and restoration in a pipelined, contention‑free manner. It includes:

- a fetching‑aware scheduler that separates KV‑reuse requests from ordinary inference, preventing KV fetching from blocking non‑reuse queries;

- adaptive‑resolution fetching that monitors real‑time network bandwidth and selects the appropriate video resolution, thereby keeping time‑to‑first‑token (TTFT) minimal across a wide range of network conditions;

- frame‑wise tensor restoration that converts each decoded video frame back to KV tensors on‑the‑fly, using only the memory needed for the current frame. This avoids the 2.7× memory blow‑up observed in CacheGen and eliminates GPU SM under‑utilization caused by kernel context switches.

The authors prototype KVFetcher on three GPU families (NVIDIA H100, A100, RTX 4090) and evaluate three model sizes (7B, 34B, 70B) under network bandwidths from 1 Gbps to 40 Gbps (TCP) and 100/200 Gbps (RDMA). Using a realistic request trace with context windows up to 200 k tokens, KVFetcher reduces TTFT by 1.52–3.51× compared to state‑of‑the‑art compressed‑KV methods, while preserving lossless accuracy. It also eliminates the GPU resource contention and memory bloat that plagued prior CUDA‑based decompression, and it does so without the need for costly SmartNICs, making it suitable for both cloud and edge deployments.

In summary, KVFetcher demonstrates that GPU‑native video codecs provide a cost‑effective, widely available hardware substrate for remote KV‑cache reuse. By co‑designing a compression layout that aligns with video codec primitives and a scheduler that isolates fetching from inference, the system achieves substantial latency reductions and scalability on commodity hardware. Future work may explore multi‑GPU coordination, integration with other GPU‑offload primitives (e.g., TensorRT‑LLM), and extending the approach to other tensor‑heavy workloads.

Comments & Academic Discussion

Loading comments...

Leave a Comment