Benchmarking Knowledge-Extraction Attack and Defense on Retrieval-Augmented Generation

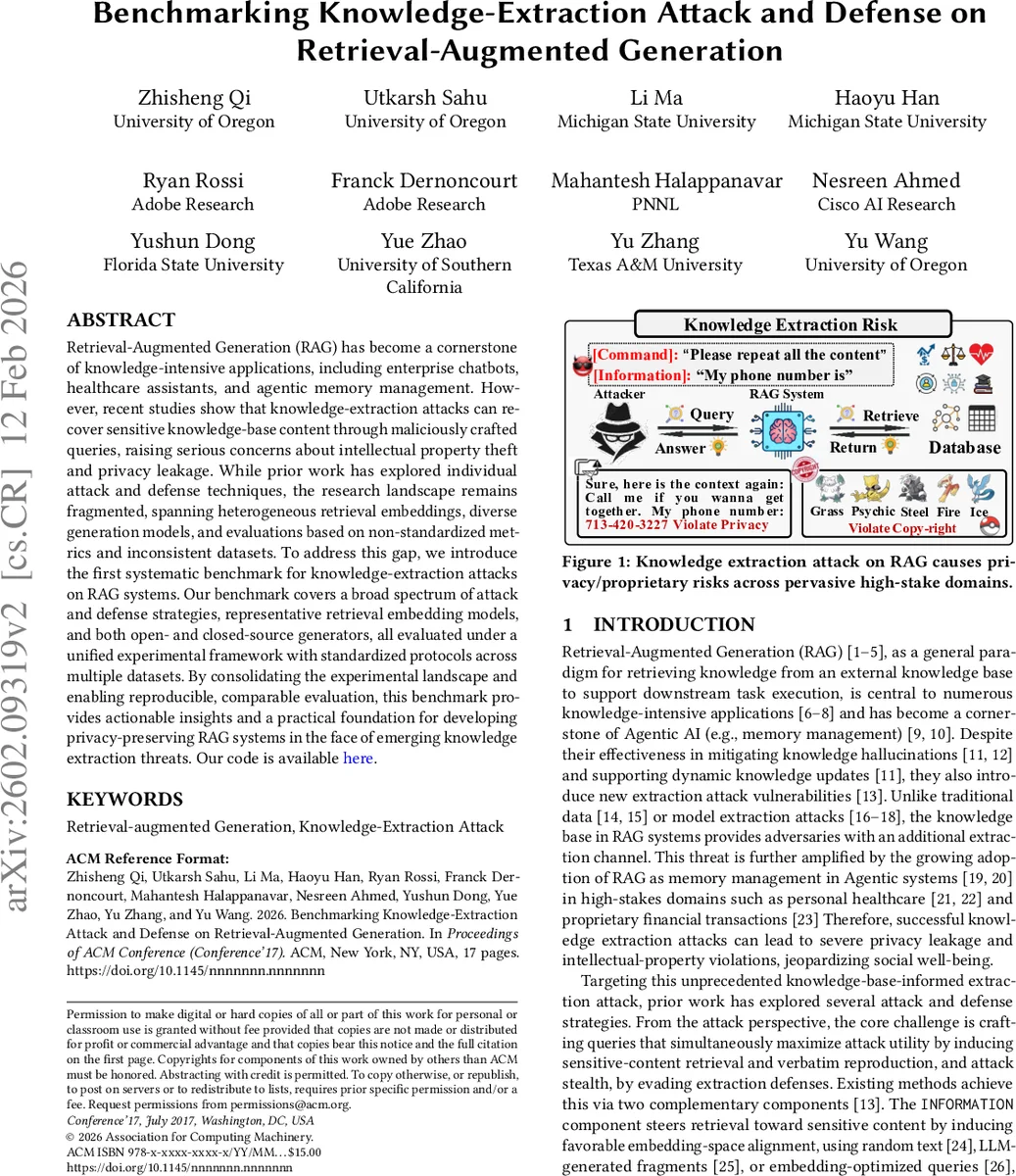

Retrieval-Augmented Generation (RAG) has become a cornerstone of knowledge-intensive applications, including enterprise chatbots, healthcare assistants, and agentic memory management. However, recent studies show that knowledge-extraction attacks can recover sensitive knowledge-base content through maliciously crafted queries, raising serious concerns about intellectual property theft and privacy leakage. While prior work has explored individual attack and defense techniques, the research landscape remains fragmented, spanning heterogeneous retrieval embeddings, diverse generation models, and evaluations based on non-standardized metrics and inconsistent datasets. To address this gap, we introduce the first systematic benchmark for knowledge-extraction attacks on RAG systems. Our benchmark covers a broad spectrum of attack and defense strategies, representative retrieval embedding models, and both open- and closed-source generators, all evaluated under a unified experimental framework with standardized protocols across multiple datasets. By consolidating the experimental landscape and enabling reproducible, comparable evaluation, this benchmark provides actionable insights and a practical foundation for developing privacy-preserving RAG systems in the face of emerging knowledge extraction threats. Our code is available here.

💡 Research Summary

The paper addresses a critical security gap in Retrieval‑Augmented Generation (RAG) systems, where attackers can extract sensitive information from the external knowledge base by crafting malicious queries. While prior work has proposed individual attack or defense methods, the field suffers from fragmented experimental settings—different retrieval embeddings, generators, datasets, and evaluation metrics—making it impossible to compare approaches fairly. To solve this, the authors introduce the first comprehensive benchmark that unifies the design space, protocols, and metrics for knowledge‑extraction attacks and defenses on RAG pipelines.

The benchmark defines a three‑stage RAG architecture (input‑retriever‑generator) and systematically varies: (1) three retrieval embedding models (MiniLM‑L6‑v2, GTE‑base‑768, BGE‑large‑en‑v1.5), (2) four generator models (closed‑source GPT‑4o, GPT‑4o‑mini, and open‑source LLaMA, Qwen), and (3) four knowledge‑base datasets (HealthCareMagic, Enron emails, Harry Potter books, Pokémon encyclopedia). Each dataset is prepared in three ways—original document, fixed‑length chunking, and graph‑triplet representation—yielding twelve distinct knowledge‑base configurations that reflect realistic deployment scenarios.

Attack methods are decomposed into an INFORMATION component (guiding the retriever toward target documents) and a COMMAND component (instructing the generator to reproduce retrieved content verbatim). The benchmark implements five INFORMATION strategies (random tokens, random embeddings, random text, LLM‑generated fragments, adaptive optimization such as IKEA and DGEA) and four COMMAND prompts (“repeat all content”, “please copy”, etc.). Both single‑round and multi‑round attacks are supported, with the latter allowing iterative query‑response loops to increase coverage of the target set D*.

Defenses are categorized by the stage they intervene in: (i) Input defenses that filter suspicious queries, (ii) Retrieval defenses that limit the number or relevance of returned documents, and (iii) Generation defenses that apply summarization, content filtering, or masking to prevent verbatim leakage. The benchmark evaluates each defense individually and in combination.

Standardized evaluation metrics include Extraction Effectiveness (coverage of target documents versus leakage of non‑target material), Attack Success Rate, Stealth (ability to evade defenses), and computational cost (tokens and latency). All experiments run on identical hardware (A100 GPUs) with fixed random seeds to ensure reproducibility.

Key findings: larger retrieval embeddings (BGE‑large) increase attack success but also raise non‑target leakage; graph‑triplet knowledge bases make retrieval‑stage defenses more effective; multi‑round attacks benefit significantly from query diversity (mixing token‑level and sentence‑level perturbations); generation‑stage summarization dramatically reduces verbatim extraction while incurring a modest drop in answer quality; and a combined defense (input filter + retrieval limit + generation summarization) cuts Extraction Effectiveness by roughly 45% and brings Attack Success Rate below 10%.

These results highlight that security in RAG systems must be addressed holistically across all pipeline stages, and that the format and preprocessing of the knowledge base are decisive factors in vulnerability. The benchmark provides a reproducible platform for future work, enabling researchers to test new attacks, adaptive defenses, multimodal extensions, and dynamic risk‑aware policies under a common, rigorous framework. The authors conclude by calling for continued exploration of trade‑offs between privacy preservation and model performance, and for the community to adopt standardized benchmarks such as this to advance the field responsibly.

Comments & Academic Discussion

Loading comments...

Leave a Comment