LLM-in-Sandbox Elicits General Agentic Intelligence

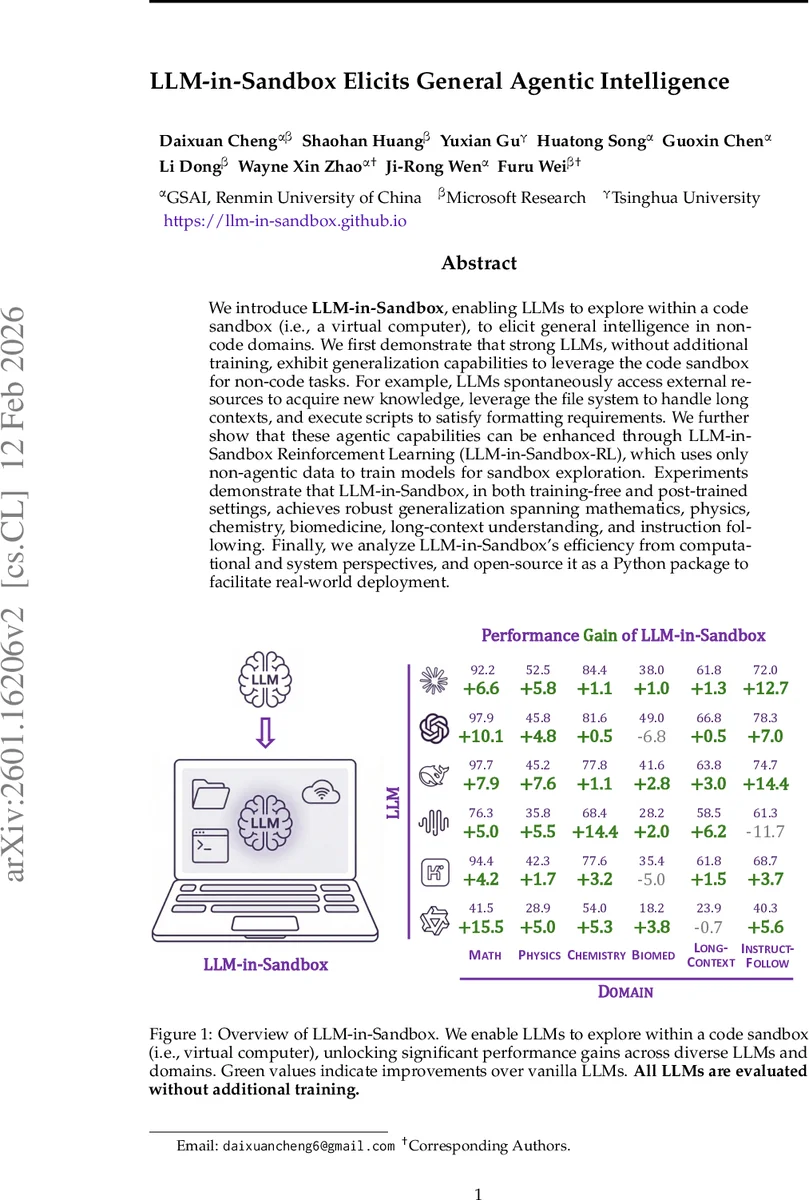

We introduce LLM-in-Sandbox, enabling LLMs to explore within a code sandbox (i.e., a virtual computer), to elicit general intelligence in non-code domains. We first demonstrate that strong LLMs, without additional training, exhibit generalization capabilities to leverage the code sandbox for non-code tasks. For example, LLMs spontaneously access external resources to acquire new knowledge, leverage the file system to handle long contexts, and execute scripts to satisfy formatting requirements. We further show that these agentic capabilities can be enhanced through LLM-in-Sandbox Reinforcement Learning (LLM-in-Sandbox-RL), which uses only non-agentic data to train models for sandbox exploration. Experiments demonstrate that LLM-in-Sandbox, in both training-free and post-trained settings, achieves robust generalization spanning mathematics, physics, chemistry, biomedicine, long-context understanding, and instruction following. Finally, we analyze LLM-in-Sandbox’s efficiency from computational and system perspectives, and open-source it as a Python package to facilitate real-world deployment.

💡 Research Summary

The paper introduces LLM‑in‑Sandbox, a framework that equips large language models (LLMs) with a virtual computer (a Docker‑based Ubuntu sandbox) so that the model can freely execute terminal commands, manage files, and run arbitrary code. Three primitive tools—execute_bash, str_replace_editor, and submit—expose the three meta‑capabilities of any computer: external resource access, persistent file management, and code execution. By giving LLMs these capabilities, the authors hypothesize that the models can solve virtually any task, just as humans use computers.

Design principles are minimalism (only a standard Python interpreter and basic scientific libraries are pre‑installed) and exploration (the model is encouraged to discover and install domain‑specific tools on its own). This contrasts with existing software‑engineering agents that require heavyweight, task‑specific images. The lightweight shared image (≈1.1 GB) enables scalable deployment without per‑task storage overhead.

Workflow builds on the ReAct paradigm: at each turn the model reasons, selects a tool call, receives the observation from the sandbox, and iterates until it calls submit or reaches a turn limit. The system prompt explicitly tells the model to perform calculations via code execution, to store the final answer in a designated file (e.g., /testbed/answer.txt), and that the sandbox is a safe environment for unrestricted exploration. Input data can be placed as files (useful for long‑context tasks), and the model can use shell utilities (grep, sed, awk) and Python scripts to process them.

Experiments evaluate seven recent LLMs (Claude‑Sonnet‑4.5‑Think, GPT‑5, DeepSeek‑V3.2‑Thinking, MiniMax‑M2, Kimi‑K2‑Thinking, Qwen3‑Coder‑30B‑A3B, Qwen3‑4B‑Instruct‑2507) across six non‑code domains: mathematics, physics, chemistry, biomedicine, long‑context understanding, and instruction following. For each model the authors compare the standard “LLM” mode (direct generation) with the “LLM‑in‑Sandbox” mode. Strong agentic models consistently improve, with gains ranging from +1 % to +15.5 % (the largest on Qwen3‑Coder for mathematics). Weaker models, exemplified by Qwen3‑4B‑Instruct, sometimes perform worse in sandbox mode, highlighting a capability gap.

LLM‑in‑Sandbox‑RL is proposed to close this gap. Using only non‑agentic data (general context‑based tasks), the authors train models via reinforcement learning where the task description is provided as files in the sandbox, and the model must explore, execute code, and produce an answer to receive a reward. After RL, previously weaker models not only surpass their vanilla LLM performance in sandbox mode but also show modest improvements in the standard mode, indicating that sandbox exploration yields transferable skills.

Efficiency analysis shows substantial token savings: for 100 K‑token long‑context tasks, token consumption drops to ~13 K (≈8× reduction). Query‑level throughput remains comparable to direct API calls, and the sandbox’s infrastructure overhead is minimal because a single shared container image serves all tasks.

Open‑source release provides a Python package that integrates with popular inference back‑ends such as vLLM and SGLang, as well as API‑based services. This lowers the barrier for researchers and engineers to experiment with agentic LLMs without building custom sandbox infrastructure.

Key insights:

- Providing LLMs with a minimal, general‑purpose sandbox unlocks latent agentic abilities even without additional training.

- The three meta‑capabilities (external resource access, file management, code execution) are sufficient for solving a wide range of non‑coding problems.

- Reinforcement learning on sandbox interactions can endow weaker models with robust exploration skills, improving both sandbox and vanilla performance.

- The approach yields practical efficiency gains (token reduction, modest compute overhead) making it viable for real‑world deployment.

In summary, the work demonstrates that coupling LLMs with a code sandbox is a powerful, scalable route toward general agentic intelligence, and that sandbox‑based reinforcement learning can further democratize these capabilities across models of varying strength.

Comments & Academic Discussion

Loading comments...

Leave a Comment