Learning to Route: A Rule-Driven Agent Framework for Hybrid-Source Retrieval-Augmented Generation

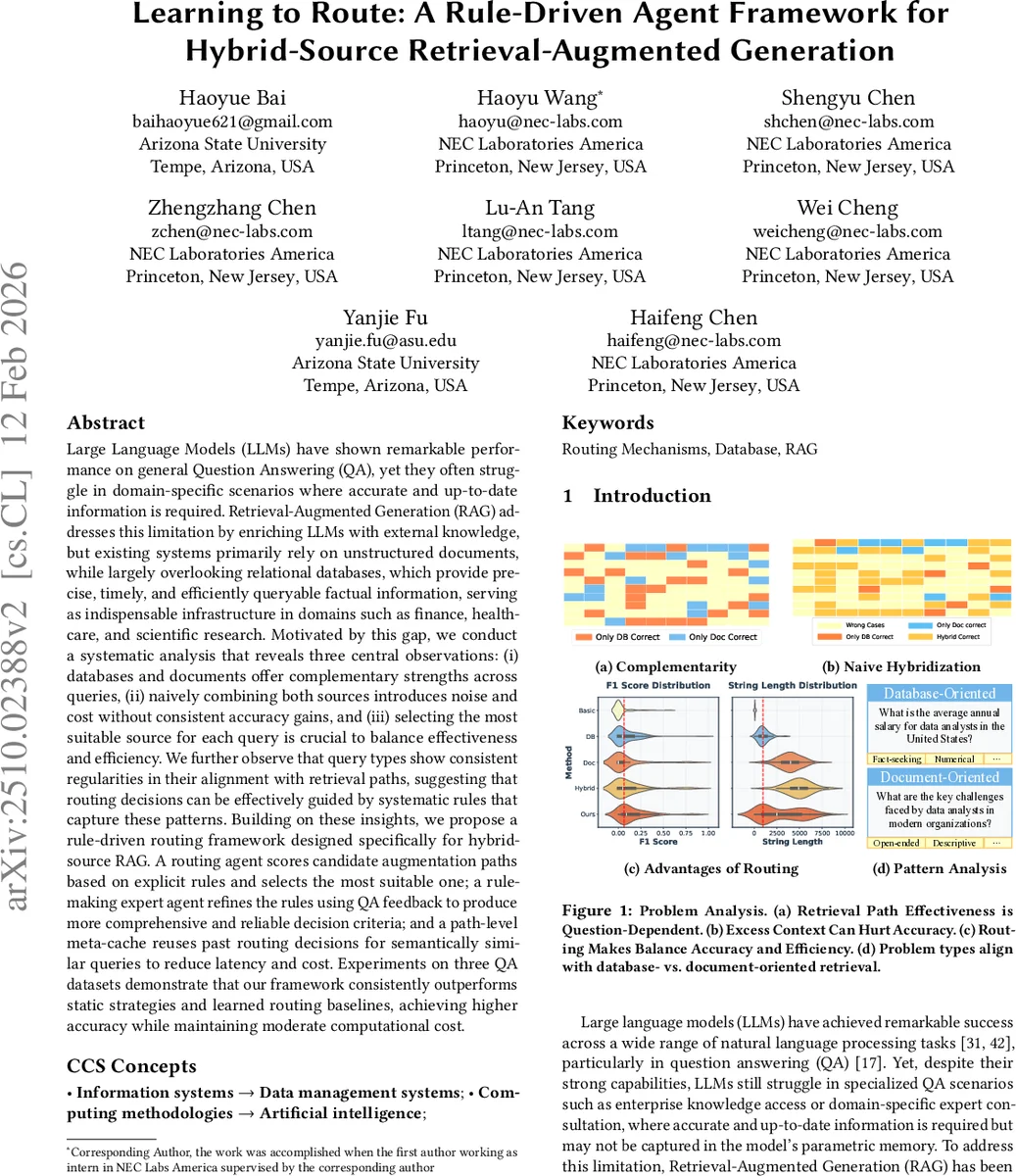

Large Language Models (LLMs) have shown remarkable performance on general Question Answering (QA), yet they often struggle in domain-specific scenarios where accurate and up-to-date information is required. Retrieval-Augmented Generation (RAG) addresses this limitation by enriching LLMs with external knowledge, but existing systems primarily rely on unstructured documents, while largely overlooking relational databases, which provide precise, timely, and efficiently queryable factual information, serving as indispensable infrastructure in domains such as finance, healthcare, and scientific research. Motivated by this gap, we conduct a systematic analysis that reveals three central observations: (i) databases and documents offer complementary strengths across queries, (ii) naively combining both sources introduces noise and cost without consistent accuracy gains, and (iii) selecting the most suitable source for each query is crucial to balance effectiveness and efficiency. We further observe that query types show consistent regularities in their alignment with retrieval paths, suggesting that routing decisions can be effectively guided by systematic rules that capture these patterns. Building on these insights, we propose a rule-driven routing framework. A routing agent scores candidate augmentation paths based on explicit rules and selects the most suitable one; a rule-making expert agent refines the rules over time using QA feedback to maintain adaptability; and a path-level meta-cache reuses past routing decisions for semantically similar queries to reduce latency and cost. Experiments on three QA benchmarks demonstrate that our framework consistently outperforms static strategies and learned routing baselines, achieving higher accuracy while maintaining moderate computational cost.

💡 Research Summary

Large language models (LLMs) excel at general question answering but often falter when answers must be precise, up‑to‑date, or domain‑specific. Retrieval‑Augmented Generation (RAG) mitigates this by feeding external knowledge into the LLM, yet most RAG systems rely exclusively on unstructured text collections. Relational databases—highly structured, timely, and query‑efficient—remain largely untapped despite being critical in finance, healthcare, and scientific research.

The authors first conduct a systematic analysis on the TATQA benchmark using GPT‑4.1‑mini. They compare three augmentation paths: (i) DB‑Only, where relevant rows are retrieved from relational tables, verbalized, and appended to the prompt; (ii) Doc‑Only, where passages from a document corpus are retrieved; and (iii) a naïve Hybrid that concatenates both sources. The findings are threefold: (1) Complementarity – some questions are answered correctly only with DB evidence, others only with documents; no single source dominates. (2) Naïve Hybridization – feeding both sources simultaneously often harms accuracy because redundant or noisy context distracts the LLM, while token count and inference cost explode. (3) Necessity of Routing – an ideal system should assign each query to its most suitable source, achieving higher accuracy with moderate cost. Moreover, they observe consistent regularities: fact‑centric, numerical queries align with databases, whereas open‑ended, descriptive queries align with documents.

Motivated by these observations, the paper proposes a rule‑driven routing framework specifically designed for hybrid‑source RAG. The framework comprises three interacting components:

-

Rule‑Driven Routing Agent – It evaluates candidate augmentation paths (DB, Doc, Hybrid) using an explicit set of interpretable rules. Rules are simple “if‑else” conditions based on observable query attributes such as length, presence of numeric or domain‑specific keywords, expected answer type, and privacy considerations. Each rule contributes a weighted score to each path; the path with the highest aggregate score is selected.

-

Rule‑Making Expert Agent – To avoid static, hand‑crafted rules, an expert agent refines the rule set using feedback from QA performance. After each batch of queries, the system records whether the chosen path yielded a correct answer, the token usage, and monetary cost. The expert agent, implemented as an LLM prompted to suggest rule modifications, proposes additions, deletions, or weight adjustments. A regularization term penalizes rule proliferation and excessive complexity, ensuring the rule base remains transparent and manageable.

-

Path‑Level Meta‑Cache – Routing decisions are cached at the path level for semantically similar queries. Queries are embedded with a pre‑trained sentence transformer; embeddings are clustered via FAISS. For a new query, the system retrieves its nearest cluster and reuses the most frequently selected path within that cluster, bypassing the routing and expert agents. This dramatically reduces latency and cost, especially for repetitive enterprise queries.

The authors evaluate the framework on three QA benchmarks: TATQA (financial), FinQA (accounting), and a medical QA set. Baselines include static strategies (DB‑Only, Doc‑Only, Hybrid‑All) and learned routers (a lightweight classifier and an LLM‑prompt router). Metrics are F1 score, average token count, and inference cost in USD. Results show that the rule‑driven system consistently outperforms all baselines, achieving 3–5 percentage‑point gains in F1 while cutting token usage by roughly 30 % compared to the naïve hybrid. The expert agent’s periodic rule updates enable the system to adapt to new query patterns without manual intervention, preserving performance on emerging question types. The meta‑cache further reduces average latency by up to 40 % and saves additional inference cost.

Limitations are acknowledged. Initial rule construction still benefits from domain expertise; fully automated rule discovery remains an open challenge. Complex multi‑join SQL queries rely on the quality of the underlying text‑to‑SQL component, which can become a bottleneck. The effectiveness of the meta‑cache hinges on the quality of the query embeddings, suggesting future work on dynamic embedding updates. The authors propose extending the rule‑making process with reinforcement learning, improving cache invalidation for real‑time database updates, and exploring richer hybrid fusion strategies beyond simple concatenation.

In summary, this paper demonstrates that a transparent, rule‑based routing mechanism can effectively mediate between relational databases and unstructured documents in a RAG setting. By combining a scoring router, an adaptive expert, and a similarity‑based cache, the system achieves higher answer accuracy, lower computational cost, and greater interpretability than existing static or learned routing approaches. The work offers a practical blueprint for enterprises and research institutions that need to integrate precise, up‑to‑date structured data with broader textual knowledge for reliable question answering.

Comments & Academic Discussion

Loading comments...

Leave a Comment