Logical Structure as Knowledge: Enhancing LLM Reasoning via Structured Logical Knowledge Density Estimation

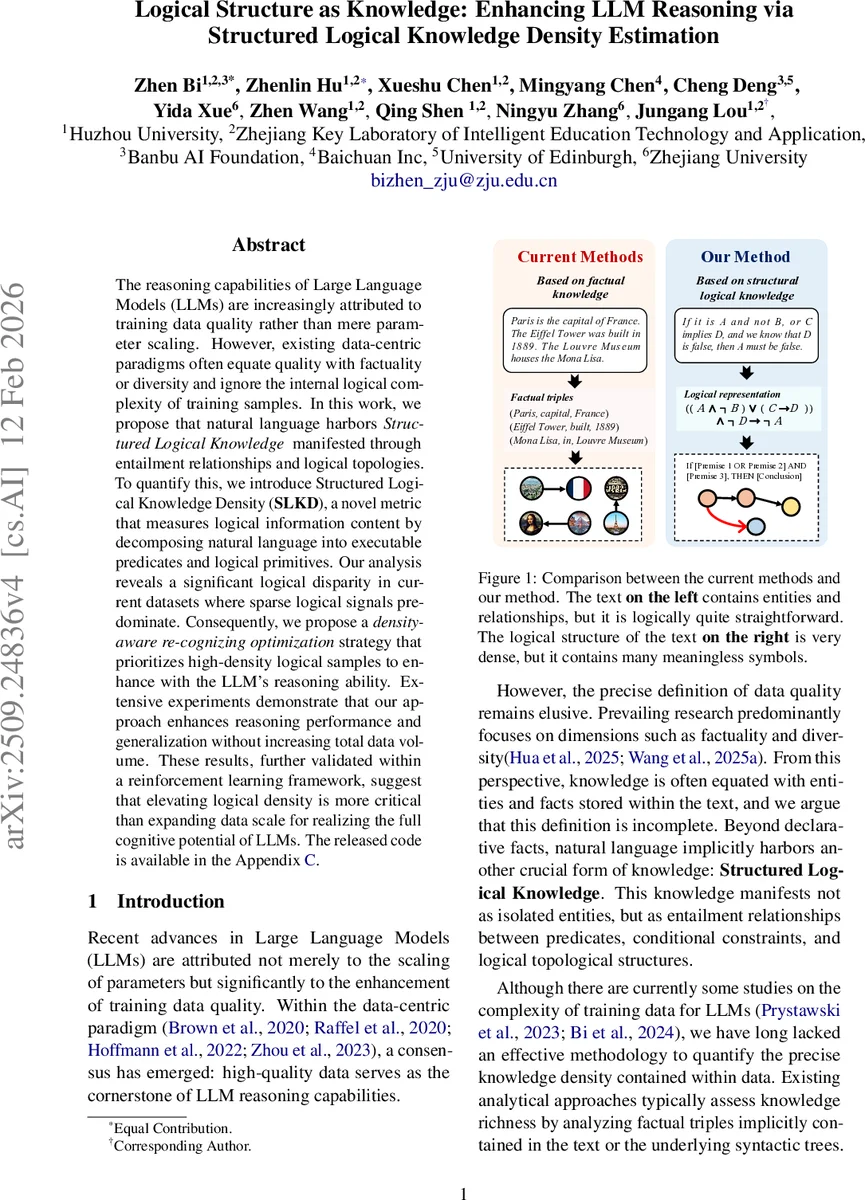

The reasoning capabilities of Large Language Models (LLMs) are increasingly attributed to training data quality rather than mere parameter scaling. However, existing data-centric paradigms often equate quality with factuality or diversity and ignore the internal logical complexity of training samples. In this work, we propose that natural language harbors Structured Logical Knowledge manifested through entailment relationships and logical topologies. To quantify this, we introduce Structured Logical Knowledge Density (SLKD), a novel metric that measures logical information content by decomposing natural language into executable predicates and logical primitives. Our analysis reveals a significant logical disparity in current datasets, where sparse logical signals predominate. Consequently, we propose a density-aware re-cognizing optimization strategy that prioritizes high-density logical samples to enhance the LLM's reasoning ability. Extensive experiments demonstrate that our approach enhances reasoning performance and generalization without increasing total data volume. These results, further validated within a reinforcement learning framework, suggest that elevating logical density is more critical than expanding data scale for realizing the full cognitive potential of LLMs. The released code is available in Appendix C.
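The abstract does not spell out how the density-aware strategy prioritizes samples. One simple reading, given here purely as an assumption and not as the authors' method, keeps the number of training samples fixed and resamples them with probability proportional to their SLKD score. The function `density_weighted_resample` and its `temperature` parameter are hypothetical.

```python
import random


def density_weighted_resample(samples, densities, temperature=1.0, seed=0):
    """Hypothetical density-aware resampling under a fixed data budget.

    Returns len(samples) items drawn with replacement, each with probability
    proportional to density ** (1 / temperature). The dataset size is
    unchanged, but high-density samples are drawn more often; a lower
    temperature sharpens the preference.
    """
    if len(samples) != len(densities):
        raise ValueError("samples and densities must have the same length")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Negative scores are clipped to zero so they are never drawn.
    weights = [max(d, 0.0) ** (1.0 / temperature) for d in densities]
    rng = random.Random(seed)
    return rng.choices(samples, weights=weights, k=len(samples))


batch = density_weighted_resample(["sparse", "medium", "dense"], [0.1, 0.5, 2.0])
print(len(batch))  # 3
```

Resampling with replacement is only one way to hold "total data volume" constant; ordering (curriculum) or loss reweighting would satisfy the same constraint.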

💡 Research Summary

The paper tackles a fundamental question raised by the recent surge in large language model (LLM) capabilities: is reasoning power driven mainly by sheer model size, or does the logical richness of the training data play a decisive role? While many data-centric works have focused on factual correctness, diversity, or provenance, they have largely ignored the internal logical structure that natural language can encode: entailment relations, conditional constraints, and the topology of reasoning chains.

To fill this gap, the authors introduce Structured Logical Knowledge Density (SLKD), a novel metric that quantifies the "logical information content" of a text sample. The pipeline consists of three stages. First, a "structure decomposition" step uses a powerful LLM (e.g., GPT-4) as a distillation function \(f\) to transform raw sentences into three primitive sets: constants \(C\) (entities or values), predicates \(P\) (relations or properties), and logical expressions \(E\) (formal first-order logic rules). This conversion turns fuzzy natural language into a rigorously defined knowledge base.
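To make the three primitive sets concrete, here is a minimal sketch of a container for the output of \(f\), filled in by hand for a toy sentence. The class name `LogicalPrimitives`, its `counts` helper, and the string encoding of first-order rules are illustrative assumptions; in the described pipeline an LLM would produce the decomposition.

```python
from dataclasses import dataclass, field


@dataclass
class LogicalPrimitives:
    """Hypothetical container for the output of the distillation function f."""
    constants: set[str] = field(default_factory=set)      # C: entities or values
    predicates: set[str] = field(default_factory=set)     # P: relations or properties
    expressions: list[str] = field(default_factory=list)  # E: first-order logic rules

    def counts(self) -> tuple[int, int, int]:
        """Return (|E|, |P|, |C|), the raw counts a density score can weight."""
        return len(self.expressions), len(self.predicates), len(self.constants)


# Hand-written decomposition of:
# "Every student who passes the exam graduates. Alice is a student and passes the exam."
example = LogicalPrimitives(
    constants={"Alice"},
    predicates={"Student", "PassesExam", "Graduates"},
    expressions=[
        "forall x. (Student(x) AND PassesExam(x)) -> Graduates(x)",
        "Student(Alice) AND PassesExam(Alice)",
    ],
)
print(example.counts())  # (2, 3, 1)
```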

Second, a "combinatorial topology analysis" reconstructs the reasoning trajectory required to answer a question. Given the primitives and the target answer, a function \(F\) produces a pre-condition structure \(\overline{E}\) and a sequence of reasoning steps \(S\). Each step \(s_k\) is described by three attributes: the count of logical operators (AND/OR/NOT), the nesting depth \(D_k\) of the expression, and the formal expression itself. Nesting depth receives a quadratic penalty (a term proportional to \(D_k^2\)), reflecting the non-linear increase in cognitive load for deeply nested formulas.
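The two numeric attributes of a step can be read directly off a formula tree. The sketch below assumes a hypothetical encoding (atoms as strings, compound formulas as `(operator, operand, ...)` tuples) and illustrative unit weights `w_op` and `w_depth`; the summary does not give the actual weights or the exact depth convention.

```python
from typing import Union

# Hypothetical encoding: an atom is a string such as "Student(Alice)";
# a compound formula is a tuple (operator, operand, ...).
Expr = Union[str, tuple]
OPERATORS = {"AND", "OR", "NOT"}


def _split(expr: tuple) -> tuple:
    """Return (operator, operands) after checking the operator is supported."""
    op, *args = expr
    if op not in OPERATORS:
        raise ValueError(f"unsupported operator: {op!r}")
    return op, args


def operator_count(expr: Expr) -> int:
    """Number of AND/OR/NOT nodes in the formula tree."""
    if isinstance(expr, str):
        return 0
    _, args = _split(expr)
    return 1 + sum(operator_count(a) for a in args)


def nesting_depth(expr: Expr) -> int:
    """Nesting depth D_k: atoms have depth 0, each operator level adds 1."""
    if isinstance(expr, str):
        return 0
    _, args = _split(expr)
    return 1 + max((nesting_depth(a) for a in args), default=0)


def step_cost(expr: Expr, w_op: float = 1.0, w_depth: float = 1.0) -> float:
    """Illustrative step cost: linear in operators, quadratic in depth."""
    return w_op * operator_count(expr) + w_depth * nesting_depth(expr) ** 2


flat = ("AND", "A", "B", "C")                     # 1 operator, depth 1
nested = ("NOT", ("OR", ("AND", "A", "B"), "C"))  # 3 operators, depth 3
print(step_cost(flat), step_cost(nested))         # 2.0 12.0
```

Under these toy weights the nested formula costs six times as much as the flat one while containing only three times as many operators, which is the effect a quadratic depth penalty is meant to capture.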

Third, the SLKD score aggregates two density components. Contextual Logical Density \(S_{\text{ctx}}\) measures static logical content in the problem description, weighting the number of logical expressions, predicates, and constants, with a squared depth term. Inferential Topology Density \(S_{\text{opt}}(l)\) evaluates the dynamic complexity of each answer option \(l\)'s entailment path, summing over the number of pre-conditions, reasoning steps, operator counts, and depths. The raw score \(S_{\text{raw}} = S_{\text{ctx}} + \sum_{l} S_{\text{opt}}(l)\) is then normalized to the interval
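A minimal sketch of how the two components could combine into \(S_{\text{raw}}\). The summary names what each term counts but not the weights, so the weight tuples (all 1.0 by default), the `Step` and `OptionPath` containers, and the use of a single context-level depth are assumptions. The sketch stops at the raw score because the normalization interval is not stated here.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One reasoning step s_k, reduced to the two numbers the score uses."""
    num_operators: int  # count of AND/OR/NOT in the step's expression
    depth: int          # nesting depth D_k


@dataclass
class OptionPath:
    """Entailment path for one answer option l (hypothetical container)."""
    num_preconditions: int  # size of the pre-condition structure
    steps: list[Step]


def s_ctx(n_expr: int, n_pred: int, n_const: int, depth: int,
          w=(1.0, 1.0, 1.0, 1.0)) -> float:
    """Contextual density: weighted primitive counts plus a squared depth term."""
    a, b, c, d = w
    return a * n_expr + b * n_pred + c * n_const + d * depth ** 2


def s_opt(path: OptionPath, w=(1.0, 1.0, 1.0, 1.0)) -> float:
    """Inferential density of one option: pre-conditions, steps, operators, depths."""
    a, b, c, d = w
    return (a * path.num_preconditions
            + b * len(path.steps)
            + sum(c * s.num_operators + d * s.depth ** 2 for s in path.steps))


def slkd_raw(ctx_score: float, options: list[OptionPath]) -> float:
    """S_raw = S_ctx + sum over options l of S_opt(l); normalization omitted."""
    return ctx_score + sum(s_opt(p) for p in options)


ctx = s_ctx(n_expr=2, n_pred=3, n_const=1, depth=1)                  # 7.0
options = [
    OptionPath(num_preconditions=2, steps=[Step(1, 1), Step(3, 3)]),  # 18.0
    OptionPath(num_preconditions=1, steps=[Step(1, 1)]),              # 4.0
]
print(slkd_raw(ctx, options))  # 29.0
```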
