From CVE Entries to Verifiable Exploits: An Automated Multi-Agent Framework for Reproducing CVEs

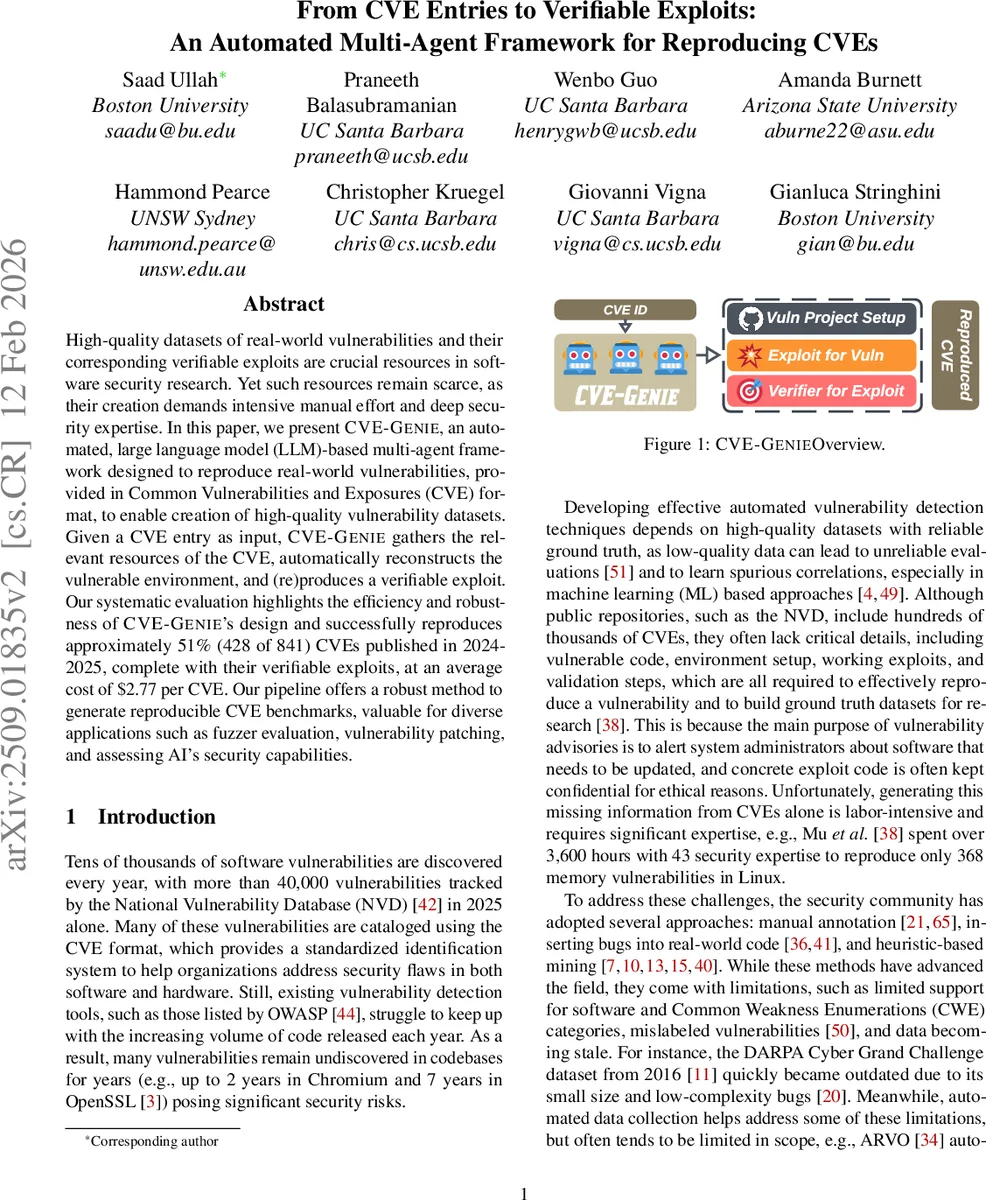

High-quality datasets of real-world vulnerabilities and their corresponding verifiable exploits are crucial resources in software security research. Yet such resources remain scarce, as their creation demands intensive manual effort and deep security expertise. In this paper, we present CVE-GENIE, an automated, large language model (LLM)-based multi-agent framework designed to reproduce real-world vulnerabilities, provided in Common Vulnerabilities and Exposures (CVE) format, to enable creation of high-quality vulnerability datasets. Given a CVE entry as input, CVE-GENIE gathers the relevant resources of the CVE, automatically reconstructs the vulnerable environment, and (re)produces a verifiable exploit. Our systematic evaluation highlights the efficiency and robustness of CVE-GENIE’s design and successfully reproduces approximately 51% (428 of 841) CVEs published in 2024-2025, complete with their verifiable exploits, at an average cost of $2.77 per CVE. Our pipeline offers a robust method to generate reproducible CVE benchmarks, valuable for diverse applications such as fuzzer evaluation, vulnerability patching, and assessing AI’s security capabilities.

💡 Research Summary

The paper introduces CVE‑GENIE, a novel, fully automated, large‑language‑model (LLM) powered multi‑agent framework that can take a CVE identifier as input and output a complete, reproducible exploit together with a verifier for that exploit. The authors argue that while millions of CVEs are catalogued in the National Vulnerability Database, most entries lack the essential artifacts needed for research—vulnerable source code, build environment, working proof‑of‑concept (PoC) code, and a way to confirm that the PoC actually triggers the vulnerability. Existing datasets either rely on labor‑intensive manual annotation, heuristic mining that produces noisy labels, or are limited to specific bug classes (e.g., memory corruption in C/C++ from OSS‑Fuzz). Consequently, there is a gap for a scalable, high‑quality benchmark that satisfies all the requirements of an “EAGER” system: Exploit generation, Assessment (verifier), Generalization across CWEs, languages and projects, End‑to‑end automation, and Rebuild of the vulnerable environment.

CVE‑GENIE’s architecture decomposes the end‑to‑end task into four cooperating modules:

- Processor – Retrieves the vulnerable version of the target project from GitHub, extracts CVE metadata (description, CWE, patch diffs, advisory URLs), and feeds these raw artifacts to a Knowledge Builder.

- Knowledge Builder – Uses a purpose‑tuned LLM to transform the raw data into a concise, structured knowledge base that captures the root cause, any existing PoC, and the exact code changes that fix the bug. If no PoC is available, the LLM is prompted to synthesize a plausible exploit outline.

- Builder – Generates Dockerfiles or other container specifications, resolves dependencies, and compiles the vulnerable version, thereby reconstructing the exact environment required to run the exploit.

- Exploiter – Consumes the knowledge base and the rebuilt environment, and asks a code‑generation LLM to produce a working exploit script. The exploit is then executed inside the container to verify its effect.

- CTF Verifier – Automatically creates a verification harness (often a small CTF‑style checker) that can programmatically confirm whether the exploit successfully triggers the vulnerability.

Each module is implemented as a pair of agents: a “developer” that performs the primary task and a “critic” that reviews the output, following a ReAct‑style iterative loop. This self‑critique mechanism mitigates known LLM limitations such as hallucination and context overflow. The authors also perform careful model selection: code‑focused models (e.g., o1‑mini) for patch analysis, general‑purpose GPT‑4o for exploit synthesis, and Claude‑3 for reasoning‑heavy steps. Prompt engineering is customized per module, and ablation studies show that no single LLM can satisfy all EAGER criteria alone.

In the evaluation, the authors ran CVE‑GENIE on 841 CVEs released between June 2024 and May 2025. The system successfully reproduced 428 CVEs (≈ 51 %) across 267 distinct projects, covering 141 CWE categories and 22 programming languages. The average monetary cost per reproduced CVE was $2.77, a dramatic reduction compared with prior manual efforts that required thousands of person‑hours. The reproduced CVEs include both open‑source and some proprietary projects (when source is supplied), and the generated exploits are verified by the automatically built verifiers. Compared to prior automated pipelines such as ARVO, which are limited to memory‑corruption bugs in C/C++, CVE‑GENIE demonstrates broader generalization.

Limitations are acknowledged: (i) LLM‑generated code may still contain false positives, requiring human review for safety; (ii) closed‑source binaries without accessible source remain out of reach; (iii) complex build systems with custom scripts or hardware dependencies sometimes cause reconstruction failures. The authors propose future work on tighter integration with static/dynamic analysis tools, expanding to multi‑cloud orchestration for scaling, and improving LLM fidelity through reinforcement learning from human feedback.

All source code, the dataset of 428 reproduced CVEs, and full logs of agent interactions are publicly released, providing a valuable resource for downstream tasks such as fuzzer benchmarking, automated patch validation, and evaluating AI’s security capabilities. The paper convincingly demonstrates that LLM‑driven multi‑agent pipelines can move from experimental code generation to practical, end‑to‑end security research infrastructure, filling a critical gap in the vulnerability‑research ecosystem.

Comments & Academic Discussion

Loading comments...

Leave a Comment