Model-based controller assisted domain randomization for transient vibration suppression of nonlinear powertrain system with parametric uncertainty

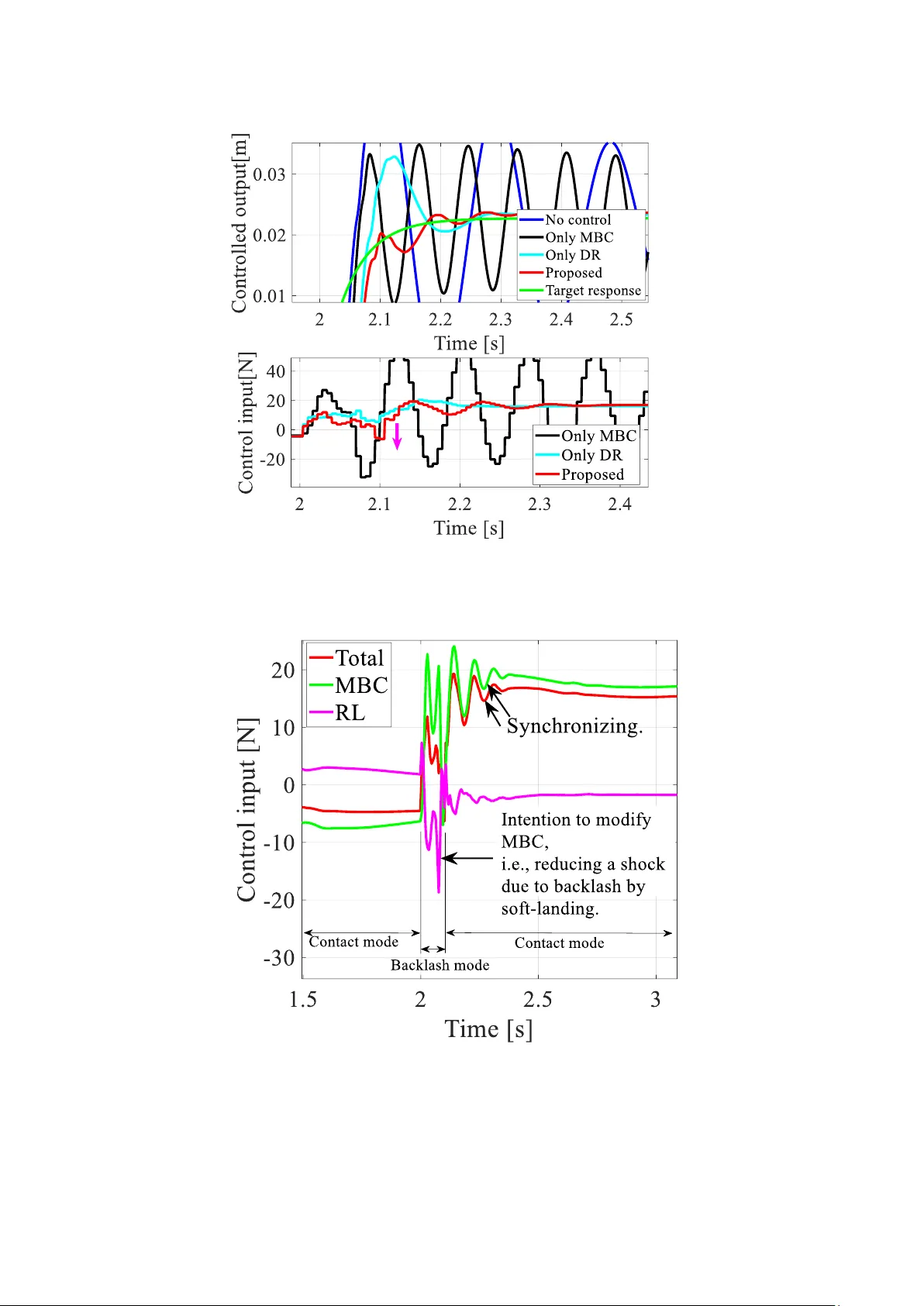

Complex mechanical systems such as vehicle powertrains are inherently subject to multiple nonlinearities and uncertainties arising from parametric variations. Modeling errors are therefore unavoidable, making the transfer of control systems from simu…

Authors: Heisei Yonezawa, Ansei Yonezawa, Itsuro Kajiwara