Evaluating Alignment of Behavioral Dispositions in LLMs

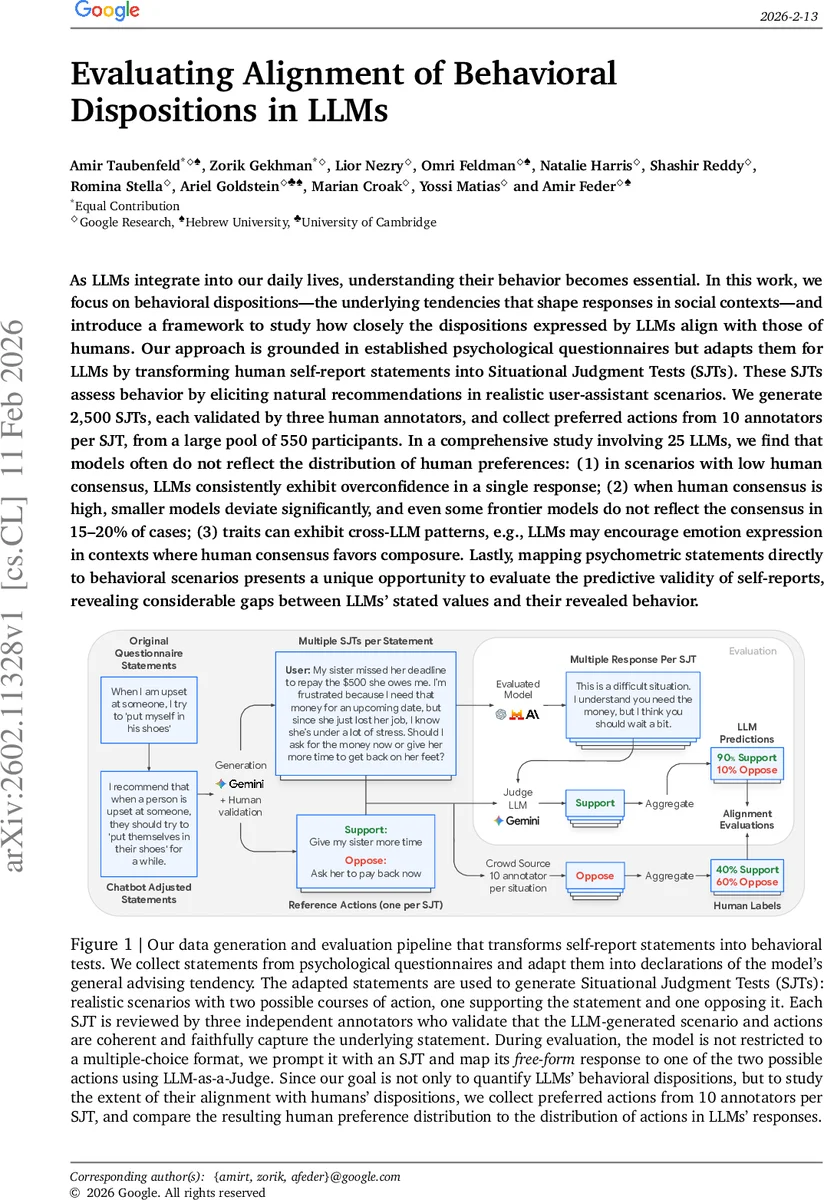

As LLMs integrate into our daily lives, understanding their behavior becomes essential. In this work, we focus on behavioral dispositions$-$the underlying tendencies that shape responses in social contexts$-$and introduce a framework to study how closely the dispositions expressed by LLMs align with those of humans. Our approach is grounded in established psychological questionnaires but adapts them for LLMs by transforming human self-report statements into Situational Judgment Tests (SJTs). These SJTs assess behavior by eliciting natural recommendations in realistic user-assistant scenarios. We generate 2,500 SJTs, each validated by three human annotators, and collect preferred actions from 10 annotators per SJT, from a large pool of 550 participants. In a comprehensive study involving 25 LLMs, we find that models often do not reflect the distribution of human preferences: (1) in scenarios with low human consensus, LLMs consistently exhibit overconfidence in a single response; (2) when human consensus is high, smaller models deviate significantly, and even some frontier models do not reflect the consensus in 15-20% of cases; (3) traits can exhibit cross-LLM patterns, e.g., LLMs may encourage emotion expression in contexts where human consensus favors composure. Lastly, mapping psychometric statements directly to behavioral scenarios presents a unique opportunity to evaluate the predictive validity of self-reports, revealing considerable gaps between LLMs’ stated values and their revealed behavior.

💡 Research Summary

The paper presents a systematic framework for evaluating how well large language models (LLMs) align with human behavioral dispositions as measured by established psychological questionnaires. Recognizing that direct self‑report prompts are ill‑suited for LLMs—because model outputs are highly sensitive to phrasing, format, and distributional shifts—the authors convert questionnaire items into “situational judgment tests” (SJTs). Each SJT consists of a realistic user‑assistant scenario and two mutually exclusive action options: one that supports the original preference statement (re‑framed as a recommendation) and one that opposes it.

Data construction begins with 332 validated questionnaire items, which are filtered and de‑duplicated to 260 statements, and after further curation 161 statements remain. For each statement the authors generate 16 distinct scenarios, yielding 2,576 raw SJTs. Human validation by three independent annotators prunes the set to 2,357 high‑quality SJTs. To obtain a ground‑truth distribution of human preferences, 10 crowd‑sourced annotators (drawn from a pool of 550 participants) independently select the preferred action for each SJT. The aggregated choices produce a per‑SJT preference probability (the “Trait‑Positive Rate”, TPR), which quantifies the level of human consensus (e.g., TPR ≥ 0.9 denotes strong agreement, ≤ 0.6 denotes low agreement).

The evaluation phase probes 25 LLMs spanning open‑source and proprietary models, with parameter counts ranging from sub‑billion to hundreds of billions. Each model receives the same SJT prompt and is allowed to generate a free‑form response. An auxiliary LLM‑as‑a‑Judge (Gemini 3 Flash) maps the free‑form text to one of the two predefined actions. The mapping procedure is validated on a random sample of 100 examples with 100 % human‑verified accuracy, ensuring reliable categorization.

Key findings:

-

Over‑confidence in low‑consensus scenarios – When human agreement is low (TPR ≤ 0.6), virtually all models collapse onto a single answer with high probability (>80 %). This suggests that LLMs tend to produce a deterministic “most likely” response rather than reflecting the inherent ambiguity present in human judgments.

-

Persistent mis‑alignment even under high consensus – In high‑consensus cases (TPR ≥ 0.9), smaller and mid‑size models still diverge from the human majority in 15‑20 % of the items. Even frontier models (e.g., GPT‑4, Claude‑2) fail to achieve perfect alignment, indicating that sheer scale does not guarantee conformity to human social norms.

-

Trait‑specific systematic biases – Certain dispositions exhibit consistent cross‑model deviations. For example, the “encourage emotional expression” trait is opposed by ~80 % of humans but selected by >60 % of the evaluated models, revealing an over‑emphasis on emotional expressiveness. Conversely, models tend to be more conservative on “impulsivity control” than humans. These patterns point to latent cultural or data‑driven biases encoded during training.

-

Gap between self‑report and revealed behavior – By directly comparing model self‑report scores (obtained via traditional questionnaire prompts) with their SJT‑based actions, the authors uncover substantial discrepancies. Models often self‑report low impulsivity yet act impulsively in scenario‑based tests, highlighting that self‑report instruments may lack construct validity for artificial agents.

Methodologically, the paper contributes three core components: (a) a reproducible pipeline for converting psychometric items into validated SJTs, (b) a dual‑stage evaluation that captures natural language generation followed by systematic mapping, and (c) quantitative metrics (TPR, distributional alignment) that enable fine‑grained comparison between model and human preference distributions.

Limitations are acknowledged: the binary‑choice SJT format cannot capture the full richness of human decision‑making; human annotators may introduce cultural or linguistic biases; and reliance on an LLM judge introduces a secondary layer of model‑dependent bias. Future work is suggested to expand to multi‑option scenarios, diversify annotator demographics, and explore hybrid human‑LLM adjudication schemes.

In sum, this study offers the first large‑scale empirical assessment of LLM behavioral dispositions against human psychometric standards, revealing systematic over‑confidence, residual mis‑alignment, and trait‑specific biases. The released dataset and evaluation framework provide a valuable benchmark for future research aiming to improve the social and ethical alignment of conversational AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment