Active Zero: Self-Evolving Vision-Language Models through Active Environment Exploration

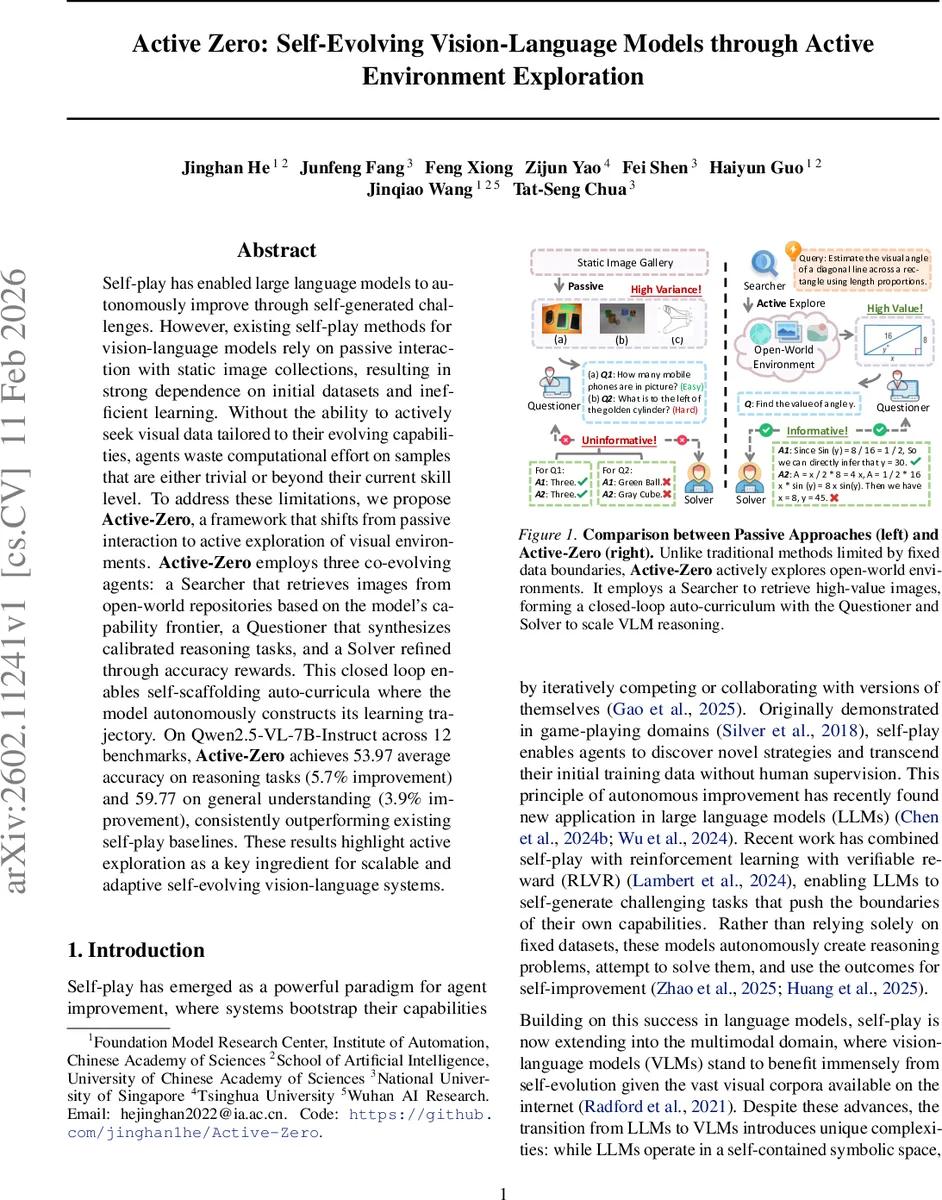

Self-play has enabled large language models to autonomously improve through self-generated challenges. However, existing self-play methods for vision-language models rely on passive interaction with static image collections, resulting in strong dependence on initial datasets and inefficient learning. Without the ability to actively seek visual data tailored to their evolving capabilities, agents waste computational effort on samples that are either trivial or beyond their current skill level. To address these limitations, we propose Active-Zero, a framework that shifts from passive interaction to active exploration of visual environments. Active-Zero employs three co-evolving agents: a Searcher that retrieves images from open-world repositories based on the model’s capability frontier, a Questioner that synthesizes calibrated reasoning tasks, and a Solver refined through accuracy rewards. This closed loop enables self-scaffolding auto-curricula where the model autonomously constructs its learning trajectory. On Qwen2.5-VL-7B-Instruct across 12 benchmarks, Active-Zero achieves 53.97 average accuracy on reasoning tasks (5.7% improvement) and 59.77 on general understanding (3.9% improvement), consistently outperforming existing self-play baselines. These results highlight active exploration as a key ingredient for scalable and adaptive self-evolving vision-language systems.

💡 Research Summary

Active‑Zero introduces a novel self‑play framework for vision‑language models (VLMs) that replaces the traditional reliance on static image collections with an active, open‑world exploration loop. The system consists of three co‑evolving agents: a Searcher that generates textual queries to retrieve images from external repositories, a Questioner that transforms those images into multi‑step reasoning questions, and a Solver that learns to answer the questions using accuracy‑based reinforcement signals.

In Stage 1, the Searcher’s policy π_S(q|P) is optimized to select images that lie at the current capability frontier of the Solver. This is achieved through a Challenge Reward (R_chal) that peaks when the Solver’s empirical accuracy on a sampled question is near 0.5 (maximum uncertainty), encouraging the retrieval of “just‑right” difficulty samples. A Repetition Penalty (P_rep) based on BLEU similarity for queries and cosine similarity for image embeddings discourages redundancy and promotes diversity. The final reward R_S = max(0, R_chal − P_rep) guides the Searcher toward informative, varied visual contexts. Domain‑specific prompts partition the search space into N semantic domains, and Group‑Relative Policy Optimization (GRPO) normalizes advantages within each domain to avoid over‑focusing on any single area.

Stage 2 employs the Questioner π_Q(x|I,T_cot) to synthesize Chain‑of‑Thought (CoT) style questions from the retrieved images. The question generation objective is to maximize the Solver’s uncertainty again, using a pretrained difficulty estimator and the Solver’s uncertainty predictions to select the most challenging prompts. The resulting image‑question pairs constitute the training set D_train.

Stage 3 refines the Solver V using the same GRPO backbone. For each question, the Solver performs m independent reasoning passes, and a consensus‑based accuracy reward is computed. The policy update follows the clipped objective L_clip(θ) = min(r·Â, clip(r·Â, ε_low, ε_high)), where r is the importance ratio and  the group‑wise advantage. This loop iterates, allowing the Solver’s performance to improve, which in turn pushes the Searcher to discover harder images, thereby forming an automatic curriculum that self‑adjusts to the model’s evolving abilities.

Empirical evaluation on Qwen2.5‑VL‑7B‑Instruct across twelve benchmarks (six reasoning‑intensive and six general‑understanding tasks) shows that Active‑Zero achieves an average reasoning accuracy of 53.97 % (a 5.7 % gain over prior self‑play baselines such as VisPlay and EvolMM) and a general‑understanding score of 59.77 % (+3.9 %). Ablation studies confirm that both the uncertainty‑driven challenge reward and the diversity‑preserving repetition penalty contribute significantly to performance.

The paper’s contributions are threefold: (1) it redefines VLM self‑play by integrating active environment exploration, (2) it proposes a tri‑agent framework that jointly optimizes data acquisition, task synthesis, and reasoning via GRPO, and (3) it demonstrates consistent improvements over static‑data self‑play methods on a broad suite of multimodal benchmarks. Limitations include dependence on text‑based query mechanisms and external image APIs, which may affect scalability to richer modalities such as video or 3D scenes. Nonetheless, Active‑Zero establishes a compelling paradigm for scalable, self‑evolving vision‑language systems that can continuously adapt their training curriculum without human‑curated data.

Comments & Academic Discussion

Loading comments...

Leave a Comment