Transferable Backdoor Attacks for Code Models via Sharpness-Aware Adversarial Perturbation

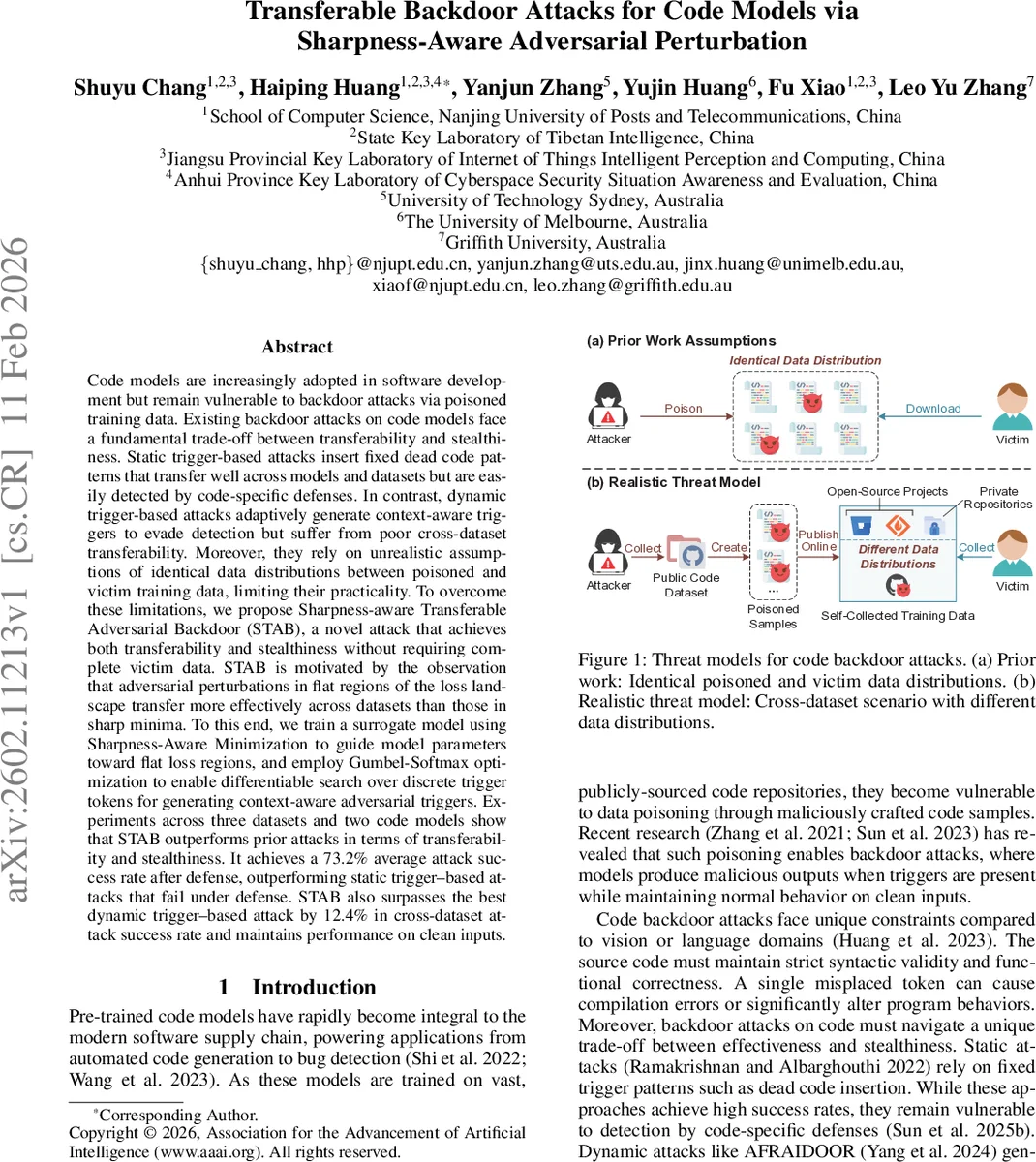

Code models are increasingly adopted in software development but remain vulnerable to backdoor attacks via poisoned training data. Existing backdoor attacks on code models face a fundamental trade-off between transferability and stealthiness. Static trigger-based attacks insert fixed dead code patterns that transfer well across models and datasets but are easily detected by code-specific defenses. In contrast, dynamic trigger-based attacks adaptively generate context-aware triggers to evade detection but suffer from poor cross-dataset transferability. Moreover, they rely on unrealistic assumptions of identical data distributions between poisoned and victim training data, limiting their practicality. To overcome these limitations, we propose Sharpness-aware Transferable Adversarial Backdoor (STAB), a novel attack that achieves both transferability and stealthiness without requiring complete victim data. STAB is motivated by the observation that adversarial perturbations in flat regions of the loss landscape transfer more effectively across datasets than those in sharp minima. To this end, we train a surrogate model using Sharpness-Aware Minimization to guide model parameters toward flat loss regions, and employ Gumbel-Softmax optimization to enable differentiable search over discrete trigger tokens for generating context-aware adversarial triggers. Experiments across three datasets and two code models show that STAB outperforms prior attacks in terms of transferability and stealthiness. It achieves a 73.2% average attack success rate after defense, outperforming static trigger-based attacks that fail under defense. STAB also surpasses the best dynamic trigger-based attack by 12.4% in cross-dataset attack success rate and maintains performance on clean inputs.

💡 Research Summary

The paper addresses a critical limitation of existing backdoor attacks on code models: the trade‑off between transferability (the ability of a trigger to work across different victim models and datasets) and stealthiness (the difficulty of detection by code‑specific defenses). Static trigger attacks insert fixed dead‑code snippets that transfer well but are easily spotted, while dynamic attacks generate context‑aware identifier renamings that evade detection but rely on the unrealistic assumption that the poisoned data distribution matches the victim’s training distribution. Consequently, dynamic attacks suffer from poor cross‑dataset transferability.

To overcome these issues, the authors propose Sharpness‑aware Transferable Adversarial Backdoor (STAB). The central insight is that adversarial perturbations discovered in flat regions of the loss landscape (i.e., flat minima) are more robust to changes in data distribution than those found in sharp minima. The intuition is that flat minima capture general code patterns rather than dataset‑specific quirks. STAB therefore consists of three stages:

- Sharpness‑aware Surrogate Model Training – Using publicly available code, a surrogate model is trained with Sharpness‑Aware Minimization (SAM). SAM solves a min‑max objective:

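\[
\min_{\theta} \; \max_{\|\epsilon\|_2 \le \rho} \; \mathcal{L}(\theta + \epsilon)
\]

This is the standard SAM formulation: \(\theta\) denotes the surrogate model parameters, \(\epsilon\) a perturbation of those parameters, \(\rho\) the radius of the perturbation neighborhood, and \(\mathcal{L}\) the training loss.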
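To make the min‑max idea behind SAM concrete, the following is a minimal sketch of one SAM update on a toy linear regression problem. It is not the authors' implementation: the model, the data, and the names `loss_and_grad`, `sam_step`, `lr`, and `rho` are all assumptions chosen for illustration. The inner maximization is approximated in the usual first‑order way, by stepping a distance `rho` along the normalized gradient, and the outer minimization then uses the gradient taken at that perturbed point.

```python
import math

def loss_and_grad(w, data):
    """Mean squared error of a toy linear model y ~ w.x, with its gradient."""
    n = len(data)
    loss = 0.0
    grad = [0.0] * len(w)
    for x, y in data:
        err = sum(wi * xi for wi, xi in zip(w, x)) - y
        loss += err * err / n
        for i, xi in enumerate(x):
            grad[i] += 2.0 * err * xi / n
    return loss, grad

def sam_step(w, data, lr=0.1, rho=0.05):
    """One SAM update (hypothetical helper, first-order approximation).

    1. Compute the gradient at the current weights.
    2. Move to the approximate worst-case point inside an L2 ball of radius rho.
    3. Descend from the ORIGINAL weights using the gradient at that point.
    """
    _, g = loss_and_grad(w, data)
    norm = math.sqrt(sum(gi * gi for gi in g)) + 1e-12  # avoid division by zero
    eps = [rho * gi / norm for gi in g]                 # inner max (approximate)
    w_adv = [wi + ei for wi, ei in zip(w, eps)]
    _, g_adv = loss_and_grad(w_adv, data)
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]   # outer min

# Toy data (assumed) that is fit exactly by w = [2, -1].
data = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0), ([1.0, 1.0], 1.0)]
w = [0.0, 0.0]
for _ in range(200):
    w = sam_step(w, data)
final_loss, _ = loss_and_grad(w, data)
```

With `rho=0` the update reduces to ordinary gradient descent. With `rho>0` the iterate is steered by gradients measured at nearby worst-case points, which is what biases training toward flatter regions of the loss. For an actual code model, the same two gradient evaluations would be carried out per mini‑batch with an automatic differentiation framework.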
