Collaborative Threshold Watermarking

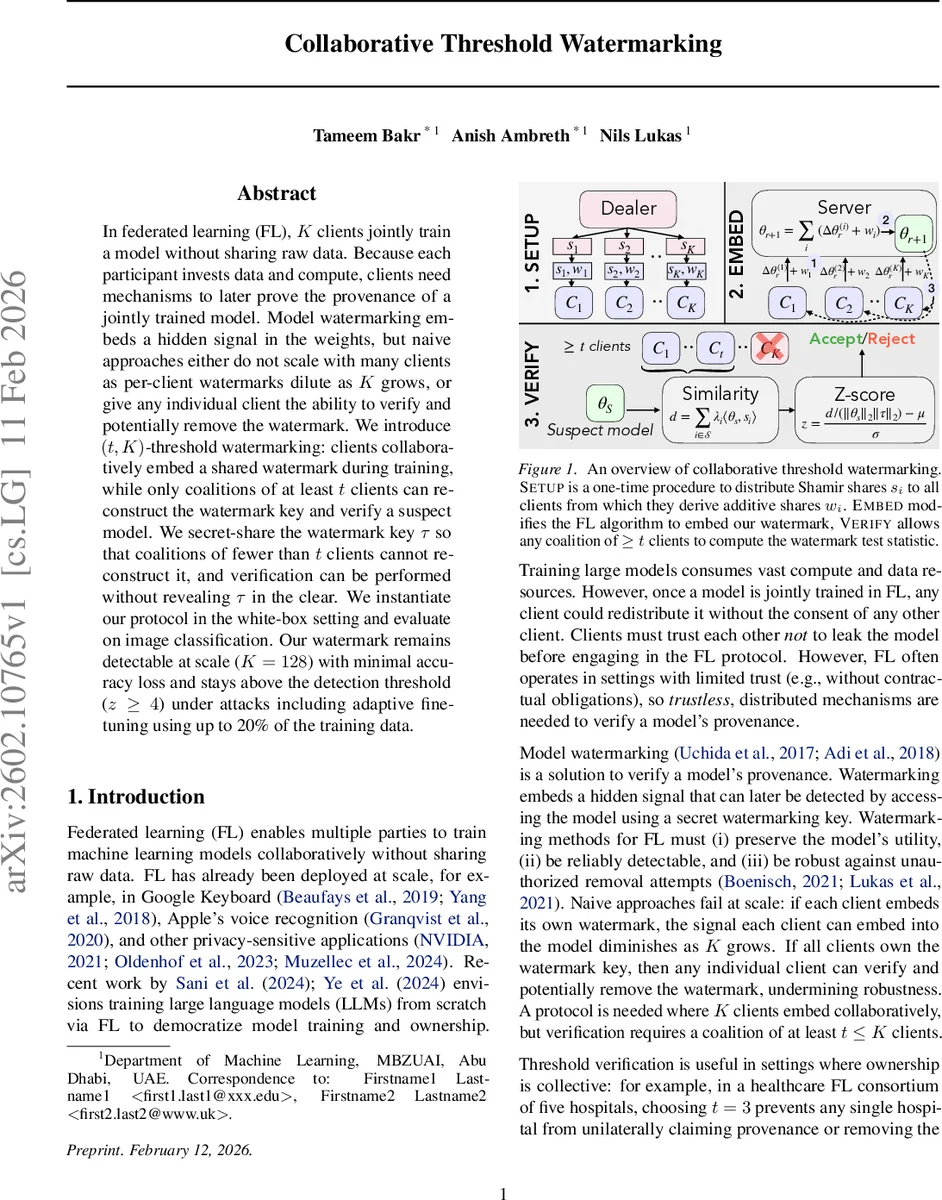

In federated learning (FL), $K$ clients jointly train a model without sharing raw data. Because each participant invests data and compute, clients need mechanisms to later prove the provenance of a jointly trained model. Model watermarking embeds a hidden signal in the weights, but naive approaches either do not scale with many clients as per-client watermarks dilute as $K$ grows, or give any individual client the ability to verify and potentially remove the watermark. We introduce $(t,K)$-threshold watermarking: clients collaboratively embed a shared watermark during training, while only coalitions of at least $t$ clients can reconstruct the watermark key and verify a suspect model. We secret-share the watermark key $τ$ so that coalitions of fewer than $t$ clients cannot reconstruct it, and verification can be performed without revealing $τ$ in the clear. We instantiate our protocol in the white-box setting and evaluate on image classification. Our watermark remains detectable at scale ($K=128$) with minimal accuracy loss and stays above the detection threshold ($z\ge 4$) under attacks including adaptive fine-tuning using up to 20% of the training data.

💡 Research Summary

In federated learning (FL), multiple clients collaboratively train a model without sharing raw data, which raises the problem of proving ownership of the jointly trained model. Traditional watermarking either embeds a separate watermark per client—causing the signal to dilute as the number of participants K grows—or gives every client the same secret key, allowing any single client to verify or even remove the watermark. This paper introduces (t, K)‑threshold watermarking, a scheme that embeds a single shared watermark during training but requires at least t clients to reconstruct the watermark key τ and to verify a suspect model.

The core construction combines Shamir secret sharing with secure aggregation. A trusted dealer (or a dealer‑free distributed key generation protocol) samples a random watermark key τ from a standard normal distribution and publishes a commitment C together with a public nonce ρ. Using a (t, K) Shamir scheme, the dealer distributes shares s₁,…,s_K to the clients. Each client computes a public Lagrange coefficient λ_i (based on fixed evaluation points) and derives an additive embedding share w_i = λ_i s_i. By definition, the sum of all w_i equals τ.

During each FL round, client i performs local training, obtains its update Δθ_i^r, and maintains an exponential moving average (EMA) of update norms. A per‑client scaling factor scale_i = c·‖Δθ_i^r‖²·EMA_i^r is computed, where c is a global watermark‑strength hyper‑parameter. All scale_i values are securely aggregated, yielding a global scale_total that is broadcast to every participant. Each client then forms a watermarked local model u_i = θ_i^r + scale_total·w_i and submits u_i through a second secure‑aggregation step. The server receives only the aggregate and updates the global model as θ^r = (1/K)·∑_i u_i. Because ∑_i w_i = τ, the global model drifts by (scale_total/K)·τ each round, embedding the watermark in a way that is invisible to the server.

If a subset S_r of clients participates in a round, the protocol adapts by recomputing Lagrange coefficients for the active set, provided |S_r| ≥ t. When fewer than t clients are active, watermark embedding is skipped for that round, preserving the threshold property.

Verification is performed in the white‑box setting. A coalition of at least t clients can compute the inner product ⟨θ_s, τ⟩ without ever reconstructing τ in the clear. Using their shares and the public λ_i, they compute d = Σ_{i∈S} λ_i ⟨θ_s, s_i⟩, then calculate a calibrated one‑sided z‑score:

z = (d / (‖θ_s‖·‖τ‖) – μ) / σ.

If z ≥ 4, the watermark is declared present with >99.99 % confidence. Because τ never appears in plaintext, no client or the server can learn the secret unless they control at least t shares.

Security analysis shows that (i) the server learns only aggregated updates and cannot infer τ, (ii) any coalition of fewer than t clients obtains no information about τ (information‑theoretic secrecy), and (iii) the commitment guarantees integrity of τ. The paper also describes a dealer‑free distributed key generation (DKG) protocol that achieves the same security guarantees with O(K²) communication, enabling deployment in environments without a trusted third party.

Empirical evaluation uses ResNet‑18 on CIFAR‑10, CIFAR‑100, and Tiny‑ImageNet, scaling the number of clients up to K = 128. Compared to a baseline per‑client watermark, which falls below the detection threshold when K ≥ 16, the proposed threshold watermark maintains a z‑score ≥ 5.2 even at K = 128, while incurring less than 0.5 % accuracy loss. Robustness tests include (a) pruning up to 90 % of weights, (b) 4‑bit quantization, and (c) adaptive fine‑tuning using 20 % of the original training data. In all cases the watermark remains detectable (z ≥ 4).

Performance overhead consists of two secure‑aggregation rounds per FL iteration (one for scaling factors, one for the watermarked updates). The additional communication and computation are linear in K and amount to less than a 3 % increase in total FLOPs, making the scheme practical for large‑scale FL deployments.

Limitations include reliance on white‑box access for verification; extending the method to a black‑box API‑only setting would require new signal designs. The need for fixed public evaluation points means the protocol is less straightforward when client identities change dynamically. Moreover, the current analysis assumes synchronous participation; handling fully asynchronous FL would need further protocol adaptations.

In summary, the paper presents a novel (t, K)‑threshold watermarking framework that securely embeds a shared watermark during federated training, guarantees that only coalitions of at least t clients can verify its presence, scales to hundreds of participants with negligible utility loss, and demonstrates strong resistance to pruning, quantization, and adaptive fine‑tuning attacks. This work advances the state of the art in provable model provenance for collaborative machine‑learning environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment