A fast Bayesian surrogate for the photon flux in ultra-peripheral collisions

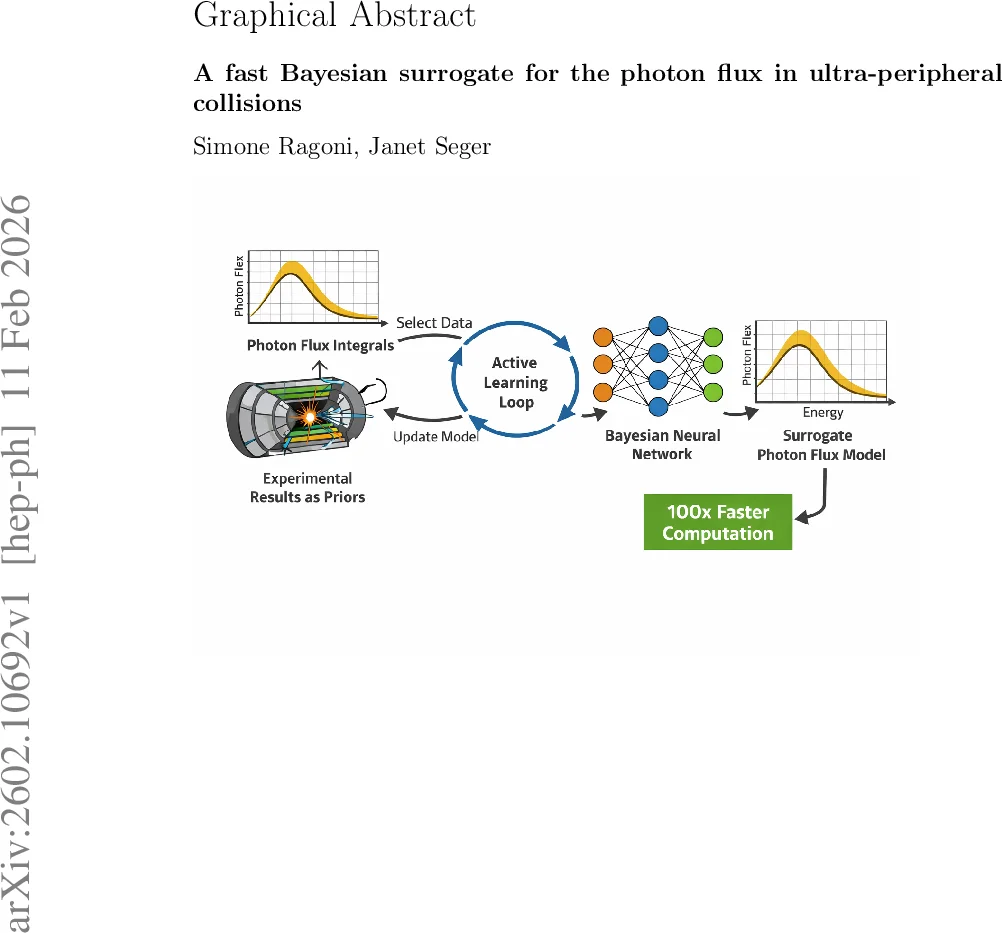

We present a fast surrogate for the Equivalent Photon Approximation (EPA) flux in ultraperipheral collisions (UPCs), based on a Bayesian neural network (BNN) trained over analytical flux integrals with an iterative procedure focused on regions of high relative uncertainties to minimise the number of integrations. The surrogate propagates experimentally available uncertainties on the neutron skin thickness and surface diffuseness. Once trained, this surrogate technique brings an estimated gain of two orders of magnitude in CPU time. The implementation provides a modular framework for rapidly propagating updated nuclear priors and assessing uncertainties for photon flux in future UPC analyses.

💡 Research Summary

The paper introduces a Bayesian neural‑network (BNN) surrogate for the Equivalent Photon Approximation (EPA) photon flux used in ultra‑peripheral collisions (UPCs) at high‑energy colliders. In UPCs, the strong interaction is suppressed by large impact parameters, and the electromagnetic fields of relativistic heavy ions act as sources of quasi‑real photons. The photon flux, traditionally obtained by a two‑dimensional convolution of a point‑charge kernel with a Woods‑Saxon nuclear density, requires costly numerical integrations each time a Monte‑Carlo job is launched, leading to a substantial computational overhead in large‑scale analyses.

To address this, the authors train a physics‑informed BNN on analytically computed flux integrals. The network’s input vector consists of the logarithm of the photon energy (ln ω), the impact parameter (b), a shift of the nuclear radius (ΔR) representing the neutron‑skin thickness, and the surface diffuseness (a). The target is the logarithm of the flux, ln N(ω,b). Drop‑out is employed as a Monte‑Carlo approximation, allowing the BNN to output an ensemble of predictions from which a mean μ(x) and a standard deviation σ(x) are extracted, providing a natural quantification of epistemic uncertainty.

Crucially, the training employs an active‑learning loop to minimise the number of expensive flux integrations. Starting from a small random seed dataset, the BNN is trained for a fixed number of epochs, then evaluated on a dense rapidity grid. Candidate points are sampled with probability proportional to the inverse of the predicted uncertainty, ensuring that new training samples are concentrated in regions where the model is least certain. The top‑k most uncertain points are then labelled by performing the full EPA integral, added to the training set, and the process repeats until the maximum relative uncertainty across rapidity falls below a user‑defined threshold (15 %). This strategy reduces the total number of integrals by roughly two orders of magnitude compared with uniform sampling.

The surrogate’s performance is demonstrated for a fixed impact parameter b = 20 fm. Across the full rapidity range, the BNN prediction matches the direct numerical integration with a mean absolute deviation below 2 %. The 68 % credible interval of the surrogate is roughly 22‑23 % wide, reflecting the propagated uncertainties on ΔR (taken from PREX‑II) and a (from recent nuclear‑structure fits). These uncertainties are comparable in magnitude to those obtained in earlier studies that varied only the nuclear radius, but the Bayesian treatment here incorporates correlated variations of both radius and diffuseness, offering a more complete error budget.

From a computational standpoint, the traditional STARlight implementation requires about 15 minutes of single‑core CPU time per configuration to build lookup tables, which, when multiplied by thousands of distributed jobs, amounts to 10³‑10⁴ CPU‑hours. In contrast, the BNN surrogate needs a one‑time training of roughly 30 minutes on a modern CPU and then delivers flux predictions in sub‑millisecond time. This translates into a three‑ to four‑order‑of‑magnitude reduction in total CPU usage for typical Monte‑Carlo productions. Moreover, because the surrogate directly provides both central values and uncertainties, repeated flux recalculations for systematic studies are no longer necessary.

The framework is modular: priors on the Woods‑Saxon parameters can be swapped out for custom distributions or alternative density models, and the active‑learning loop will automatically regenerate the training set to reflect the new information. Consequently, as future experiments improve measurements of neutron‑skin thickness or surface diffuseness, the surrogate can be updated without redesigning the entire pipeline.

In summary, the authors deliver a fast, Bayesian‑informed surrogate for the UPC photon flux that (i) cuts the computational cost of flux evaluation by two orders of magnitude, (ii) propagates nuclear‑structure uncertainties in a principled way, and (iii) offers a flexible, extensible tool for upcoming UPC analyses. This work represents a significant methodological advance for high‑energy nuclear physics, enabling more efficient and statistically robust extraction of photon‑induced cross sections from collider data.

Comments & Academic Discussion

Loading comments...

Leave a Comment