Enhancing Underwater Images via Adaptive Semantic-aware Codebook Learning

Underwater Image Enhancement (UIE) is an ill-posed problem where natural clean references are not available, and the degradation levels vary significantly across semantic regions. Existing UIE methods treat images with a single global model and ignor…

Authors: Bosen Lin, Feng Gao, Yanwei Yu

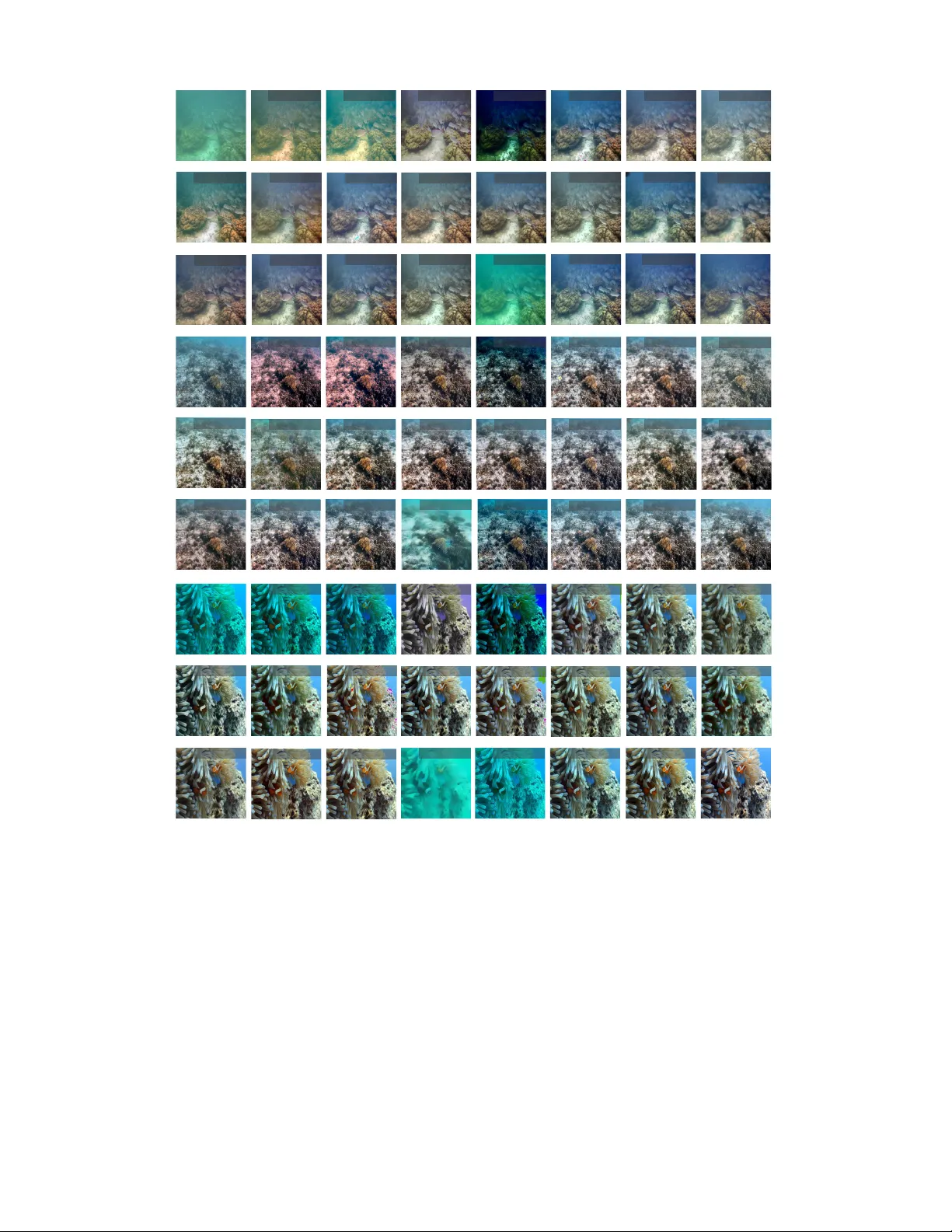

IEEE TRANSACTIONS ON GEOSCIENCE AND REMOTE SENSING

Bosen Lin, Feng Gao, Member, IEEE, Yanwei Yu, Member, IEEE, Junyu Dong, Member, IEEE, Qian Du, Fellow, IEEE

Abstract: Underwater Image Enhancement (UIE) is an ill-posed problem where natural clean references are not available, and the degradation levels vary significantly across semantic regions. Existing UIE methods treat images with a single global model and ignore the inconsistent degradation of different scene components. This oversight leads to significant color distortions and loss of fine details in heterogeneous underwater scenes, especially where degradation varies significantly across different image regions. Therefore, we propose SUCode (Semantic-aware Underwater Codebook Network), which achieves adaptive UIE from a semantic-aware discrete codebook representation. Compared with one-shot codebook-based methods, SUCode exploits a semantic-aware, pixel-level codebook representation tailored to heterogeneous underwater degradation. A three-stage training paradigm is employed to represent raw underwater image features and avoid pseudo ground-truth contamination. The Gated Channel Attention Module (GCAM) and Frequency-Aware Feature Fusion (FAFF) jointly integrate channel and frequency cues for faithful color restoration and texture recovery. Extensive experiments on multiple benchmarks demonstrate that SUCode achieves state-of-the-art performance, outperforming recent UIE methods on both reference and no-reference metrics. The code will be made publicly available at https://github.com/oucailab/SUCode.

Index Terms: Underwater Image Enhancement, Image Recognition, Human Vision Perception, Underwater Dataset.

This work was supported in part by the National Science and Technology Major Project of China under Grant 2022ZD0117202, and in part by the Natural Science Foundation of Shandong Province under Grant ZR2024MF020. (Corresponding author: Feng Gao)

Bosen Lin, Feng Gao, Yanwei Yu, and Junyu Dong are with the School of Information Science and Engineering, Ocean University of China, Qingdao 266100, China. Qian Du is with the Department of Electrical and Computer Engineering, Mississippi State University, Starkville, MS 39762 USA.

I. INTRODUCTION

Underwater vision plays a crucial role in extending remote sensing capabilities to marine and freshwater environments, which are otherwise difficult to observe using traditional aerial or satellite sensors [1], [2], [3]. High-quality underwater imaging is critical for autonomous underwater robot inspection, deep-sea archaeology, and marine life monitoring. Unfortunately, wavelength-dependent absorption and scattering effects in water make it exceptionally difficult to capture visually reliable images in situ. To address this issue, various Underwater Image Enhancement (UIE) methods have been proposed to restore color fidelity and structural details [4], [5], [6].

Fig. 1. The comparison of the training and testing pipeline and enhancement results between different codebook generation methods: (a) one-shot codebook quantization method; (b) image category-based codebook quantization method; (c) semantic-aware codebook quantization method (ours). The proposed SUCode's result is sharper and clearer, with more natural color.

However, UIE faces two significant challenges:

(1) Ill-posed problem and absence of clean ground-truth reference: Unlike natural or remote sensing image enhancement tasks, where ground-truth images serve as ideal baselines, UIE lacks truly clean reference images. In natural image enhancement, degraded images can be directly compared with clean ground truth to guide learning and evaluation [7]. In contrast, underwater imaging suffers from unavoidable degradation including turbidity, light absorption, scattering, and fluctuating water currents. These factors lead to inconsistent color shifts, contrast loss, and attenuation of details in unpredictable ways [8]. These effects vary across environments, making it impossible to capture a distortion-free underwater scene. As a result, "ground truth" references for UIE are pseudo ground-truths, typically chosen by human annotators from multiple enhanced outputs. This lack of reliable references complicates both the training and the objective evaluation of UIE methods.

(2) Highly variable degradation patterns across depth, turbidity, and scene content: Degradation in underwater images is non-uniform and strongly influenced by water depth, turbidity levels, and scene composition. For instance, foreground objects closer to the camera usually receive better illumination, while deeper background regions suffer severe color distortion and contrast loss. Additionally, dynamic disturbances such as floating particles, aquatic life, and water currents affect how light interacts with the water and introduce additional motion blur, noise artifacts, and structural inconsistencies. These variations cause non-uniform degradation both within individual frames and across consecutive frames, presenting a challenge for creating a universal enhancement solution. Conventional approaches often apply a single global mapping to enhance the entire frame [9], [10], [4], which tends to over-enhance foregrounds while leaving haze in the background.
To address this, it is essential to design robust UIE methods that can adapt to the spatially varying degradation encountered in real underwater scenarios.

Discrete generative priors facilitate the learning of a more compact and expressive latent space by mapping continuous image features to a discrete vector space, often represented as a codebook. This discrete representation effectively captures essential information (such as color and texture) while eliminating redundant or noisy elements in the image. Such methods have demonstrated impressive performance in tasks like image denoising, deblurring, and restoration [11], [12], [13]. These approaches learn a high-quality codebook through self-reconstruction, then retrieve the most appropriate code entries to guide the restoration process. However, directly applying this paradigm to UIE is non-trivial. First, UIE is an ill-posed problem, so the learned "high-quality" codebook contains unpredictable noise, which negatively impacts enhancement performance. Second, the complex and inconsistent degradation patterns in underwater scenes challenge one-shot vector quantization strategies, such as CodeUNet [14] and AdaCode [7], making them insufficient to capture diverse distortions. Consequently, enhancement networks often fail to align with the correct priors, as illustrated in Fig. 1(a).

To bring the advantages of codebook learning to UIE while simultaneously addressing spatially varying degradation and pseudo ground-truth contamination, we propose the Semantic-aware Underwater Codebook Network (SUCode). SUCode is designed to restore clear underwater images from semantically relevant discrete representations of raw images. In our approach, a series of pixel-level discrete codebooks, guided by semantic masks, is first learned in the latent space. Each codebook corresponds to a distinct raw underwater category.
Next, a learnable weight predictor linearly aggregates these basis codebooks to form an image-adaptive discrete generative codebook for any given underwater image. Finally, a dual-decoder architecture equipped with the Gated Channel Attention Module (GCAM) and Frequency-Aware Feature Fusion (FAFF) reconstructs clear images that respect both global semantics and local details. The GCAM enhances codebook representations by adaptively re-weighting color channels, while FAFF bridges raw and enhanced features in the frequency domain, further ensuring semantic consistency and detail preservation. To our knowledge, the proposed SUCode is the first network to incorporate semantic information into codebook learning for UIE tasks, with its pipeline depicted in Fig. 1(c).

In summary, our main contributions include:

• We propose a novel codebook learning approach to obtain semantic-dependent discrete representations for UIE tasks. Unlike one-shot methods, our approach learns pixel-level, category-specific codebooks for underwater scenes guided by semantic masks.
• By learning directly from raw underwater images, SUCode addresses the ill-posed nature of UIE and avoids pseudo ground-truth contamination. Domain conversion to clear images is performed via a GCAM-enhanced and FAFF-bridged dual-decoder architecture.
• Extensive experiments on four public benchmarks demonstrate that SUCode outperforms nine state-of-the-art UIE models on full-reference metrics and achieves competitive results on no-reference metrics, while generalizing well to real-world UIE scenarios without retraining.

II. RELATED WORK

A. Underwater Image Enhancement

Traditional UIE methods often rely on physical models of underwater imaging and use hand-crafted priors and parameterized models for enhancement.
These priors include the Underwater Light Attenuation Prior (ULAP) [15], the Image Blurriness and Light Absorption Prior (IBLA) [16], and the Underwater Dark Channel Prior (UDCP) [17], among others. However, due to the significant variation in underwater imaging conditions, these priors struggle to model the complexities of real-world scenarios.

In recent years, deep learning methods have greatly advanced the development of UIE. These methods leverage transformers, generative models, and diffusion models to tackle the specific degradation present in underwater environments. WaterNet [9] learns a fusion representation of different traditional UIE techniques. UColor [10] employs a medium transmission-guided multi-color space embedding network, which jointly captures illumination, chromatic, and perceptual priors. Peng et al. [8] designed a UNet-shaped Transformer network for end-to-end underwater image enhancement. Wf-Diff [18] enhances the frequency information of underwater images in the wavelet space using a diffusion model. AMSIN [19] treats UIE as an image decomposition problem and designs a deep invertible neural network for bidirectional reversible enhancement. SS-UIE [5] employs a Mamba-based network and a frequency-domain loss to emphasize the enhancement of high-frequency details in underwater images. SMDR-IS [4] utilizes multi-scale information in an encoder-decoder architecture to enhance underwater image details. However, these methods typically treat the image as a whole, without leveraging the semantic regions of underwater images for targeted enhancement. This limitation can result in enhancements that are not optimal for human visual perception.

B. Codebook Learning

The concept of codebook learning was first introduced in VQ-VAE [20], a vector-quantized autoencoder framework that allows models to learn discrete feature representations in latent space, known as codebooks.
VQGAN [21] further improves codebook learning by adding perceptual and adversarial supervision, which leads to a smaller codebook size and better reconstruction quality. Building on this, many low-level vision tasks have adopted discrete codebooks to enhance feature representation, including super-resolution, image restoration, and image dehazing. For instance, FeMaSR [22] applies discrete codebook learning to blind super-resolution. AdaCode [7] extends the robustness of class-agnostic codebooks by computing a weighted combination of multiple basis codebooks. RIDCP [11] introduces a controllable matching operation to dehaze low-quality images using high-quality codebook priors. IPC-Dehaze [13] gradually refines and retains the best high-quality codebook for image dehazing.

Fig. 2. The overall structure of the proposed SUCode: (a) Stage I: semantic-aware codebook pretraining; (b) Stage II: underwater image representation learning; (c) Stage III: underwater image enhancement learning. In Stage I, the semantic-aware category-specific codebooks are updated with the mask $m$. Stage II is a partition and synthesis process of the codebook, achieved through the self-reconstruction of raw underwater images. In Stage III, domain conversion from the raw underwater codebook to the enhanced image is achieved.

Despite the progress in codebook learning, few studies have explored its application in UIE. CodeUNet [14] follows the RIDCP framework and proposes prior codebooks specifically for water bodies to restore underwater images. VQUIE-Net [23] leverages VQGAN to map underwater images into a discrete domain and employs an axial flow-guided latent transformer for image restoration. However, a key challenge remains: how to fully utilize the advantages of codebook learning to represent the complex foreground-background interactions in underwater scenes and to address the problem of prior learning caused by the ill-posed nature of ground-truth images. Therefore, it is necessary to improve the mapping from the original image to the enhanced one by first learning a representation of the original underwater image.

III. METHODOLOGY

The motivation for SUCode is to introduce discrete representation into UIE tasks, where the key issues to be addressed are the complex degradation patterns and the ill-posed references. Specifically, to capture the complex region-specific degradation typical of underwater imagery, the proposed SUCode introduces an additional semantic dimension to the standard discrete codebook representation [21]. To avoid learning unstable representations from ill-posed pseudo ground-truths, SUCode employs a progressive three-stage training strategy, which learns quantized representations of raw underwater image content and treats the enhancement process as an adaptive feature modulation problem.

As shown in Fig. 2, the training of SUCode is divided into three stages.
In Stage I, a set of semantic category-specific codebooks is learned from raw underwater images and their corresponding semantic masks, enabling fine-grained representation and addressing the spatially inconsistent degradation problem. In Stage II, a weight-quantized coder is trained via a self-reconstruction task on raw underwater images, which aggregates the fixed semantic-aware codebooks into a unified representation, mitigating the ill-posed nature of UIE. In Stage III, this representation is used to train a dual-decoder architecture specifically tailored for the enhancement task. A feature fusion module adaptively combines decoded features from raw and enhanced images, facilitating robust domain transformation and feature modulation and generating visually coherent outputs.

A. Semantic-aware Codebook Pretraining (Stage I)

Underwater images exhibit diverse scenes and targets, and various degradation factors can affect different areas within a single image. Unlike approaches that encode a static one-shot codebook for all images [14], [22] or maintain and update a set of codebooks according to the category at the image level [7], we aim to introduce a codebook representation that distinguishes categories at the pixel level, tailored to improve the generalization ability of UIE. Specifically, semantic-aware codebooks are learned under the guidance of semantic masks, ensuring that each image region is encoded separately based on its semantic content (e.g., water, background, foreground objects) at the pixel level. The codebook is trained using the raw underwater images. This method not only diversifies the codebook but also distinguishes blur differences in different regions of the image based on semantic information.

Fig. 3. Visualization of the learned codebooks for different underwater categories: Waterbody, Human Diver, Plants & Grass, Wreck & Ruin, Seafloor & Rock, Fish & Vertebrate, Reef & Invertebrate, and Robots.

Codebook Quantization with Semantic Guidance. Given an underwater image set with semantic annotations, we extend the standard quantized auto-encoder to learn class-specific codebooks by leveraging semantic segmentation masks during training. As shown in Fig. 2, the input underwater image $x \in \mathbb{R}^{H \times W \times 3}$ is encoded by a multi-scale encoder $E_q$ to generate latent embeddings $\hat{z} = E_q(x) \in \mathbb{R}^{h \times w \times n_z}$, where $n_z$ is the embedding dimension. To learn class-specific representations for underwater scenarios, we maintain a set of separate learnable codebooks:

$$Z = \{ Z_c \in \mathbb{R}^{N \times n_z} \mid c = 1, 2, \ldots, C \}, \tag{1}$$

where $N$ is the number of codes in each codebook, $C$ is the number of semantic categories, and $Z_c$ is the codebook for category $c$. The semantic mask $m \in \mathbb{R}^{H \times W}$ is resized to match the resolution of $\hat{z}$. Then, for each spatial location $i$, based on its class label $c^{(i)}$, we find the discrete representation of $\hat{z}^{(i)}$ from the codebook corresponding to class $c^{(i)}$. Specifically, the quantization process is achieved by minimizing the Euclidean distance between $\hat{z}^{(i)}$ and all the codebook vectors $Z_{c^{(i)},j}$ in the codebook $Z_{c^{(i)}}$:

$$z_q^{(i)} = \arg\min_{j \in \{0, \ldots, N-1\}} \left\| \hat{z}^{(i)} - Z_{c^{(i)},j} \right\|_2^2, \tag{2}$$

where $z_q^{(i)}$ denotes the quantized representation at location $i$, $\hat{z}^{(i)}$ is the original feature at location $i$, and $Z_{c^{(i)},j}$ is the $j$-th entry in the codebook $Z_{c^{(i)}}$ corresponding to class $c^{(i)}$. All class-specific quantized outputs are then merged using the mask to form the final quantized embedding $\hat{z}_q$. Following the quantization process, the decoder $G_q$ reconstructs the high-resolution image $\hat{x}$ as:

$$\hat{x} = G_q(\hat{z}_q) \approx x. \tag{3}$$

We apply an adversarial learning scheme to train the encoder $E_q$, the codebook $Z$, and the decoder $G_q$ with a discriminator $D_q$.
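
As a rough illustration of the class-conditioned lookup in Eq. (2) and the mask-based merge described above, the following NumPy sketch quantizes each latent location with the codebook of its semantic class. It is not the authors' implementation: the function names `resize_mask_nearest` and `semantic_quantize`, the nearest-neighbour mask resizing, and the toy sizes are assumptions made for illustration, and random features stand in for the encoder output.

```python
import numpy as np

def resize_mask_nearest(mask, h, w):
    """Downsample an (H, W) integer label mask to (h, w).

    Nearest-neighbour sampling is an assumption; the text only says the
    mask is resized to the latent resolution.
    """
    H, W = mask.shape
    rows = np.arange(h) * H // h
    cols = np.arange(w) * W // w
    return mask[np.ix_(rows, cols)]

def semantic_quantize(z_hat, labels, codebooks):
    """Quantize every latent vector with the codebook of its class.

    z_hat:     (h, w, n_z) latent features
    labels:    (h, w) integer class per location, each in [0, C)
    codebooks: (C, N, n_z) one codebook of N entries per class
    Returns quantized features (h, w, n_z) and chosen indices (h, w).
    """
    h, w, n_z = z_hat.shape
    flat_z = z_hat.reshape(-1, n_z)
    flat_c = labels.reshape(-1)
    z_q = np.empty_like(flat_z)
    idx = np.empty(flat_z.shape[0], dtype=np.int64)
    for c in range(codebooks.shape[0]):
        sel = flat_c == c                      # locations belonging to class c
        if not sel.any():
            continue
        diff = flat_z[sel][:, None, :] - codebooks[c][None, :, :]
        j = (diff ** 2).sum(axis=-1).argmin(axis=1)   # nearest entry per location
        idx[sel] = j
        z_q[sel] = codebooks[c][j]             # merge class-wise results via the mask
    return z_q.reshape(h, w, n_z), idx.reshape(h, w)

# Toy example with hypothetical sizes (C=3 classes, N=6 codes, n_z=5).
rng = np.random.default_rng(0)
C, N, n_z = 3, 6, 5
mask = rng.integers(0, C, size=(8, 8))
labels = resize_mask_nearest(mask, 4, 4)
z_hat = rng.normal(size=(4, 4, n_z))
codebooks = rng.normal(size=(C, N, n_z))
z_q, idx = semantic_quantize(z_hat, labels, codebooks)
print(z_q.shape, idx.shape)
```

Looping over classes keeps the distance matrix at one codebook per step, which matters once the codebooks and feature maps reach realistic sizes.
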
Compared to previous VQ-based methods that quantize the entire image with one codebook, our proposed method allows each semantic region to be encoded by a dedicated codebook, leading to improved restoration quality and class-specific representation learning. This is particularly advantageous for underwater scenes, where degradation is highly correlated with scene semantics. Some visual results of the codebooks are shown in Fig. 3.

Loss Function. The objective of Stage I is to construct a discrete codebook representation using the raw underwater image and its semantic mask. To jointly train the underwater image encoder $E_q$, the codebook $Z$, and the decoder $G_q$, we adopt the pixel loss $\mathcal{L}_1$, the perceptual loss $\mathcal{L}_{per}$, the adversarial loss $\mathcal{L}_{adv}$, and the vector quantization loss $\mathcal{L}_{VQ}$:

$$\mathcal{L}_1 = \| \hat{x} - x \|_1, \tag{4}$$

$$\mathcal{L}_{per} = \| \phi(\hat{x}) - \phi(x) \|_1, \tag{5}$$

$$\mathcal{L}_{adv} = \log D(\hat{x}) + \log(1 - D(x)), \tag{6}$$

$$\mathcal{L}_{VQ} = \| \mathrm{sg}[\hat{z}_q] - z_q \|_2^2 + \beta \| \hat{z}_q - \mathrm{sg}[z_q] \|_2^2 + \lambda_s \| \mathrm{Conv}(\hat{z}_q) - \phi(x) \|_2^2, \tag{7}$$

where $\mathrm{sg}[\cdot]$ denotes the stop-gradient operation, $\mathrm{Conv}(\cdot)$ denotes a single convolutional layer that matches the dimensions of $\hat{z}_q$ and $\phi(x)$, $\phi(\cdot)$ denotes the VGG19 feature extractor, and $\beta = 0.25$ and $\lambda_s = 0.1$ are hyper-parameters. The total loss is expressed as:

$$\mathcal{L}_{stage1} = \mathcal{L}_1 + \mathcal{L}_{per} + \lambda_{adv} \mathcal{L}_{adv} + \mathcal{L}_{VQ}(E_q, G_q), \tag{8}$$

where $\lambda_{adv} = 0.1$.

B. Underwater Image Representation (Stage II)

Traditional VQ-based image enhancement methods directly learn the mapping from raw degraded images to clear images with the help of a discrete codebook representation. This approach is effective for natural images because it can learn discrete representations of truly clear images. However, reference images in underwater environments are artificially selected pseudo ground truths. Directly using their feature mappings to clear images interferes with the network's ability to learn the true, accurate features of the underwater content.
To address this, we define the second stage of SUCode as the self-recovery stage, enabling the network to better recover the unique features of underwater images from the semantic-aware discrete representation extracted in the previous stage. In this stage, the raw underwater image is first mapped into class-specific quantized representations $\hat{z}_{q_c}$ using the encoder network $E_r$ and the semantic-aware codebooks $Z_c$. A weight predictor then synthesizes them into a unified representation $\hat{z}_q$. Finally, a decoder $G_r$ composed of GCAMs reconstructs the raw image.

Fig. 4. Structure of the proposed (a) Gated Channel Attention Module (GCAM) and (b) Frequency-Aware Feature Fusion (FAFF) module. rFFT and IrFFT represent the real fast Fourier transform and the inverse real fast Fourier transform, respectively. Mag and Pha represent the magnitude and phase variables, respectively. GAP refers to the global average pooling operation.

Semantic-aware Codebook Partition and Synthesis. Given the semantic-aware codebooks $Z = \{ Z_c \mid c = 1, 2, \ldots, C \}$ pretrained in Stage I, it is possible to map the image to a distinct discrete space in $C$ different ways. Each codebook $Z_c$ corresponds to a unique part of the underwater scenario in the feature space, allowing the model to learn semantic-specific representations. Specifically, given an underwater image $x$, $C$ quantized representations $\hat{Z}_q = \{ \hat{z}_{q_c} \mid c = 1, 2, \ldots, C \}$ are generated, with each representation $\hat{z}_{q_c}$ obtained from the semantic codebook of category $c$.
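
The partition-then-synthesize idea can be sketched in NumPy as follows: the same feature map is quantized once per codebook, and the $C$ results are blended with per-location weight maps. This is only a minimal sketch under stated assumptions. The names `quantize` and `partition_and_synthesize` are hypothetical, the weight maps are random stand-ins for the output of the weight predictor, and normalizing them with a softmax over the class axis is an assumption not stated in the text.

```python
import numpy as np

def quantize(z_hat, codebook):
    """Nearest-neighbour lookup of every latent vector in one codebook.

    z_hat: (h, w, n_z), codebook: (N, n_z) -> quantized (h, w, n_z)
    """
    flat = z_hat.reshape(-1, z_hat.shape[-1])
    d = ((flat[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    return codebook[d.argmin(axis=1)].reshape(z_hat.shape)

def partition_and_synthesize(z_hat, codebooks, weights):
    """Quantize against all C codebooks, then blend with weight maps.

    codebooks: (C, N, n_z); weights: (C, h, w, 1)
    Returns the blended feature (h, w, n_z) and the C partitions.
    """
    z_qc = np.stack([quantize(z_hat, cb) for cb in codebooks])  # (C, h, w, n_z)
    z_q = (weights * z_qc).sum(axis=0)                          # weighted sum over classes
    return z_q, z_qc

# Toy example with hypothetical sizes.
rng = np.random.default_rng(0)
C, N, n_z, h, w = 3, 6, 5, 4, 4
z_hat = rng.normal(size=(h, w, n_z))
codebooks = rng.normal(size=(C, N, n_z))
logits = rng.normal(size=(C, h, w, 1))          # stand-in for predicted weights
weights = np.exp(logits) / np.exp(logits).sum(axis=0, keepdims=True)  # assumed softmax
z_q, z_qc = partition_and_synthesize(z_hat, codebooks, weights)
print(z_q.shape, z_qc.shape)
```

With a one-hot weight map the blend reduces to a single class-specific representation, so the weight maps effectively decide, location by location, which semantic codebooks to trust.
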
To recover the underwater image from $\hat{Z}_q$, the representations must first be synthesized into a unified one, $\hat{z}_q$. This process is facilitated by a weight prediction module, which generates $C$ weight maps $W = \{ w_c \in \mathbb{R}^{h \times w \times 1} \mid c = 1, 2, \ldots, C \}$. The weight predictor module consists of a Swin Transformer layer [24] and a convolution layer, which matches the number of weight-map channels to $C$. The synthesis process of the quantized representations can thus be expressed as:

$$\hat{z}_q = \sum_c w_c \times \hat{z}_{q_c}. \tag{9}$$

Finally, the adaptive feature $\hat{z}_q$ is reconstructed into the raw underwater image $\hat{x}$ using the decoder $G_r$:

$$\hat{x} = G_r(\hat{z}_q) \approx x. \tag{10}$$

Gated Channel Attention Module. Because underwater light attenuation varies across wavelengths, underwater images suffer from severe color imbalance. Specifically, red light is absorbed first, causing the image to lean toward blue and green. Therefore, the enhanced image is prone to over-amplification of colors. Furthermore, the illumination differences introduced by foreground objects and artificial light sources [25] may exacerbate these uneven color shifts. To more effectively recover color details in underwater images from discrete representations, we introduce the GCAM, as shown in Fig. 4(a). Specifically, GCAM achieves adaptive reweighting of color channels through a learnable gate-controlled attention mechanism. First, a simple gating operation [26] and convolutions with global average pooling are applied to partition the channels and achieve lightweight channel attention, respectively. Then, a multi-layer perceptron with simple gating is used to synthesize the features. This allows the network to emphasize information-rich channels and suppress noise or oversaturated responses, resulting in more stable and realistic color restoration.

Loss Function. Stage II of SUCode is defined as the reconstruction stage of raw underwater images.
To train the encoder $E_r$, the weight prediction module $W$, and the decoder $G_r$, we employ four kinds of loss functions: the pixel loss $\mathcal{L}_1$, the perceptual loss $\mathcal{L}_{per}$, the adversarial loss $\mathcal{L}_{adv}$, and the vector quantization loss $\mathcal{L}_{VQ}$. The training objective in Stage II is formulated as:

$$\mathcal{L}_{stage2} = \mathcal{L}_1 + \mathcal{L}_{per} + \lambda_{adv} \mathcal{L}_{adv} + \mathcal{L}_{VQ}(E_r, G_r), \tag{11}$$

where $\lambda_{adv} = 0.1$.

C. Image Enhancement Learning (Stage III)

Based on the discrete feature representations learned from the raw underwater images, SUCode formulates UIE as a domain-adaptive feature modulation problem. Specifically, with the vector quantizer and decoder $G_r$ kept frozen, we introduce FAFF modules that conditionally shift the raw-image features toward the feature space of the enhancement decoder $G_e$, producing the enhanced output $\hat{x}_{pred}$. Conceptually, this amounts to improving the local appearance of the underwater image by adaptively shifting the class-specific codebook while preserving high-level semantic content. Therefore, SUCode maintains semantic understanding of the scene and adaptively handles diverse underwater degradation patterns, mitigating the visual artifacts and instability that commonly affect traditional UIE methods.

Frequency-Aware Feature Fusion. To bridge the domain gap between raw and enhanced underwater image representations, the decoder $G_e$ integrates the FAFF module, which explicitly fuses complementary cues from both the raw and enhanced decoding streams while maintaining the structural prior provided by $G_r$. The structure of FAFF is shown in Fig. 4(b). The key idea of FAFF is to retain structural fidelity from the raw domain while adaptively infusing rich texture and color details from the enhanced domain. Concretely, we preserve the phase of the original features to maintain structure, selectively amplify the magnitude guided by the enhanced features, and apply domain-adaptive modulation via a learnable affine transformation.
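
As a rough illustration of this phase-preserving design, the NumPy sketch below decomposes a feature map into magnitude and phase with a 2-D real FFT, modifies only the magnitude, and transforms back with the original phase. It covers only the spectral step, not the full module. The function name `modulate_magnitude` is hypothetical, and a fixed scaling function stands in for the learnable magnitude mapping.

```python
import numpy as np

def modulate_magnitude(feat, mag_fn):
    """Change the spectral magnitude of a feature map while keeping its phase.

    feat:   (H, W, C) real-valued features
    mag_fn: maps the magnitude array to a new non-negative magnitude
            (a stand-in for a learnable frequency mapper)
    """
    H, W, _ = feat.shape
    spec = np.fft.rfft2(feat, axes=(0, 1))        # one-sided spectrum per channel
    mag, pha = np.abs(spec), np.angle(spec)       # magnitude / phase decomposition
    new_spec = mag_fn(mag) * np.exp(1j * pha)     # new magnitude, original phase
    return np.fft.irfft2(new_spec, s=(H, W), axes=(0, 1))

# Toy example with a hypothetical 8x8 feature map of 4 channels.
rng = np.random.default_rng(0)
feat = rng.normal(size=(8, 8, 4))
same = modulate_magnitude(feat, lambda m: m)           # identity mapping
boosted = modulate_magnitude(feat, lambda m: 1.5 * m)  # uniform amplification
print(np.allclose(same, feat), boosted.shape)
```

Passing the spatial size `s=(H, W)` to the inverse transform is needed because a one-sided spectrum alone does not determine whether the original width was even or odd.
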
Operating in the spectral domain allows the method to inject enhanced appearance information into the output while locking the original shape cues, thereby preserving the contours and semantics encoded by the codebook despite the domain gap.

Specifically, given the raw-domain features $F_r \in \mathbb{R}^{H_i \times W_i \times C_i}$ and the enhanced-domain features $F_e \in \mathbb{R}^{H_i \times W_i \times C_i}$, FAFF first concatenates them along the channel dimension to obtain $F_{in}$ and applies a $3 \times 3$ convolution followed by layer normalization to produce an aligned representation. To exploit spectral cues while preserving geometric structure, a channel-wise real Fast Fourier Transform (rFFT) [26] is performed to obtain a one-sided spectrum:

$$\hat{F} = \mathrm{rFFT2}(F_{in}). \tag{12}$$

Subsequently, phase-amplitude decomposition is performed to obtain the magnitude $A = |\hat{F}|$ and the phase $\Phi = \angle \hat{F}$. A lightweight frequency mapper $g_\theta$, consisting of $1 \times 1$ convolutions and a LeakyReLU operation, is then applied to the magnitude only, yielding $A' = g_\theta(A)$. Keeping the original phase $\Phi$ for structural fidelity, the spectrum is reconstructed as $\hat{F}' = g_\theta(A) \odot e^{j\Phi}$ and transformed back to the spatial domain:

$$F_{freq} = \mathrm{IrFFT2}(\hat{F}', s = (H_i, W_i)). \tag{13}$$

This frequency-aware feature acts as a self-modulation mask on $F_{in}$:

$$F_{fus} = F_{in} + \gamma (F_{in} \cdot F_{freq}), \tag{14}$$

where $\gamma$ is a learnable per-channel coefficient. Finally, two parallel spatial branches $\psi_{scale}$ and $\psi_{shift}$ generate an adaptive scale-shift modulation that produces the output feature $F_{out} \in \mathbb{R}^{H_i \times W_i \times C_i}$:

$$F_{out} = \psi_{scale}(F_{fus}) \cdot F_e + \psi_{shift}(F_{fus}). \tag{15}$$

The parallel spatial branches are constructed with $3 \times 3$ convolutions and a LeakyReLU operation. In summary, this design selectively enhances texture and lighting through amplitude reweighting while preserving spatial structure through phase consistency.

Loss Function.
In Stage III, adaptive image enhancement is achieved from the discrete representation of the image in the codebook and the decoder features of the raw image. To train the encoder $E_r$ and the decoder $G_e$, we use four types of loss functions: the pixel loss $\mathcal{L}'_1$, the perceptual loss $\mathcal{L}'_{per}$, the adversarial loss $\mathcal{L}'_{adv}$, and the code-level loss $\mathcal{L}_{code}$, given as:

$$\mathcal{L}'_1 = \| \hat{x}_{pred} - x_{gt} \|_1, \tag{16}$$

$$\mathcal{L}'_{per} = \| \phi(\hat{x}_{pred}) - \phi(x_{gt}) \|_1, \tag{17}$$

$$\mathcal{L}'_{adv} = \log D(\hat{x}_{pred}) + \log(1 - D(x_{gt})), \tag{18}$$

where $x_{gt}$ refers to the ground-truth image. The code loss [7], [27] reduces the difference between the discrete representation $z_{gt}$ of the ground truth and the representation $\hat{z}$:

$$\mathcal{L}_{code} = \beta \| \hat{z} - \mathrm{sg}[z_{gt}] \|_2^2, \tag{19}$$

where $\mathrm{sg}[\cdot]$ is the stop-gradient operation and $\beta$ is set to 0.25 to control the codebook update frequency. $z_{gt}$ is obtained by $E_r$ and the vector quantizer. The total loss is expressed as:

$$\mathcal{L}_{stage3} = \mathcal{L}'_1 + \mathcal{L}'_{per} + \lambda'_{adv} \mathcal{L}'_{adv} + \mathcal{L}_{code}, \tag{20}$$

where $\lambda'_{adv} = 0.1$.

IV. EXPERIMENTS AND RESULTS

In this section, we first introduce the experimental settings of the proposed SUCode, followed by qualitative and quantitative comparisons with other state-of-the-art UIE methods. Then, we conduct ablation studies to verify the modules introduced in SUCode. Finally, we verify the effectiveness of SUCode on downstream tasks.

A. Experimental Settings

Datasets and Training Details. We trained SUCode using the SUIM-E [28] and UIEB [9] datasets. The SUIM-E dataset contains 1635 images with segmentation masks and enhanced ground truth, of which 1525 images are used for training and 110 for testing. The UIEB dataset has 890 images, of which 800 are used for training and 90 for testing. SUCode is trained in three stages. In Stage I, we train on the raw images and segmentation masks of the SUIM-E dataset to obtain semantic codebooks and restore the raw underwater images.
The underwater scenes are categorized into 8 classes [34]: human divers, aquatic plants, wrecks, underwater robots, reefs & invertebrates, fish & vertebrates, sea-floor & rocks, and water body. These categories correspond to the 8 codebooks of Stage I. In Stages II and III, we use the trained codebooks to train and test on the SUIM-E and UIEB datasets, respectively, to enhance the underwater images. The codebook size is set to $256 \times 256$, and the input image is represented as a $32 \times 32$ code sequence. To assess the robustness of SUCode, we further perform cross-dataset evaluation on the LSUI [8] and UFO120 [35] datasets using the model pretrained on UIEB. We selected 400 images from the LSUI dataset and 120 images from the UFO120 dataset for evaluation.

Implementation Details. SUCode is implemented in PyTorch and trained on an NVIDIA 3090 Ti GPU. Training runs for 200 epochs with a batch size of 4, using the Adam optimizer. The learning rates for the generator and discriminator are set to 1e-4 and 4e-4, respectively. Training and testing are performed at $256 \times 256$ resolution, with random cropping during training and direct scaling during testing.

Evaluation Metrics. We evaluate SUCode using both reference and no-reference metrics. Reference metrics include SSIM [36], PSNR [37], and LPIPS [38], which are used to evaluate the similarity between the predicted images and the ground truth. The no-reference metrics include UCIQE [38] and UIQM [38], which evaluate color, clarity, and contrast at different levels.

Comparison Methods. We compare SUCode with both traditional UIE methods and deep learning-based UIE methods. The traditional UIE methods compared include Fusion [29], IBLA [16], ULAP [15], and UDCP [17].
The state-of-the-art deep-learning-based UIE methods include WaterNet [9], UColor [10], UShape [8], CCMSR [30], WfDiff [18], SMDR-IS [4], HCLR-Net [19], AMSIN [19], RUE-Net [31], SS-UIE [5], FDCE-Net [32], and CDF-UIE [33]. We further compare SUCode with other codebook-based methods, including FeMaSR [22], AdaCode [7], RIDCP [11], IPC-Dehaze [13], and CodeUNet [14]. We retrain these models using the source code from the respective authors and follow their experimental settings.

B. Benchmarking Comparison Results

TABLE I. Quantitative comparison of different UIE methods on the UIEB [9] and SUIM-E [28] datasets. The best results are highlighted in bold and the second best results are underlined.

| Method | SUIM-E SSIM ↑ | SUIM-E PSNR ↑ | SUIM-E LPIPS ↓ | SUIM-E UCIQE ↑ | SUIM-E UIQM ↑ | UIEB SSIM ↑ | UIEB PSNR ↑ | UIEB LPIPS ↓ | UIEB UCIQE ↑ | UIEB UIQM ↑ |
|---|---|---|---|---|---|---|---|---|---|---|
| Fusion [29] | 0.876 | 16.824 | 0.226 | 58.413 | 2.811 | 0.907 | 18.483 | 0.211 | 52.823 | 3.251 |
| IBLA [16] | 0.788 | 16.019 | 0.221 | 62.498 | 1.870 | 0.771 | 15.009 | 0.341 | 53.816 | 2.346 |
| ULAP [15] | 0.860 | 16.574 | 0.232 | 59.746 | 2.174 | 0.902 | 17.871 | 0.233 | 52.620 | 3.309 |
| UDCP [17] | 0.581 | 11.694 | 0.308 | 62.172 | 1.815 | 0.603 | 11.001 | 0.399 | 59.492 | 2.147 |
| WaterNet [9] | 0.907 | 22.295 | 0.144 | 60.999 | 2.807 | 0.898 | 21.566 | 0.237 | 61.805 | 3.314 |
| UColor [10] | 0.898 | 22.860 | 0.145 | 62.436 | 2.860 | 0.906 | 22.266 | 0.187 | 59.176 | 3.316 |
| UShape [8] | 0.851 | 21.369 | 0.147 | 53.451 | 2.969 | 0.819 | 20.266 | 0.219 | 48.406 | 3.296 |
| CCMSR [30] | 0.896 | 22.028 | 0.161 | 60.129 | 2.875 | 0.914 | 22.761 | 0.180 | 57.084 | 3.274 |
| WfDiff [18] | 0.853 | 16.176 | 0.184 | 57.052 | 2.701 | 0.888 | 18.994 | 0.214 | 53.269 | 3.255 |
| SMDR-IS [4] | 0.896 | 22.082 | 0.146 | 62.600 | 2.749 | 0.924 | 22.232 | 0.166 | 61.559 | 2.952 |
| AMSIN [19] | 0.902 | 21.923 | 0.125 | 61.399 | 2.762 | 0.921 | 22.635 | 0.146 | 62.332 | 3.309 |
| HCLR-Net [19] | 0.914 | 20.749 | 0.185 | 58.765 | 3.360 | 0.902 | 22.317 | 0.124 | 58.599 | 3.279 |
| RUE-Net [31] | 0.921 | 22.902 | 0.121 | 62.500 | 2.776 | 0.923 | 22.743 | 0.164 | 62.357 | 3.260 |
| FDCE-Net [32] | 0.912 | 22.840 | 0.120 | 60.722 | 2.872 | 0.923 | 23.039 | 0.141 | 58.765 | 3.360 |
| SS-UIE [5] | 0.871 | 21.713 | 0.182 | 59.538 | 2.815 | 0.850 | 21.006 | 0.255 | 58.919 | 3.066 |
| CDF-UIE [33] | 0.892 | 22.089 | 0.116 | 54.826 | 2.838 | 0.886 | 21.592 | 0.159 | 54.219 | 3.333 |
| FeMaSR [22] | 0.908 | 22.749 | 0.100 | 62.605 | 2.841 | 0.883 | 22.733 | 0.137 | 62.675 | 3.301 |
| AdaCode [7] | 0.886 | 22.329 | 0.105 | 62.409 | 2.812 | 0.818 | 21.792 | 0.156 | 60.835 | 3.216 |
| RIDCP [11] | 0.509 | 13.407 | 0.572 | 42.184 | 2.533 | 0.573 | 14.915 | 0.487 | 48.679 | 2.246 |
| IPC-Dehaze [13] | 0.823 | 13.869 | 0.381 | 50.837 | 2.252 | 0.852 | 16.923 | 0.226 | 54.777 | 2.352 |
| CodeUNet [14] | 0.590 | 17.349 | 0.447 | 54.769 | 2.705 | 0.836 | 21.468 | 0.196 | 59.650 | 3.383 |
| SUCode (Ours) | 0.939 | 23.908 | 0.087 | 62.618 | 2.878 | 0.925 | 23.857 | 0.124 | 63.136 | 3.174 |

TABLE II. Cross-dataset quantitative comparison of different UIE methods trained on the UIEB dataset and evaluated on the LSUI [8] and UFO-120 [35] datasets. The best results are highlighted in bold and the second best results are underlined.

| Method | LSUI SSIM ↑ | LSUI PSNR ↑ | LSUI LPIPS ↓ | LSUI UCIQE ↑ | LSUI UIQM ↑ | UFO-120 SSIM ↑ | UFO-120 PSNR ↑ | UFO-120 LPIPS ↓ | UFO-120 UCIQE ↑ | UFO-120 UIQM ↑ |
|---|---|---|---|---|---|---|---|---|---|---|
| WaterNet [9] | 0.810 | 19.024 | 0.342 | 63.555 | 3.207 | 0.833 | 18.424 | 0.271 | 62.882 | 3.130 |
| UColor [10] | 0.821 | 20.017 | 0.300 | 62.016 | 3.195 | 0.788 | 18.355 | 0.360 | 63.363 | 3.137 |
| UShape [8] | 0.766 | 18.604 | 0.320 | 50.992 | 3.264 | 0.789 | 18.624 | 0.321 | 57.875 | 3.218 |
| CCMSR [30] | 0.839 | 20.678 | 0.282 | 60.024 | 3.180 | 0.802 | 18.840 | 0.331 | 60.667 | 3.148 |
| WfDiff [18] | 0.855 | 19.400 | 0.276 | 59.013 | 3.121 | 0.797 | 19.675 | 0.319 | 62.320 | 3.054 |
| SMDR-IS [4] | 0.815 | 19.246 | 0.304 | 62.617 | 3.098 | 0.780 | 17.612 | 0.343 | 64.184 | 3.020 |
| AMSIN [19] | 0.826 | 19.792 | 0.260 | 64.288 | 3.100 | 0.786 | 17.822 | 0.314 | 65.704 | 3.058 |
| HCLR-Net [19] | 0.821 | 18.897 | 0.312 | 61.274 | 3.059 | 0.801 | 18.462 | 0.336 | 62.769 | 3.081 |
| RUE-Net [31] | 0.854 | 20.651 | 0.261 | 64.076 | 3.089 | 0.817 | 18.920 | 0.312 | 64.249 | 3.024 |
| FDCE-Net [32] | 0.811 | 18.649 | 0.327 | 62.386 | 3.193 | 0.827 | 20.556 | 0.266 | 63.428 | 3.165 |
| SS-UIE [5] | 0.821 | 19.708 | 0.331 | 60.552 | 3.106 | 0.785 | 18.565 | 0.343 | 65.097 | 2.794 |
| CodeUNet [14] | 0.804 | 20.040 | 0.263 | 62.467 | 3.269 | 0.783 | 18.935 | 0.285 | 62.317 | 3.177 |
| SUCode (Ours) | 0.860 | 20.662 | 0.241 | 64.361 | 3.044 | 0.835 | 19.035 | 0.259 | 65.635 | 2.936 |

Quantitative Evaluation. We trained on the UIEB and SUIM-E datasets and performed quantitative comparisons using full-reference and no-reference evaluations to demonstrate the effectiveness of the proposed SUCode. The statistical results are shown in Table I. SUCode achieves the best performance across all full-reference metrics, including PSNR, SSIM, and LPIPS. SUCode significantly outperforms traditional UIE methods such as Fusion [29] and ULAP [15], which lack adaptability to complex underwater environments and objects. Regarding deep learning methods, CCMSR [30] incorporates a physical model of underwater imaging, UColor [10] introduces additional image depth information, and SMDR-IS [4] introduces explicit and implicit multi-scale image processing strategies.
However, these methods treat the image as a whole and fail to leverage semantic information to enhance the foreground and background differently, resulting in inferior performance compared to SUCode. FDCE-Net [32] performs enhancement in the frequency domain; however, it does not model the foreground and background, which limits its improvement in SSIM and LPIPS. Compared with other codebook-based methods, SUCode also achieves higher scores. Notably, AdaCode [7] does not perform well on the UIE task, possibly because the complexity of underwater images makes constructing a codebook according to the overall image category ineffective.

We also report the no-reference metrics on the SUIM-E and UIEB datasets in Table I, which shows that SUCode performs comparably to, or better than, other UIE methods on these metrics. Specifically, SUCode achieves the highest UCIQE value on both the SUIM-E and UIEB datasets, and the second highest UIQM value on SUIM-E. It is important to note that, because of the way no-reference underwater image quality metrics are computed, UIQM focuses mainly on color and contrast: images with higher color saturation, contrast, and brightness receive higher scores. These metrics do not consider the semantic information of the image and therefore often differ from human visual perception [30][33][10].
Therefore, no-reference metrics need to be combined with full-reference ones to evaluate a UIE model.

Fig. 5. Visual comparison of UIE results sampled from the test set of the SUIM-E [28] dataset. The highest PSNR results are highlighted in red and the second best results are yellow. Panels: Raw, GT, Fusion, IBLA, ULAP, UDCP, WaterNet, UColor, UShape, CCMSR, WfDiff, SMDR-IS, AMSIN, HCLR-Net, RUE-Net, FDCE-Net, SS-UIE, CDF-UIE, FeMaSR, AdaCode, RIDCP, IPC-Dehaze, CodeUNet, Ours.

Qualitative Evaluation. We conducted visual comparisons for qualitative evaluation between the proposed SUCode and other UIE methods, as shown in Fig. 5 and Fig. 6.
The visual comparisons reveal that SUCode's enhancement results closely match the reference image, with more realistic colors, clearer local details, and fewer artifacts or unnatural areas. Specifically, SUCode effectively balances the foreground target and background, resulting in more realistic color restoration and images that align more closely with human visual perception. In contrast, CCMSR [30] enhances the image as a whole, which can introduce unnatural colors when the foreground and background differ significantly. Further, SUCode restores images with minimal artifacts, which is crucial for downstream applications where visual clarity is paramount. These results highlight the effectiveness of SUCode in restoring images by leveraging semantic information to model category-specific discrete codebooks. SS-UIE [5] and WfDiff [18] exhibit limitations in recovering complex textured regions, as their results lack clarity in background regions of the image. Additionally, other codebook-based methods [22][7] are more likely to introduce artifacts and unnatural details because they learn image features from pseudo ground-truth images, whose imperfections can adversely affect the learned features. Methods specifically designed for natural images, such as RIDCP [11] and IPC-Dehaze [13], exhibit poor adaptability to diverse underwater scenes and fail to produce consistent enhancement results. In contrast, SUCode takes advantage of FAFF, which integrates features from the $G_r$ and $G_e$ decoders, making better use of discrete representations and enhancing image quality.

Fig. 6. Visual comparison of UIE results sampled from the test set of the UIEB [9] dataset. The highest PSNR results are highlighted in red and the second best results are yellow. Panels: Raw, GT, Fusion, IBLA, ULAP, UDCP, WaterNet, UColor, UShape, CCMSR, WfDiff, SMDR-IS, AMSIN, HCLR-Net, RUE-Net, FDCE-Net, SS-UIE, CDF-UIE, FeMaSR, AdaCode, RIDCP, IPC-Dehaze, CodeUNet, Ours.

C. Cross-Dataset Evaluation Results

To further evaluate the robustness of the proposed method, we conduct cross-dataset validation experiments. Specifically, we use the SUCode model trained on the UIEB dataset and test it on the validation sets of the LSUI and UFO120 datasets. The statistical results and visual comparisons are shown in Table II and Fig. 7.
Statistical results show that the pretrained SUCode achieves the best SSIM and LPIPS values on both datasets, along with competitive PSNR and UCIQE values, demonstrating superior generalization performance. This can be attributed to the semantic-aware codebooks obtained by SUCode in the first stage: modeling category-related underwater image features enables the UIE network to utilize target information from the raw underwater images and thus adapt its enhancement. As shown in the visual comparison, other UIE methods fail to handle the foreground and background effectively, often introducing poor textures, over-smoothed details, or undesirable color shifts. In contrast, SUCode consistently applies appropriate enhancement to different target regions, restoring more natural color, brightness, and contrast. This is made possible by the cross-channel color restoration capability of GCAM, whose gated channel attention helps the network recover a clear image from the discrete codebook.

Fig. 7. Visual comparison of cross-dataset evaluation results sampled from the LSUI [8] (a, b) and UFO120 [35] (c, d) datasets. The highest PSNR results are highlighted in red and the second best results are yellow. Panels: Raw, GT, WaterNet, UColor, UShape, CCMSR, WfDiff, SMDR-IS, AMSIN, SS-UIE, CodeUNet, Ours.

D. Efficiency Evaluation

TABLE III. Comparison of different UIE methods in terms of number of parameters and FLOPs.

| Metric | WaterNet [9] | UColor [10] | UShape [8] | CCMSR [30] | WfDiff [18] | SMDR-IS [4] | AMSIN [19] | SS-UIE [5] | CodeUNet [14] | SUCode (Ours) |
|---|---|---|---|---|---|---|---|---|---|---|
| #Params (M) | 1.09 | 148.77 | 31.58 | 21.13 | 100.55 | 29.33 | 4.67 | 20.63 | 29.47 | 61.18 |
| FLOPs (G) | 71.48 | 1402.18 | 0.86 | 43.60 | 369.29 | 46.27 | 37.28 | 40.09 | 111.49 | 133.31 |

Table III lists the analysis of network efficiency in terms of model size and computational complexity, which shows that SUCode has moderate space and time complexity. Note that WaterNet [9] and UColor [10] require additional image preprocessing for enhancement, while SUCode supports end-to-end training and inference.

E. Ablation Study

We conducted ablation studies on the UIEB dataset to evaluate the effectiveness of each component in SUCode.

TABLE IV. Ablation study of the effectiveness of codebook generation methods. The best results are highlighted in bold.

| OS | ICC | Ours | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|---|---|
| √ | | | 23.095 | 0.917 | 0.142 |
| | √ | | 23.245 | 0.916 | 0.134 |
| | | √ | 23.857 | 0.925 | 0.124 |

Fig. 8. Visual comparison of ablation studies on the codebook quantization strategy. (a) One-shot codebook quantization. (b) Image category-based codebook quantization. (c) SUCode.

Effectiveness of Semantic-Aware Codebook. To analyze the effectiveness of the proposed semantic-aware codebook, we perform ablation studies on the codebook quantization strategy.
The one-shot (OS) quantization method uses a single, fixed codebook for all images [21][22], while the image category-based codebook (ICC) quantization method learns a set of foundational codebooks that correspond to different image categories and then dynamically combines them based on the input image's characteristics. The enhancement processes of these methods are compared in Fig. 1. The visual comparison in Fig. 8 shows that SUCode introduces more reasonable contrast and clarity in the near coral regions and alleviates the blue-green color cast in the distant background regions compared to the OS and ICC methods. The quantitative results are summarized in Table IV, which shows that the proposed semantic-aware codebook quantization strategy, leveraging pixel-wise codebook updates guided by semantic masks, achieves the best PSNR, SSIM, and LPIPS among the three strategies. This is mainly due to the complexity and diversity of the underwater environment: a codebook built on fine-grained regions can represent the image more completely.

Fig. 9. Visual comparison of ablation studies on the network modules. (a) Baseline model. (b) Model with FAFF. (c) Model with GCAM in Stage II and Stage III. (d) Model with FAFF and GCAM in Stage II. (e) Model with FAFF and GCAM in Stage III. (f) Full model.

Effectiveness of Network Modules. To verify the effectiveness of the modules introduced in SUCode, we further conduct an ablation study on the components of the network. The baseline model replaces GCAM with a basic ResBlock and removes the frequency-domain processing from FAFF. When we introduce the frequency-domain operations into the fusion module (Table V(b)), the PSNR increases from 23.000 to 23.662.
This demonstrates that FAFF can model feature interaction in the frequency domain while effectively recovering high-frequency details that are severely attenuated by underwater scattering. As shown in Fig. 9(b), FAFF sharpens coral textures and edge structures without amplifying noise. In contrast, inserting GCAM alone leads to more pronounced improvements in perceptual quality, while the PSNR and SSIM gains are modest. As shown in Fig. 9(c), GCAM significantly improves color fidelity in both foreground and background regions. GCAM adaptively reweights feature channels with an attention mechanism, which enhances color contrast and restores the attenuated red components. When FAFF and GCAM are combined in both Stage II and Stage III, the two modules complement each other: FAFF recovers contrast and textures while GCAM corrects color balance. This yields the highest overall performance, with 23.857 PSNR, 0.925 SSIM, and 0.124 LPIPS, as shown in Table V(f). The visualization in Fig. 9(f) shows that both sharp edges and natural colors are preserved. This underscores the necessity of their joint integration within SUCode to enhance both global color and local details.

Fig. 10. Visual comparison and histogram of ablation studies on the source of codebook quantization images. (a) Raw underwater images. (b) Output of codebook quantization using reference images. (c) Output of codebook quantization using raw images.

Effectiveness of Quantizing Raw Images. To assess the benefit of building the codebook from the original images, which avoids interference from pseudo ground-truth features, we conduct ablation experiments. For comparison, we evaluated codebook quantization using clean ground-truth images in Stage I, followed by degraded-to-clean mapping in Stage II, in line with conventional VQGAN-based approaches [7].
The quantitative results are summarized in Table VI and visual comparisons are given in Fig. 10. This setup results in a performance drop, with PSNR decreasing by 0.641 and SSIM by 0.012 compared to SUCode. These results highlight the limitations posed by the pseudo-GT issue in UIE, which impedes the establishment of an effective codebook. In contrast, SUCode demonstrates superior performance by building the codebook from raw underwater inputs and leveraging domain transformation in Stage III. Furthermore, the histogram of the image enhanced by SUCode is more uniform across all channels, with the red channel shifting towards medium to high intensity. This indicates that encoding the original image improves the network's understanding of the image as a whole, which helps to correct color cast and expand dynamic range.

TABLE V. Ablation study of the effectiveness of network modules. The best results are highlighted in bold.

| | FAFF | GCAM-S2 | GCAM-S3 | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|---|---|---|
| (a) | | | | 23.000 | 0.917 | 0.139 |
| (b) | √ | | | 23.662 | 0.918 | 0.139 |
| (c) | | √ | √ | 23.279 | 0.906 | 0.135 |
| (d) | √ | √ | | 23.009 | 0.911 | 0.140 |
| (e) | √ | | √ | 23.375 | 0.918 | 0.134 |
| (f) | √ | √ | √ | 23.857 | 0.925 | 0.124 |

TABLE VI. Ablation study of the source of quantized images. The best results are highlighted in bold.

| Raw (Ours) | GT | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|---|
| √ | | 23.857 | 0.925 | 0.124 |
| | √ | 23.216 | 0.913 | 0.152 |

Effectiveness of Codebook Size. We further conducted ablation experiments to verify the effect of hyperparameter settings, particularly the codebook size in SUCode. As shown in Table VII, the codebook size in SUCode is set to 256 × 256. Increasing the depth and size of the codebook beyond this setting does not improve the network's enhancement performance.
For underwater images, degradation patterns such as color cast, uneven illumination, and low contrast can be considered a concentrated degradation structure. Therefore, increasing the codebook size may make it difficult for the model to utilize all codebook vectors effectively and may introduce noisy patterns, so that the model fails to learn effective quantized representations in the high-dimensional space, which in turn degrades LPIPS and SSIM. Conversely, a smaller codebook may prevent the model from fully encoding scene-related degradation features, leading to image blurring and under-reconstruction, thus reducing PSNR and SSIM.

TABLE VII. Ablation study of codebook size. The best results are highlighted in bold.

| Codebook Size | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|
| 128 × 128 | 22.237 | 0.920 | 0.126 |
| 128 × 256 | 22.697 | 0.924 | 0.126 |
| 256 × 256 (SUCode) | 23.857 | 0.925 | 0.124 |
| 512 × 256 | 23.189 | 0.914 | 0.127 |
| 512 × 512 | 23.065 | 0.912 | 0.141 |
| 1024 × 512 | 23.047 | 0.917 | 0.125 |

TABLE VIII. Ablation study of the accuracy of semantic masks. The semantic mask is randomly eroded and expanded within a certain pixel range. A pixel range of 0 represents using the ground-truth mask.

| Eroded/Expanded Range | PSNR ↑ | SSIM ↑ | LPIPS ↓ |
|---|---|---|---|
| 0 | 23.857 | 0.925 | 0.124 |
| 1-5 Pixels | 22.864 | 0.925 | 0.121 |
| 6-10 Pixels | 23.216 | 0.921 | 0.125 |

Effectiveness of Semantic Masks. In the first stage of the network, SUCode constructs semantic category-specific codebooks, enabling more robust image enhancement. To evaluate the effect of mask accuracy on codebook construction, we conducted a series of ablation experiments.
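The mask perturbation summarized in Table VIII (random erosion or expansion of the ground-truth mask within a pixel range) can be sketched as below. This is a minimal sketch under assumptions that are not stated in the paper: a binary mask stored as nested lists, a 3 × 3 square structuring element applied once per pixel of radius, a radius drawn uniformly from the range, and an equal chance of eroding or dilating. The names `morph` and `perturb_mask` are hypothetical.

```python
import random
from typing import List

Mask = List[List[int]]


def morph(mask: Mask, radius: int, dilate: bool) -> Mask:
    """Apply `radius` passes of 3x3 binary dilation (max) or erosion (min).

    Windows are clipped at the image border, so pixels outside the image
    are simply ignored rather than treated as background.
    """
    h, w = len(mask), len(mask[0])
    out = [row[:] for row in mask]
    for _ in range(radius):
        prev = [row[:] for row in out]
        for y in range(h):
            for x in range(w):
                window = [
                    prev[j][i]
                    for j in range(max(0, y - 1), min(h, y + 2))
                    for i in range(max(0, x - 1), min(w, x + 2))
                ]
                out[y][x] = max(window) if dilate else min(window)
    return out


def perturb_mask(mask: Mask, low: int, high: int, rng: random.Random) -> Mask:
    """Randomly erode or dilate the foreground by a radius in [low, high].

    Assumption: radius and direction are sampled independently per mask.
    A range of (0, 0) returns an unchanged copy of the mask.
    """
    radius = rng.randint(low, high)
    dilate = rng.random() < 0.5
    return morph(mask, radius, dilate)


if __name__ == "__main__":
    square = [
        [0, 0, 0, 0, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 0, 0, 0, 0],
    ]
    for row in perturb_mask(square, 1, 1, random.Random(0)):
        print(row)
```

For multi-class masks, the same operation could be applied to the binary foreground of each class in turn; that extension is left out to keep the sketch short.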
Since the semantic masks are derived from ground-truth annotations, the primary source of noise is boundary-level annotation uncertainty rather than severe semantic errors such as class swaps or large missing regions. Specifically, we randomly eroded or dilated the foreground region of the ground-truth mask by a number of pixels within the given range and used the modified masks to train both the codebook quantizer and the subsequent enhancement network. The results, summarized in Table VIII, indicate that inaccuracies in the masks slightly hinder the network's ability to model real underwater scenes, causing a minor reduction in enhancement performance. However, because the foreground and background regions of underwater images exhibit similar degradation patterns in terms of illumination and color cast, the performance drop remains within a tolerable range. Moreover, SUCode incorporates several architectural designs that reduce sensitivity to imperfect semantic cues. Instead of relying on a single class-specific codebook, several quantized representations are synthesized via a learnable weight predictor, which softens hard boundary dependence and mitigates the impact of mask inaccuracies. In addition, the self-reconstruction on raw images stabilizes the discrete representation prior to enhancement, reducing the risk of error amplification from noisy semantic guidance. This confirms the robustness of SUCode to variations in the input semantic information.

Effectiveness of the Number of Codebooks. To analyze how the number of semantic codebooks influences enhancement quality and computational cost, an ablation is performed by merging semantically similar categories. In SUCode, multiple category-specific codebooks are used to produce quantized representations, which are then combined into a single latent representation through a weight map at each spatial location. The aggregated latent is subsequently decoded by a shared decoder to obtain the enhanced output.
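The aggregation step described above, in which several per-codebook quantized representations are combined through a per-location weight map, can be sketched as follows. This is an illustrative sketch, not the authors' code: plain Python lists stand in for tensors, the softmax normalization of the predicted weights is an assumption (the text only states that a learnable weight predictor produces the weights), and the names `softmax` and `aggregate_codebooks` are hypothetical.

```python
import math
from typing import List

Grid = List[List[List[float]]]  # H x W x C feature grid


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate_codebooks(quantized: List[Grid], weight_logits: Grid) -> Grid:
    """Blend K quantized grids into one latent grid.

    quantized:      K grids, each H x W x C (one per codebook).
    weight_logits:  H x W x K scores, e.g. from a weight predictor
                    (assumed here to be normalized with softmax).
    """
    num_books = len(quantized)
    height, width = len(weight_logits), len(weight_logits[0])
    channels = len(quantized[0][0][0])
    out: Grid = []
    for y in range(height):
        row = []
        for x in range(width):
            weights = softmax(weight_logits[y][x])
            row.append([
                sum(weights[k] * quantized[k][y][x][c] for k in range(num_books))
                for c in range(channels)
            ])
        out.append(row)
    return out


if __name__ == "__main__":
    # Two codebooks, a 1 x 1 grid, two channels (toy values).
    z = [[[[1.0, 0.0]]], [[[0.0, 1.0]]]]
    print(aggregate_codebooks(z, [[[0.0, 0.0]]]))  # equal weights
```

Because only this blending step depends on the number of codebooks, the sketch is consistent with the observation that merging categories changes the computational cost very little.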
To evaluate the impact of the number of codebooks, we merge categories to obtain 6 and 4 codebooks from the original 8, grouping semantically similar categories together. The results in Table IX show that using fewer codebooks leads to a moderate degradation in enhancement performance: the PSNR drops from 23.857 with 8 codebooks to 22.984 with 6 and 23.216 with 4, reflecting reduced semantic specificity in the discrete representation. Meanwhile, the computational cost remains almost unchanged across settings, since the main computation is dominated by the shared encoder-decoder pathway and the fusion over codebooks introduces only a lightweight overhead. Overall, this ablation indicates that increasing the number of codebooks primarily benefits enhancement quality, while computational cost is largely stable under our aggregation-based design.

TABLE IX. Ablation study of the number of codebooks. For the six codebooks, we merged invertebrates and vertebrates, and then merged divers and underwater robots. For the four codebooks, we further merged aquatic plants, ruins, and the rocks.

| Codebook Number | PSNR ↑ | SSIM ↑ | LPIPS ↓ | #Params (M) | FLOPs (G) |
|---|---|---|---|---|---|
| 8 (SUCode) | 23.857 | 0.925 | 0.124 | 61.18 | 133.21 |
| 6 | 22.984 | 0.917 | 0.134 | 59.67 | 131.12 |
| 4 | 23.216 | 0.921 | 0.125 | 58.23 | 129.77 |

F. Real-World Application

To validate the performance of SUCode in real-world applications, we further evaluate semantic segmentation on the images produced by various UIE methods on the SUIM dataset. These results are obtained using a UNet model [28] trained on the original SUIM dataset. The results are shown in Table X. SUCode retains more semantic information in the image, achieving the highest Dice score and the second best mIoU. The segmentation results are visualized in Fig. 11, showing that the segmentation of SUCode-enhanced images has fewer mis-segmented regions and is more consistent with the true outline of the object. For fine details such as the tips of fish fins and the edges of coral reefs, SUCode helps the task network achieve more refined segmentation. This can be attributed to the semantically discriminative codebook learning process, which implicitly incorporates more high-level features of underwater images into the enhanced image, facilitating downstream tasks that process objects in the image.

Fig. 11. Visual comparison of segmentation results sampled from the SUIM dataset. Panels: WaterNet, UColor, UShape, WfDiff, SMDR-IS, AMSIN, SS-UIE, Ours.

TABLE X. Semantic segmentation results on the SUIM dataset. The best results are highlighted in bold and the second best results are underlined.

| Method | WaterNet [9] | UColor [10] | UShape [8] | WfDiff [18] | SMDR-IS [4] | AMSIN [19] | SS-UIE [5] | SUCode (Ours) |
|---|---|---|---|---|---|---|---|---|
| Dice Score | 62.811 | 63.434 | 60.101 | 64.723 | 60.517 | 60.864 | 62.351 | 65.164 |
| mIoU | 55.888 | 54.396 | 50.791 | 66.555 | 55.677 | 52.032 | 54.443 | 63.912 |

G. Limitations and Challenges

We also selected some sub-optimal enhancement results from the SUIM and UIEB datasets. The original image, ground truth, and SUCode results are shown in Fig. 12. In cases of severe color fading or extremely low contrast, the performance of SUCode can degrade. This may be because the high-frequency details lost in the image itself cannot be supplemented by the decoder, resulting in over-smoothing and limited texture recovery, since SUCode partially relies on the structure extracted from the raw image. When an image has a large difference between its bright and dark regions, SUCode may not be able to enhance the shadows while preserving the details of the highlights, resulting in the loss of some information. However, in terms of visual effects, the enhanced results are close to or even exceed the ground truth. To tackle these challenges, future development includes introducing robust preprocessing techniques to normalize extreme lighting conditions before enhancement, or introducing self-supervised learning methods to improve performance in highly variable underwater environments. Improvements to the category-specific codebook construction mechanism will also be considered, enabling the network to acquire more robust underwater scene codebook representations from surrounding pixels based on soft semantic probability masks. Another limitation is that the input mask robustness analysis focuses on boundary perturbations. Evaluating and improving SUCode under severe semantic segmentation failures, such as category swaps and large missing regions in predicted masks, remains future work.

Fig. 12. Visual comparison of suboptimal enhancement results of the proposed SUCode. Panels: Raw, Ours, GT.

V. CONCLUSION

We propose SUCode, which handles spatially varying degradation via semantic-aware discrete codebook representations. To address inconsistencies across semantic regions, we introduce a semantic-guided pixel-level quantization strategy, enabling region-specific image feature representation. Given the ill-posed nature of UIE, we formulate enhancement as a domain conversion task in the discrete codebook space. We further design FAFF for robust feature transformation and GCAM to refine feature propagation. Experiments show that SUCode outperforms SOTA methods in image quality.

REFERENCES

[1] J. Wen, G. Yang, B. Zhao, D. Huang, L. Lei, B. Zhang, Z. Gao, X. Chen, and B. M.
Chen, “ A Semi-Supervised Domain-Adaptive Framew ork for Real-W orld Underwater Image Enhancement, ” IEEE T ransactions on Geoscience and Remote Sensing , vol. 63, pp. 1–15, 2025. [2] Y . Liu, X. Zhang, J. Zhu, B. Ma, Y . Duan, and P . T an, “HD ANet: Enhancing Underwater Salient Object Detection With Physics-Inspired Multimodal Joint Learning, ” IEEE T ransactions on Geoscience and Remote Sensing , vol. 63, pp. 1–14, 2025. [3] M. Y u, L. Shen, Z. W ang, and X. Hua, “T ask-Friendly Underwater Image Enhancement for Machine V ision Applications, ” IEEE Tr ansactions on Geoscience and Remote Sensing , vol. 62, pp. 1–14, 2024. [4] D. Zhang, J. Zhou, C. Guo, W . Zhang, and C. Li, “Synergistic Multiscale Detail Refinement via Intrinsic Supervision for Underwater Image Enhancement, ” Proceedings of the AAAI Confer ence on Artificial Intelligence , v ol. 38, no. 7, pp. 7033–7041, Mar . 2024. [5] L. Peng and L. Bian, “ Adaptiv e Dual-domain Learning for Underwater Image Enhancement, ” Proceedings of the AAAI Confer ence on Artificial Intelligence , v ol. 39, no. 6, pp. 6461–6469, Apr . 2025. [6] L. Chang, Y . W ang, L. Deng, B. Du, and C. Xu, “W aterDiffusion: Learning a Prior-in volved Unrolling Diffusion for Joint Underwater Saliency Detection and V isual Restoration, ” Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 39, no. 2, pp. 1998–2006, Apr . 2025. [7] K. Liu, Y . Jiang, I. Choi, and J. Gu, “Learning Image-Adaptiv e Codebooks for Class-Agnostic Image Restoration, ” in 2023 IEEE/CVF International Conference on Computer V ision (ICCV) . IEEE Computer Society , Oct. 2023, pp. 5350–5360. [8] L. Peng, C. Zhu, and L. Bian, “U-Shape T ransformer for Underwater Image Enhancement, ” IEEE Tr ansactions on Image Pr ocessing , vol. 32, pp. 3066–3079, 2023. [9] C. Li, C. Guo, W . Ren, R. Cong, J. Hou, S. Kwong, and D. T ao, “ An Underwater Image Enhancement Benchmark Dataset and Beyond, ” IEEE T ransactions on Image Pr ocessing , vol. 29, pp. 
4376–4389, 2020.

[10] C. Li, S. Anwar, J. Hou, R. Cong, C. Guo, and W. Ren, “Underwater Image Enhancement via Medium Transmission-Guided Multi-Color Space Embedding,” IEEE Transactions on Image Processing, vol. 30, pp. 4985–5000, 2021.

[11] R.-Q. Wu, Z.-P. Duan, C.-L. Guo, Z. Chai, and C. Li, “RIDCP: Revitalizing Real Image Dehazing via High-Quality Codebook Priors,” in 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Jun. 2023, pp. 22 282–22 291.

[12] H. Zhou, W. Dong, X. Liu, S. Liu, X. Min, G. Zhai, and J. Chen, “GLARE: Low Light Image Enhancement via Generative Latent Feature Based Codebook Retrieval,” in Computer Vision – ECCV 2024: 18th European Conference, Milan, Italy, September 29–October 4, 2024, Proceedings, Part XLVIII. Berlin, Heidelberg: Springer-Verlag, Nov. 2024, pp. 36–54.

[13] J. Fu, S. Liu, Z. Liu, C.-L. Guo, H. Park, R. Wu, G. Wang, and C. Li, “Iterative Predictor-Critic Code Decoding for Real-World Image Dehazing,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2025, pp. 12 700–12 709.

[14] L. Wang, X. Xu, S. An, B. Han, and Y. Guo, “CodeUNet: Autonomous underwater vehicle real visual enhancement via underwater codebook priors,” ISPRS Journal of Photogrammetry and Remote Sensing, vol. 215, pp. 99–111, Sep. 2024.

[15] W. Song, Y. Wang, D. Huang, A. Liotta, and C. Perra, “Enhancement of Underwater Images With Statistical Model of Background Light and Optimization of Transmission Map,” IEEE Transactions on Broadcasting, vol. 66, no. 1, pp. 153–169, Mar. 2020.

[16] Y.-T. Peng and P. C. Cosman, “Underwater Image Restoration Based on Image Blurriness and Light Absorption,” IEEE Transactions on Image Processing, vol. 26, no. 4, pp. 1579–1594, Apr. 2017.

[17] G. Hou, J. Li, G. Wang, H. Yang, B. Huang, and Z.
Pan, “A novel dark channel prior guided variational framework for underwater image restoration,” Journal of Visual Communication and Image Representation, vol. 66, p. 102732, 2020.

[18] C. Zhao, W. Cai, C. Dong, and C. Hu, “Wavelet-based Fourier Information Interaction with Frequency Diffusion Adjustment for Underwater Image Restoration,” in 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Jun. 2024, pp. 8281–8291.

[19] Y. Quan, X. Tan, Y. Huang, Y. Xu, and H. Ji, “Enhancing Underwater Images via Asymmetric Multi-Scale Invertible Networks,” in ACM Multimedia 2024, Jul. 2024.

[20] A. van den Oord, O. Vinyals, and K. Kavukcuoglu, “Neural discrete representation learning,” in Proceedings of the 31st International Conference on Neural Information Processing Systems, ser. NIPS’17. Red Hook, NY, USA: Curran Associates Inc., Dec. 2017, pp. 6309–6318.

[21] P. Esser, R. Rombach, and B. Ommer, “Taming Transformers for High-Resolution Image Synthesis,” in 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Jun. 2021, pp. 12 868–12 878.

[22] C. Chen, X. Shi, Y. Qin, X. Li, X. Han, T. Yang, and S. Guo, “Real-World Blind Super-Resolution via Feature Matching with Implicit High-Resolution Priors,” in Proceedings of the 30th ACM International Conference on Multimedia, ser. MM ’22. New York, NY, USA: Association for Computing Machinery, Oct. 2022, pp. 1329–1338.

[23] X. Ding, Y. Sui, and J. Zhang, “Vector Quantized Underwater Image Enhancement With Transformers,” IEEE Journal of Oceanic Engineering, vol. 50, no. 1, pp. 136–149, Jan. 2025.

[24] Z. Liu, Y. Lin, Y. Cao, H. Hu, Y. Wei, Z. Zhang, S. Lin, and B. Guo, “Swin Transformer: Hierarchical Vision Transformer using Shifted Windows,” in 2021 IEEE/CVF International Conference on Computer Vision (ICCV). IEEE Computer Society, Oct. 2021, pp. 9992–10 002.

[25] M. Li, K. Wang, L. Shen, Y. Lin, Z. Wang, and Q.
Zhao, “UIALN: Enhancement for Underwater Image with Artificial Light,” IEEE Transactions on Circuits and Systems for Video Technology, pp. 1–1, 2023.

[26] D. Feijoo, J. C. Benito, A. Garcia, and M. V. Conde, “DarkIR: Robust Low-Light Image Restoration,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2025, pp. 10 879–10 889.

[27] T. Chen, S. Kornblith, M. Norouzi, and G. Hinton, “A simple framework for contrastive learning of visual representations,” in Proceedings of the 37th International Conference on Machine Learning, ser. ICML’20, vol. 119. JMLR.org, Jul. 2020, pp. 1597–1607.

[28] Q. Qi, K. Li, H. Zheng, X. Gao, G. Hou, and K. Sun, “SGUIE-Net: Semantic Attention Guided Underwater Image Enhancement with Multi-Scale Perception,” IEEE Transactions on Image Processing, vol. 31, pp. 6816–6830, Jan. 2022.

[29] C. Ancuti, C. O. Ancuti, T. Haber, and P. Bekaert, “Enhancing underwater images and videos by fusion,” in 2012 IEEE Conference on Computer Vision and Pattern Recognition, Jun. 2012, pp. 81–88.

[30] H. Qi, H. Zhou, J. Dong, and X. Dong, “Deep Color-Corrected Multiscale Retinex Network for Underwater Image Enhancement,” IEEE Transactions on Geoscience and Remote Sensing, vol. 62, pp. 1–13, 2024.

[31] G. Wang, C. Chen, H. Xu, J. Ru, S. Wang, Z. Wang, and Z. Liu, “RUE-Net: Advancing Underwater Vision With Live Image Enhancement,” IEEE Transactions on Geoscience and Remote Sensing, vol. 62, pp. 1–14, 2024.

[32] Z. Cheng, G. Fan, J. Zhou, M. Gan, and C. L. P. Chen, “FDCE-Net: Underwater Image Enhancement With Embedding Frequency and Dual Color Encoder,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 35, no. 2, pp. 1728–1744, Feb. 2025.

[33] H. Zhang, H. Xu, X. Yu, X. Zhang, X. Gao, and C. Wu, “CDF-UIE: Leveraging Cross-Domain Fusion for Underwater Image Enhancement,” IEEE Transactions on Geoscience and Remote Sensing, vol. 63, pp.
1–15, 2025.

[34] M. J. Islam, C. Edge, Y. Xiao, P. Luo, M. Mehtaz, C. Morse, S. S. Enan, and J. Sattar, “Semantic Segmentation of Underwater Imagery: Dataset and Benchmark,” in 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 1769–1776.

[35] M. J. Islam, P. Luo, and J. Sattar, “Simultaneous enhancement and super-resolution of underwater imagery for improved visual perception,” arXiv preprint arXiv:2002.01155, 2020.

[36] Z. Wang, A. Bovik, H. Sheikh, and E. Simoncelli, “Image quality assessment: From error visibility to structural similarity,” IEEE Transactions on Image Processing, vol. 13, no. 4, pp. 600–612, Apr. 2004.

[37] J. Korhonen and J. You, “Peak signal-to-noise ratio revisited: Is simple beautiful?” in 2012 Fourth International Workshop on Quality of Multimedia Experience, Jul. 2012, pp. 37–38.

[38] R. Zhang, P. Isola, A. A. Efros, E. Shechtman, and O. Wang, “The Unreasonable Effectiveness of Deep Features as a Perceptual Metric,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Jun. 2018, pp. 586–595.
