What Makes Value Learning Efficient in Residual Reinforcement Learning?

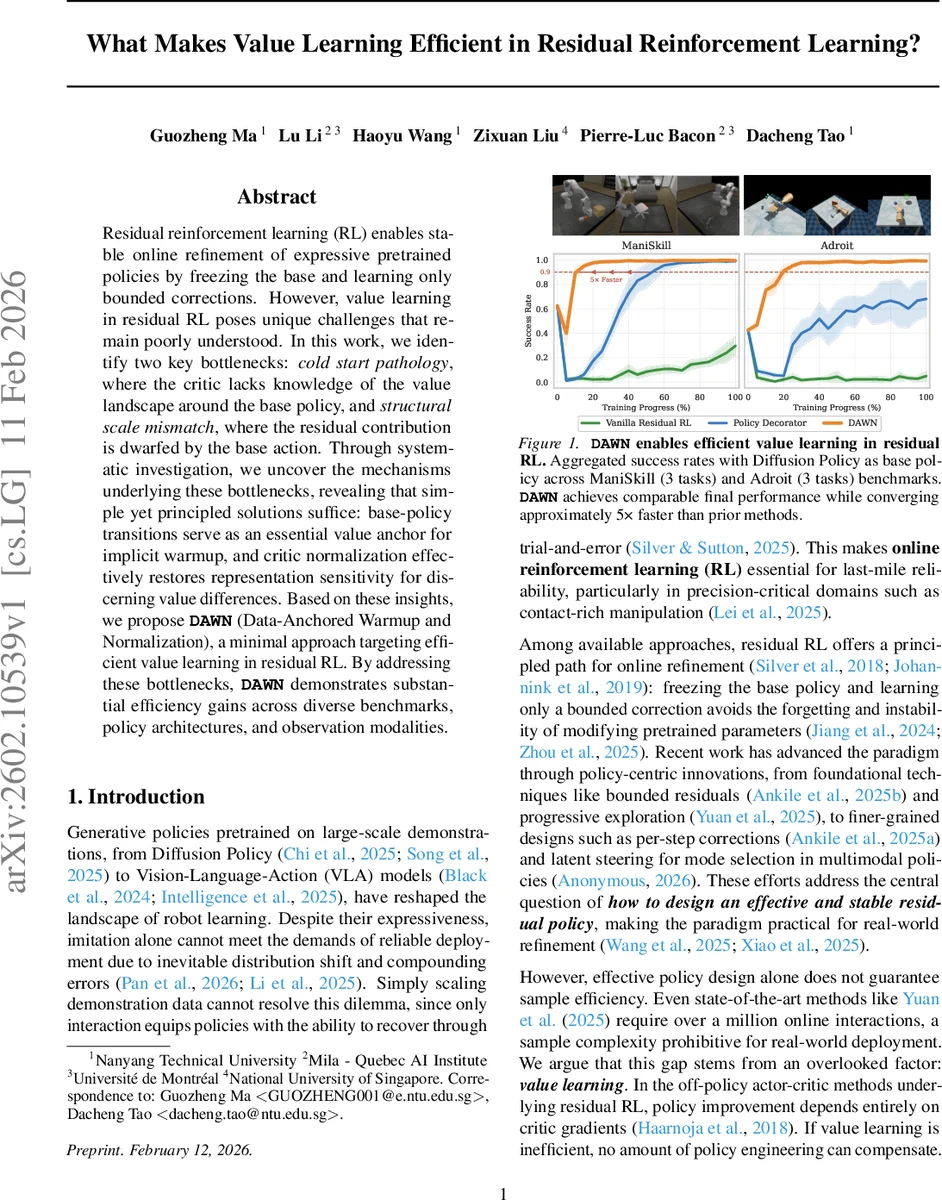

Residual reinforcement learning (RL) enables stable online refinement of expressive pretrained policies by freezing the base and learning only bounded corrections. However, value learning in residual RL poses unique challenges that remain poorly understood. In this work, we identify two key bottlenecks: cold start pathology, where the critic lacks knowledge of the value landscape around the base policy, and structural scale mismatch, where the residual contribution is dwarfed by the base action. Through systematic investigation, we uncover the mechanisms underlying these bottlenecks, revealing that simple yet principled solutions suffice: base-policy transitions serve as an essential value anchor for implicit warmup, and critic normalization effectively restores representation sensitivity for discerning value differences. Based on these insights, we propose DAWN (Data-Anchored Warmup and Normalization), a minimal approach targeting efficient value learning in residual RL. By addressing these bottlenecks, DAWN demonstrates substantial efficiency gains across diverse benchmarks, policy architectures, and observation modalities.

💡 Research Summary

**

Residual reinforcement learning (RL) has emerged as a practical framework for refining large‑scale pretrained policies (e.g., diffusion policies, vision‑language‑action models) while keeping the base policy frozen. By learning only a bounded correction (the residual) on top of the base, the method avoids destabilizing the expressive pretrained network and reduces the search space to a local neighbourhood. Despite impressive empirical successes, recent works still require on the order of one million online interactions to achieve competitive performance, which is prohibitive for real‑world robotics. The authors argue that the bottleneck lies not in policy architecture but in value‑function learning, which drives policy improvement in off‑policy actor‑critic algorithms such as SAC.

The paper identifies two challenges that are unique to residual RL’s value learning:

-

Cold‑Start Pathology – At the beginning of training the critic is randomly initialized and has no knowledge of the value landscape around the frozen base policy. In standard off‑policy RL, the policy and critic co‑evolve from random actions, gradually shaping the value function. In residual RL, however, the executed policy already behaves like a competent base policy; if the critic cannot quickly ground itself to this region, its erroneous Q‑estimates will misguide the residual policy, leading to catastrophic performance collapse.

-

Structural Scale Mismatch – The residual contribution is scaled by a small factor λ (typically ≤ 0.1). Consequently, the residual action is dwarfed by the base action, making it difficult for the critic to detect the subtle Q‑differences induced by the residual. Without special treatment, the critic’s representation becomes insensitive to the residual’s effect, hampering credit assignment.

To investigate these issues, the authors construct a minimal residual RL baseline using Soft Actor‑Critic (SAC) with the residual added directly to the base action (the formulation shown to be most effective in prior work). They deliberately avoid additional stabilisation tricks to expose the raw dynamics of value learning.

Cold‑Start Investigation

The authors compare two intuitions:

- Implicit warm‑up – Collect a set of transitions by executing the frozen base policy before any residual learning begins, and seed the replay buffer with these “warm‑up” data.

- Explicit warm‑up – Freeze the residual policy and train the critic alone for a dedicated pre‑training phase, using various SAC‑style updates (automatic entropy tuning, fixed entropy coefficient, or hard‑Q without entropy).

Empirical results show that implicit warm‑up dramatically improves sample efficiency. With as few as 20 k base‑policy transitions, the Q‑grounding error (the absolute difference between Q(s, a_base) and Monte‑Carlo returns of the base policy) drops quickly and stays low throughout training. Tasks that previously failed (e.g., PegInsertionSide) succeed, and overall learning curves shift left by a large margin. Moreover, adding exploration during warm‑up (e.g., ε‑greedy, noise injection) does not outperform plain base‑policy data, confirming that the primary need is a reliable value anchor rather than diverse coverage.

In contrast, explicit warm‑up variants hurt performance. With automatic entropy tuning, the temperature α diverges during the pre‑training phase, causing the entropy term |α log π| to dominate the TD target. This leads to severe Q‑value collapse (extremely negative estimates) that never recovers once residual learning starts. Even with a fixed α, Soft‑Q still collapses because the entropy bias outweighs the reward magnitude. Hard‑Q (no entropy term) avoids collapse but still provides no benefit over implicit warm‑up, likely because it lacks the stochasticity needed for robust representation learning. The authors conclude that a simple replay‑buffer seed of base‑policy transitions is both necessary and sufficient to overcome the cold‑start pathology.

Structural Scale Mismatch Investigation

Because λ is small, the residual’s influence on the Q‑input is minimal. The authors propose normalising the critic’s hidden representations (LayerNorm or BatchNorm) before the final Q head. This normalisation rescales the residual‑induced variations, restoring sensitivity to small action changes. Experiments demonstrate that with normalisation the critic can distinguish Q‑differences caused by the residual, leading to faster convergence and higher final performance. Importantly, the authors argue that distributional RL objectives are unnecessary; the residual’s effect on Q manifests as a clear mean shift, which normalisation can capture efficiently.

DAWN: Data‑Anchored Warmup and Normalisation

Combining the two insights, the authors introduce DAWN:

- Data‑Anchored Warmup – Before training, collect a modest number (≈ 20 k) of transitions from the frozen base policy and insert them into the replay buffer. No extra labels or reward shaping are required.

- Critic Normalisation – Apply a simple normalisation layer to the critic’s hidden state (e.g., LayerNorm after the first fully‑connected layer).

DAWN adds negligible computational overhead and requires no extra hyper‑parameters beyond the warm‑up buffer size (which the authors fix at 20 k based on ablations).

Experimental Validation

The authors evaluate DAWN on six robotic manipulation benchmarks: three tasks from ManiSkill (PegInsertionSide, TurnFaucet, PushChair) and three from Adroit. The base policy is a Diffusion Policy pretrained on large demonstration datasets. Comparisons include vanilla residual SAC, recent residual‑RL improvements (e.g., progressive exploration, per‑step corrections), and the authors’ own ablations (no warm‑up, no normalisation, both removed).

Key findings:

- Sample Efficiency – DAWN reaches the same final success rate as the strongest baselines while requiring roughly 5× fewer environment steps. In the hardest ManiSkill task, DAWN achieves > 80 % success after only 0.5 M steps, whereas baselines need > 2 M steps.

- Robustness Across Architectures – The method works with both MLP and Transformer‑based residual policies, and with image, depth, or point‑cloud observations.

- Ablation Results – Removing warm‑up dramatically degrades performance (failure on PegInsertionSide). Removing normalisation slows convergence but does not cause collapse. Removing both returns to the baseline’s sample complexity.

- Stability – Training curves exhibit low variance across 8 random seeds, indicating that DAWN mitigates the instability often observed in residual RL.

Discussion and Implications

The paper’s central claim is that value learning in residual RL is fundamentally limited by two simple, addressable factors. By providing a value anchor through base‑policy data, the critic avoids the cold‑start divergence that plagues naïve implementations. By normalising the critic’s representation, the method restores sensitivity to the small residual signal, solving the structural scale mismatch. Both solutions are lightweight, require no additional data collection beyond what is already available (the frozen base policy), and integrate seamlessly with existing off‑policy algorithms.

The authors also discuss why explicit pre‑training fails: the entropy term in Soft‑Q can dominate the TD target when the critic is untrained, leading to a biased value estimate that is hard to correct later. This insight cautions against naïve transfer of standard SAC pre‑training pipelines to residual settings.

Conclusion

“What Makes Value Learning Efficient in Residual Reinforcement Learning?” provides the first systematic analysis of value‑function learning challenges unique to residual RL. It introduces DAWN, a minimal yet powerful combination of data‑anchored warm‑up and critic normalisation, which yields substantial gains in sample efficiency, stability, and robustness across a range of robotic tasks and policy architectures. The work highlights that, in residual RL, careful treatment of the critic—rather than elaborate policy‑side tricks—is the key to unlocking practical, real‑world online refinement of large pretrained policies.

Comments & Academic Discussion

Loading comments...

Leave a Comment