Constructing Industrial-Scale Optimization Modeling Benchmark

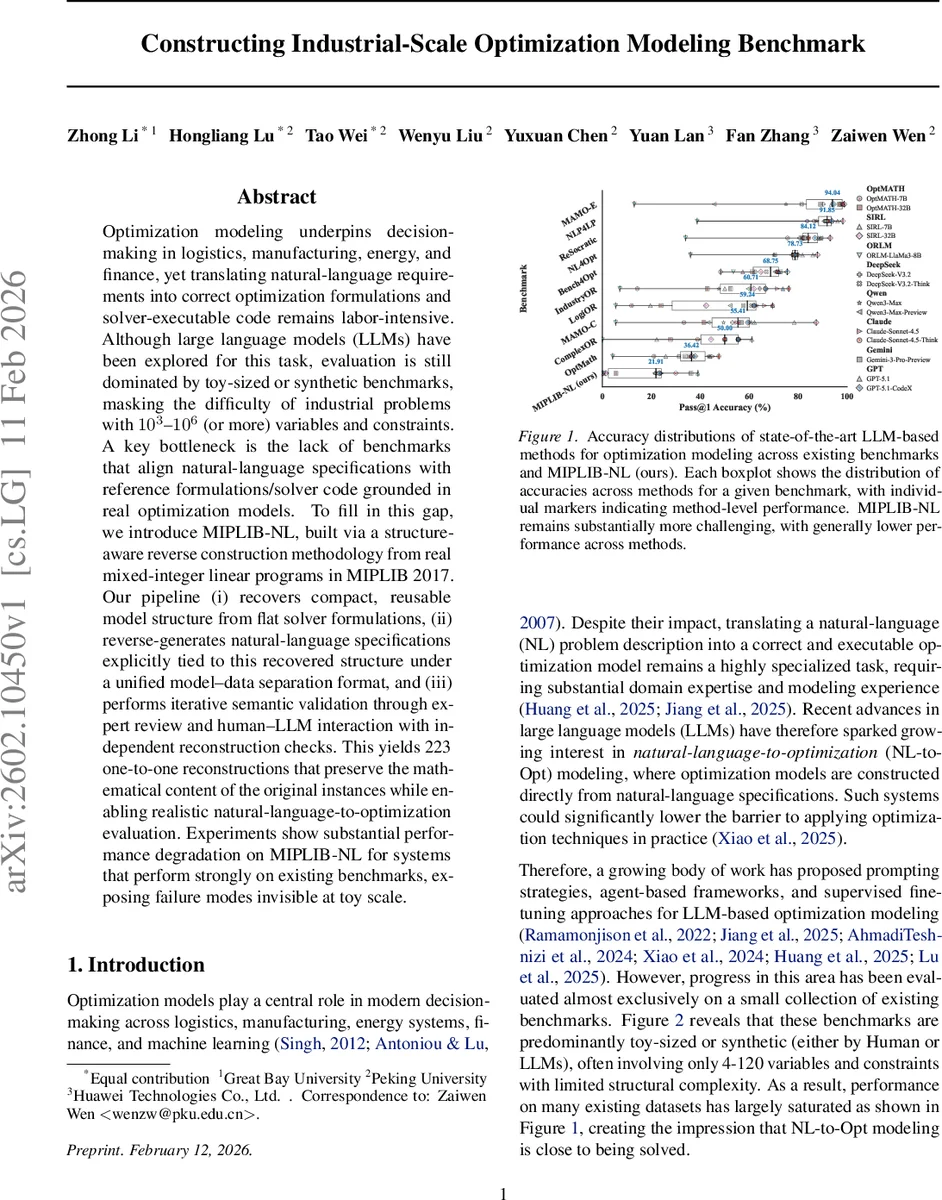

Optimization modeling underpins decision-making in logistics, manufacturing, energy, and finance, yet translating natural-language requirements into correct optimization formulations and solver-executable code remains labor-intensive. Although large language models (LLMs) have been explored for this task, evaluation is still dominated by toy-sized or synthetic benchmarks, masking the difficulty of industrial problems with $10^{3}$–$10^{6}$ (or more) variables and constraints. A key bottleneck is the lack of benchmarks that align natural-language specifications with reference formulations/solver code grounded in real optimization models. To fill in this gap, we introduce MIPLIB-NL, built via a structure-aware reverse construction methodology from real mixed-integer linear programs in MIPLIB~2017. Our pipeline (i) recovers compact, reusable model structure from flat solver formulations, (ii) reverse-generates natural-language specifications explicitly tied to this recovered structure under a unified model–data separation format, and (iii) performs iterative semantic validation through expert review and human–LLM interaction with independent reconstruction checks. This yields 223 one-to-one reconstructions that preserve the mathematical content of the original instances while enabling realistic natural-language-to-optimization evaluation. Experiments show substantial performance degradation on MIPLIB-NL for systems that perform strongly on existing benchmarks, exposing failure modes invisible at toy scale.

💡 Research Summary

The paper addresses a critical gap in the evaluation of natural‑language‑to‑optimization (NL‑to‑Opt) systems: existing benchmarks are dominated by toy‑scale or synthetic problems that do not reflect the size, structure, and complexity of real industrial mixed‑integer linear programs (MILPs). While large language models (LLMs) have shown promising results on these small datasets, their true capability on industrial‑scale models—often containing 10³ to 10⁶ variables and constraints—remains unknown.

To close this gap, the authors introduce MIPLIB‑NL, a benchmark built by reverse‑engineering real MILP instances from the well‑known MIPLIB 2017 repository. The construction pipeline consists of three tightly coupled stages. First, expert‑driven structural abstraction recovers indexed variable groups and repeated constraint families from flat .mps files, yielding a compact loop‑based representation that captures the hierarchical organization of industrial models. Second, using this recovered scaffold as a fixed semantic backbone, deterministic natural‑language blueprints are applied to generate problem descriptions that are tightly coupled to the model structure while keeping the actual data (coefficients, right‑hand sides, etc.) in separate structured files. This model‑data separation ensures that the natural‑language text remains concise even for very large instances and that both humans and LLMs can refer to the same underlying structure. Third, semantic validation is performed through independent NL‑to‑Opt reconstructions, with human experts and LLMs interacting to verify that the generated NL description uniquely and correctly specifies the original MILP. Errors trigger revisions of the blueprints, and the process iterates until 223 high‑quality one‑to‑one reconstructions are obtained.

The resulting benchmark spans a dramatically larger scale than prior datasets: instance sizes range from a few hundred thousand to over a million total variables plus constraints, and the structural richness includes multi‑index sets, nested loops, and diverse constraint families. The authors evaluate a broad spectrum of state‑of‑the‑art NL‑to‑Opt approaches, including prompting‑only methods (GPT‑4‑Turbo, Claude‑Sonnet‑4.5, DeepSeek‑V3.2) and specialized systems (NL4Opt, IndustryOR, ComplexOR). While many of these methods achieve 80‑95 % accuracy on existing toy benchmarks, their performance on MIPLIB‑NL collapses to 20‑35 % accuracy. Detailed error analysis reveals systematic failures to correctly map indexed constraint families, to preserve objective coefficients, and to handle large‑scale data consistency.

The paper’s contributions are fourfold: (1) it highlights dataset design—not model architecture—as the primary bottleneck limiting realistic evaluation of NL‑to‑Opt; (2) it proposes a novel structure‑aware reverse‑generation pipeline that grounds natural‑language specifications in authentic industrial MILPs; (3) it releases MIPLIB‑NL, the first benchmark that combines real‑world scale, structural fidelity, and clean model‑data separation; and (4) it provides a comprehensive empirical study exposing the current limitations of LLM‑based optimization modeling on industrial problems.

In discussion, the authors argue that future progress must focus on improving LLMs’ ability to recognize and reason about hierarchical model structures, possibly through specialized prompting strategies, chain‑of‑thought reasoning, or multimodal inputs that encode index sets and graph topologies. They also suggest automating blueprint generation and validation to scale the benchmark further. MIPLIB‑NL thus serves as a rigorous testbed for the next generation of NL‑to‑Opt systems, pushing the field beyond toy problems toward truly practical, large‑scale optimization automation.

Comments & Academic Discussion

Loading comments...

Leave a Comment