RadarEye: Robust Liquid Level Tracking Using mmWave Radar in Robotic Pouring

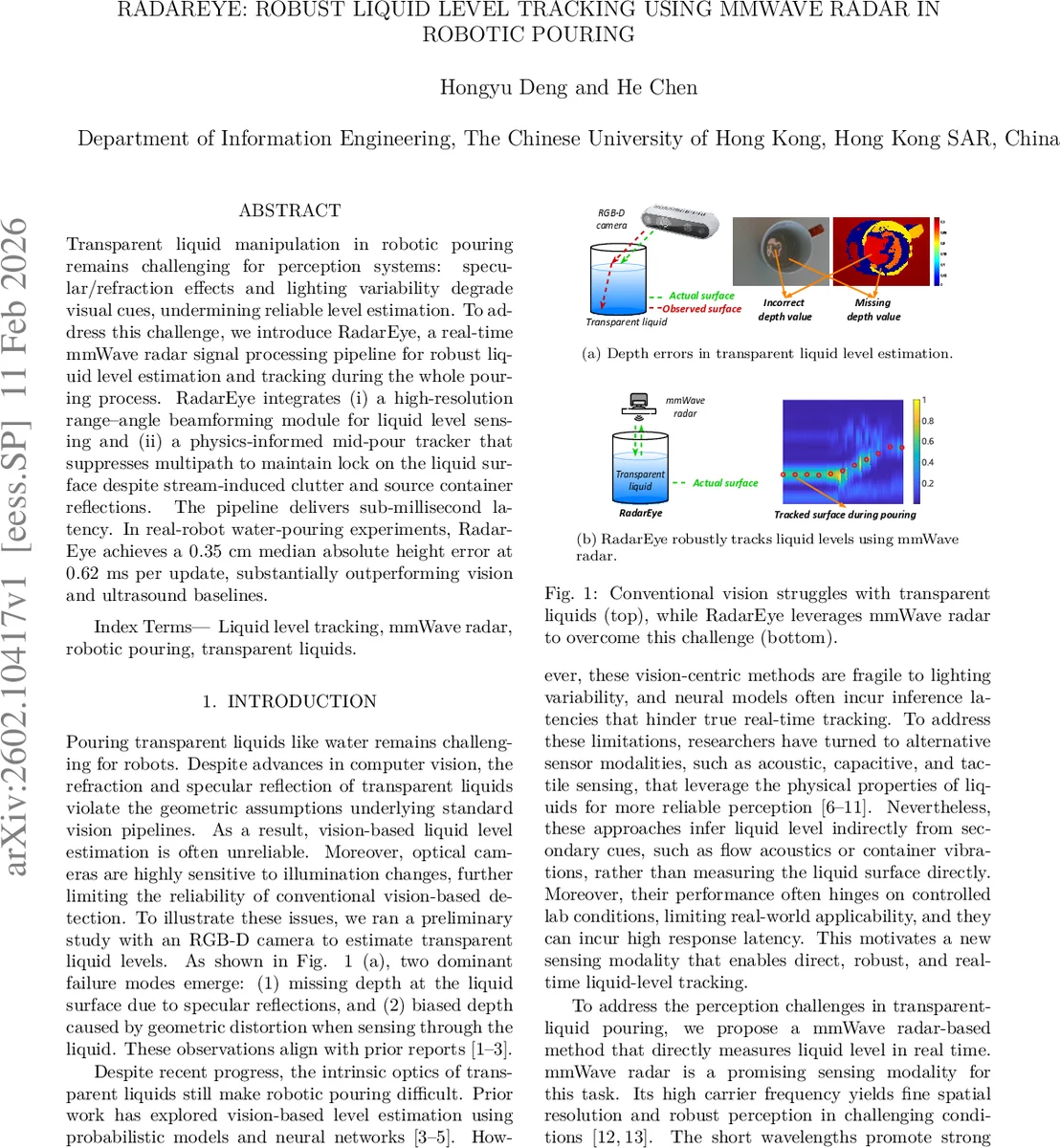

Transparent liquid manipulation in robotic pouring remains challenging for perception systems: specular reflection, refraction, and lighting variability degrade visual cues and undermine reliable level estimation. To address this challenge, we introduce RadarEye, a real-time mmWave radar signal processing pipeline for robust liquid level estimation and tracking throughout the pouring process. RadarEye integrates (i) a high-resolution range-angle beamforming module for liquid level sensing and (ii) a physics-informed mid-pour tracker that suppresses multipath to maintain lock on the liquid surface despite stream-induced clutter and source container reflections. The pipeline delivers sub-millisecond latency. In real-robot water-pouring experiments, RadarEye achieves a 0.35 cm median absolute height error at 0.62 ms per update, substantially outperforming vision and ultrasound baselines.

💡 Research Summary

The paper presents RadarEye, a real‑time millimeter‑wave (mmWave) radar system designed to robustly track the liquid level of transparent fluids during robotic pouring. Traditional vision‑based approaches struggle with specular reflections, refraction, and illumination changes, leading to missing or biased depth measurements. Acoustic or capacitive sensors provide only indirect cues and suffer from high latency. RadarEye addresses these shortcomings by exploiting the high carrier frequency (61.8 GHz) and wide bandwidth (3.6 GHz) of a TI IWR6843 radar, which renders transparent liquids effectively opaque at mmWave frequencies, allowing direct detection of the liquid surface.
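As a back-of-envelope check on the radar parameters quoted above, the sketch below applies two textbook relations: wavelength λ = c / f and nominal FMCW range resolution ΔR = c / (2B). These formulas and the function names are not taken from the paper; they are included only to put the 61.8 GHz and 3.6 GHz figures in context.

```python
# Textbook radar relations (assumed here, not quoted from the paper).
C = 299_792_458.0  # speed of light in m/s


def wavelength_m(carrier_hz: float) -> float:
    """Free-space wavelength for a given carrier frequency."""
    return C / carrier_hz


def range_resolution_m(bandwidth_hz: float) -> float:
    """Nominal FMCW range resolution for a given sweep bandwidth."""
    return C / (2.0 * bandwidth_hz)


lam = wavelength_m(61.8e9)          # about 4.85 mm
delta_r = range_resolution_m(3.6e9)  # about 4.16 cm
print(f"wavelength: {lam * 1e3:.2f} mm, range resolution: {delta_r * 1e2:.2f} cm")
```

Under these relations the nominal range resolution (about 4.2 cm) is coarser than the reported 0.35 cm median error. Resolution limits how closely two reflectors can sit and still be separated; it does not by itself limit how precisely a single dominant peak can be located, which is presumably where the fine-grid beamforming and tracking stages contribute.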

The signal processing pipeline first synthesizes a virtual antenna array (1 × 4 Tx‑Rx) and performs high‑resolution range‑angle (AoA‑ToF) beamforming. The received signals are modeled as a sum of propagation paths, each characterized by an angle of arrival (θ) and time‑of‑flight (τ). By discretizing the AoA‑ToF plane into an N × N grid, steering vectors are constructed for each bin, and coherent summation across antennas and frequencies yields a 2‑D spectrum P(i, j) whose peaks correspond to dominant reflectors. In static conditions the liquid surface produces the strongest peak, enabling straightforward level extraction.
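The beamforming step described above can be sketched as a matched filter over an AoA-ToF grid. This is a minimal illustration, not the authors' implementation: the uniform linear array with half-wavelength spacing, the 64 frequency samples spanning 60.0 to 63.6 GHz, the grid limits, and the function names `simulate_paths` and `aoa_tof_spectrum` are all assumptions made for the example.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def simulate_paths(paths, freqs, n_ant, spacing_over_lambda=0.5):
    """Sum-of-paths channel H[m, k] for antenna m and frequency k.

    paths: iterable of (amplitude, theta_rad, tau_s) tuples.
    """
    m = np.arange(n_ant)[:, None]
    H = np.zeros((n_ant, len(freqs)), dtype=complex)
    for amp, theta, tau in paths:
        spatial = np.exp(-2j * np.pi * spacing_over_lambda * m * np.sin(theta))
        temporal = np.exp(-2j * np.pi * freqs[None, :] * tau)
        H += amp * spatial * temporal
    return H


def aoa_tof_spectrum(H, freqs, thetas, taus, spacing_over_lambda=0.5):
    """Coherently sum H against the steering vector of every (theta, tau) bin."""
    m = np.arange(H.shape[0])[:, None]
    # Per-antenna phase for each candidate angle: shape (n_ant, n_theta).
    a_theta = np.exp(-2j * np.pi * spacing_over_lambda * m * np.sin(thetas)[None, :])
    # Per-frequency phase for each candidate delay: shape (n_freq, n_tau).
    a_tau = np.exp(-2j * np.pi * freqs[:, None] * taus[None, :])
    # P[i, j] = |sum_{m,k} H[m,k] * conj(a_theta[m,i]) * conj(a_tau[k,j])|^2
    return np.abs(a_theta.conj().T @ H @ a_tau.conj()) ** 2


# Assumed example setup: one reflector at 10 degrees and 2 ns round-trip delay.
freqs = np.linspace(60.0e9, 63.6e9, 64)
thetas = np.deg2rad(np.linspace(-60.0, 60.0, 61))
taus = np.linspace(0.0, 4e-9, 81)
H = simulate_paths([(1.0, np.deg2rad(10.0), 2e-9)], freqs, n_ant=4)

P = aoa_tof_spectrum(H, freqs, thetas, taus)
i, j = np.unravel_index(np.argmax(P), P.shape)
theta_hat_deg = float(np.rad2deg(thetas[i]))
range_hat_m = C * taus[j] / 2.0  # round-trip delay converted to one-way distance
```

Because the steering vector separates into an angle factor and a delay factor, the whole grid is evaluated with two matrix products instead of a loop over bins. With a known sensor height, the one-way distance to the surface peak would then map to a liquid level; that geometry depends on the mounting and is not shown here.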

During pouring, however, reflections from the robot gripper, source container, and surrounding fixtures overlap in the AoA‑ToF domain, causing the strongest peak to sometimes belong to a clutter source. To overcome this, RadarEye introduces a physics‑informed tracker that treats level estimation as a path‑finding problem over time. A transition cost cₜ((i,j)→(i′,j′)) combines the negative spectral magnitudes at consecutive time steps with a regularization term η that penalizes large angular or range jumps (weighted by ωθ and ωτ). Because the liquid surface evolves smoothly, valid transitions incur low cost, while abrupt multipath jumps incur high cost. The optimal sequence of bins is found by solving a shortest‑path problem on a graph, constrained to a Q‑neighbourhood to keep computation tractable. This yields a per‑frame update time of ≈0.6 ms.
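The path-finding idea can be illustrated with a small dynamic-programming (Viterbi-style) sketch over a sequence of spectra. It is an offline, batch simplification under stated assumptions, not the paper's tracker: the exact cost form, the parameter names `eta`, `w_theta`, `w_tau`, and `q`, and the function `track_surface` are hypothetical, and each bin's spectral magnitude is counted once per frame rather than once per transition.

```python
import numpy as np


def track_surface(spectra, q=2, eta=0.2, w_theta=1.0, w_tau=1.0):
    """Return the minimum-cost bin sequence [(i, j), ...] through the spectra.

    spectra: array of shape (T, n_theta, n_tau) with nonnegative magnitudes.
    Moves between frames are limited to +/- q bins on each axis.
    """
    T, n_i, n_j = spectra.shape
    cost = -spectra[0].astype(float)                 # strong peaks are cheap
    back = np.zeros((T, n_i, n_j, 2), dtype=int)     # stored (di, dj) per node
    for t in range(1, T):
        padded = np.pad(cost, q, constant_values=np.inf)
        best = np.full((n_i, n_j), np.inf)
        for di in range(-q, q + 1):
            for dj in range(-q, q + 1):
                jump = eta * (w_theta * abs(di) + w_tau * abs(dj))
                # Cost of arriving at (i, j) from predecessor (i - di, j - dj).
                cand = padded[q - di:q - di + n_i, q - dj:q - dj + n_j] + jump
                better = cand < best
                best[better] = cand[better]
                back[t][better] = (di, dj)
        cost = best - spectra[t]
    # Backtrack from the cheapest final bin.
    i, j = np.unravel_index(np.argmin(cost), cost.shape)
    path = [None] * T
    for t in range(T - 1, -1, -1):
        path[t] = (int(i), int(j))
        if t > 0:
            di, dj = back[t, i, j]
            i, j = i - di, j - dj
    return path


# Synthetic check: a slowly rising surface plus a stronger, distant clutter peak.
T, N = 20, 15
spectra = np.zeros((T, N, N))
truth = [(7, 2 + t // 2) for t in range(T)]
for t, (i, j) in enumerate(truth):
    spectra[t, i, j] = 1.0
for t in (5, 6, 12):
    spectra[t, 2, 12] = 1.5                          # clutter wins a per-frame argmax

path = track_surface(spectra)
greedy_frame5 = np.unravel_index(np.argmax(spectra[5]), spectra[5].shape)
```

In this toy data a per-frame argmax jumps to the clutter bin in frames 5, 6, and 12, while the smoothness penalty and the Q-neighbourhood constraint keep the tracked path on the slowly moving surface. A real-time variant would update the cost table one frame at a time instead of backtracking over a stored sequence.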

The hardware setup mounts the radar alongside an Intel RealSense D435i RGB‑D camera on a 3‑D‑printed bracket, integrated with a 7‑DoF Reachy humanoid arm running ROS on Ubuntu 22.04. Ground‑truth levels are obtained via a transparent ruler attached inside the target container and synchronized video. Experiments include (1) incremental filling, where RadarEye achieves a median error of 0.12 cm, and (2) continuous pouring, where it outperforms a vision‑based baseline (median error 2.1 cm) and an ultrasonic sensor (median error 4.3 cm), achieving a median absolute error of 0.35 cm with 0.62 ms latency. A comparative study against a simple five‑peak smoothing algorithm and a lightweight deep‑learning network shows that the deep model incurs ~40 ms latency, whereas RadarEye and smoothing both run in sub‑millisecond time, confirming the suitability of the physics‑based approach for time‑critical tasks.

Key contributions are: (i) a high‑resolution AoA‑ToF beamforming method that directly measures the liquid surface, (ii) a physics‑informed tracking algorithm that suppresses multipath interference while respecting liquid dynamics, (iii) a real‑time implementation with sub‑millisecond latency, and (iv) extensive empirical validation demonstrating superior accuracy and speed over vision and ultrasound baselines. Limitations include reliance on a specific radar placement and the need for further validation on liquids with different dielectric properties or container geometries. Future work may explore adaptive parameter tuning, multimodal sensor fusion (radar‑vision‑acoustic), and miniaturized low‑power radar hardware to broaden applicability in diverse robotic manipulation scenarios.
