Beyond Student: An Asymmetric Network for Neural Network Inheritance

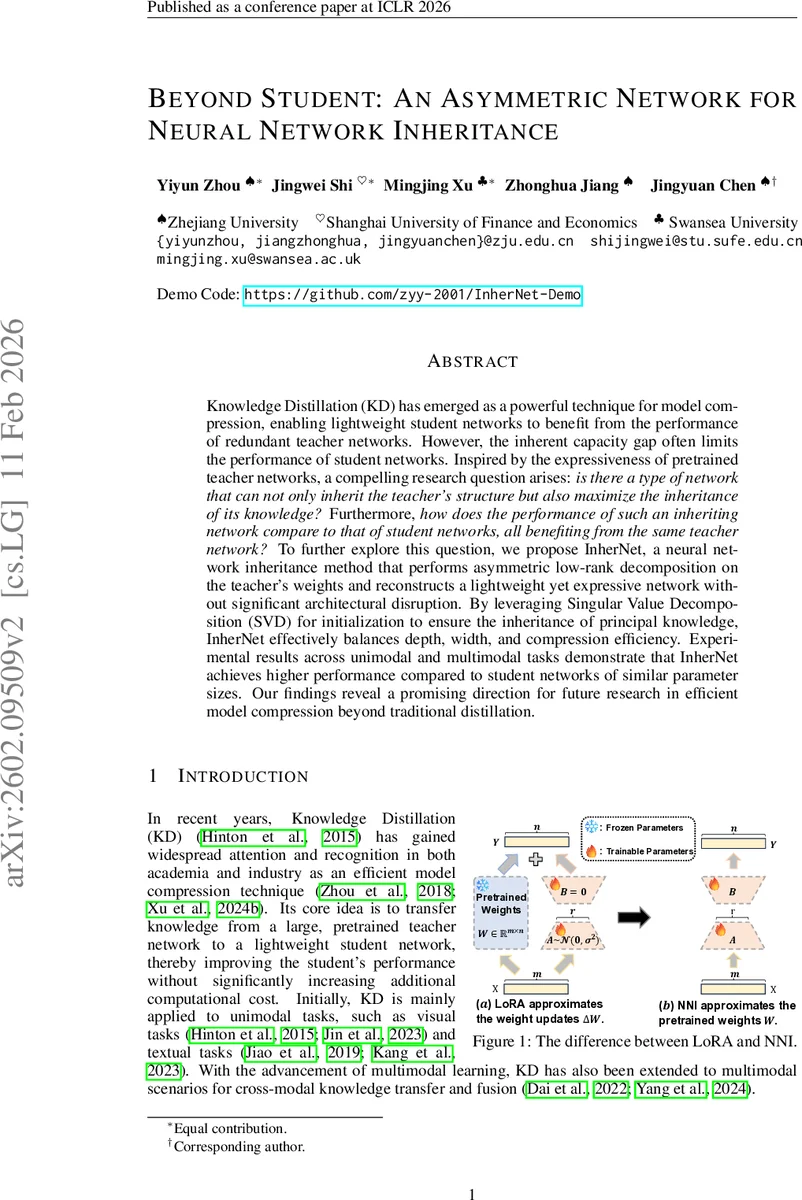

Knowledge Distillation (KD) has emerged as a powerful technique for model compression, enabling lightweight student networks to benefit from the performance of redundant teacher networks. However, the inherent capacity gap often limits the performance of student networks. Inspired by the expressiveness of pretrained teacher networks, a compelling research question arises: is there a type of network that can not only inherit the teacher’s structure but also maximize the inheritance of its knowledge? Furthermore, how does the performance of such an inheriting network compare to that of student networks, all benefiting from the same teacher network? To further explore this question, we propose InherNet, a neural network inheritance method that performs asymmetric low-rank decomposition on the teacher’s weights and reconstructs a lightweight yet expressive network without significant architectural disruption. By leveraging Singular Value Decomposition (SVD) for initialization to ensure the inheritance of principal knowledge, InherNet effectively balances depth, width, and compression efficiency. Experimental results across unimodal and multimodal tasks demonstrate that InherNet achieves higher performance compared to student networks of similar parameter sizes. Our findings reveal a promising direction for future research in efficient model compression beyond traditional distillation.

💡 Research Summary

The paper introduces InherNet, a novel “neural network inheritance” framework that directly inherits both the structure and knowledge of a pretrained teacher model, addressing the capacity gap that limits traditional knowledge distillation (KD) student networks. Instead of training a separate student, InherNet performs an asymmetric low‑rank decomposition of every weight matrix in the teacher. For a linear layer with weight W ∈ ℝ^{m×n}, Singular Value Decomposition (SVD) is applied, and the top‑r singular values and vectors are retained, yielding an optimal rank‑r approximation W_r = U_r Σ_r V_rᵀ (Eckart‑Young‑Mirsky theorem). This approximation is realized as two consecutive low‑rank layers: a “down” projection (U_r Σ_r) and an “up” projection (Σ_r V_rᵀ). The same procedure is applied to convolutional kernels after reshaping them into a 2‑D matrix, producing a 1×1 “down” convolution and an expanded “up” convolution.

Beyond mere low‑rank factorization, InherNet adopts a Mixture‑of‑Experts‑style asymmetric architecture. A shared “down” projection is followed by H expert heads, each with its own “up” projection. A lightweight gating network computes softmax weights G_h(X) for each head based on the input, and the final output is the weighted sum Σ_h G_h(X)·W_up^h·W_down·X. This design simultaneously reduces parameter redundancy (only one down projection) and allows the network to become wider via multiple heads, mitigating depth‑to‑width trade‑offs and preserving expressive power.

The authors provide a thorough theoretical analysis. First, because U_r and V_r are orthonormal, the SVD‑based initialization improves the conditioning of the weight matrix: the effective Lipschitz constant of the loss gradient is reduced from L to L′≈L/κ, where κ is the condition number of the original weight. This leads to more stable early‑stage training. Lemma 2.2 shows that gradients naturally decompose into contributions from each expert and the gating network, enabling specialized learning per head. Under standard assumptions (L‑Lipschitz smoothness, bounded gradient variance, bounded inputs/outputs) and a diminishing learning‑rate schedule η_t = η/√t, Theorem 2.4 proves that InherNet achieves the classic O(1/√T) convergence rate for non‑convex stochastic optimization, with the constant factor improved by the better conditioning.

Parameter efficiency is quantified using three definitions: Parameter Efficiency (PE), Approximation Error, and Expressivity‑to‑Parameter Ratio (EPR). Theorem 2.5 derives the compression ratio ρ ≈ (mn)/(H·r·(m+n)) for a single layer, showing that with modest rank r and a small number of heads H, InherNet can reduce parameters by an order of magnitude while preserving the teacher’s representational capacity. Theoretical bounds on approximation error guarantee that the low‑rank subspace retains most of the original layer’s expressive power.

Empirical evaluation spans unimodal (CIFAR‑10/100, ImageNet, GLUE) and multimodal (ViLT, CLIP‑style) tasks. In each case, InherNet is compared against student networks of comparable parameter count (e.g., ResNet‑18, MobileNet‑V2, BERT‑base). Results consistently show 1–3 percentage‑point absolute gains in accuracy/F1/BLEU, even when the rank r is set to 20–40 % of the original dimension. Moreover, InherNet converges dramatically faster: within the first 10 % of epochs, loss reduction is roughly twice that of the student baselines, confirming the practical impact of SVD initialization and the gating mechanism. Ablation studies verify that both the asymmetric low‑rank design and the multi‑head gating are essential for the observed performance.

The paper also discusses limitations. Selecting the rank r and the number of heads H requires task‑specific tuning; an overly aggressive rank reduction can hurt performance. The shared down projection may become a bottleneck in extremely deep networks, and the current formulation focuses on Conv and Linear layers, leaving Transformer‑style attention modules for future work.

In summary, InherNet bridges KD and Parameter‑Efficient Fine‑Tuning (e.g., LoRA) by directly inheriting the teacher’s weights in a low‑rank, expert‑head architecture. It achieves substantial parameter savings, faster convergence, and superior accuracy compared to traditional student networks, offering a promising direction for efficient model compression beyond conventional distillation.

Comments & Academic Discussion

Loading comments...

Leave a Comment