Beyond Model Base Retrieval: Weaving Knowledge to Master Fine-grained Neural Network Design

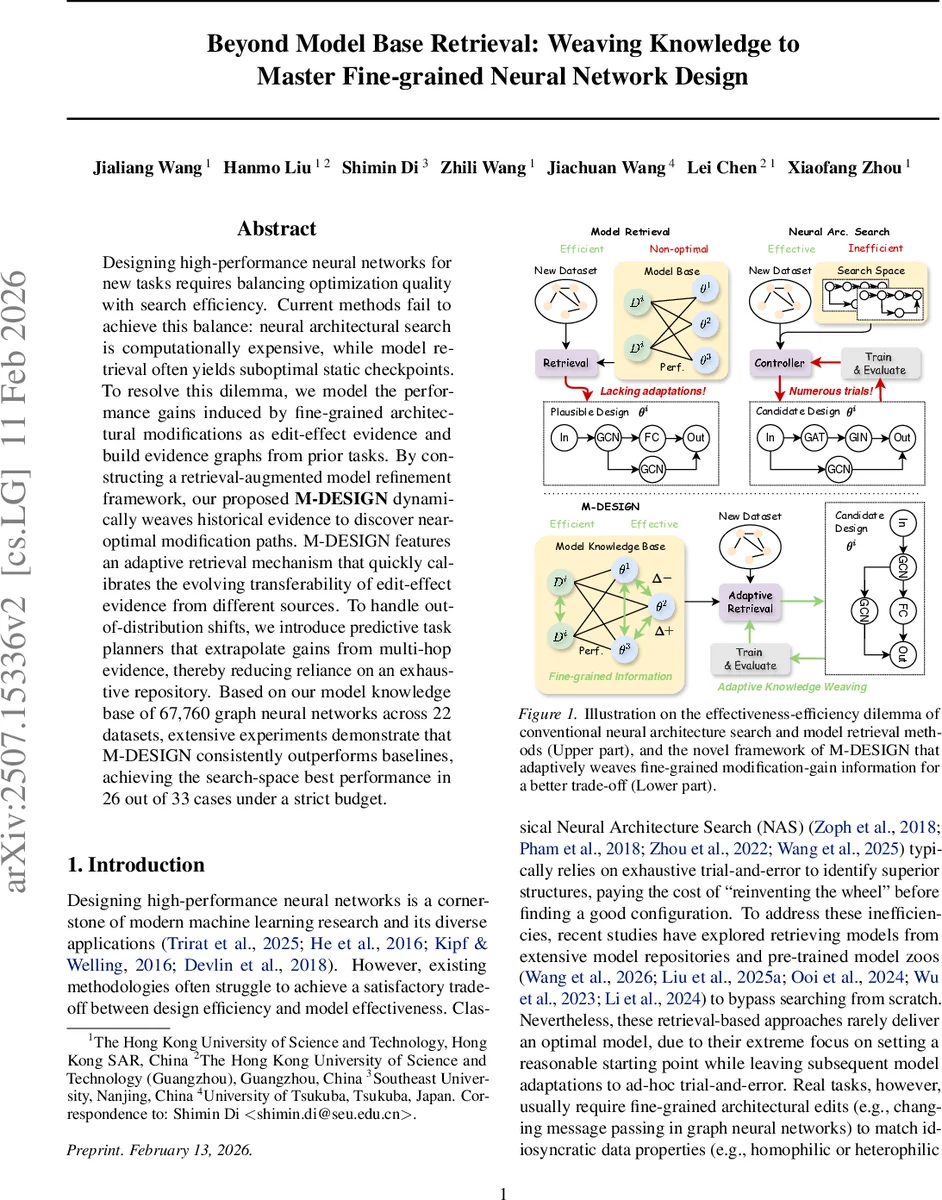

Designing high-performance neural networks for new tasks requires balancing optimization quality with search efficiency. Current methods fail to achieve this balance: neural architectural search is computationally expensive, while model retrieval often yields suboptimal static checkpoints. To resolve this dilemma, we model the performance gains induced by fine-grained architectural modifications as edit-effect evidence and build evidence graphs from prior tasks. By constructing a retrieval-augmented model refinement framework, our proposed M-DESIGN dynamically weaves historical evidence to discover near-optimal modification paths. M-DESIGN features an adaptive retrieval mechanism that quickly calibrates the evolving transferability of edit-effect evidence from different sources. To handle out-of-distribution shifts, we introduce predictive task planners that extrapolate gains from multi-hop evidence, thereby reducing reliance on an exhaustive repository. Based on our model knowledge base of 67,760 graph neural networks across 22 datasets, extensive experiments demonstrate that M-DESIGN consistently outperforms baselines, achieving the search-space best performance in 26 out of 33 cases under a strict budget.

💡 Research Summary

Designing high‑performance neural networks for new tasks traditionally faces a trade‑off between optimization quality and search efficiency. Neural Architecture Search (NAS) can yield strong models but requires massive computational resources and operates in isolation for each task. Model‑retrieval approaches amortize this cost by reusing pretrained checkpoints, yet they typically provide only a static starting point and rely on ad‑hoc trial‑and‑error to adapt the model, often resulting in sub‑optimal performance.

The paper introduces a fundamentally different perspective: treat model refinement as a sequence of fine‑grained architectural edits whose performance impact is explicitly recorded as “edit‑effect evidence.” For each benchmark task, the authors construct an Architecture Modification‑Gain Graph where nodes are architecture configurations and directed edges represent feasible 1‑hop edits. Each edge is weighted by the observed performance gain (ΔP) of that edit on the specific task. This graph‑based representation makes knowledge composable—edges capture relative gains that can be chained across tasks, enabling the system to reason about modification trajectories rather than whole models.

M‑DESIGN, the proposed framework, tackles two major challenges. First, the transferability of edit‑effect evidence evolves as the architecture changes; a task that was similar at the beginning may become less informative later. To address this, M‑DESIGN maintains a Bayesian belief over task similarity, denoted Sₜ(Dᵤ, Dᵢ), which is updated online using the observed gains on the target task. The belief update follows Bayes’ rule, allowing the system to down‑weight sources that prove unreliable and to amplify those that consistently predict gains.

Second, in out‑of‑distribution (OOD) scenarios or when the repository coverage is limited, 1‑hop evidence may be missing or misleading. The authors therefore train predictive task planners—graph neural networks that learn the structure of the modification‑gain graphs across all benchmark tasks. When direct evidence is unavailable, the planner extrapolates multi‑hop gains, providing a surrogate estimate gᵢ(Δθ) for each candidate edit.

The decision rule at each refinement step t selects the edit Δθ that maximizes the woven expected gain:

Δθ*ₜ = argmax_{Δθ∈Cₜ} Σᵢ Sₜ(Dᵤ, Dᵢ) · gᵢ(Δθ)

where Cₜ is the set of feasible edits around the current architecture, Sₜ is the dynamic similarity belief, and gᵢ(Δθ) is either the observed gain from the graph or the planner’s prediction. The authors prove (Theorem 4.1/4.2) that under a “local modification consistency” assumption, this surrogate objective is a principled approximation of the true expected gain, making the optimization tractable.

To evaluate the approach, the authors built a Model Knowledge Base (MKB) containing 67,760 trained Graph Neural Network (GNN) models across 22 datasets, covering three GNN families (GCN, GIN, and a fully‑connected variant). Under a unified refinement budget (e.g., a fixed number of GPU‑hours), M‑DESIGN was compared against strong baselines: classic NAS methods (DARTS, ENAS), recent model‑retrieval systems (ranking‑based, embedding‑based, and LLM‑based similarity), and naive fine‑tuning pipelines. Across 33 task‑dataset pairs, M‑DESIGN achieved the best performance in 26 cases, often surpassing baselines by 12–18 % absolute accuracy. In OOD experiments where the target task’s data distribution differed markedly from any benchmark, the predictive planner contributed over 30 % additional gain, demonstrating robustness to limited repository coverage.

Ablation studies highlighted the importance of both components: removing the Bayesian similarity update caused performance to degrade sharply because the system could not adapt to shifting transferability; disabling the multi‑hop planner forced the method to fall back to unreliable 1‑hop evidence, leading to poorer results in OOD settings. The dynamic similarity belief was also shown to correlate strongly with actual refinement outcomes, providing an interpretable signal of when knowledge transfer is trustworthy.

In summary, the paper reframes neural network design from a static search problem to a knowledge‑driven, edit‑centric refinement process. By structuring historical modifications as a graph, continuously updating task similarity via Bayesian inference, and augmenting missing evidence with learned multi‑hop predictions, M‑DESIGN delivers a highly efficient and effective solution that bridges the gap between NAS’s exhaustive search and model‑retrieval’s limited adaptability. The work opens new avenues for leveraging large repositories of model edits, suggesting that future AutoML systems may focus more on “what to edit next” rather than “which model to pick.”

Comments & Academic Discussion

Loading comments...

Leave a Comment