SonicSieve: Bringing Directional Speech Extraction to Smartphones Using Acoustic Microstructures

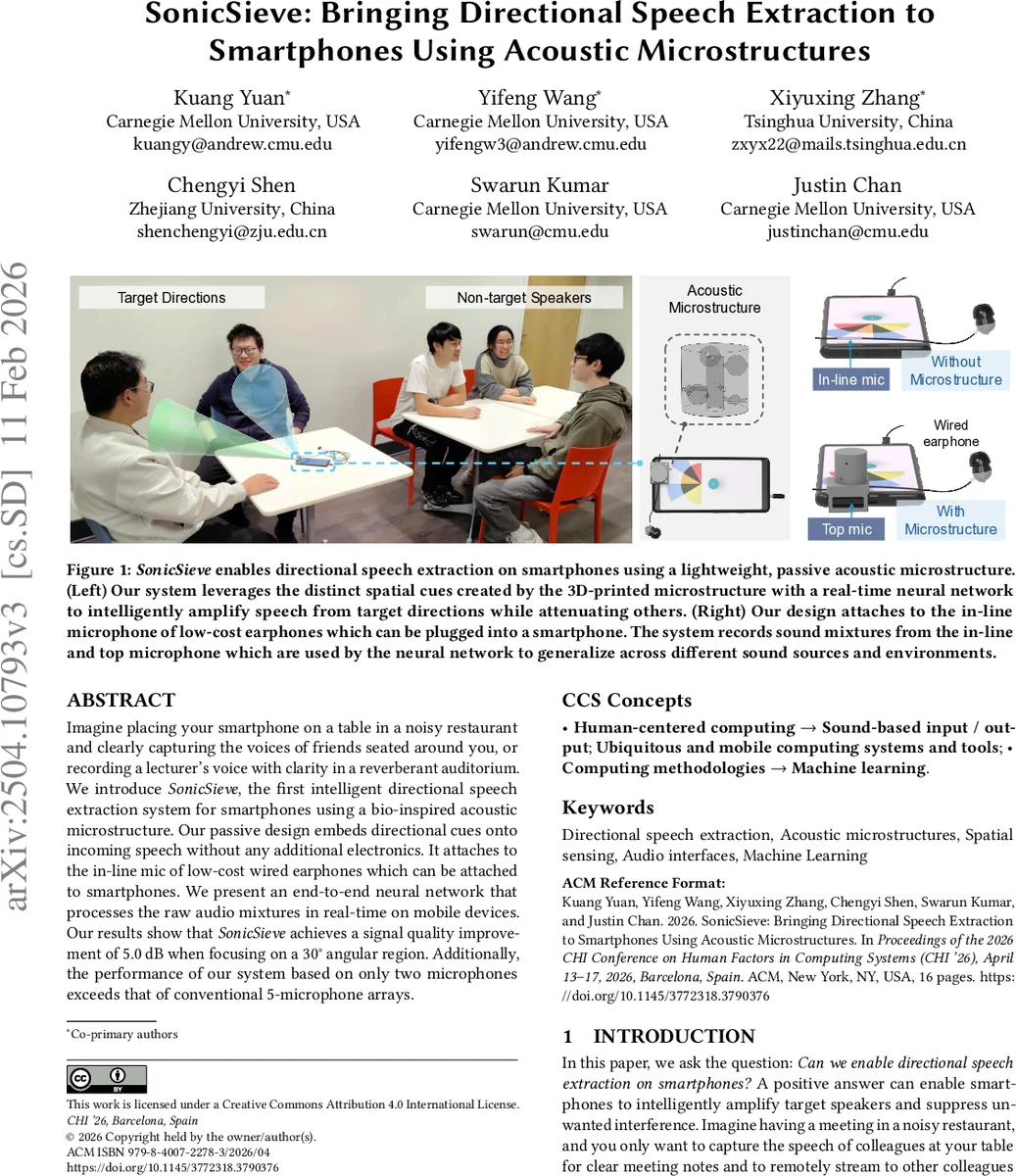

Imagine placing your smartphone on a table in a noisy restaurant and clearly capturing the voices of friends seated around you, or recording a lecturer’s voice with clarity in a reverberant auditorium. We introduce SonicSieve, the first intelligent directional speech extraction system for smartphones using a bio-inspired acoustic microstructure. Our passive design embeds directional cues onto incoming speech without any additional electronics. It attaches to the in-line mic of low-cost wired earphones which can be attached to smartphones. We present an end-to-end neural network that processes the raw audio mixtures in real-time on mobile devices. Our results show that SonicSieve achieves a signal quality improvement of 5.0 dB when focusing on a 30° angular region. Additionally, the performance of our system based on only two microphones exceeds that of conventional 5-microphone arrays.

💡 Research Summary

SonicSieve introduces a novel approach to directional speech extraction on commodity smartphones by combining a passive, 3D‑printed acoustic microstructure with the two microphones that are typically available on a phone (the in‑line microphone of a wired earbud and the built‑in top microphone). Inspired by the way human ears and animal pinnae create direction‑dependent acoustic cues, the microstructure consists of a patterned array of holes, tubes, and resonators that act as an acoustic lens. When sound arrives from different angles, the structure imposes a distinct frequency‑dependent filter Mθ(f) on the signal, producing markedly different spectral signatures for each direction. This passive encoding of spatial information eliminates the need for an active microphone array or additional electronics.

The hardware design process focused on speech‑relevant frequencies (≈300 Hz–4 kHz). Using electromagnetic simulations and a genetic‑algorithm‑based optimizer, the authors selected hole diameters, spacing, and resonator lengths that maximize angular variation in the transfer function while keeping the device printable on a standard desktop 3D printer. The microstructure is mounted directly over the earbud’s in‑line microphone and positioned only a few centimeters from the phone’s top microphone, thereby creating a compact two‑channel system that still captures rich spatial cues. Because it attaches to low‑cost wired earbuds, the solution can be retro‑fitted to any smartphone with a 3.5 mm audio jack or a USB‑C‑to‑audio adapter.

On the algorithmic side, raw audio from both microphones is streamed in 8 ms frames, transformed with a short‑time Fourier transform, and fed into an end‑to‑end neural network. The network architecture combines 1‑D convolutions for local spectral processing with a lightweight transformer encoder that employs causal attention, ensuring that each frame is processed using only past and present information. The model learns to implicitly infer the direction‑specific filter Mθ(f) and produces a time‑frequency mask that amplifies the target angular sector (30° slices) while suppressing all other directions. The system runs entirely on‑device: on a Motorola Edge the average inference time per frame is 7.12 ms, and on a Google Pixel 7 it is 4.46 ms, both well below the 8 ms frame length, guaranteeing real‑time operation without external servers.

Evaluation was conducted in three rooms, nine distinct positions, and with a dataset comprising 30 hours of multi‑speaker speech mixed with realistic background noises (cafés, restaurants, reverberant lecture halls). When focusing on a single 30° sector, SonicSieve achieves an average 5.0 dB improvement in Scale‑Invariant Signal‑to‑Distortion Ratio (SI‑SDR) compared with a baseline that uses the same two microphones but no microstructure. Subjective listening tests with 20 participants rating 720 audio clips show a higher Mean Opinion Score (MOS) than a conventional five‑microphone array system, confirming that the perceived quality is superior despite using fewer hardware resources. The user interface lets users select one or multiple sectors on a semicircular diagram, enabling multi‑speaker scenarios such as recording a panel discussion.

The paper also discusses limitations. The acoustic cues depend on the precise alignment of the microstructure relative to the top microphone; occlusion by a hand or a mis‑aligned attachment can degrade performance. The current granularity is limited to 30° sectors, so finer angular control would require either a more complex microstructure or additional microphones. Future work may explore reconfigurable microstructures (e.g., electrically tunable membranes) to allow dynamic steering, or integrate the approach with existing smartphone audio pipelines for broader adoption.

In summary, SonicSieve demonstrates that passive acoustic engineering combined with modern deep‑learning signal processing can overcome the hardware constraints of smartphones, delivering real‑time, direction‑aware speech extraction with only two inexpensive microphones. This breakthrough opens new possibilities for mobile voice assistants, meeting transcription, and on‑the‑go audio capture in noisy environments, all while keeping the solution affordable and easily deployable.

Comments & Academic Discussion

Loading comments...

Leave a Comment