Measuring Orthogonality as the Blind-Spot of Uncertainty Disentanglement

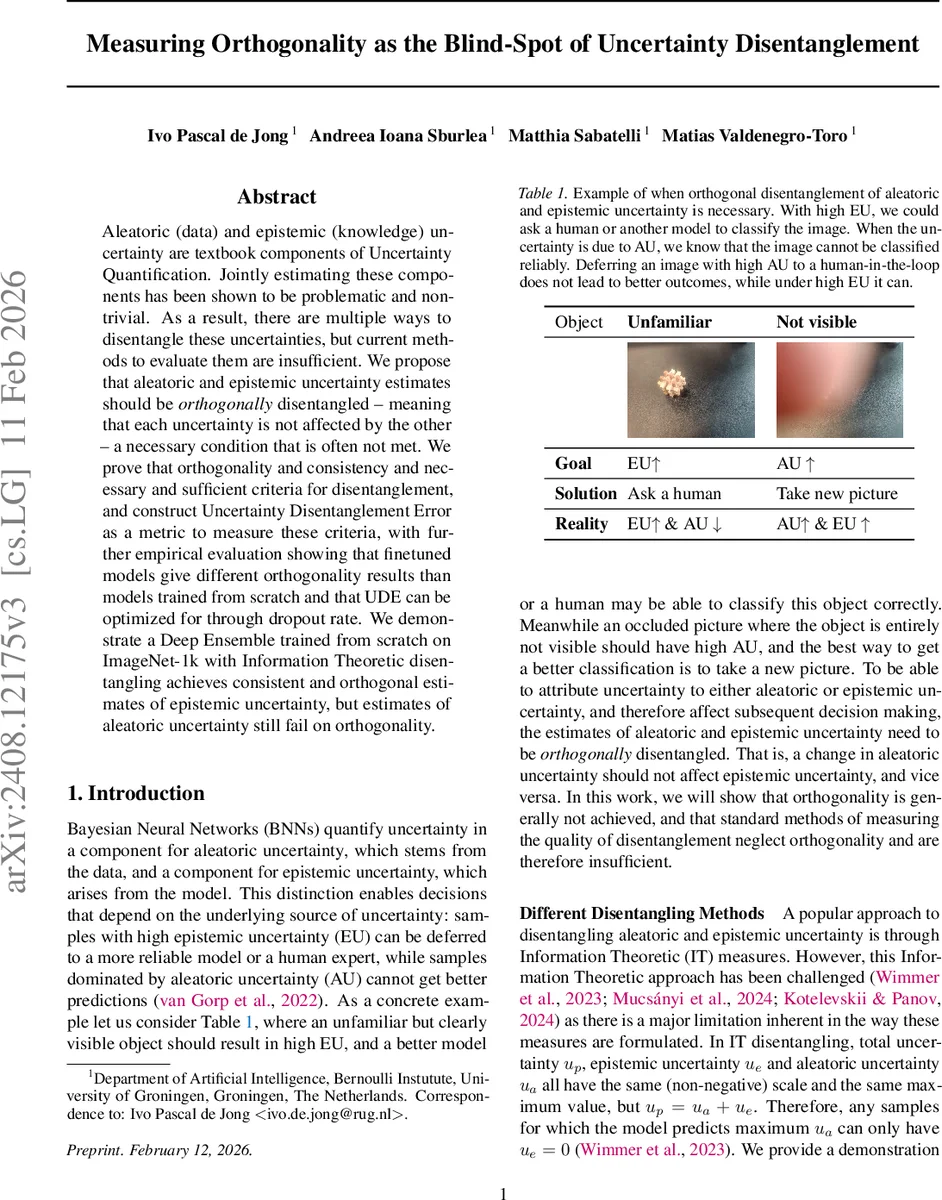

Aleatoric (data) and epistemic (knowledge) uncertainty are textbook components of Uncertainty Quantification. Jointly estimating these components has been shown to be problematic and non-trivial. As a result, there are multiple ways to disentangle these uncertainties, but current methods to evaluate them are insufficient. We propose that aleatoric and epistemic uncertainty estimates should be orthogonally disentangled - meaning that each uncertainty is not affected by the other - a necessary condition that is often not met. We prove that orthogonality and consistency are necessary and sufficient criteria for disentanglement, and construct Uncertainty Disentanglement Error (UDE) as a metric to measure these criteria. Further empirical evaluation shows that finetuned models give different orthogonality results than models trained from scratch and that UDE can be optimized for through dropout rate. We demonstrate that a Deep Ensemble trained from scratch on ImageNet-1k with Information Theoretic disentangling achieves consistent and orthogonal estimates of epistemic uncertainty, but estimates of aleatoric uncertainty still fail on orthogonality.

💡 Research Summary

The paper tackles a fundamental yet under‑examined issue in uncertainty quantification: the need for aleatoric (data‑driven) and epistemic (model‑driven) uncertainties to be orthogonal, i.e., changes in one should not affect the other. While many recent works propose ways to disentangle these two components, evaluation has traditionally focused only on consistency—whether each estimator correlates with its true latent counterpart. The authors argue that consistency alone is insufficient because a composite total uncertainty can satisfy consistency without any real separation (the “Total Uncertainty Trap”). Moreover, they show that correlation between aleatoric and epistemic estimates is not a reliable failure signal when the underlying latent uncertainties are themselves correlated (the “Necessity of Correlation” theorem).
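The "Total Uncertainty Trap" described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the paper's construction: the latent uncertainties are synthetic and independent, Pearson correlation stands in for the consistency check, and all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumption: synthetic, independent latent uncertainties per sample.
latent_aleatoric = rng.uniform(size=5000)
latent_epistemic = rng.uniform(size=5000)

# A "disentangler" that reports total uncertainty for both components.
total = latent_aleatoric + latent_epistemic
aleatoric_est = total
epistemic_est = total


def corr(a, b):
    """Pearson correlation between two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])


# Consistency-style checks: each estimate tracks its own latent quantity.
consistency_a = corr(aleatoric_est, latent_aleatoric)
consistency_e = corr(epistemic_est, latent_epistemic)

# Orthogonality-style check: the aleatoric estimate also tracks the
# latent epistemic quantity, so nothing has actually been separated.
leakage_a = corr(aleatoric_est, latent_epistemic)

print(consistency_a, consistency_e, leakage_a)
```

In this toy setting both consistency-style correlations are clearly positive, yet the aleatoric estimate responds just as strongly to the latent epistemic uncertainty. A check that looks only at consistency would not flag this.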

To address this gap, the authors formalize four conditions for proper disentanglement: two consistency conditions (C1, C2) and two orthogonality conditions (O1, O2). They prove that C1 + C2 are necessary but not sufficient, while adding O1 + O2 yields a necessary and sufficient set (Fundamental Disentanglement theorem). Based on these criteria they introduce the Uncertainty Disentanglement Error (UDE), a metric that simultaneously measures consistency and orthogonality through two controlled experiments:

- Dataset‑size scaling – reducing the training set size should increase epistemic uncertainty while leaving aleatoric uncertainty unchanged.

- Label‑noise injection – adding random label noise should raise aleatoric uncertainty while epistemic uncertainty remains stable.

By tracking accuracy changes across these manipulations, UDE quantifies how well a method respects the orthogonal behavior.
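The two manipulations can be sketched as follows. This is an illustration only: the function names, the uniform label-replacement scheme, and the slope-based summary are assumptions made for the example and are not the paper's UDE definition, which the summary does not give.

```python
import numpy as np


def subsample(X, y, fraction, rng):
    """Dataset-size scaling: keep a random fraction of the training set."""
    n_keep = max(1, int(round(fraction * len(y))))
    idx = rng.choice(len(y), size=n_keep, replace=False)
    return X[idx], y[idx]


def inject_label_noise(y, noise_rate, n_classes, rng):
    """Label-noise injection: redraw a random share of labels uniformly.

    A redrawn label can coincide with the original one, so the share of
    labels that actually change is lower than noise_rate.
    """
    y_noisy = y.copy()
    flip = rng.random(len(y)) < noise_rate
    y_noisy[flip] = rng.integers(0, n_classes, size=flip.sum())
    return y_noisy


def response_slope(levels, mean_uncertainty):
    """Least-squares slope of mean uncertainty against manipulation level.

    Hypothetical summary statistic: a slope near zero suggests the
    estimate is unaffected by the manipulation.
    """
    return float(np.polyfit(levels, mean_uncertainty, 1)[0])


rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 4))        # placeholder features
y = rng.integers(0, 3, size=1000)     # placeholder labels, 3 classes

X_small, y_small = subsample(X, y, 0.1, rng)
y_noisy = inject_label_noise(y, 0.5, 3, rng)
```

In use, a model would be retrained at each dataset fraction or noise rate, and the mean aleatoric and epistemic estimates on a fixed test set would be recorded per level. Under the behavior described above, the epistemic estimate should respond to dataset size and not to label noise, and the aleatoric estimate the reverse.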

The authors evaluate four Bayesian neural‑network approximations—MC‑Dropout, MC‑DropConnect, Deep Ensembles, and Flipout—across four domains (CIFAR‑10, Fashion‑MNIST, UCI Wine, and a BCI time‑series dataset). Two disentangling strategies are compared: the Information‑Theoretic (IT) approach, which decomposes total predictive entropy into expected entropy (aleatoric) and mutual information (epistemic), and the Gaussian Logits method, which treats logits as Gaussian and separates variance into aleatoric and epistemic parts.
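The Information-Theoretic decomposition mentioned above is the standard identity: the entropy of the mean prediction equals the expected entropy of the individual predictions plus the mutual information. A minimal NumPy sketch follows, assuming softmax outputs stacked as (members, samples, classes). The function name and the `eps` smoothing constant are choices made for this example, not the paper's package API.

```python
import numpy as np


def it_disentangle(probs, eps=1e-12):
    """Split predictive entropy into aleatoric and epistemic parts.

    probs: array of shape (n_members, n_samples, n_classes) holding
    softmax outputs from ensemble members or stochastic forward passes.
    Returns (total, aleatoric, epistemic), each of shape (n_samples,).
    """
    probs = np.asarray(probs, dtype=float)
    mean_probs = probs.mean(axis=0)

    # Total uncertainty: entropy of the averaged prediction.
    total = -np.sum(mean_probs * np.log(mean_probs + eps), axis=-1)

    # Aleatoric: entropy of each member's prediction, averaged over members.
    aleatoric = -np.sum(probs * np.log(probs + eps), axis=-1).mean(axis=0)

    # Epistemic: mutual information, the gap between the two.
    epistemic = total - aleatoric
    return total, aleatoric, epistemic


# Two members that agree on an ambiguous prediction.
agree = np.array([[[0.5, 0.5]], [[0.5, 0.5]]])
# Two members that confidently disagree.
disagree = np.array([[[0.99, 0.01]], [[0.01, 0.99]]])

t_agree, a_agree, e_agree = it_disentangle(agree)
t_dis, a_dis, e_dis = it_disentangle(disagree)
```

When the members agree on an ambiguous prediction, all of the uncertainty is attributed to the aleatoric term. When they confidently disagree, most of it moves to the epistemic term, even though the total entropy is the same in both cases.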

Key empirical findings:

- Consistency is generally achieved: epistemic estimates decrease with more data, and aleatoric estimates increase with label noise, across most models.

- Orthogonality is rarely satisfied: aleatoric estimates often rise when dataset size grows, indicating leakage from epistemic to aleatoric components. The IT method succeeds in producing orthogonal epistemic estimates but fails for aleatoric estimates. Gaussian Logits fails on both fronts.

- Fine‑tuning harms orthogonality: models initialized from pretrained weights exhibit markedly lower orthogonality scores than those trained from scratch, suggesting that transfer learning introduces hidden dependencies between the two uncertainties.

- Dropout rate can be tuned to minimize UDE: systematic sweeps reveal optimal dropout probabilities (≈0.1–0.2) that balance consistency and orthogonality, though the exact optimum varies by architecture and dataset.

- Large‑scale ImageNet‑1k experiment: a Deep Ensemble trained from scratch with IT disentangling yields epistemic uncertainty that is both consistent and orthogonal, yet aleatoric uncertainty remains entangled with epistemic signals. This confirms prior observations that aleatoric and epistemic uncertainties are often correlated in complex real‑world data.

The paper also provides an open‑source Python package for computing UDE on both scikit‑learn and PyTorch models, facilitating reproducibility and future benchmarking.

In conclusion, the work reframes uncertainty disentanglement by elevating orthogonality to a first‑class requirement, supplies a rigorous theoretical foundation, and delivers a practical metric (UDE) that reveals systematic shortcomings of existing methods. It calls for next‑generation uncertainty estimation techniques that explicitly enforce orthogonal behavior, especially in safety‑critical applications where distinguishing the source of uncertainty is essential for reliable decision‑making.
