A Low-Rank Defense Method for Adversarial Attack on Diffusion Models

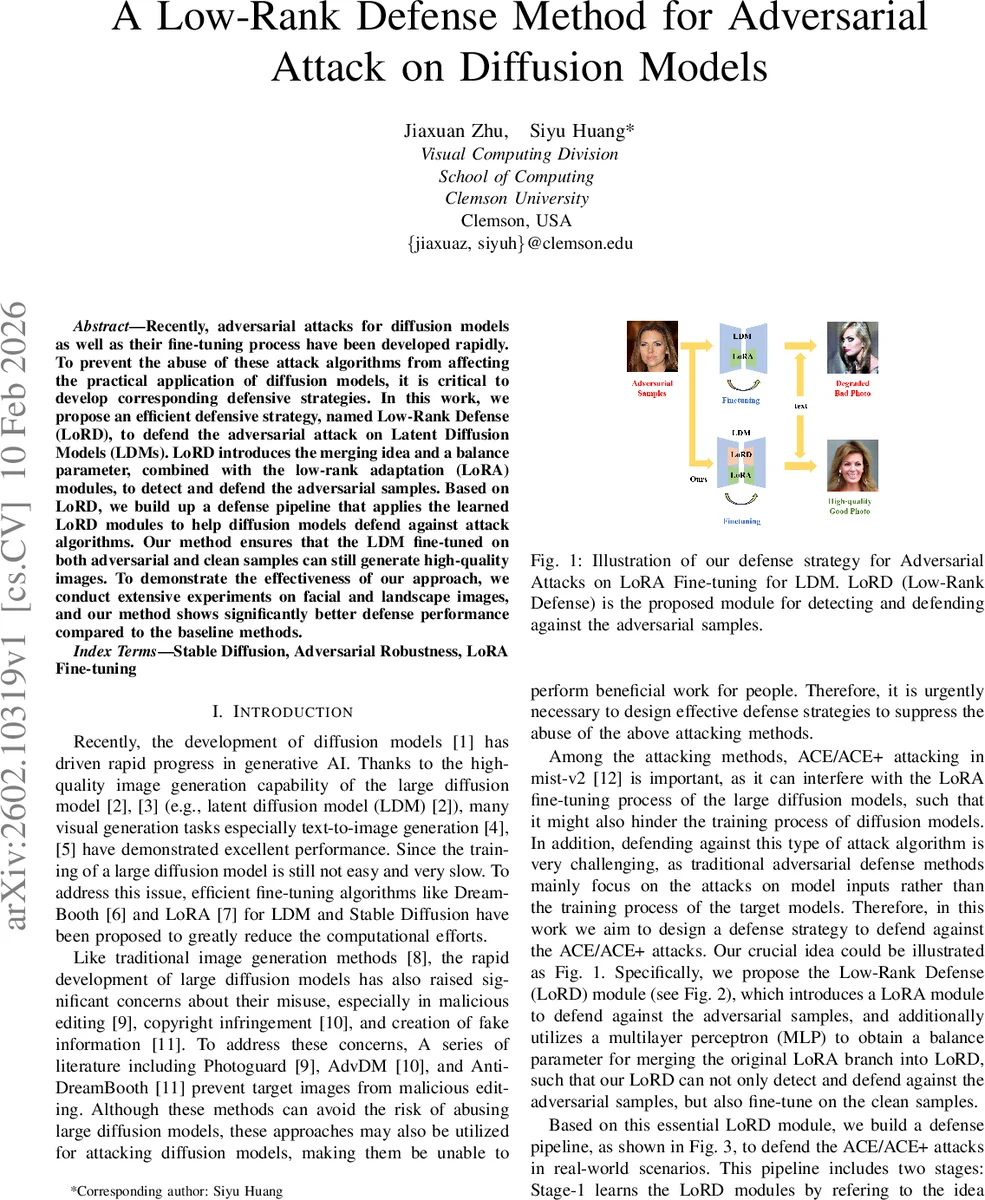

Recently, adversarial attacks on diffusion models and on their fine-tuning process have developed rapidly. To prevent abuse of these attack algorithms from undermining practical applications of diffusion models, it is critical to develop corresponding defensive strategies. In this work, we propose an efficient defensive strategy, named Low-Rank Defense (LoRD), to defend against adversarial attacks on Latent Diffusion Models (LDMs). LoRD combines a merging idea and a balance parameter with low-rank adaptation (LoRA) modules to detect and defend against adversarial samples. Based on LoRD, we build a defense pipeline that applies the learned LoRD modules to help diffusion models defend against attack algorithms. Our method ensures that an LDM fine-tuned on both adversarial and clean samples can still generate high-quality images. To demonstrate the effectiveness of our approach, we conduct extensive experiments on facial and landscape images, where our method shows significantly better defense performance than the baseline methods.

💡 Research Summary

The paper addresses a newly emerging threat: adversarial attacks that target the LoRA fine‑tuning process of Latent Diffusion Models (LDMs). While most prior defenses focus on protecting model inputs, the authors propose a defense that operates directly on the fine‑tuning stage, specifically against ACE/ACE+ attacks introduced in mist‑v2. Their solution, called Low‑Rank Defense (LoRD), augments the standard LoRA module with a second low‑rank branch (matrix B′) and a lightweight multilayer perceptron (MLP) that produces a balance parameter λ. The MLP takes the scaled LoRA output (α_r B A x) as input, passes it through a sigmoid, and yields λ∈
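The balance-parameter idea described in the summary can be illustrated with a minimal, dependency-free Python sketch. It is not the authors' implementation. It assumes that the summary's α_r denotes the standard LoRA scaling α/r, that both branches share the down-projection A, that the MLP has one hidden ReLU layer and returns a scalar, and that λ mixes the two branches as a convex combination. The summary does not state the mixing rule, so that part is purely illustrative, and the names `balance_parameter` and `lord_delta` are hypothetical.

```python
import math


def matvec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def sigmoid(z):
    """Logistic function; maps any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def balance_parameter(lora_out, W1, b1, w2, b2):
    """Hypothetical MLP: one hidden ReLU layer, then a sigmoid on a scalar logit."""
    hidden = [max(0.0, h + b) for h, b in zip(matvec(W1, lora_out), b1)]
    logit = sum(w * h for w, h in zip(w2, hidden)) + b2
    return sigmoid(logit)


def lord_delta(x, A, B, B_prime, alpha, r, mlp):
    """Return (low-rank update, lambda) for input x.

    A: r x d_in, B and B_prime: d_out x r, mlp: tuple (W1, b1, w2, b2).
    """
    scale = alpha / r                      # assumed standard LoRA scaling
    z = matvec(A, x)                       # shared down-projection (assumption)
    branch = [scale * v for v in matvec(B, z)]              # (alpha / r) * B A x
    branch_prime = [scale * v for v in matvec(B_prime, z)]  # second branch via B'
    lam = balance_parameter(branch, *mlp)  # MLP input is the scaled LoRA output
    # ASSUMPTION: convex mix of the two branches; the source does not give this rule.
    delta = [lam * u + (1.0 - lam) * v for u, v in zip(branch, branch_prime)]
    return delta, lam
```

In a full model the returned update would be added to the frozen layer's output `W x`, as in ordinary LoRA. With the assumed mixing rule, λ near 1 leaves the update close to the plain LoRA branch, while λ near 0 shifts it toward the B′ branch.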
