Causality in Video Diffusers is Separable from Denoising

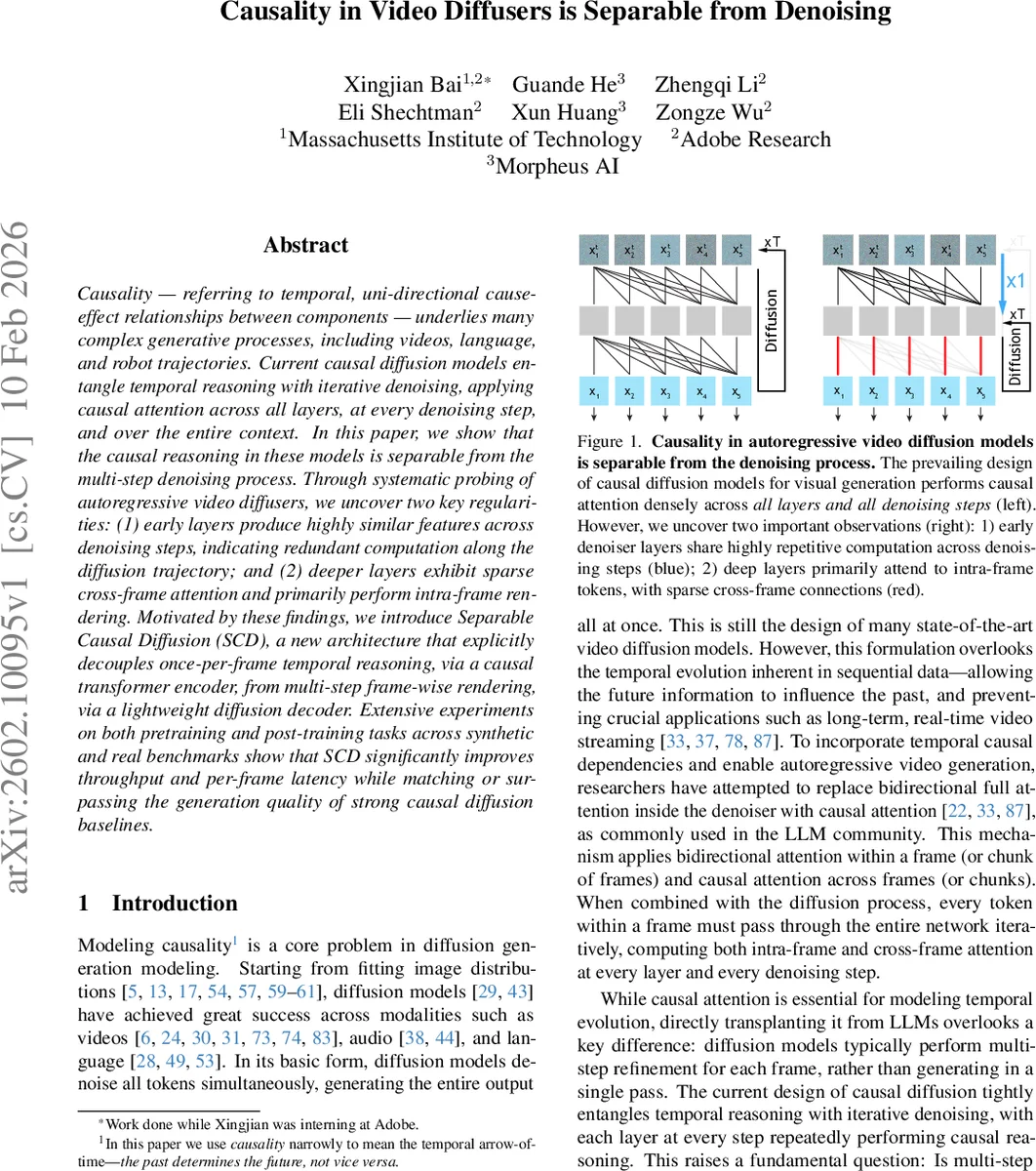

Causality – referring to temporal, uni-directional cause-effect relationships between components – underlies many complex generative processes, including videos, language, and robot trajectories. Current causal diffusion models entangle temporal reasoning with iterative denoising, applying causal attention across all layers, at every denoising step, and over the entire context. In this paper, we show that the causal reasoning in these models is separable from the multi-step denoising process. Through systematic probing of autoregressive video diffusers, we uncover two key regularities: (1) early layers produce highly similar features across denoising steps, indicating redundant computation along the diffusion trajectory; and (2) deeper layers exhibit sparse cross-frame attention and primarily perform intra-frame rendering. Motivated by these findings, we introduce Separable Causal Diffusion (SCD), a new architecture that explicitly decouples once-per-frame temporal reasoning, via a causal transformer encoder, from multi-step frame-wise rendering, via a lightweight diffusion decoder. Extensive experiments on both pretraining and post-training tasks across synthetic and real benchmarks show that SCD significantly improves throughput and per-frame latency while matching or surpassing the generation quality of strong causal diffusion baselines.

💡 Research Summary

This paper revisits the design of causal video diffusion models, which traditionally intertwine temporal causality with the multi‑step denoising process by applying causal attention at every layer and every diffusion timestep. Through extensive probing of an autoregressive video diffuser (WAN‑2.1 T2V‑1.3B), the authors discover two systematic regularities: (1) early and middle layers produce almost identical feature representations across all denoising steps (cosine similarity > 0.95, PCA alignment), indicating that the high‑level structure and motion of a frame are established within the first few diffusion steps; later steps merely refine pixel‑level details. (2) Deeper layers allocate very little attention mass to past frames, focusing almost exclusively on intra‑frame tokens. When the last five layers are forced to attend only within the current frame, generation quality remains stable after a brief fine‑tuning, confirming that cross‑frame reasoning is unnecessary in deep layers. Motivated by these findings, the authors propose Separable Causal Diffusion (SCD), an architecture that explicitly decouples temporal reasoning from visual refinement. A causal transformer encoder processes the clean history once per frame, caches KV pairs, and produces a context latent that summarizes entities, layout, and motion. This latent is reused across all diffusion steps for the target frame. A lightweight diffusion decoder then performs frame‑wise iterative denoising using the noisy frame tokens and the cached context, without any cross‑frame attention. This design mirrors next‑token prediction in large language models but applies it to next‑frame prediction followed by per‑frame rendering. Experiments on both pre‑training from scratch and fine‑tuning from a pretrained bidirectional teacher model across synthetic and real video benchmarks show that SCD matches or surpasses strong causal diffusion baselines in FVD, IS, and other quality metrics while achieving 2–3× lower per‑frame latency and higher throughput. The work demonstrates that temporal causality can be performed once per frame, and that the remaining denoising steps can be confined to intra‑frame processing, offering a more efficient solution for real‑time streaming, interactive generation, and other latency‑sensitive video applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment