Beyond a Single Queue: Multi-Level-Multi-Queue as an Effective Design for SSSP problems on GPUs

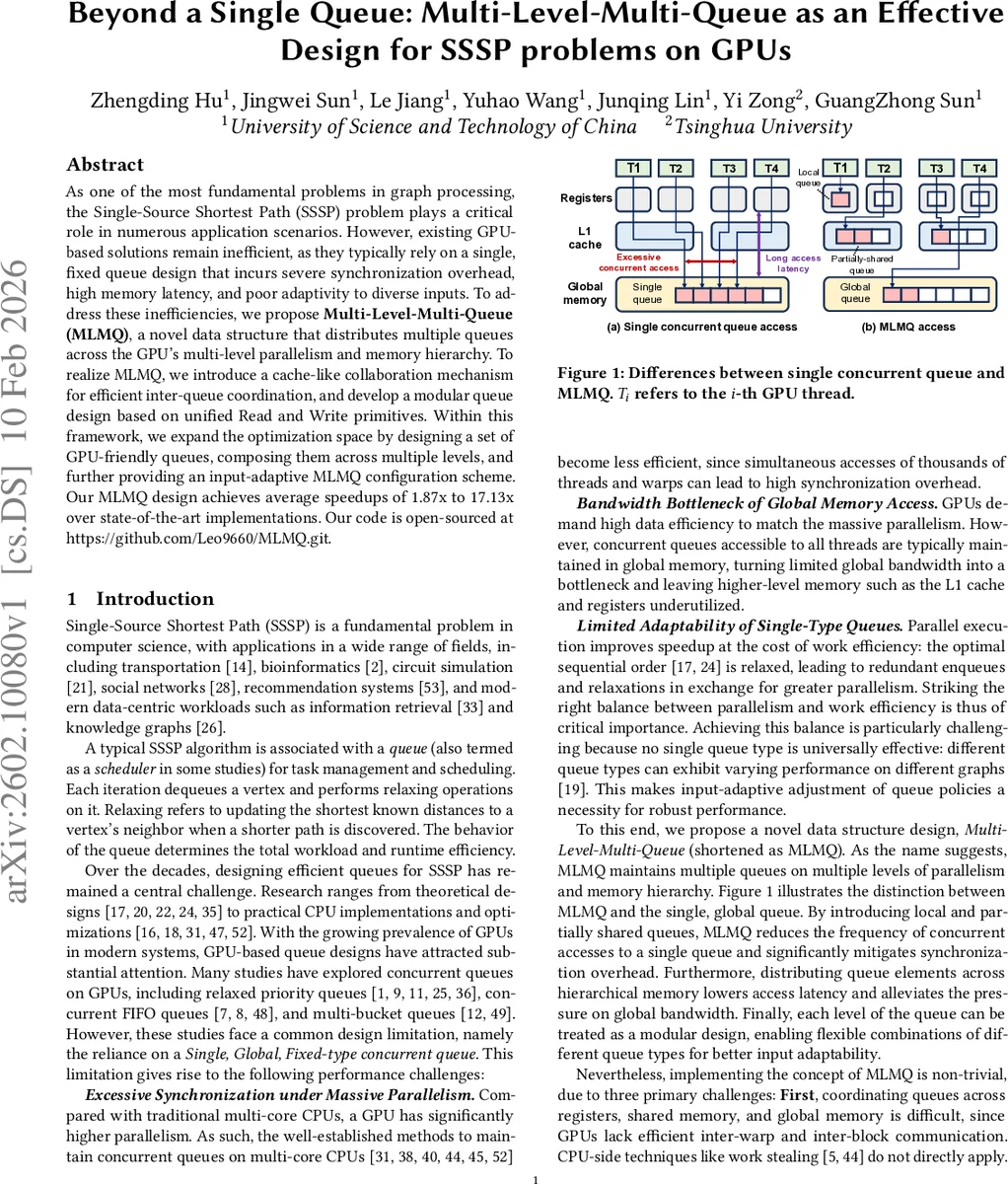

As one of the most fundamental problems in graph processing, the Single-Source Shortest Path (SSSP) problem plays a critical role in numerous application scenarios. However, existing GPU-based solutions remain inefficient, as they typically rely on a single, fixed queue design that incurs severe synchronization overhead, high memory latency, and poor adaptivity to diverse inputs. To address these inefficiencies, we propose MultiLevelMultiQueue (MLMQ), a novel data structure that distributes multiple queues across the GPU’s multi-level parallelism and memory hierarchy. To realize MLMQ, we introduce a cache-like collaboration mechanism for efficient inter-queue coordination, and develop a modular queue design based on unified Read and Write primitives. Within this framework, we expand the optimization space by designing a set of GPU-friendly queues, composing them across multiple levels, and further providing an input-adaptive MLMQ configuration scheme. Our MLMQ design achieves average speedups of 1.87x to 17.13x over state-of-the-art implementations. Our code is open-sourced at https://github.com/Leo9660/MLMQ.git.

💡 Research Summary

The paper addresses the long‑standing performance bottlenecks of GPU‑based Single‑Source Shortest Path (SSSP) algorithms that rely on a single, global queue. Such designs suffer from excessive synchronization due to massive parallel contention, high latency and bandwidth pressure from frequent global‑memory accesses, and a lack of adaptability to the wide variety of real‑world graph structures. To overcome these issues, the authors propose Multi‑Level‑Multi‑Queue (MLMQ), a novel data‑structure that distributes several queues across the GPU’s hierarchical parallelism (threads, warps, blocks) and memory hierarchy (registers, shared memory, global memory).

MLMQ’s core innovation is a cache‑like collaboration mechanism that moves work from higher‑level queues (typically residing in global memory) to lower‑level queues (in shared memory or registers) using lightweight “Read” and “Write” primitives. These primitives provide a uniform interface regardless of the underlying queue type, enabling modular composition of FIFO queues, bucket queues (Δ‑stepping), and lightweight priority queues. By keeping most enqueue/dequeue operations on‑chip, MLMQ dramatically reduces the number of atomic operations and global memory traffic.

The design also tackles three major challenges: (1) coordinating queues across memory levels despite limited visibility; (2) preventing priority inversion and redundant wavefront propagation that arise when each warp works on its own local queue; (3) navigating the enlarged design space where the optimal combination of queue types, placements, and parameters depends on graph characteristics. The authors solve (1) with a cache‑style data exchange that limits synchronization to intra‑warp or intra‑block scopes, (2) by assigning simple FIFO policies to higher‑level queues while allowing more precise ordering in lower‑level queues, and (3) by introducing an input‑adaptive configuration strategy. This strategy extracts graph features (average degree, diameter, weight distribution) and either applies rule‑based heuristics or a lightweight machine‑learning model to select the best MLMQ configuration at runtime.

Experimental evaluation on twelve diverse real‑world graphs (road networks, social networks, meshes, synthetic graphs) compares MLMQ‑based SSSP against state‑of‑the‑art GPU frameworks such as Gunrock and CuGraph, as well as prior single‑queue implementations. MLMQ reduces atomic operations by up to 9×, cuts global memory accesses by more than 70 %, and achieves speedups ranging from 1.87× to 17.13×. The adaptive scheme guarantees that even on graphs where a particular queue type would be suboptimal, MLMQ still outperforms or matches the best static baseline.

The paper concludes that multi‑level, multi‑queue designs are essential for exploiting modern GPU architectures in graph processing. By providing modular queue primitives, a cache‑like collaboration layer, and an automatic configuration mechanism, MLMQ offers a flexible foundation that can be extended to other graph algorithms (BFS, PageRank, connected components) and to heterogeneous or multi‑GPU environments. The open‑source implementation further encourages reproducibility and future research on adaptive, hierarchical data structures for high‑performance GPU computing.

Comments & Academic Discussion

Loading comments...

Leave a Comment