Can Image Splicing and Copy-Move Forgery Be Detected by the Same Model? Forensim: An Attention-Based State-Space Approach

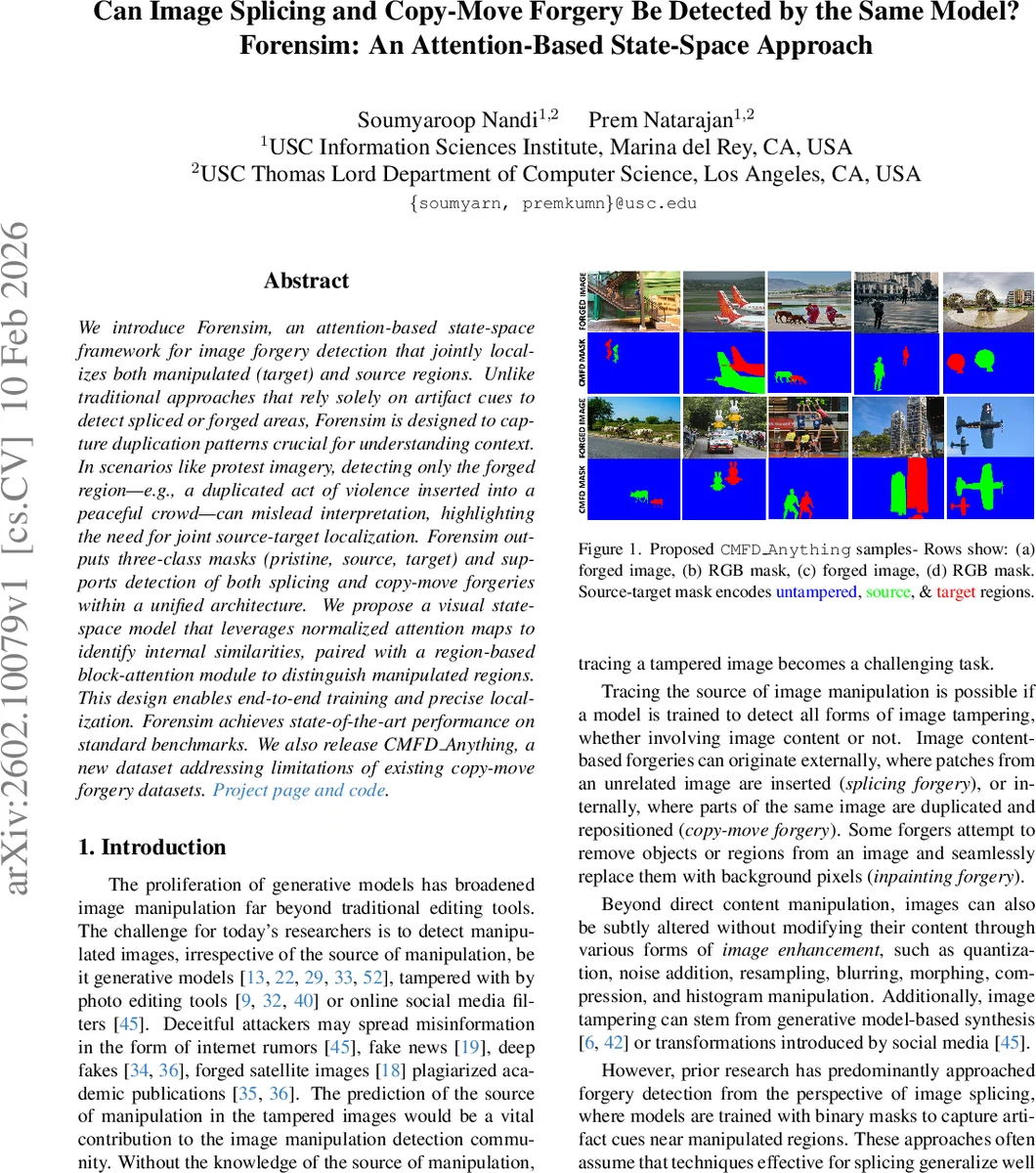

We introduce Forensim, an attention-based state-space framework for image forgery detection that jointly localizes both manipulated (target) and source regions. Unlike traditional approaches that rely solely on artifact cues to detect spliced or forged areas, Forensim is designed to capture duplication patterns crucial for understanding context. In scenarios such as protest imagery, detecting only the forged region, for example a duplicated act of violence inserted into a peaceful crowd, can mislead interpretation, highlighting the need for joint source-target localization. Forensim outputs three-class masks (pristine, source, target) and supports detection of both splicing and copy-move forgeries within a unified architecture. We propose a visual state-space model that leverages normalized attention maps to identify internal similarities, paired with a region-based block attention module to distinguish manipulated regions. This design enables end-to-end training and precise localization. Forensim achieves state-of-the-art performance on standard benchmarks. We also release CMFD-Anything, a new dataset addressing limitations of existing copy-move forgery datasets.

💡 Research Summary

The paper tackles a fundamental question in image forensics: can a single model detect both splicing (insertion of foreign content) and copy‑move (duplication of internal content) forgeries? Historically, most forgery‑detection systems have been specialized. Splicing detectors rely on low‑level artifact cues—compression inconsistencies, noise patterns, camera sensor fingerprints—and are trained with binary masks that label pixels as either forged or pristine. Copy‑move detectors, on the other hand, focus on finding duplicated regions but often ignore the source region, providing only a binary indication of tampering. This separation limits interpretability and hampers investigations where the provenance of the duplicated content is crucial (e.g., tracing a forged protest image back to its original source).

Forensim proposes a unified framework that treats forgery localization as a three‑class segmentation problem: pristine, source (the region that has been copied), and target (the region where the copy has been pasted). By supervising the network with source‑target masks, the model learns to simultaneously identify the manipulated area and its origin, thereby offering richer forensic insight.

The architecture builds on a Vision Transformer (ViT) backbone pretrained with DINO. The first four transformer layers produce high‑dimensional token embeddings (384‑dimensional) for each pixel. These embeddings are fed into two novel attention modules, both implemented as visual state‑space models (VSSM) to achieve linear computational complexity while capturing long‑range dependencies.

-

Similarity Attention (Sim Attn) – Constructs an explicit N × N affinity matrix (N = H × W) that quantifies pairwise similarity between all spatial locations. The matrix is generated by linearly projecting token embeddings, applying ELU activation, and enriching them with Rotary Positional Embeddings (RoPE). A distance‑based weighting kernel K and a bidirectional softmax normalize the affinities, producing a refined similarity map that highlights regions with high mutual correspondence. This map is especially effective for detecting duplicated patches because copy‑move forgeries generate clusters of high similarity between source and target.

-

Manipulation State‑Space Attention (MSSA) – A multi‑scale attention block that processes the same token embeddings through three parallel VSSM units, each with a different number of heads and receptive field. MSSA incorporates Locally Enhanced Positional Encoding (LePE) via depth‑wise convolutions and RoPE to preserve fine‑grained spatial cues. The outputs of the three scales are averaged, reshaped, and passed through a convolution‑SiLU head to produce a manipulation map that emphasizes likely forged regions while suppressing background noise.

The two maps (similarity and manipulation) are concatenated and decoded by a lightweight segmentation head that outputs the three‑class mask. Because the attention mechanisms are built on state‑space equations, they scale linearly with image size, enabling high‑resolution processing without the quadratic cost of classic self‑attention.

A major contribution is the introduction of CMFD Anything, a new copy‑move forgery dataset derived from high‑quality Segment‑Anything images. Unlike earlier CMFD datasets that were generated from low‑resolution MS‑COCO images and contained easily detectable forgeries, CMFD Anything uses images >1024 px, applies realistic post‑processing (color jitter, JPEG compression, Gaussian noise, blur), and creates source‑target pairs that are visually plausible. This dataset addresses the scarcity of diverse, high‑fidelity copy‑move examples and serves as a robust benchmark for both copy‑move and splicing detection.

Training is performed jointly on traditional splicing datasets (binary masks) and CMFD Anything (source‑target masks), allowing the model to learn both artifact‑based cues and duplication patterns. Extensive experiments on standard benchmarks (CASIA, COVER, NIST, etc.) demonstrate that Forensim outperforms state‑of‑the‑art splicing detectors (ManTraNet, CAT‑Net, HiFi‑Net) and copy‑move detectors (BusterNet, DOA‑GAN) across all metrics. Notably, Forensim achieves a 4–7 percentage‑point gain in F1‑score and IoU for three‑class segmentation, and it markedly reduces false positives in regions with naturally repeating textures. Ablation studies confirm that Sim Attn and MSSA each contribute positively, but their combination yields the highest performance, evidencing a synergistic effect.

In summary, Forensim introduces: (i) a unified three‑class training paradigm that simultaneously localizes forged and source regions, (ii) a novel, efficient state‑space‑based attention architecture tailored for similarity and manipulation detection, and (iii) a high‑quality copy‑move dataset that pushes the community toward more realistic evaluation. The work opens avenues for extending the approach to multimodal forgeries (e.g., text‑to‑image generation), video frame duplication, and broader forensic pipelines where provenance tracing is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment