AIDED: Augmenting Interior Design with Human Experience Data for Designer-AI Co-Design

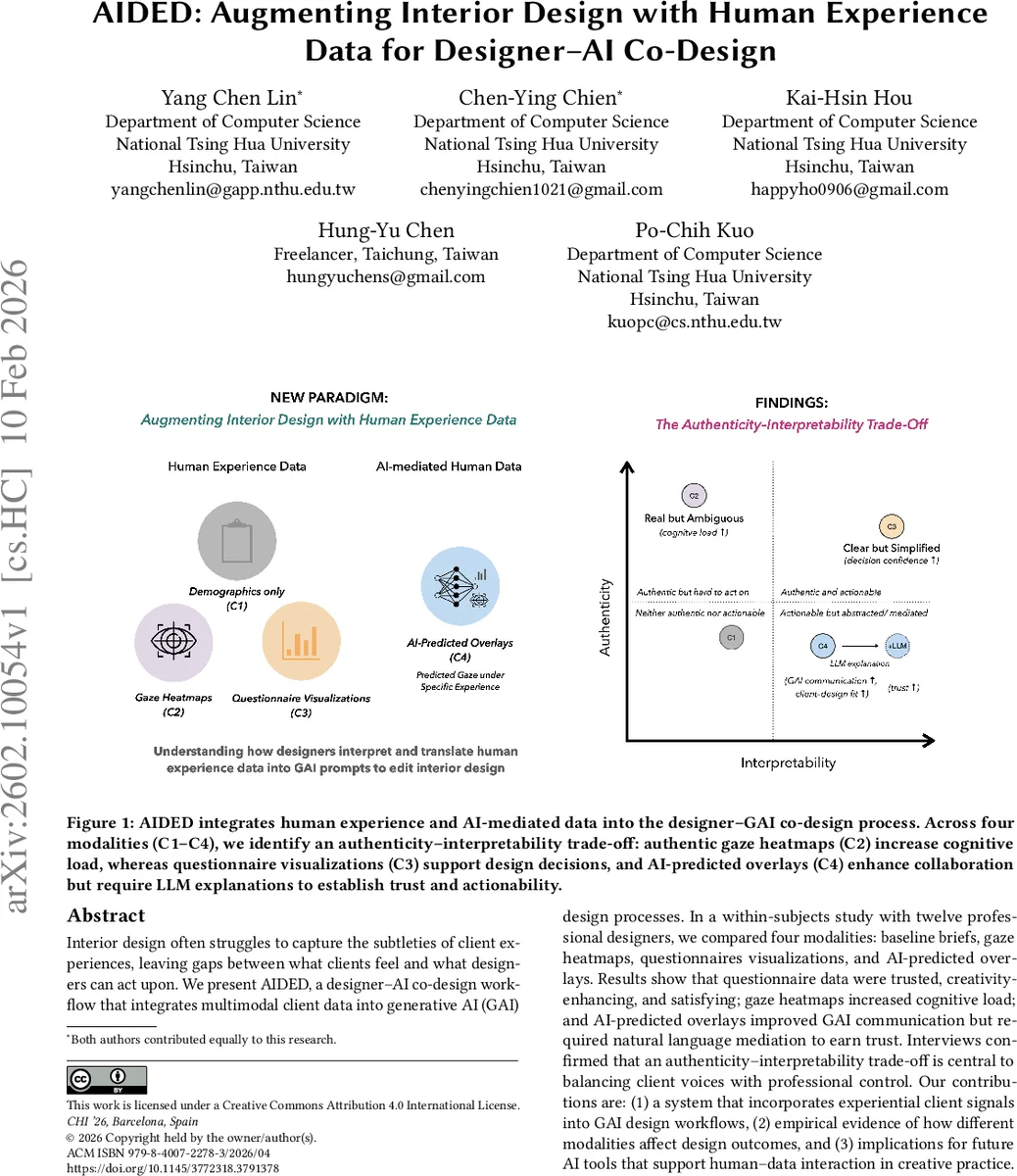

Interior design often struggles to capture the subtleties of client experience, leaving gaps between what clients feel and what designers can act upon. We present AIDED, a designer-AI co-design workflow that integrates multimodal client data into generative AI (GAI) design processes. In a within-subjects study with twelve professional designers, we compared four modalities: baseline briefs, gaze heatmaps, questionnaire visualizations, and AI-predicted overlays. Results show that questionnaire data were trusted, creativity-enhancing, and satisfying; gaze heatmaps increased cognitive load; and AI-predicted overlays improved GAI communication but required natural language mediation to establish trust. Interviews confirmed that an authenticity-interpretability trade-off is central to balancing client voices with professional control. Our contributions are: (1) a system that incorporates experiential client signals into GAI design workflows; (2) empirical evidence of how different modalities affect design outcomes; and (3) implications for future AI tools that support human-data interaction in creative practice.

💡 Research Summary


The paper introduces AIDED, a designer‑AI co‑design workflow that enriches interior design with multimodal client experience data. The authors argue that traditional interior design processes rely heavily on designers’ expertise and generic briefs, while the subtle emotional, cognitive, and behavioral cues of clients are often ignored. To bridge this gap, AIDED integrates three types of experiential signals—gaze heatmaps, questionnaire visualizations, and AI‑predicted attention overlays—into a generative AI (GAI) pipeline, alongside a conventional brief (baseline condition).

The system is built around an “authenticity‑interpretability” spectrum. Authenticity refers to how faithfully a representation preserves the richness and ambiguity of raw client experience (e.g., raw gaze traces), whereas interpretability captures how easily designers can read, trust, and act upon the data within fast‑paced GAI workflows (e.g., structured questionnaire charts). Four conditions were evaluated: (C1) baseline brief, (C2) raw gaze heatmaps, (C3) processed questionnaire visualizations, and (C4) AI‑predicted overlays accompanied by large‑language‑model (LLM) explanations.

The authors ran a within‑subjects study with twelve professional interior designers. Participants completed design tasks under each condition, and the study recorded their perceived cognitive load (NASA‑TLX), trust in the data, creativity (idea quantity and diversity), and overall satisfaction. Quantitatively, questionnaire visualizations (C3) yielded the highest trust scores (mean 4.6/5) and creativity ratings (mean 4.3/5) while imposing the lowest cognitive load. Gaze heatmaps (C2) significantly increased cognitive effort (mean TLX 4.2/5) because designers struggled to translate raw saliency patterns into actionable design decisions. AI‑predicted overlays (C4) improved communication with the generative model, letting designers steer image generation more precisely, but only when accompanied by natural‑language explanations; without LLM mediation, trust dropped to 3.8/5.
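A within‑subjects design with four conditions is usually counterbalanced so that order effects do not favor one condition. The summary does not say how the authors ordered C1 to C4, so the following is only a minimal sketch of one common approach, a balanced Latin square, assuming twelve participants cycle through its four rows. The function names `balanced_latin_square` and `assign_orders` are hypothetical and do not come from the paper.

```python
# Hypothetical sketch: counterbalancing four conditions across twelve
# participants with a balanced Latin square. The paper summary does not
# state the ordering scheme actually used; this is an assumed illustration.

CONDITIONS = ["C1", "C2", "C3", "C4"]  # labels taken from the summary


def balanced_latin_square(n):
    """Return an n x n balanced Latin square (n must be even).

    Each condition appears once per position, and each ordered pair of
    adjacent conditions appears exactly once across the rows.
    """
    if n % 2 != 0:
        raise ValueError("this construction requires an even n")
    # First row follows the pattern 0, 1, n-1, 2, n-2, ...
    first = [0]
    low, high = 1, n - 1
    for j in range(1, n):
        if j % 2 == 1:
            first.append(low)
            low += 1
        else:
            first.append(high)
            high -= 1
    # Every later row shifts the first row by one, modulo n.
    return [[(x + i) % n for x in first] for i in range(n)]


def assign_orders(num_participants, conditions=CONDITIONS):
    """Cycle participants through the rows of the square."""
    square = balanced_latin_square(len(conditions))
    return [
        [conditions[idx] for idx in square[p % len(square)]]
        for p in range(num_participants)
    ]


if __name__ == "__main__":
    for participant, order in enumerate(assign_orders(12), start=1):
        print(f"P{participant:02d}: {' -> '.join(order)}")
```

With twelve participants and four rows, each row is used three times, so every condition occupies every serial position equally often under this assumed scheme.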

Qualitative interviews revealed a consistent “authenticity‑interpretability trade‑off.” Designers appreciated the richness of raw data but found it opaque; they preferred processed visualizations for quick decision‑making but feared loss of nuanced client needs. AI‑generated overlays acted as a bridge between human intent and model behavior, yet required transparent justification to be adopted confidently. The authors therefore propose that future design tools must pair data visualizations with explanatory mechanisms (e.g., LLM‑generated rationales) to sustain both designer autonomy and client‑centered outcomes.

The contributions are threefold: (1) a concrete system (AIDED) that expands the input space of interior design tools to include experiential signals, (2) empirical evidence mapping how different modalities affect designers’ cognitive load, trust, creativity, and satisfaction, and (3) the articulation of an “authenticity‑interpretability” theoretical lens that extends prior work on human‑AI co‑creation to the domain of multimodal client data. The paper also discusses broader implications such as data privacy, ethical handling of biometric signals, and the need for explainable AI to preserve professional agency. In sum, AIDED demonstrates that when client experience data are integrated in a way that balances raw authenticity with actionable interpretability, generative AI can act as a collaborative partner rather than a mere style engine, opening new pathways for human‑data‑AI collaboration in interior and architectural design.
