Code2World: A GUI World Model via Renderable Code Generation

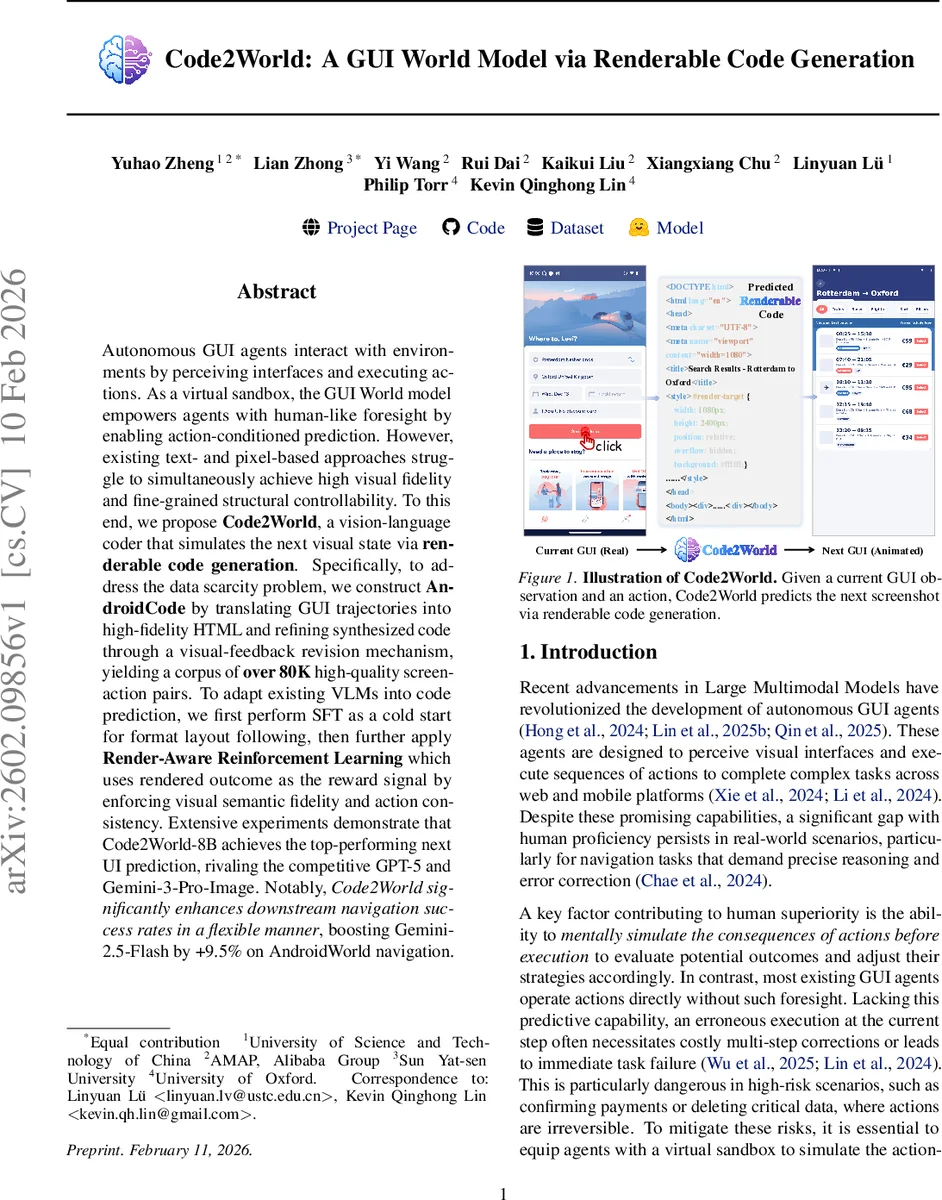

Autonomous GUI agents interact with environments by perceiving interfaces and executing actions. As a virtual sandbox, the GUI World model empowers agents with human-like foresight by enabling action-conditioned prediction. However, existing text- and pixel-based approaches struggle to simultaneously achieve high visual fidelity and fine-grained structural controllability. To this end, we propose Code2World, a vision-language coder that simulates the next visual state via renderable code generation. Specifically, to address the data scarcity problem, we construct AndroidCode by translating GUI trajectories into high-fidelity HTML and refining synthesized code through a visual-feedback revision mechanism, yielding a corpus of over 80K high-quality screen-action pairs. To adapt existing VLMs into code prediction, we first perform SFT as a cold start for format layout following, then further apply Render-Aware Reinforcement Learning which uses rendered outcome as the reward signal by enforcing visual semantic fidelity and action consistency. Extensive experiments demonstrate that Code2World-8B achieves the top-performing next UI prediction, rivaling the competitive GPT-5 and Gemini-3-Pro-Image. Notably, Code2World significantly enhances downstream navigation success rates in a flexible manner, boosting Gemini-2.5-Flash by +9.5% on AndroidWorld navigation. The code is available at https://github.com/AMAP-ML/Code2World.

💡 Research Summary

Code2World introduces a novel paradigm for building a world model of graphical user interfaces (GUIs) by generating renderable code rather than directly predicting pixel images or textual descriptions. The authors argue that GUI screens are fundamentally defined by structured code (e.g., HTML/CSS), which inherently provides both precise structural controllability and high‑fidelity visual output when rendered. To operationalize this idea, they construct a large‑scale dataset called AndroidCode, consisting of over 80,000 screen‑action pairs. Each sample is created by translating raw Android UI screenshots from the AndroidControl benchmark into self‑contained HTML using GPT‑5, followed by a visual‑feedback revision loop: the generated HTML is rendered, compared to the original screenshot with a SigLIP similarity score, and if the score falls below a high threshold, the model is prompted to correct the code based on the visual discrepancy. This pipeline yields high‑quality, tightly aligned code‑image pairs without manual annotation.

The model training proceeds in two stages. First, a supervised fine‑tuning (SFT) phase adapts the Qwen‑3‑VL‑8B‑Instruct backbone to map the triplet (current screenshot, user action, task goal) to the target HTML code. This stage teaches the model basic HTML syntax and layout reasoning but does not consider the final rendered image. Second, a Render‑Aware Reinforcement Learning (RARL) stage uses the rendered outcome of sampled HTML as a learning signal. Two complementary reward components are defined: (1) Visual Semantic Reward (R_sem) evaluates structural and semantic alignment between the rendered prediction and the ground‑truth screenshot using a VLM‑as‑Judge that tolerates placeholder assets and focuses on layout correspondence; (2) Action Consistency Reward (R_act) checks whether the predicted next screen logically follows from the executed action, again via a VLM judgment on the (current screen, action, predicted screen) triple. The total reward is a weighted sum of R_sem and R_act, and the policy is optimized with Group Relative Policy Optimization (GRPO). This design forces the model to bridge the gap between textual code generation and visual reality while respecting the dynamics of user actions.

Evaluation is performed on two fronts. In the Next UI Prediction benchmark, Code2World‑8B achieves state‑of‑the‑art performance, matching or surpassing large multimodal models such as GPT‑5 and Gemini‑3‑Pro‑Image in both structural accuracy and visual fidelity. In downstream navigation experiments, the authors plug Code2World into an existing GUI agent (Gemini‑2.5‑Flash) as a virtual sandbox that simulates the result of each action before execution. This integration improves the agent’s navigation success rate on the AndroidWorld benchmark by an average of 9.5 %, demonstrating that a high‑quality world model can materially enhance planning and error recovery for autonomous GUI agents.

The paper also discusses limitations. The current implementation is restricted to HTML/CSS representations, which cannot capture native Android widgets, complex animations, or platform‑specific UI components. Moreover, differences between browser rendering and actual device rendering (fonts, DPI, system themes) may introduce a domain gap. Future work is suggested to extend the approach to other UI frameworks such as React‑Native or Flutter, and to incorporate multi‑engine rendering checks to better align simulated outputs with real device screens.

In summary, Code2World offers a compelling solution to the longstanding trade‑off between visual fidelity and structural controllability in GUI world modeling. By leveraging a large, automatically refined code‑image dataset and a render‑aware reinforcement learning framework, it achieves competitive performance with leading multimodal models and demonstrably boosts downstream agent capabilities. The work opens a promising direction for building more reliable, simulation‑driven autonomous agents that can safely plan actions in complex software environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment