ArtifactLens: Hundreds of Labels Are Enough for Artifact Detection with VLMs

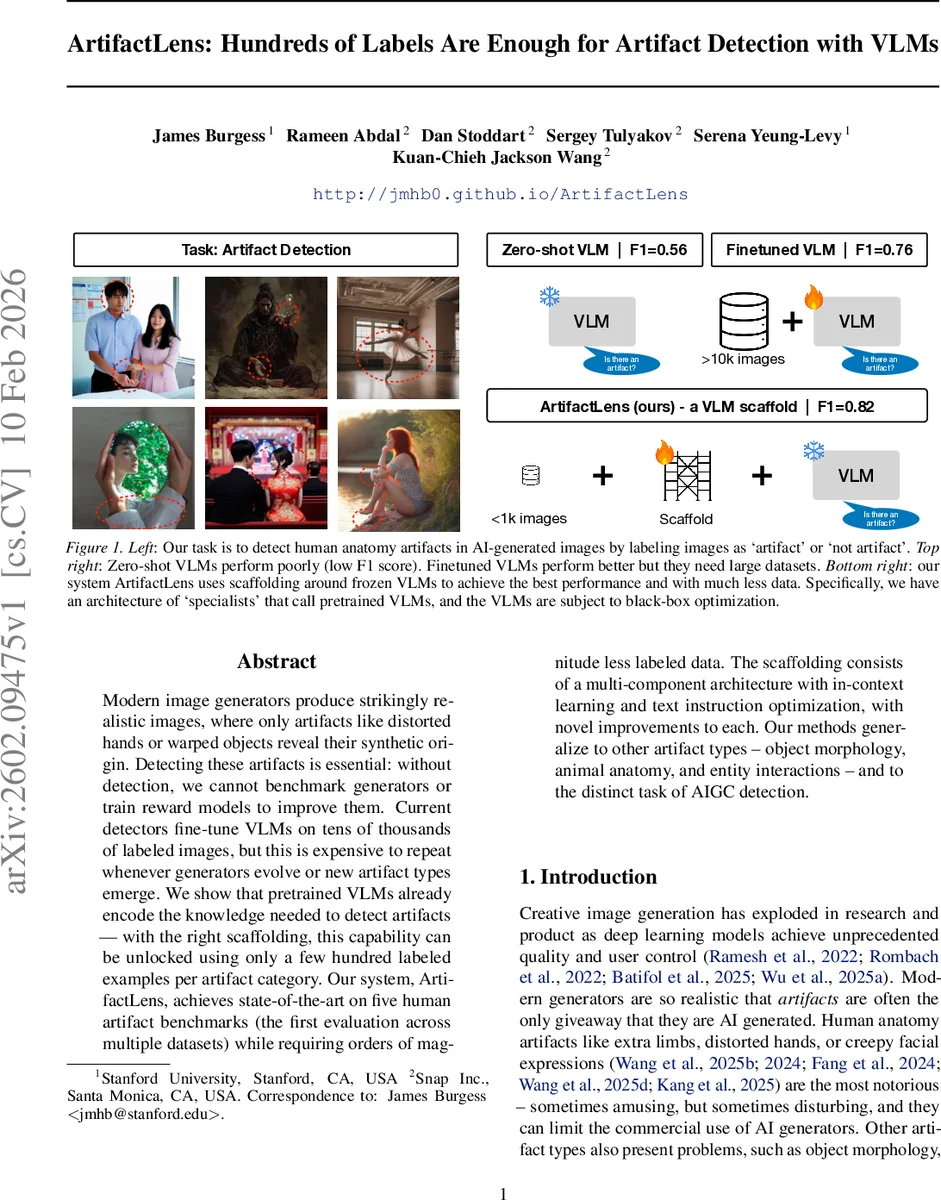

Modern image generators produce strikingly realistic images, where only artifacts like distorted hands or warped objects reveal their synthetic origin. Detecting these artifacts is essential: without detection, we cannot benchmark generators or train reward models to improve them. Current detectors fine-tune VLMs on tens of thousands of labeled images, but this is expensive to repeat whenever generators evolve or new artifact types emerge. We show that pretrained VLMs already encode the knowledge needed to detect artifacts - with the right scaffolding, this capability can be unlocked using only a few hundred labeled examples per artifact category. Our system, ArtifactLens, achieves state-of-the-art on five human artifact benchmarks (the first evaluation across multiple datasets) while requiring orders of magnitude less labeled data. The scaffolding consists of a multi-component architecture with in-context learning and text instruction optimization, with novel improvements to each. Our methods generalize to other artifact types - object morphology, animal anatomy, and entity interactions - and to the distinct task of AIGC detection.

💡 Research Summary

ArtifactLens tackles the problem of detecting visual artifacts in AI‑generated images—particularly subtle human anatomy errors such as extra limbs, distorted hands, or uncanny facial expressions—by leveraging frozen vision‑language models (VLMs) rather than fine‑tuning them on massive labeled datasets. The authors argue that modern VLMs already encode the necessary visual‑semantic knowledge; the challenge lies in exposing this capability through an effective scaffolding layer.

The proposed system consists of three tightly integrated components. First, a multi‑component architecture creates a set of “specialists,” each dedicated to a single artifact type (e.g., deformed hand, missing leg). Each specialist receives a cropped region of interest, allowing the VLM to focus on a small, semantically relevant area. Second, the specialists are equipped with multimodal in‑context learning (ICL). Instead of random demonstrations, the authors introduce “counterfactual demonstrations”: pairs of images that are semantically similar but have opposite labels. Similarity is measured using CLIP embeddings, ensuring the VLM can clearly see the boundary between artifact and non‑artifact. Third, the textual prompt that guides the VLM is optimized via a large language model (LLM). The LLM generates a pool of prompts that span a “full spectrum” of confidence thresholds—from highly conservative to aggressively permissive. These prompts are evaluated on a development set, and the best‑performing ones are iteratively refined. This dual black‑box optimization (ICL + prompt search) dramatically reduces the amount of supervision needed.

Experiments were conducted on five human‑artifact benchmarks (SynArtifact, AbHuman, HAD, and two others), covering more than 100 k images and a variety of sub‑label taxonomies. Using Gemini‑2.5‑Pro as the backbone VLM, ArtifactLens achieved an 8 percentage‑point boost in F1 score over the strongest fine‑tuned baseline while using only 10 % of the training data. Remarkably, performance degraded by less than 9 % when the training set was reduced to just 200 labeled examples. Smaller VLMs (Gemini‑2.5‑Flash) remained competitive, trailing the best model by only 9 percentage points, and even an open‑source Qwen2.5‑VL‑7B benefited substantially from the scaffolding.

Beyond human anatomy, the same scaffolding was applied to other artifact domains—object morphology, animal anatomy, irrational interactions—and to the broader task of AI‑generated content (AIGC) detection. In all cases, zero‑shot VLM performance improved by at least 45 %, demonstrating strong generalization.

Key contributions include: (1) a modular specialist architecture that decomposes artifact detection into tractable sub‑tasks; (2) counterfactual demonstrations for more informative ICL; (3) full‑spectrum prompt optimization to counteract VLM bias against rare artifact classes; and (4) the first multi‑benchmark evaluation of artifact detectors, establishing a comprehensive baseline for future work.

The paper also discusses limitations: it focuses on image‑level binary labels, leaving bounding‑box or pixel‑level detection for future research, and the specialist count and demonstration selection may need further automation via meta‑learning. Nonetheless, ArtifactLens proves that a few hundred labeled examples are sufficient to unlock the latent artifact‑detection abilities of modern VLMs, offering a data‑efficient, scalable solution for the rapidly evolving landscape of generative AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment