Auditing Multi-Agent LLM Reasoning Trees Outperforms Majority Vote and LLM-as-Judge

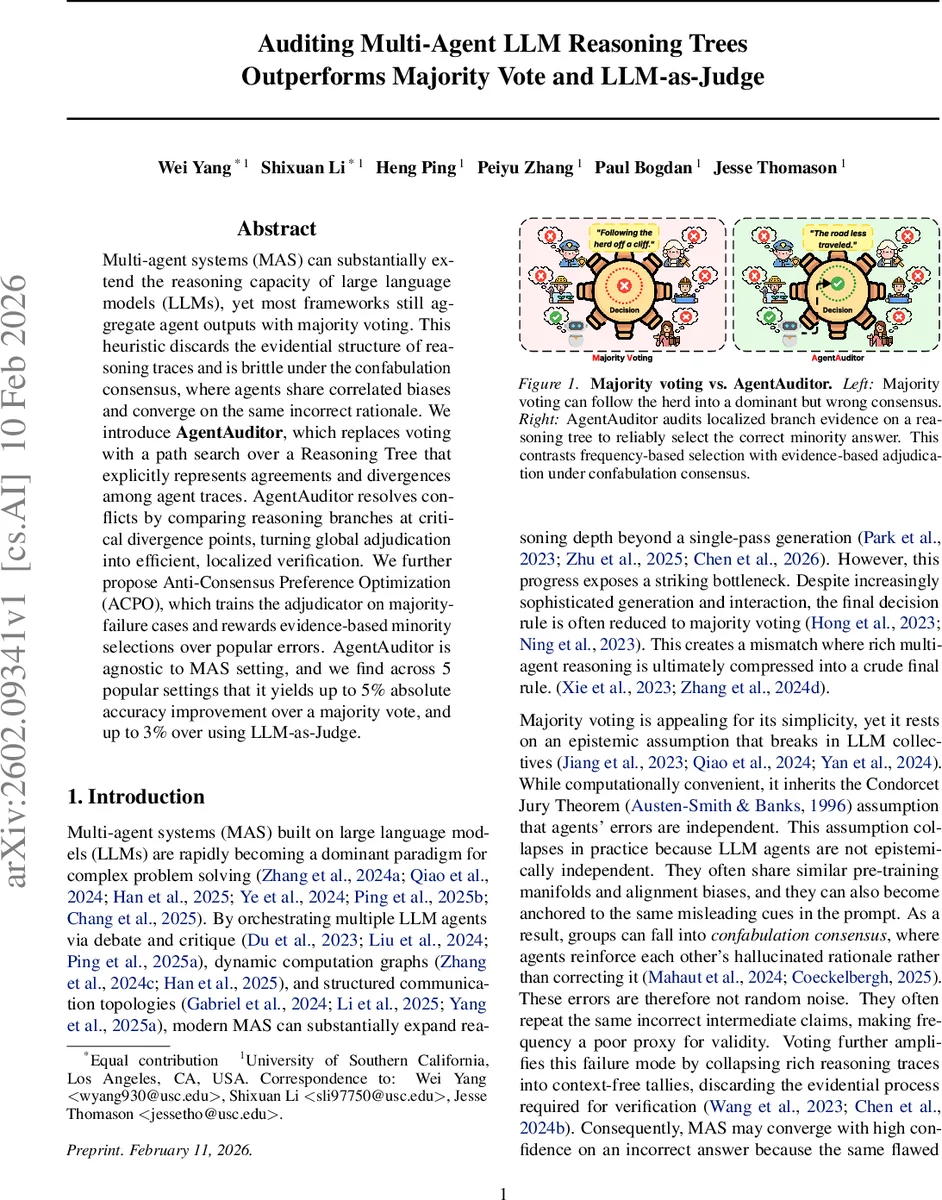

Multi-agent systems (MAS) can substantially extend the reasoning capacity of large language models (LLMs), yet most frameworks still aggregate agent outputs with majority voting. This heuristic discards the evidential structure of reasoning traces and is brittle under the confabulation consensus, where agents share correlated biases and converge on the same incorrect rationale. We introduce AgentAuditor, which replaces voting with a path search over a Reasoning Tree that explicitly represents agreements and divergences among agent traces. AgentAuditor resolves conflicts by comparing reasoning branches at critical divergence points, turning global adjudication into efficient, localized verification. We further propose Anti-Consensus Preference Optimization (ACPO), which trains the adjudicator on majority-failure cases and rewards evidence-based minority selections over popular errors. AgentAuditor is agnostic to MAS setting, and we find across 5 popular settings that it yields up to 5% absolute accuracy improvement over a majority vote, and up to 3% over using LLM-as-Judge.

💡 Research Summary

The paper tackles a fundamental weakness in current multi‑agent large language model (LLM) systems: the final decision is almost always reduced to a simple majority vote. While majority voting is easy to implement, it rests on the assumption that each agent’s error is independent. In practice, LLM agents share the same pre‑training data, architecture, and alignment signals, leading to highly correlated mistakes. The authors call this phenomenon “confabulation consensus,” where many agents converge on the same incorrect rationale. In such cases, the majority answer can be wrong, and a vote‑based aggregator will amplify the error instead of correcting it.

To overcome this, the authors introduce AgentAuditor, a novel aggregation framework that replaces voting with a reasoning‑tree‑based audit. The workflow consists of three main stages: (1) Trace atomization – each agent’s raw output is parsed into a sequence of discrete semantic steps using a decomposition model Φ; (2) Reasoning Tree construction – the atomized steps are embedded and incrementally inserted into a tree. Nodes represent shared reasoning steps, while branches appear when semantic similarity falls below a threshold τ, indicating a divergence. Each node stores a centroid embedding (updated via exponential moving average) and a support set of agents that traversed it. This yields a compact representation of all agents’ reasoning, exposing where they agree and where they split. (3) Structure‑adaptive auditing – the tree is traversed to locate Critical Divergence Points (CDPs), nodes with two or more children. For each CDP a “Divergence Packet” is built, containing the common prefix and the immediate post‑split evidence from each branch. A lightweight LLM‑as‑Judge model evaluates these packets, comparing the branches on factual correctness, logical consistency, and evidence richness. The winning branch’s final answer is propagated as the system’s output. By focusing computation only on CDPs, the audit scales logarithmically with the number of agents and avoids the costly full‑trace evaluation required by naïve LLM‑as‑Judge approaches.

Training the auditor is addressed with Anti‑Consensus Preference Optimization (ACPO). The authors collect a dataset of “consensus‑trap” instances where the majority is wrong and the minority is correct. During fine‑tuning, the loss penalizes the model for siding with the majority in these cases and rewards it for selecting the minority branch that provides stronger evidence. Concretely, the loss combines a cross‑entropy term that encourages minority‑correct predictions with a regression term that aligns the model’s confidence scores with an evidence‑quality metric. This explicitly reduces the model’s sycophancy bias toward popular but erroneous answers.

The experimental evaluation spans five representative MAS frameworks (debate, critique, dynamic computation graphs, structured communication topologies, and self‑organizing collaboration) and four task domains (mathematical problem solving, scientific reasoning, code generation, and general knowledge QA). For each setting, 8–16 agents are run, and three aggregation baselines are compared: (i) plain majority voting, (ii) a vanilla LLM‑as‑Judge that reads all full traces, and (iii) the proposed AgentAuditor with and without ACPO. Results show that AgentAuditor + ACPO consistently outperforms the baselines, achieving up to 5 percentage‑point absolute accuracy gains over majority voting and up to 3 percentage‑points over the vanilla LLM‑as‑Judge. The gains are especially pronounced in confabulation‑consensus scenarios, where the minority‑correct recovery rate jumps from below 30 % (with voting) to over 70 % with AgentAuditor.

Efficiency analysis reveals that the audit’s runtime grows linearly with the depth of the reasoning tree rather than the number of agents, keeping latency within typical real‑time service limits (≈1.2× the baseline). Ablation studies confirm that both the tree‑based structuring and the ACPO training contribute significantly: removing the tree (i.e., evaluating full traces) degrades accuracy and increases cost, while disabling ACPO re‑introduces a bias toward majority answers.

The paper also provides a theoretical treatment (Appendix C) modeling confabulation consensus as a violation of the Condorcet Jury Theorem assumptions and proving that localized auditing at CDPs reduces the probability of selecting an erroneous majority hypothesis under reasonable independence of branch evidence.

In conclusion, the work demonstrates that evidence‑driven, structure‑aware adjudication is a more reliable way to aggregate multi‑agent LLM outputs than simple frequency‑based voting. AgentAuditor offers a scalable, modular component that can be plugged into existing MAS pipelines, and ACPO equips it with a bias‑mitigation mechanism that prefers well‑supported minority answers. The authors suggest future extensions to directed acyclic graph (DAG) reasoning structures, integration with human‑in‑the‑loop workflows, and exploration of richer evidence‑scoring functions. This research paves the way for more trustworthy collaborative AI systems where the quality of reasoning, not just the quantity of agreeing agents, determines the final answer.

Comments & Academic Discussion

Loading comments...

Leave a Comment