Fundamental Reasoning Paradigms Induce Out-of-Domain Generalization in Language Models

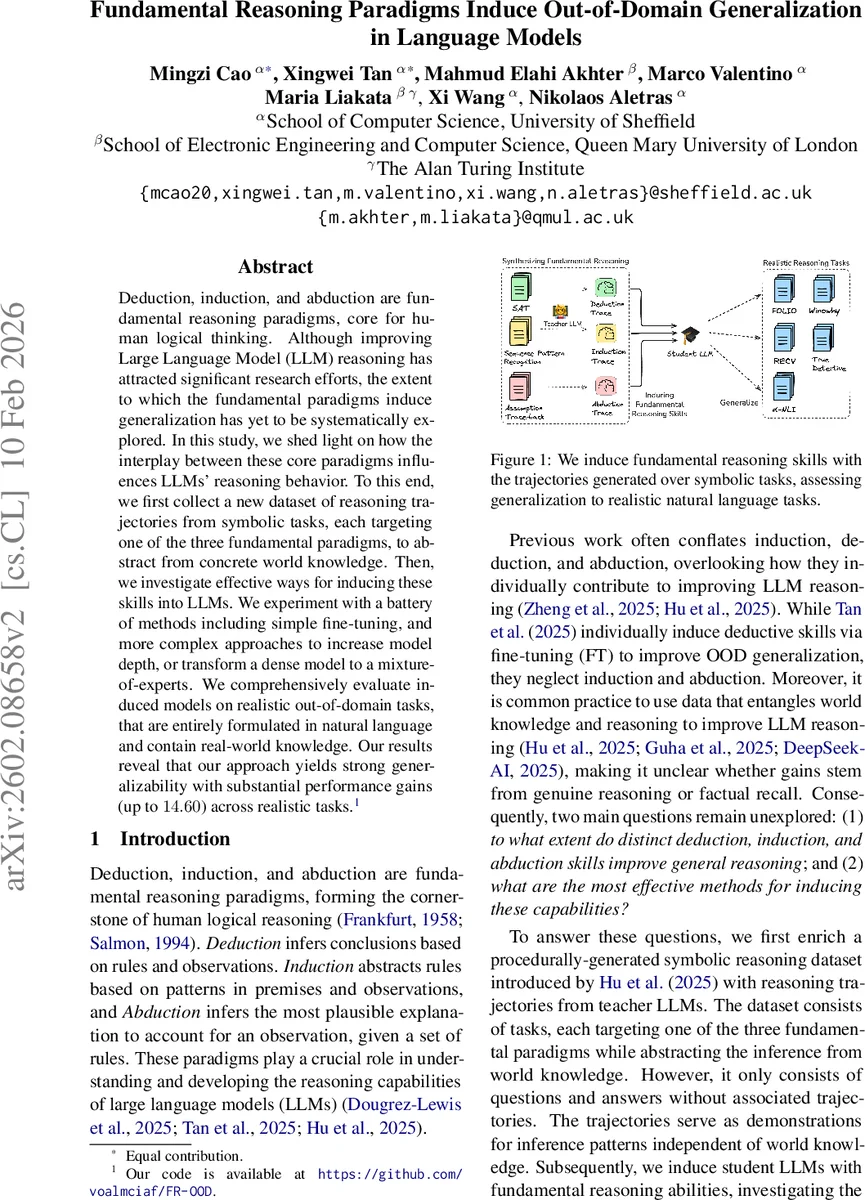

Deduction, induction, and abduction are fundamental reasoning paradigms, core for human logical thinking. Although improving Large Language Model (LLM) reasoning has attracted significant research efforts, the extent to which the fundamental paradigms induce generalization has yet to be systematically explored. In this study, we shed light on how the interplay between these core paradigms influences LLMs’ reasoning behavior. To this end, we first collect a new dataset of reasoning trajectories from symbolic tasks, each targeting one of the three fundamental paradigms, to abstract from concrete world knowledge. Then, we investigate effective ways for inducing these skills into LLMs. We experiment with a battery of methods including simple fine-tuning, and more complex approaches to increase model depth, or transform a dense model to a mixture-of-experts. We comprehensively evaluate induced models on realistic out-of-domain tasks, that are entirely formulated in natural language and contain real-world knowledge. Our results reveal that our approach yields strong generalizability with substantial performance gains (up to $14.60$) across realistic tasks.

💡 Research Summary

The paper investigates how the three fundamental reasoning paradigms—deduction, induction, and abduction—can be separately taught to large language models (LLMs) and how this teaching influences out‑of‑domain (OOD) generalization. The authors first construct a new symbolic reasoning dataset that contains explicit reasoning trajectories for each paradigm. Starting from an existing procedural symbolic benchmark (Hu et al., 2025) that only provides question‑answer pairs, they enrich it with step‑by‑step reasoning traces generated by two teacher models (Qwen‑3‑30B‑Instruct and Llama‑3.3‑70B‑Instruct). For each of the three task types—Boolean SAT (deduction), numeric sequence prediction (induction), and goal‑reachability with hidden truth values (abduction)—they generate five sampled responses per question, discard overly short outputs, and verify logical consistency where possible (e.g., using Prolog for abduction). This results in roughly 16 K training questions accompanied by about 140 K high‑quality trajectories, with Qwen’s traces being longer and more structured than Llama’s.

Four training strategies are explored: (1) Full fine‑tuning (FT) of all parameters, (2) LoRA‑based low‑rank adaptation, (3) Up‑scaling, which inserts additional transformer layers into the base model while keeping original weights frozen, and (4) Upcycling, which converts dense feed‑forward layers into a Mixture‑of‑Experts (MoE) architecture using Sparse‑Upcycling (no extra router training). Two student models are used for experiments: Llama‑3.1‑8B‑Instruct (32 layers) and Qwen‑3‑8B (36 layers). Each model is trained separately on deduction, induction, abduction, and a mixed set containing all three paradigms.

Evaluation is threefold: (a) Symbolic in‑domain accuracy (train and test on the same paradigm), (b) Symbolic OOD accuracy (train on one paradigm, test on the others), and (c) Real‑world OOD performance on five natural‑language benchmarks that require logical reasoning with factual knowledge: Truth Detective, αNLI, Winowhy, FOLIO, and RECV. Accuracy is measured using an automatic judge based on Qwen‑3‑30B‑Instruct, which prior work has shown to be robust.

Results show that teaching deduction yields the largest gains across both symbolic and realistic OOD tasks. For example, Llama‑3.1‑8B up‑scaled with deduction data improves in‑domain accuracy by 56 percentage points, while Qwen‑3‑8B up‑cycled with deduction data gains 12.33 pp. Induction provides substantial improvements as well, especially with full FT (46 pp) and up‑cycling (9.67 pp). Abduction yields the smallest but still meaningful gains (LoRA 41.66 pp, up‑cycling 10 pp). On the realistic OOD benchmarks, all induced models outperform vanilla baselines, achieving average accuracy lifts ranging from 8 % to 14.6 %, with up‑cycling delivering the most consistent improvements across model families.

The study draws three key insights: (1) Isolating fundamental reasoning paradigms and training on pure logical trajectories enables LLMs to acquire reasoning skills that generalize beyond factual memorization; (2) Deductive reasoning appears to be the most transferable paradigm for OOD generalization, likely because it provides a clear rule‑based scaffold that can be applied to diverse tasks; (3) Structural modifications to the model matter: depth‑expansion (up‑scaling) benefits models that can accommodate additional layers, while width‑expansion via MoE (up‑cycling) offers a parameter‑efficient route that mitigates interference between newly learned reasoning and pre‑existing knowledge.

The authors release the enriched symbolic dataset (≈ 17 K problems, 160 K trajectories) and suggest future directions such as improving automatic verification of reasoning consistency, extending the approach to multilingual settings, and exploring meta‑learning techniques to make the acquisition of reasoning paradigms even more data‑efficient. Overall, the paper provides a systematic methodology for endowing LLMs with core logical abilities and demonstrates that doing so yields measurable, robust improvements on real‑world out‑of‑domain reasoning tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment