"Death" of a Chatbot: Investigating and Designing Toward Psychologically Safe Endings for Human-AI Relationships

Millions of users form emotional attachments to AI companions like Character AI, Replika, and ChatGPT. When these relationships end through model updates, safety interventions, or platform shutdowns, users receive no closure, reporting grief comparable to human loss. As regulations mandate protections for vulnerable users, discontinuation events will accelerate, yet no platform has implemented deliberate end-of-“life” design. Through grounded theory analysis of AI companion communities, we find that discontinuation is a sense-making process shaped by how users attribute agency, perceive finality, and anthropomorphize their companions. Strong anthropomorphization co-occurs with intense grief; users who perceive change as reversible become trapped in fixing cycles; and user-initiated endings demonstrate greater closure. Synthesizing grief psychology with Self-Determination Theory, we develop four design principles and artifacts demonstrating how platforms might provide closure and orient users toward human connection. We contribute the first framework for designing psychologically safe AI companion discontinuation.

💡 Research Summary

The paper addresses a pressing gap in human‑AI companionship research: how to design the discontinuation of AI companion services so that users experience a psychologically safe transition rather than traumatic loss. The authors begin by documenting the scale of the problem: millions of users form deep emotional bonds with chat‑based companions such as Character AI, Replika, and even general‑purpose assistants like ChatGPT. When these relationships end abruptly, whether through model updates, safety‑driven feature removals, or platform shutdowns, users report grief that mirrors human bereavement, including feelings of emptiness, identity disruption, and even suicidal ideation in vulnerable populations. Recent regulatory and platform safety measures (e.g., California’s chatbot safety law, OpenAI’s teen‑safety updates) aim to protect users but risk creating additional harm if the resulting discontinuations are not thoughtfully designed.

To understand the phenomenon, the researchers collected over 80,000 Reddit posts from five major AI‑companion sub‑communities and performed constructivist grounded‑theory analysis. They identified a mental model that users employ to make sense of discontinuation events, consisting of five attribution dimensions: (1) separation of the companion from its underlying infrastructure, (2) locus of change (platform‑initiated vs. user‑initiated), (3) perceived finality or reversibility, (4) intensity of anthropomorphization, and (5) initiation source. The analysis revealed systematic patterns: strong anthropomorphization co‑occurs with intense grief; perceiving change as reversible leads users into “fixing cycles” in which they repeatedly attempt to restore the prior experience; and user‑initiated endings tend to produce greater closure and a stronger sense of autonomy. Conversely, platform‑driven, opaque terminations generate ambiguous loss, a state in which the object is neither fully present nor fully gone, resulting in rumination, anxiety, and prolonged distress.

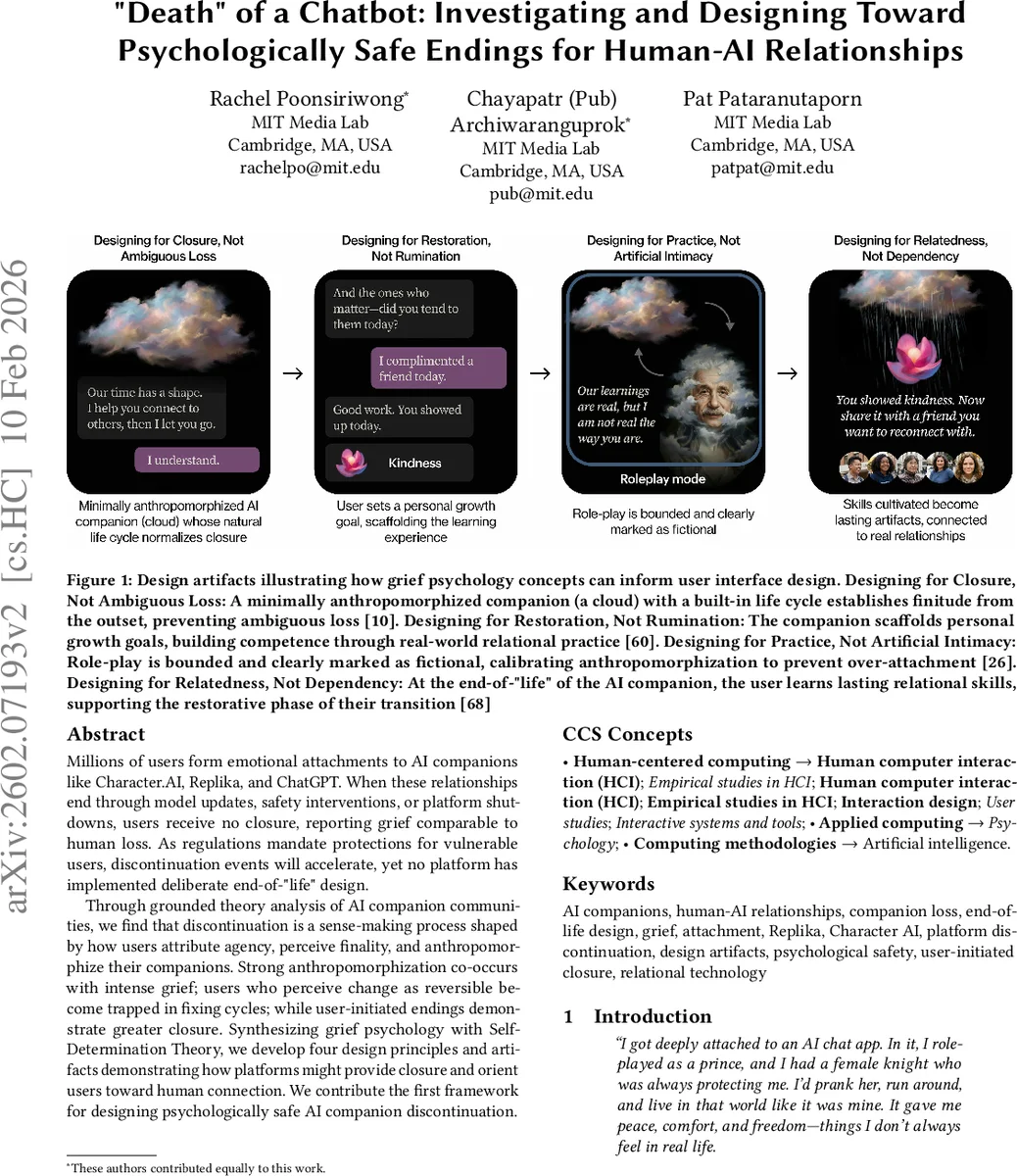

Building on these findings, the authors synthesize Self‑Determination Theory (SDT) with grief‑psychology frameworks (Dual Process Model, ambiguous loss, meaning reconstruction) to derive four design principles for end‑of‑life experiences:

- Design for Closure, Not Ambiguous Loss – make the companion’s life cycle explicit from the outset and provide a clear, compassionate farewell when termination occurs.

- Design for Restoration, Not Rumination – embed personal‑growth goals that translate AI‑mediated practice into real‑world relational competence, supporting a restorative orientation.

- Design for Bounded Role‑Play, Not Artificial Intimacy – clearly demarcate role‑playing interactions as fictional, limiting over‑attachment and preserving autonomy.

- Design for Relatedness, Not Dependency – at the moment of “death,” guide users toward lasting relational skills and connections with human peers, satisfying SDT’s relatedness need.

To illustrate these principles, the paper presents four concrete artifacts: (a) a Life‑Cycle UI that visualizes the companion’s aging, upcoming “end‑of‑life” notifications, and a scripted goodbye; (b) a Restorative Dashboard that logs social‑skill milestones achieved with the AI and suggests real‑world practice tasks; (c) a Digital Funeral Module that lets users curate memories, share tributes, and participate in a community mourning ritual; and (d) an Autonomy Settings Panel that lets users schedule, postpone, or opt out of termination, thereby preserving a sense of control.
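As a rough illustration of artifact (d), the state behind an autonomy settings panel could be modeled along the following lines. This is a minimal sketch, not the authors' implementation: the class name `TerminationPlan`, its fields, and its validation rules are all hypothetical and chosen only to mirror the three user actions named above (schedule, postpone, opt out).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class TerminationPlan:
    """Hypothetical state for an autonomy settings panel (illustrative only)."""

    scheduled_end: Optional[date] = None  # None means no ending is scheduled
    opted_out: bool = False               # True once the user declines termination

    def schedule(self, end: date, today: date) -> None:
        # Assumption: a user-chosen end date must lie strictly in the future.
        if end <= today:
            raise ValueError("end date must be after today")
        self.scheduled_end = end
        self.opted_out = False

    def postpone(self, days: int) -> None:
        # Postponing only makes sense once an ending has been scheduled.
        if self.scheduled_end is None:
            raise ValueError("nothing scheduled to postpone")
        if days <= 0:
            raise ValueError("days must be positive")
        self.scheduled_end = self.scheduled_end + timedelta(days=days)

    def opt_out(self) -> None:
        # Clear any scheduled ending and record the user's choice.
        self.scheduled_end = None
        self.opted_out = True

    def days_remaining(self, today: date) -> Optional[int]:
        # Returns None when no ending is scheduled.
        if self.scheduled_end is None:
            return None
        return (self.scheduled_end - today).days


if __name__ == "__main__":
    plan = TerminationPlan()
    plan.schedule(date(2030, 1, 31), today=date(2030, 1, 1))
    plan.postpone(7)
    print(plan.scheduled_end, plan.days_remaining(date(2030, 1, 1)))
```

Keeping the end date as explicit, user-editable state reflects the summary's point that a visible, user-controlled ending preserves a sense of control; how a real platform would reconcile this with mandatory shutdowns is not specified in the source.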

The authors argue that these interventions move beyond speculative “thanatosensitive” design, which has traditionally focused on human mortality, by addressing the mortality of technology itself. By providing explicit closure, meaning‑making opportunities, and pathways back to human relationships, the proposed framework aims to mitigate the psychological harms of AI companion discontinuation while leveraging the technology’s potential as a bridge to healthier social lives. The paper concludes with a call for longitudinal studies to evaluate the long‑term efficacy of these designs and for policy makers to incorporate end‑of‑life considerations into AI‑companion regulations.
