From Scalar Rewards to Potential Trends: Shaping Potential Landscapes for Model-Based Reinforcement Learning

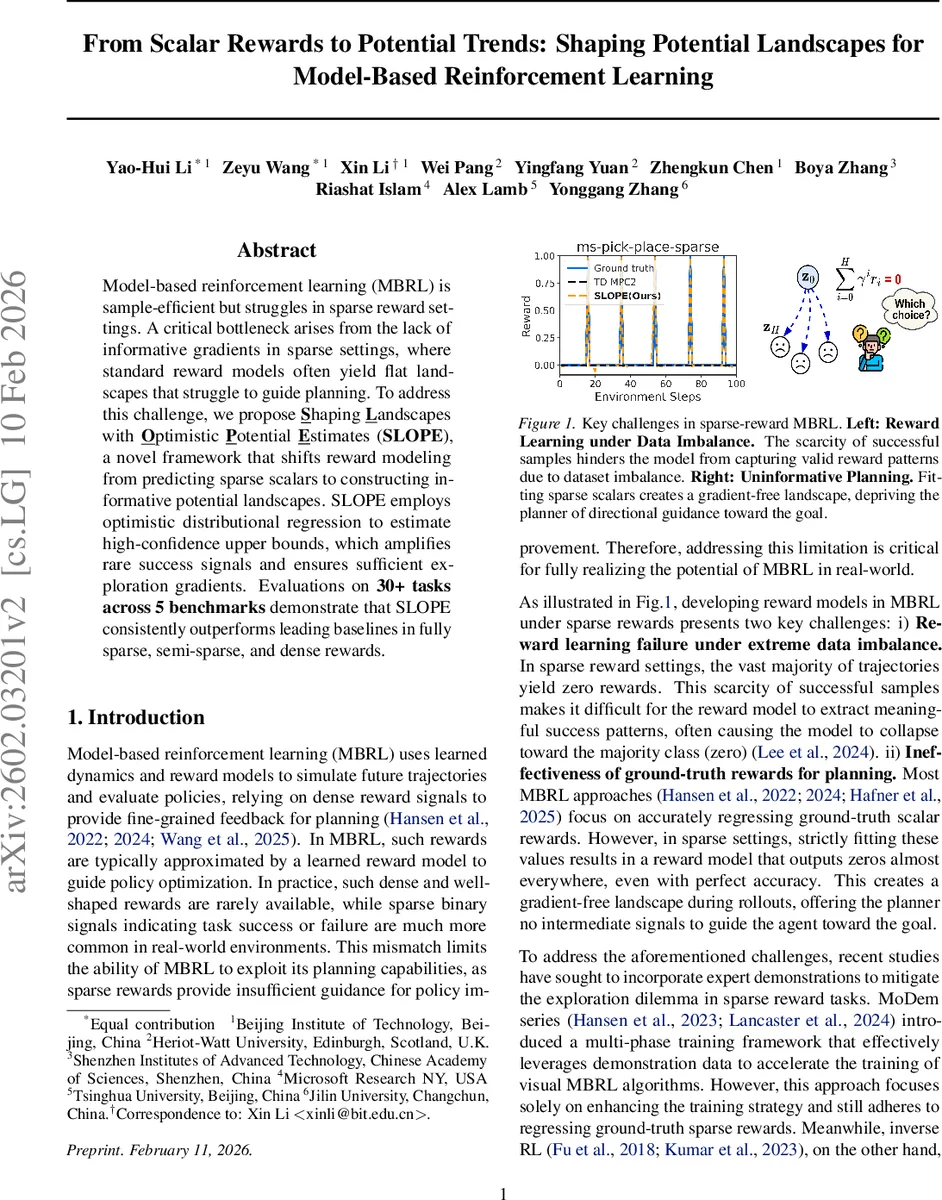

Model-based reinforcement learning (MBRL) achieves high sample efficiency by simulating future trajectories with learned dynamics and reward models. However, its effectiveness is severely compromised in sparse reward settings. The core limitation lies in the standard paradigm of regressing ground-truth scalar rewards: in sparse environments, this yields a flat, gradient-free landscape that fails to provide directional guidance for planning. To address this challenge, we propose Shaping Landscapes with Optimistic Potential Estimates (SLOPE), a novel framework that shifts reward modeling from predicting scalars to constructing informative potential landscapes. SLOPE employs optimistic distributional regression to estimate high-confidence upper bounds, which amplifies rare success signals and ensures sufficient exploration gradients. Evaluations on 30+ tasks across 5 benchmarks demonstrate that SLOPE consistently outperforms leading baselines in fully sparse, semi-sparse, and dense rewards.

💡 Research Summary

Model‑based reinforcement learning (MBRL) achieves impressive sample efficiency by learning a dynamics model and a reward model, then planning over imagined trajectories. However, when the environment provides only sparse binary rewards (e.g., a reward of 1 only upon task completion), the standard approach of regressing these scalar signals produces a reward model that outputs zero almost everywhere. Consequently, the learned value function collapses to a flat landscape, and planners such as Model‑Predictive Path Integral (MPPI) receive no directional gradients, leading to random exploration and poor performance.

The paper introduces SLOPE (Shaping Landscapes with Optimistic Potential Estimates), a framework that replaces scalar reward regression with the construction of an informative potential landscape. The key idea is to treat the learned Q‑function as a potential Φ(s) over the state space and to reshape the original reward using the classic Potential‑Based Reward Shaping (PBRS) formula:

e_r(s,a) = r(s,a) + γ E

Comments & Academic Discussion

Loading comments...

Leave a Comment