Barycentric alignment for instance-level comparison of neural representations

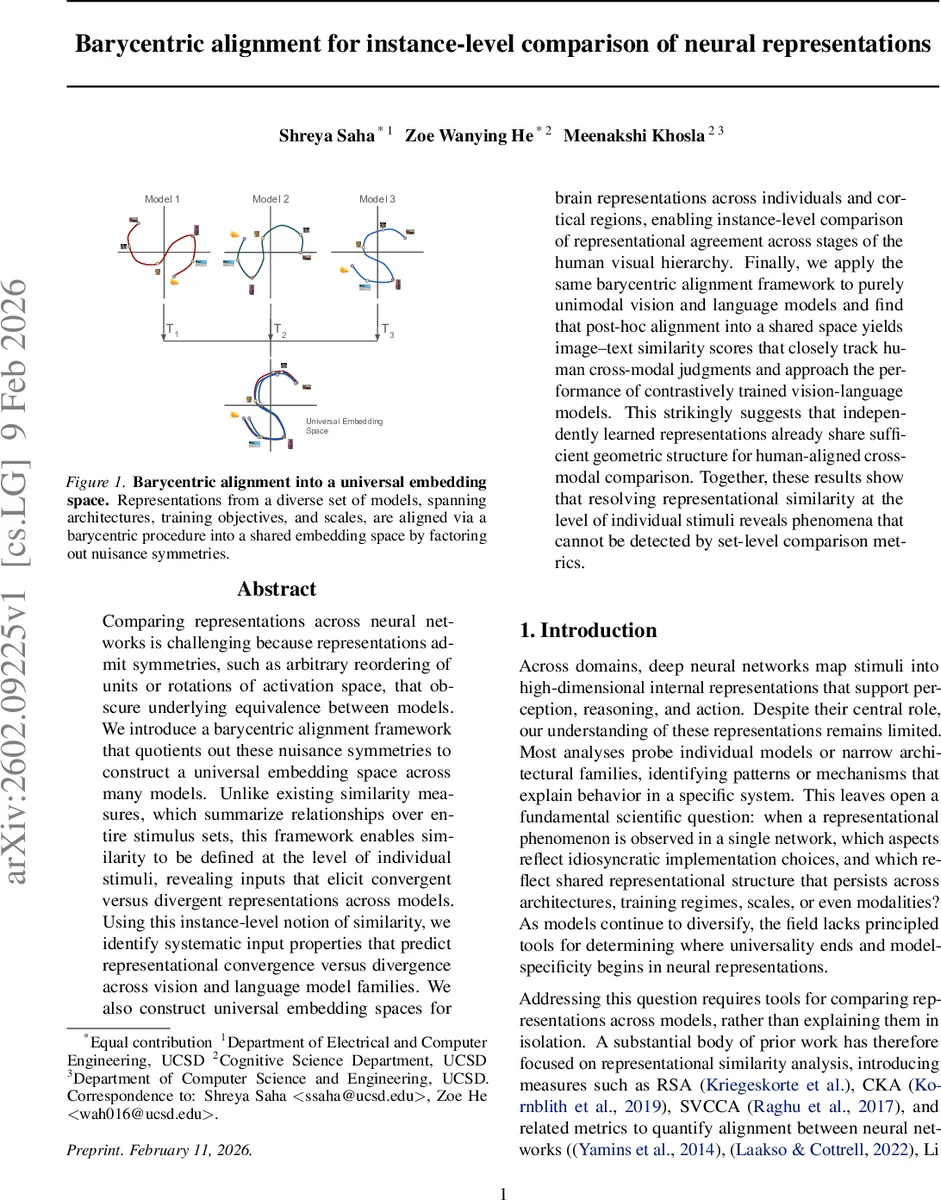

Comparing representations across neural networks is challenging because representations admit symmetries, such as arbitrary reordering of units or rotations of activation space, that obscure underlying equivalence between models. We introduce a barycentric alignment framework that quotients out these nuisance symmetries to construct a universal embedding space across many models. Unlike existing similarity measures, which summarize relationships over entire stimulus sets, this framework enables similarity to be defined at the level of individual stimuli, revealing inputs that elicit convergent versus divergent representations across models. Using this instance-level notion of similarity, we identify systematic input properties that predict representational convergence versus divergence across vision and language model families. We also construct universal embedding spaces for brain representations across individuals and cortical regions, enabling instance-level comparison of representational agreement across stages of the human visual hierarchy. Finally, we apply the same barycentric alignment framework to purely unimodal vision and language models and find that post-hoc alignment into a shared space yields image text similarity scores that closely track human cross-modal judgments and approach the performance of contrastively trained vision-language models. This strikingly suggests that independently learned representations already share sufficient geometric structure for human-aligned cross-modal comparison. Together, these results show that resolving representational similarity at the level of individual stimuli reveals phenomena that cannot be detected by set-level comparison metrics.

💡 Research Summary

The paper tackles a fundamental problem in deep learning research: how to compare internal representations of neural networks when those representations are subject to arbitrary symmetries such as neuron permutation, rotations, and reflections. Existing similarity metrics—Representational Similarity Analysis (RSA), Centered Kernel Alignment (CKA), SVCCA, and related methods—summarize the relationship between two models over an entire stimulus set, yielding a single scalar score. While useful for broad trends, these set‑level scores obscure how agreement or disagreement is distributed across individual inputs and cannot be easily extended to compare many models simultaneously.

To address these limitations, the authors introduce a “barycentric alignment” framework. The core idea is to treat each model’s representation of a shared training stimulus set as a point cloud in a high‑dimensional space, where the coordinates are defined only up to transformations from a chosen symmetry group G. The paper focuses on orthogonal invariance (G = O(d)), which includes permutations (as a special case) as well as rotations and reflections, preserving Euclidean distances while removing nuisance degrees of freedom.

Given N models, each producing an n × d_i matrix X_i (n stimuli, d_i features), the method first pads all matrices to a common dimensionality d = max_i d_i. It then iteratively computes the Procrustes barycenter: (1) for each model i, find the orthogonal matrix T_i that best aligns X_i to the current template M(t) by solving a classic orthogonal Procrustes problem via singular value decomposition; (2) update each aligned representation X_i ← X_i T_i and recompute the template as the arithmetic mean M(t+1) = (1/N) Σ_i X_i. The process repeats until the relative change in the template falls below a small threshold ε. The converged template M is the Fréchet mean of the representations under the Procrustes metric and defines a universal embedding space. The learned transformations {T_i} map any future representation from model i into this shared space.

For inference, a new set of m test stimuli yields representations Y_i for each model. After applying the learned T_i, the aligned rows Y’_i are compared across models on a per‑stimulus basis. The authors define an instance‑level consistency score S_j for stimulus j as the average pairwise similarity (cosine by default) among the N aligned vectors for that stimulus. This score is independent of the surrounding stimulus pool and can be computed for arbitrarily large model ensembles.

The framework is evaluated on three fronts:

-

Quantitative alignment quality – Using a diverse pool of supervised vision models (ResNet, ViT, ConvNeXt, Swin, etc.) and a pool of language models (Llama, Qwen, OpenLLaMA, etc.), the authors report cross‑model Pearson correlation, root‑mean‑square (RMS) discrepancy per dimension, and stimulus‑retrieval accuracy (whether the nearest neighbor of a stimulus in one model’s space is the same stimulus in another model’s space). Correlations often exceed 0.4, RMS errors are low, and Top‑10 retrieval accuracies approach 0.9, far above chance. This demonstrates that, after factoring out orthogonal symmetries, representations from heterogeneous architectures align closely at the level of individual stimuli.

-

Instance‑level consistency across model pools – Consistency scores are highly correlated across pools that differ in architecture (CNN vs. transformer), training objective (supervised vs. self‑supervised), and scale (small vs. large). Stimuli that cause divergence among CNNs also cause divergence among transformers, suggesting a shared set of “axes of variation” that cut across model families. By contrast, randomly initialized networks produce consistency scores that are essentially uncorrelated with those from trained models, confirming that the observed structure is learned rather than an artifact of the architecture alone.

-

Applications to neuroscience and multimodal alignment – The authors embed fMRI/ECoG recordings from multiple human participants and cortical regions into a common barycentric space, enabling stimulus‑wise comparison of representational agreement across the visual hierarchy. This provides a finer‑grained view than traditional RSA, revealing which images elicit consistent neural patterns across subjects and areas. In a separate experiment, they align purely visual models (e.g., ViT) and purely linguistic models (e.g., Llama) into a shared space and compute image‑text cosine similarity. Remarkably, this post‑hoc alignment yields image‑text similarity scores that closely track human cross‑modal judgments and approach the performance of contrastively trained vision‑language models such as CLIP, indicating that independently learned unimodal embeddings already share sufficient geometric structure for human‑aligned multimodal comparison.

Overall, the paper makes several key contributions:

- A principled symmetry‑quotienting method that treats the choice of invariance group as a first‑class design decision, with orthogonal invariance providing a mathematically clean solution.

- A scalable algorithm for aligning many models simultaneously, avoiding pairwise computations and enabling the construction of a universal embedding space.

- An instance‑level similarity metric that is stimulus‑specific, model‑pool‑independent, and interpretable as the tightness of clustering in the shared space.

- Empirical evidence that a large fraction of inter‑model variability is reducible to nuisance symmetries, and that the remaining variability follows common axes across diverse model families.

- Demonstrations of practical utility in neuroscience (cross‑subject, cross‑region analysis) and multimodal AI (zero‑shot image‑text similarity), opening avenues for future work on model interpretability, model‑agnostic probing, and cross‑modal alignment without contrastive training.

In sum, barycentric alignment provides a powerful new lens for dissecting representational similarity at the granularity of individual inputs, revealing phenomena invisible to traditional set‑level metrics and offering a unifying framework for comparing heterogeneous neural networks and even biological neural data.

Comments & Academic Discussion

Loading comments...

Leave a Comment