CoRefine: Confidence-Guided Self-Refinement for Adaptive Test-Time Compute

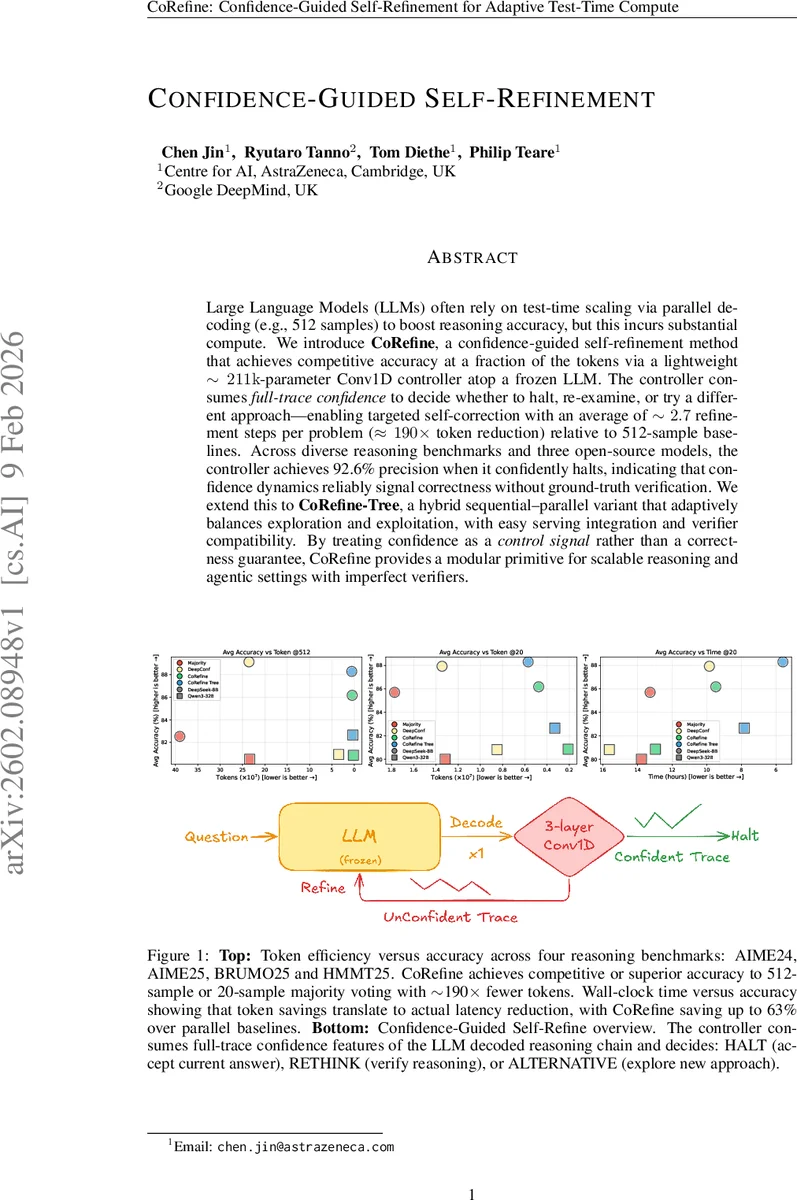

Large Language Models (LLMs) often rely on test-time scaling via parallel decoding (for example, 512 samples) to boost reasoning accuracy, but this incurs substantial compute. We introduce CoRefine, a confidence-guided self-refinement method that achieves competitive accuracy using a fraction of the tokens via a lightweight 211k-parameter Conv1D controller atop a frozen LLM. The controller consumes full-trace confidence to decide whether to halt, re-examine, or try a different approach, enabling targeted self-correction with an average of 2.7 refinement steps per problem and roughly 190-fold token reduction relative to 512-sample baselines. Across diverse reasoning benchmarks and three open-source models, the controller achieves 92.6 percent precision when it confidently halts, indicating that confidence dynamics reliably signal correctness without ground-truth verification. We extend this to CoRefine-Tree, a hybrid sequential-parallel variant that adaptively balances exploration and exploitation, with easy serving integration and verifier compatibility. By treating confidence as a control signal rather than a correctness guarantee, CoRefine provides a modular primitive for scalable reasoning and agentic settings with imperfect verifiers.

💡 Research Summary

CoRefine is a framework for reducing test‑time compute in large language models (LLMs) that uses token‑level confidence as a control signal rather than as a direct correctness estimator. The method attaches a lightweight 211k‑parameter Conv1D controller on top of a frozen LLM. For each generated reasoning trace, the model’s log‑probabilities are converted into a confidence trace by taking, at every position, the negative average log‑probability of the top‑k (k = 20) tokens. This trace is then down‑sampled to 16 bins via average pooling, which filters out high‑frequency noise while preserving macro‑level dynamics, such as early over‑confidence and a late‑phase rise in confidence, that differentiate correct from incorrect reasoning.
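The confidence-trace construction described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names `confidence_trace` and `downsample_trace` are hypothetical, the input is assumed to be an array of top-k log-probabilities with shape `(T, k)`, and the handling of trace lengths that are not a multiple of 16 (near-equal bins via `np.array_split`) is an assumption the summary does not specify.

```python
import numpy as np


def confidence_trace(topk_logprobs):
    """Per-position confidence: negative mean of the top-k log-probabilities.

    topk_logprobs: array-like of shape (T, k), one row per generated token
    (k = 20 in the summary). Returns an array of shape (T,).
    """
    arr = np.asarray(topk_logprobs, dtype=float)
    if arr.ndim != 2:
        raise ValueError("expected shape (T, k)")
    return -arr.mean(axis=1)


def downsample_trace(trace, n_bins=16):
    """Average-pool a 1-D trace into n_bins contiguous bins.

    Assumption: bins are near-equal in width (np.array_split), and traces
    shorter than n_bins are rejected rather than padded.
    """
    trace = np.asarray(trace, dtype=float)
    if trace.ndim != 1 or trace.size < n_bins:
        raise ValueError("expected a 1-D trace with at least n_bins entries")
    chunks = np.array_split(trace, n_bins)
    return np.array([chunk.mean() for chunk in chunks])


if __name__ == "__main__":
    # Synthetic stand-in for model output: 200 positions, top-20 log-probs each.
    rng = np.random.default_rng(0)
    fake_logprobs = np.log(rng.uniform(1e-4, 1.0, size=(200, 20)))
    features = downsample_trace(confidence_trace(fake_logprobs))
    print(features.shape)  # 16-dimensional controller input
```

Average pooling is a natural fit here because it gives every trace the same fixed-length representation regardless of how many tokens were generated, which is what lets a small fixed-size controller consume traces of any length.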

The controller receives the 16‑dimensional down‑sampled vector and processes it through three 1‑D convolutional layers (64, 128, 256 filters; kernel sizes
