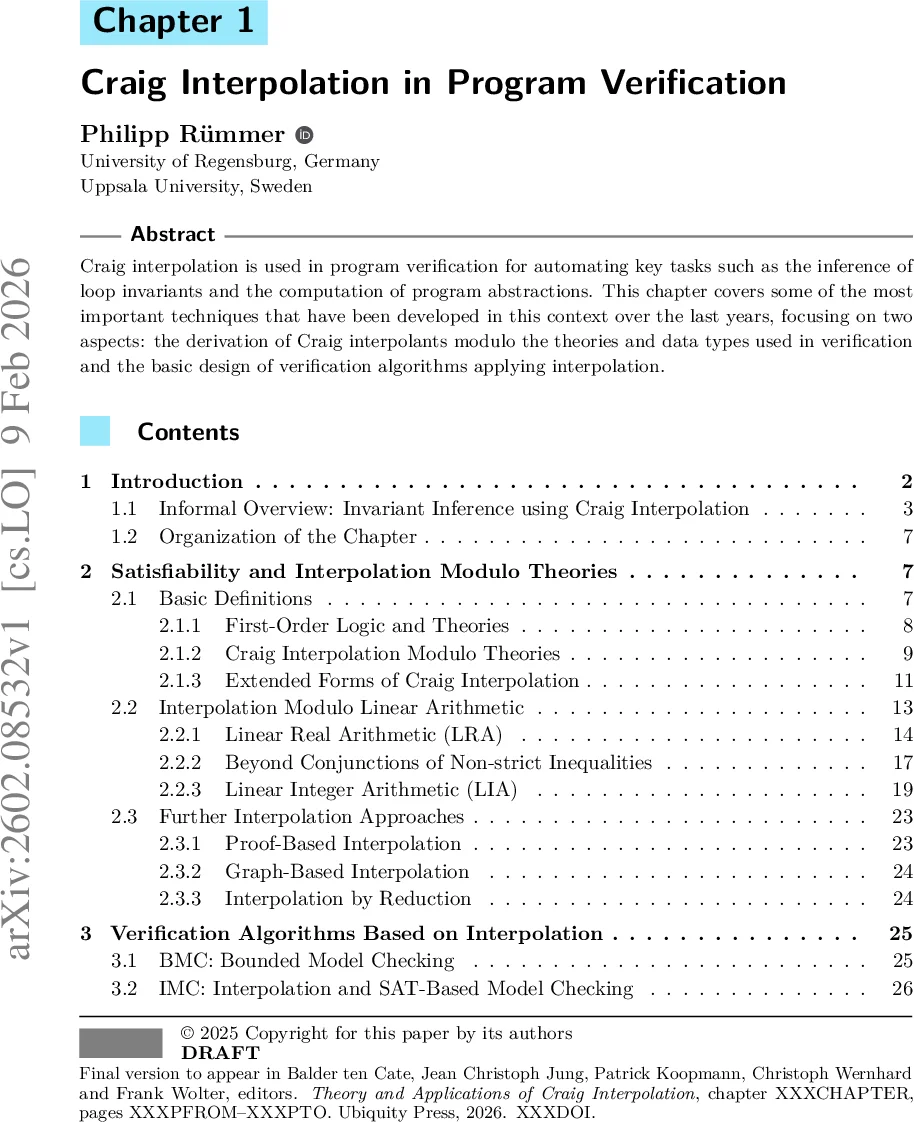

Craig Interpolation in Program Verification

Craig interpolation is used in program verification for automating key tasks such as the inference of loop invariants and the computation of program abstractions. This chapter covers some of the most important techniques that have been developed in this context in recent years, focusing on two aspects: the derivation of Craig interpolants modulo the theories and data types used in verification, and the basic design of verification algorithms that apply interpolation.

💡 Research Summary

The chapter provides a comprehensive survey of Craig interpolation and its pivotal role in modern program verification. It begins by motivating the need for automated inference of loop invariants and program abstractions, highlighting that traditional verification techniques often struggle to discover suitable inductive properties. The authors trace the emergence of Craig interpolation in verification to the discovery that interpolants can serve as candidate invariants, a breakthrough that spurred a wave of research into interpolation algorithms for a variety of logical theories.

The first technical section illustrates the connection between interpolation and invariant generation using a classic Fibonacci program. By unwinding the loop twice, the authors introduce intermediate assertions K₀, K₁, K₂ that split the execution into four sub‑programs. Each intermediate assertion K must satisfy two entailments: the formula A describing the program up to the assertion must imply K, and K must imply the formula C stating that the remainder of the program establishes the post‑condition. This exactly matches the definition of a Craig interpolant for the valid implication A → C, where the interpolant may only use the vocabulary shared by A and C (typically the copies of the program variables at the split point). Consequently, every interpolant is a valid intermediate assertion, and renaming its variables back to the original program variables yields a candidate loop invariant.
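To make the two entailments concrete, here is a minimal Python sketch that checks the interpolant conditions for a hypothetical Fibonacci-style loop (`a, b = b, a + b`, started from `a = 0, b = 1`) unwound into SSA copies `a0, b0, a1, b1, a2, b2`. The program, the post-condition `0 <= a <= b`, and the helper names `valid` and `is_interpolant` are assumptions for illustration, not the chapter's example. Validity is only checked by enumerating a small integer range, so this is a sanity test rather than a proof.

```python
from itertools import product
from typing import Callable, Dict, Sequence

# A formula is modelled as a Python predicate over a variable assignment.
Formula = Callable[[Dict[str, int]], bool]


def valid(f: Formula, variables: Sequence[str], domain: range) -> bool:
    """Bounded validity: f holds for every assignment of `variables` over `domain`."""
    return all(f(dict(zip(variables, values)))
               for values in product(domain, repeat=len(variables)))


def is_interpolant(a: Formula, a_vars: Sequence[str], i: Formula, i_vars: Sequence[str],
                   c: Formula, c_vars: Sequence[str], domain: range) -> bool:
    """Bounded check of the Craig conditions for I with respect to A -> C."""
    if not set(i_vars) <= set(a_vars) & set(c_vars):   # shared vocabulary only
        return False
    if not valid(lambda m: not a(m) or i(m), a_vars, domain):   # A -> I
        return False
    return valid(lambda m: not i(m) or c(m), c_vars, domain)    # I -> C


# Hypothetical unwinding of: a, b = 0, 1; then twice a, b = b, a + b (SSA copies).
def prefix(m: Dict[str, int]) -> bool:        # A: start plus first iteration
    return (m["a0"] == 0 and m["b0"] == 1
            and m["a1"] == m["b0"] and m["b1"] == m["a0"] + m["b0"])


def suffix_ok(m: Dict[str, int]) -> bool:     # C: second iteration ensures 0 <= a2 <= b2
    ran = m["a2"] == m["b1"] and m["b2"] == m["a1"] + m["b1"]
    return not ran or 0 <= m["a2"] <= m["b2"]


def k_good(m: Dict[str, int]) -> bool:        # candidate K over the shared a1, b1
    return 0 <= m["a1"] <= m["b1"]


def k_weak(m: Dict[str, int]) -> bool:        # too weak: does not imply C
    return m["a1"] >= 0


A_VARS, C_VARS, DOMAIN = ["a0", "b0", "a1", "b1"], ["a1", "b1", "a2", "b2"], range(-3, 6)
print(is_interpolant(prefix, A_VARS, k_good, ["a1", "b1"], suffix_ok, C_VARS, DOMAIN))  # True
print(is_interpolant(prefix, A_VARS, k_weak, ["a1"], suffix_ok, C_VARS, DOMAIN))        # False
```

Renaming `a1, b1` back to `a, b` turns `k_good` into the candidate invariant `0 <= a <= b`, which is in fact inductive for this loop.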

The second major part turns to the theory‑specific computation of interpolants. The authors discuss interpolation modulo theories within the SMT (Satisfiability Modulo Theories) framework, focusing on quantifier‑free fragments of first‑order logic that are decidable and amenable to efficient solving. For linear real arithmetic (LRA), they describe proof‑based interpolation, which extracts interpolants from resolution proofs or from the conflict clauses generated by an LRA solver, handling both strict and non‑strict inequalities via delta‑adjustments. For linear integer arithmetic (LIA), the chapter presents techniques that combine modular reasoning with bound propagation to respect integrality constraints, and it explains how LRA interpolants can be lifted to LIA using rounding and case‑splitting.
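The proof-based idea for LRA can be illustrated with one standard construction, assumed here rather than taken from the chapter: if nonnegative Farkas multipliers combine the inequalities of A and B into a contradiction `0 <= c` with `c < 0`, then the same weighted sum of A's inequalities alone is an interpolant, since it is implied by A, contradicts B, and the A-local variables cancel. This sketch handles only non-strict inequalities of the form `sum(coeff * var) <= const`, the multipliers are supplied by hand instead of by a solver, and the hypothetical `tighten_for_integers` shows one simple rounding step (divide by the gcd of the integer coefficients, round the bound down) that is sound over the integers.

```python
import math
from fractions import Fraction
from functools import reduce
from typing import Dict, List, Sequence, Tuple

# Inequality sum(coeffs[v] * v) <= const, with exact rational arithmetic.
Ineq = Tuple[Dict[str, Fraction], Fraction]


def make_ineq(const: int, **coeffs: int) -> Ineq:
    return {v: Fraction(a) for v, a in coeffs.items()}, Fraction(const)


def combine(ineqs: Sequence[Ineq], multipliers: Sequence[Fraction]) -> Ineq:
    """Nonnegative linear combination of inequalities; zero coefficients are dropped."""
    coeffs: Dict[str, Fraction] = {}
    const = Fraction(0)
    for (c, k), lam in zip(ineqs, multipliers):
        if lam < 0:
            raise ValueError("Farkas multipliers must be nonnegative")
        for var, a in c.items():
            coeffs[var] = coeffs.get(var, Fraction(0)) + lam * a
        const += lam * k
    return {v: a for v, a in coeffs.items() if a != 0}, const


def farkas_interpolant(a_part: List[Ineq], lam_a: List[Fraction],
                       b_part: List[Ineq], lam_b: List[Fraction]) -> Ineq:
    """Weighted sum of A's inequalities, given multipliers refuting A and B together."""
    total_coeffs, total_const = combine(a_part + b_part, lam_a + lam_b)
    if total_coeffs or total_const >= 0:
        raise ValueError("multipliers do not derive 0 <= c with c < 0")
    return combine(a_part, lam_a)


def tighten_for_integers(ineq: Ineq) -> Ineq:
    """Divide by the gcd of the integer coefficients and round the bound down (sound over Z)."""
    coeffs, const = ineq
    if any(a.denominator != 1 for a in coeffs.values()):
        raise ValueError("expected integer coefficients")
    g = reduce(math.gcd, (int(a) for a in coeffs.values()), 0)
    if g == 0:
        return ineq
    return {v: a / g for v, a in coeffs.items()}, Fraction(math.floor(const / g))


def fmt(ineq: Ineq) -> str:
    coeffs, const = ineq
    lhs = " + ".join(f"{a}*{v}" for v, a in sorted(coeffs.items())) or "0"
    return f"{lhs} <= {const}"


# A: x - y <= 0, y - z <= 0     B: z - w <= 0, w - x <= -1     (shared: x, z)
A = [make_ineq(0, x=1, y=-1), make_ineq(0, y=1, z=-1)]
B = [make_ineq(0, z=1, w=-1), make_ineq(-1, w=1, x=-1)]
ones = [Fraction(1), Fraction(1)]
print(fmt(farkas_interpolant(A, ones, B, ones)))            # 1*x + -1*z <= 0
print(fmt(tighten_for_integers(make_ineq(1, x=2, z=-2))))  # 1*x + -1*z <= 0
```

The A-local variable `y` cancels in the weighted sum, which is why the result mentions only the shared variables `x` and `z`.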

Beyond arithmetic, the chapter surveys three families of interpolation methods: (1) proof‑based interpolation, which directly reuses the proof objects produced by SAT/SMT solvers; (2) graph‑based interpolation, which models variable dependencies as a graph and computes minimal separating cuts to obtain concise interpolants; and (3) reduction‑based interpolation, which translates a target theory into a simpler one (e.g., encoding arrays as index‑value pairs) so that existing interpolation procedures can be reused. The authors emphasize that each method trades off generality, computational overhead, and the size of the resulting interpolants.
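As a minimal illustration of proof-based interpolation, the following sketch applies McMillan's labelling rules for propositional resolution proofs, a well-known system used here as an assumption rather than as the chapter's exact procedure. A clause from A contributes the disjunction of its literals over variables that also occur in B, a clause from B contributes `True`, and a resolution step combines the children's partial interpolants with "or" when the pivot occurs only in A and with "and" otherwise. The resolution proof and the helper names are hypothetical and written by hand; in practice the proof would come from a proof-producing SAT solver.

```python
from itertools import product
from typing import Dict, List, Set, Tuple

Formula = Tuple   # ("lit", l) | ("or", [..]) | ("and", [..]); empty "or" = False, empty "and" = True
Node = Tuple      # ("leaf", clause, "A" or "B") | ("res", pivot_var, left, right)


def interpolant(node: Node, a_only: Set[int], b_vars: Set[int]) -> Formula:
    """McMillan-style partial interpolant for a node of a resolution refutation."""
    if node[0] == "leaf":
        _, clause, part = node
        if part == "A":   # keep the literals over variables that also occur in B
            return ("or", [("lit", lit) for lit in clause if abs(lit) in b_vars])
        return ("and", [])  # B clauses contribute True
    _, pivot, left, right = node
    children = [interpolant(left, a_only, b_vars), interpolant(right, a_only, b_vars)]
    return ("or" if pivot in a_only else "and", children)


def evaluate(f: Formula, m: Dict[int, bool]) -> bool:
    tag, arg = f
    if tag == "lit":
        return m[abs(arg)] == (arg > 0)
    values = [evaluate(g, m) for g in arg]
    return any(values) if tag == "or" else all(values)


def holds(clauses: List[List[int]], m: Dict[int, bool]) -> bool:
    return all(any(m[abs(lit)] == (lit > 0) for lit in cl) for cl in clauses)


# Variables 1 = a, 2 = b, 3 = c.  A = {a, ~a | b},  B = {~b | c, ~c};  only b is shared.
A_CLAUSES, B_CLAUSES = [[1], [-1, 2]], [[-2, 3], [-3]]
proof = ("res", 2,
         ("res", 1, ("leaf", [1], "A"), ("leaf", [-1, 2], "A")),   # derives b
         ("res", 3, ("leaf", [-2, 3], "B"), ("leaf", [-3], "B")))  # derives ~b
itp = interpolant(proof, a_only={1}, b_vars={2, 3})

models = [dict(zip((1, 2, 3), bits)) for bits in product((False, True), repeat=3)]
print(all(evaluate(itp, m) for m in models if holds(A_CLAUSES, m)))      # True: A implies itp
print(not any(evaluate(itp, m) for m in models if holds(B_CLAUSES, m)))  # True: itp and B unsat
```

The resulting formula is equivalent to `b`, the only shared variable, which is exactly the interpolant one would expect for this A and B.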

The third section demonstrates how these interpolation procedures are embedded into concrete verification algorithms. Bounded Model Checking (BMC) encodes all executions up to a bound k as a single formula: a satisfying assignment is a genuine counterexample, whereas an unsatisfiability proof shows that no error is reachable within k steps and can be used to compute interpolants that generalize this bounded argument. Interpolation‑Based Model Checking (IMC) builds on this and avoids explicit state enumeration: it iteratively constructs an over‑approximation of the reachable states from interpolants of unsatisfiable BMC queries, enlarging the approximation until it reaches a fixed point (proving safety) or a genuine counterexample is found; if the over‑approximation runs into a possibly spurious counterexample, the bound is increased.
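The IMC loop can be sketched on an explicit finite-state system, which keeps the example self-contained. This is a toy model under stated assumptions: states are plain Python values, the hypothetical `imc` function replaces the SAT query with set operations, and instead of extracting an interpolant from a refutation proof it uses the weakest interpolant available in this setting, namely all states that cannot reach an error state within the remaining k - 1 steps.

```python
from typing import Callable, Hashable, Iterable, Set

State = Hashable


def imc(states: Iterable[State], init: Set[State], succ: Callable[[State], Set[State]],
        bad: Set[State], max_k: int = 50) -> str:
    """Toy interpolation-based model checking over explicit state sets."""
    universe = set(states)
    if init & bad:
        return "unsafe"                          # error reachable in zero steps

    def image(s: Set[State]) -> Set[State]:
        return {t for x in s for t in succ(x)}

    for k in range(1, max_k + 1):
        # Suffix B of the BMC query: states that reach `bad` within 0..k-1 steps.
        back = set(bad)
        for _ in range(k - 1):
            back |= {x for x in universe if succ(x) & back}
        reach = set(init)                        # over-approximation R of the reachable states
        while True:
            if image(reach) & back:              # R(s0) & T(s0, s1) & B is satisfiable
                if reach == init:
                    return "unsafe"              # genuine counterexample from the initial states
                break                            # possibly spurious: increase the bound k
            itp = universe - back                # weakest interpolant J(s1) of the unsat query
            if itp <= reach:
                return "safe"                    # fixed point: R is inductive and avoids `bad`
            reach |= itp
    return "unknown"                             # bound exhausted


def step(s: int) -> Set[int]:                    # hypothetical system: +2 modulo 8
    return {(s + 2) % 8}


print(imc(range(8), {0}, step, {3}))   # safe: only even values are reachable
print(imc(range(8), {0}, step, {4}))   # unsafe: 0 -> 2 -> 4
```

For the safe run the loop has to deepen the bound to k = 4 before the weakest interpolant, the set of even states, is closed under the transition function.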

The chapter then covers Counterexample‑Guided Abstraction Refinement (CEGAR). Here, model checking an abstract model (often a predicate abstraction) may produce an abstract counterexample. The verification engine checks the feasibility of this path using an SMT solver; if the path is infeasible, i.e., the counterexample is spurious, interpolants are extracted from the infeasibility proof and added as new predicates that rule out the spurious path. This loop continues until the abstract model is precise enough to prove safety or a genuine counterexample is discovered.
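The refinement loop can be sketched on an explicit finite-state system. In this toy version, which is an assumption and not the chapter's implementation, predicates are plain sets of states, the hypothetical `cegar` function abstracts each state by the predicates it satisfies, and feasibility is checked by computing the strongest postconditions along the abstract path. For such a path those sets form an interpolant sequence, so they are added as new predicates where a real tool would extract interpolants from an SMT infeasibility proof.

```python
from collections import deque
from typing import Callable, Dict, FrozenSet, Hashable, Iterable, List, Optional, Set, Tuple

State = Hashable
Abs = Tuple[bool, ...]          # abstract state: truth values of the predicates


def shortest_path(starts: Iterable[Abs], edges: Dict[Abs, Set[Abs]],
                  targets: Set[Abs]) -> Optional[List[Abs]]:
    """Breadth-first search for a shortest abstract path from `starts` to `targets`."""
    parent: Dict[Abs, Optional[Abs]] = {a: None for a in starts}
    queue = deque(parent)
    while queue:
        a = queue.popleft()
        if a in targets:
            path = [a]
            while parent[path[-1]] is not None:
                path.append(parent[path[-1]])
            return path[::-1]
        for b in edges.get(a, ()):
            if b not in parent:
                parent[b] = a
                queue.append(b)
    return None


def cegar(states: Set[State], init: Set[State], succ: Callable[[State], Set[State]],
          bad: Set[State], max_iters: int = 20) -> str:
    """Toy CEGAR loop with predicate abstraction; predicates are explicit state sets."""
    preds: List[FrozenSet[State]] = [frozenset(bad)]

    def alpha(s: State) -> Abs:
        return tuple(s in p for p in preds)

    for _ in range(max_iters):
        edges: Dict[Abs, Set[Abs]] = {}
        for s in states:                             # existential abstraction of succ
            for t in succ(s):
                edges.setdefault(alpha(s), set()).add(alpha(t))
        path = shortest_path({alpha(s) for s in init}, edges, {alpha(s) for s in bad})
        if path is None:
            return "safe"                            # no abstract error path
        # Feasibility: strongest postconditions along the abstract path.
        posts = [{s for s in init if alpha(s) == path[0]}]
        for a in path[1:]:
            posts.append({t for s in posts[-1] for t in succ(s) if alpha(t) == a})
        if posts[-1] & bad:
            return "unsafe"                          # genuine counterexample
        # Spurious: the post sets form an interpolant sequence; add them as predicates.
        new = [frozenset(p) for p in posts if p and frozenset(p) not in preds]
        if not new:
            return "unknown"                         # refinement made no progress
        preds.extend(dict.fromkeys(new))
    return "unknown"


def step(s: int) -> Set[int]:                        # hypothetical system: +2 modulo 8
    return {(s + 2) % 8}


print(cegar(set(range(8)), {0}, step, {3}))   # safe
print(cegar(set(range(8)), {0}, step, {4}))   # unsafe
```

Starting from the single predicate "is bad", the safe run adds the singleton sets {0}, {2}, {4}, {6} one refinement at a time, after which no abstract error path remains; the `max_iters` guard and the no-progress check keep the toy loop from running forever on harder inputs.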

Finally, the authors discuss verification via Constrained Horn Clauses (CHC). Programs are encoded as a set of Horn clauses with constraints; solving the CHC system amounts to finding an interpretation of the unknown predicates that satisfies all clauses. Craig interpolation is employed to compute inductive solutions for recursive predicates: interpolants serve as candidate interpretations for the predicates, and a fixed‑point iteration refines them until a solution is found or unsatisfiability is proved. This approach unifies verification of recursive programs, safety properties, and even certain liveness properties under a single logical framework.
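To show what a CHC encoding and a candidate solution look like, here is a minimal sketch for a hypothetical loop `x = 0; while (x < 10) x = x + 1; assert x <= 10;` with one unknown predicate `Inv`. The clause list and the `violated_clauses` helper are assumptions for illustration; the candidate interpretations are written by hand (where an interpolation-based solver would propose them), and the clauses are only checked over a bounded integer range, not proved.

```python
from itertools import product
from typing import Callable, List, Tuple

Interp = Callable[[int], bool]                 # interpretation of Inv(x)
Clause = Callable[[Interp, int, int], bool]    # True iff the clause holds for (x, x1)

# Hypothetical CHC encoding of: x = 0; while (x < 10) x = x + 1; assert x <= 10;
CLAUSES: List[Tuple[str, Clause]] = [
    # x = 0 -> Inv(x)
    ("init", lambda inv, x, x1: x != 0 or inv(x)),
    # Inv(x) and x < 10 and x1 = x + 1 -> Inv(x1)
    ("step", lambda inv, x, x1: not (inv(x) and x < 10 and x1 == x + 1) or inv(x1)),
    # Inv(x) and x > 10 -> false
    ("query", lambda inv, x, x1: not (inv(x) and x > 10)),
]


def violated_clauses(inv: Interp, domain: range) -> List[str]:
    """Names of clauses that fail for some (x, x1) in the bounded domain."""
    return [name for name, clause in CLAUSES
            if not all(clause(inv, x, x1) for x, x1 in product(domain, repeat=2))]


DOMAIN = range(-5, 20)
print(violated_clauses(lambda x: 0 <= x <= 10, DOMAIN))  # []: a solution (inductive invariant)
print(violated_clauses(lambda x: 0 <= x <= 5, DOMAIN))   # ['step']: not inductive
print(violated_clauses(lambda x: x >= 0, DOMAIN))        # ['query']: too weak to exclude x > 10
```

The three candidates show the two ways a guess can fail: it may not be closed under the loop body, or it may be too weak to rule out the error condition.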

Throughout the chapter, practical considerations such as the cost of interpolant generation, caching strategies, proof compression, and the impact of theory combination on performance are discussed. The authors also highlight open research directions: dynamic selection of interpolation strategies based on the current proof context, distributed interpolation for large‑scale systems, and the integration of machine‑learning techniques to predict useful intermediate assertions. In conclusion, the chapter asserts that Craig interpolation has become an indispensable tool for scaling automated verification, bridging the gap between logical reasoning and concrete program semantics across a wide spectrum of theories and algorithmic paradigms.
