Language-Guided Transformer Tokenizer for Human Motion Generation

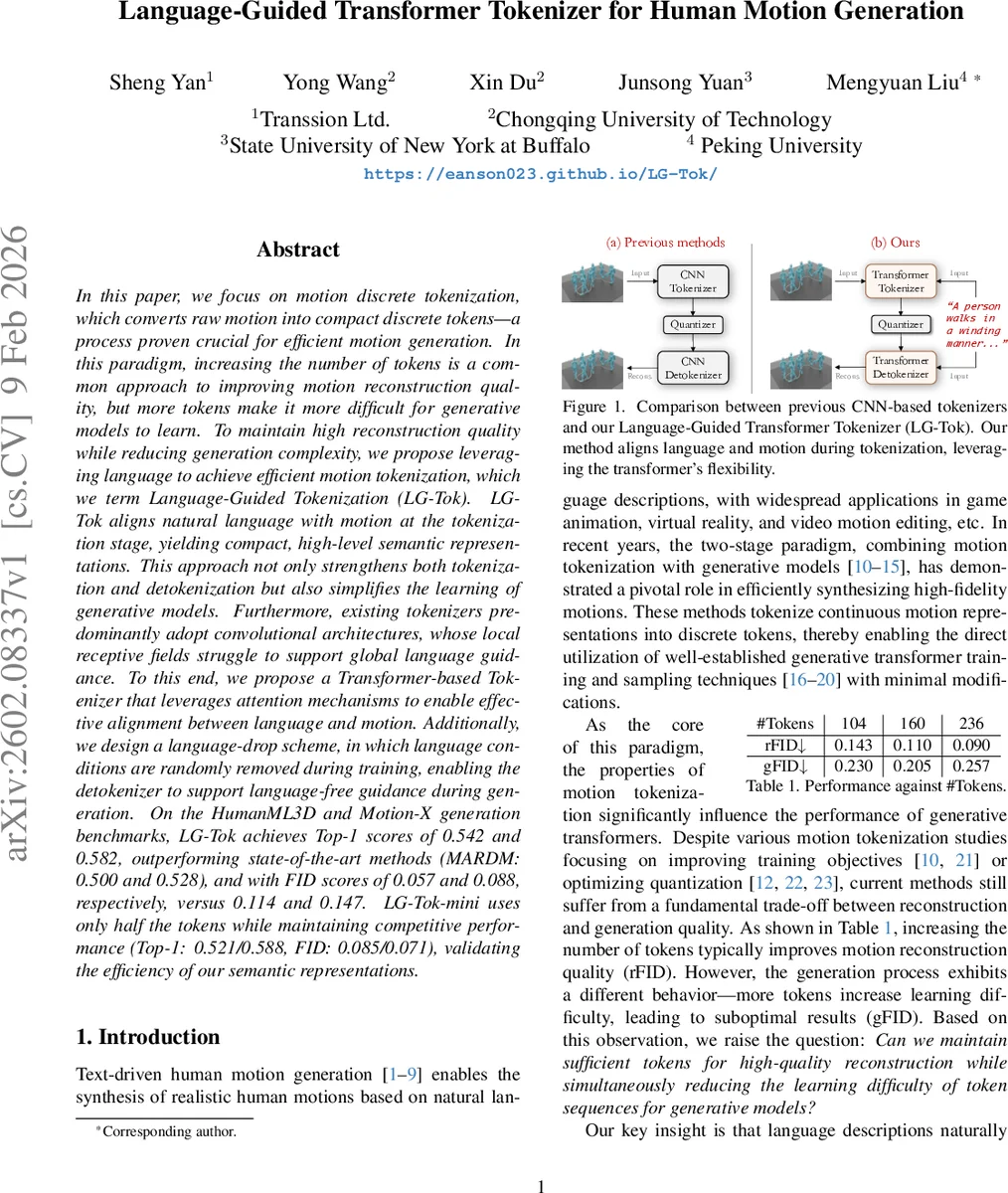

In this paper, we focus on motion discrete tokenization, which converts raw motion into compact discrete tokens–a process proven crucial for efficient motion generation. In this paradigm, increasing the number of tokens is a common approach to improving motion reconstruction quality, but more tokens make it more difficult for generative models to learn. To maintain high reconstruction quality while reducing generation complexity, we propose leveraging language to achieve efficient motion tokenization, which we term Language-Guided Tokenization (LG-Tok). LG-Tok aligns natural language with motion at the tokenization stage, yielding compact, high-level semantic representations. This approach not only strengthens both tokenization and detokenization but also simplifies the learning of generative models. Furthermore, existing tokenizers predominantly adopt convolutional architectures, whose local receptive fields struggle to support global language guidance. To this end, we propose a Transformer-based Tokenizer that leverages attention mechanisms to enable effective alignment between language and motion. Additionally, we design a language-drop scheme, in which language conditions are randomly removed during training, enabling the detokenizer to support language-free guidance during generation. On the HumanML3D and Motion-X generation benchmarks, LG-Tok achieves Top-1 scores of 0.542 and 0.582, outperforming state-of-the-art methods (MARDM: 0.500 and 0.528), and with FID scores of 0.057 and 0.088, respectively, versus 0.114 and 0.147. LG-Tok-mini uses only half the tokens while maintaining competitive performance (Top-1: 0.521/0.588, FID: 0.085/0.071), validating the efficiency of our semantic representations.

💡 Research Summary

The paper introduces Language‑Guided Tokenization (LG‑Tok), a novel approach that injects natural‑language semantics directly into the motion tokenization stage, thereby producing compact, high‑level semantic tokens that are easier for generative models to learn. Traditional motion tokenizers rely on convolutional encoders and vector‑quantized VAEs, which compress continuous motion into discrete codes but suffer from a trade‑off: increasing token count improves reconstruction (lower rFID) while making sequence modeling harder, leading to poorer generation quality (higher gFID).

LG‑Tok addresses this by (1) using a frozen LLaMA text encoder to obtain sentence embeddings for each motion clip, (2) concatenating these embeddings with learnable latent tokens and the motion features, and (3) feeding the combined sequence into a transformer‑based encoder. Self‑attention endows the latent tokens with global contextual awareness, while cross‑attention with the text embeddings aligns motion and language at the token level. After encoding, the latent tokens are vector‑quantized into multi‑scale discrete codes, compatible with existing VQ‑VAE pipelines and with the Scalable Autoregressive (SAR) modeling of MoSa.

The decoder (detokenizer) mirrors this design: learnable mask tokens interact with the de‑quantized embeddings via cross‑attention, and the same text embeddings are optionally supplied. During training a “language‑drop” scheme randomly removes the text condition, forcing the detokenizer to learn both language‑guided and language‑free reconstruction. This yields a model that can generate motions conditioned on text, but also operate without textual input at inference time.

Experiments on two large‑scale benchmarks—HumanML3D (text‑motion pairs) and Motion‑X (diverse motion clips)—show that LG‑Tok dramatically improves both reconstruction and generation metrics. With roughly 104–160 tokens per 196‑frame clip, LG‑Tok achieves Top‑1 R‑Precision scores of 0.542 (HumanML3D) and 0.582 (Motion‑X) and FID scores of 0.057 and 0.088, respectively, outperforming the previous state‑of‑the‑art MARDM (Top‑1 0.500/0.528, FID 0.114/0.147). A lightweight variant, LG‑Tok‑mini, halves the token count yet retains competitive performance (Top‑1 0.521/0.588, FID 0.085/0.071), confirming that the semantic burden has indeed shifted from tokens to language.

Ablation studies validate each component: replacing the transformer encoder with a CNN degrades both rFID and FID; removing language‑drop harms language‑free reconstruction; and using mask tokens instead of direct decoding improves reconstruction quality by ~5 %. The method also generalizes to other generative backbones such as MoMask, indicating that the tokenization improvement is orthogonal to the downstream model.

Limitations include the reliance on a frozen text encoder, which prevents joint multimodal pre‑training that could further tighten language‑motion alignment, and a performance drop when token counts become extremely low (<40). Future work may explore end‑to‑end multimodal training, more efficient codebooks, and real‑time inference optimizations for interactive applications.

In summary, LG‑Tok proposes a paradigm shift: by guiding tokenization with language, it reduces the number of tokens needed for high‑fidelity reconstruction while simplifying the learning problem for generative transformers. This advances the state of human motion synthesis and opens avenues for efficient, controllable animation generation in gaming, VR/AR, and video editing.

Comments & Academic Discussion

Loading comments...

Leave a Comment