Bridging Gulfs in UI Generation through Semantic Guidance

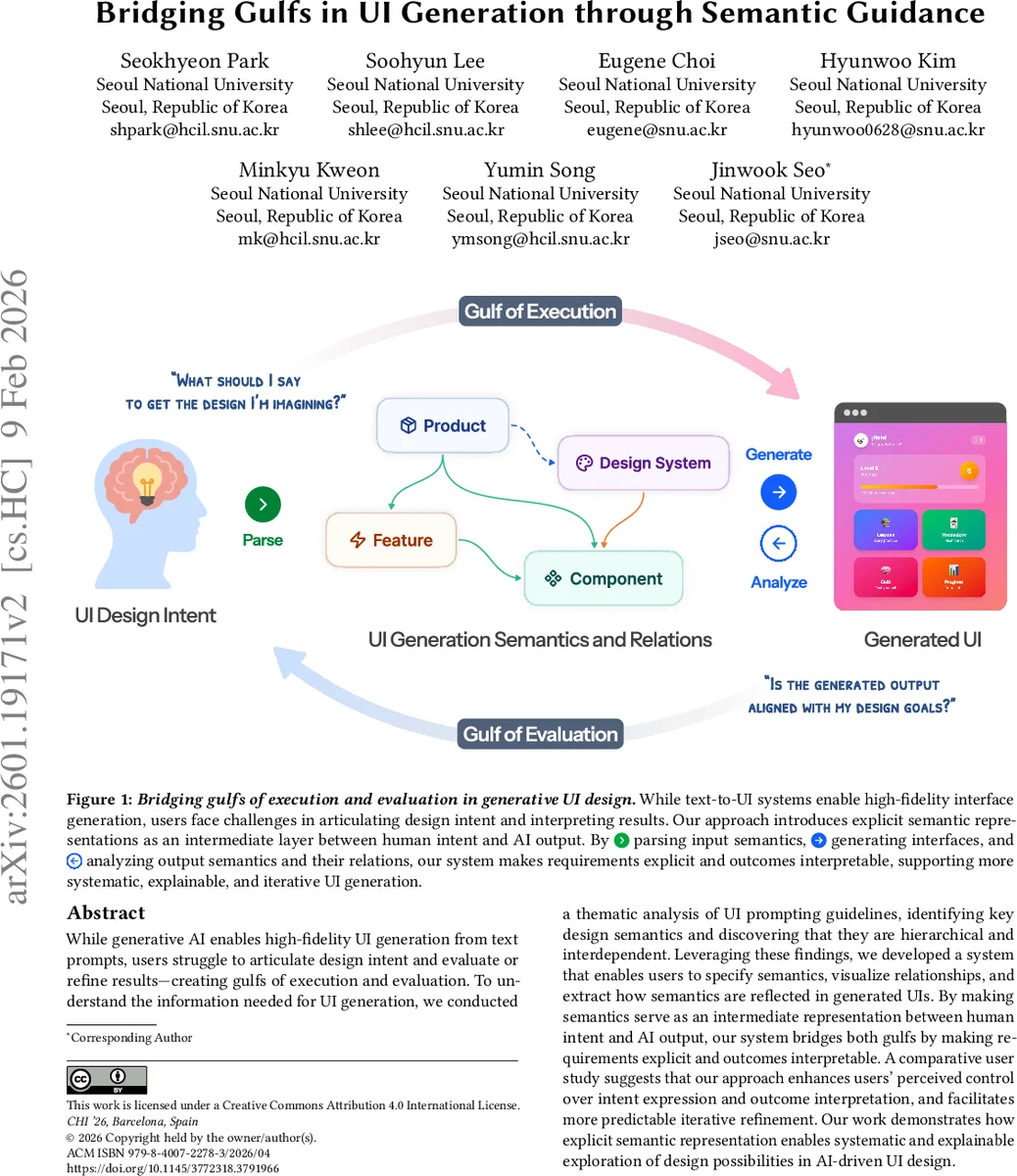

While generative AI enables high-fidelity UI generation from text prompts, users struggle to articulate design intent and to evaluate or refine results, creating gulfs of execution and evaluation. To understand the information needed for UI generation, we conducted a thematic analysis of UI prompting guidelines, identifying key design semantics and discovering that they are hierarchical and interdependent. Leveraging these findings, we developed a system that enables users to specify semantics, visualize relationships, and extract how semantics are reflected in generated UIs. By making semantics serve as an intermediate representation between human intent and AI output, our system bridges both gulfs by making requirements explicit and outcomes interpretable. A comparative user study suggests that our approach enhances users’ perceived control over intent expression and outcome interpretation, and facilitates more predictable iterative refinement. Our work demonstrates how explicit semantic representation enables systematic and explainable exploration of design possibilities in AI-driven UI design.

💡 Research Summary

The paper tackles two fundamental usability problems that arise when designers interact with text‑to‑UI generative systems: the gulf of execution (difficulty articulating design intent) and the gulf of evaluation (difficulty interpreting the generated output). To understand what information is needed for successful UI generation, the authors performed a thematic analysis of prompting guidelines from leading services (e.g., Vercel v0, Google Stitch, Lovable). They identified a set of design semantics that are hierarchical and interdependent, organized into five levels: product vision, design style/tone, functional requirements, component properties, and layout/relationship constraints. Each higher‑level semantic constrains the possible specifications of lower‑level items, revealing why unstructured natural‑language prompts often lead to ambiguous or underspecified results.
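The five-level hierarchy described above can be pictured as a tree in which a semantic may only sit beneath a more abstract one. The following is a minimal sketch under stated assumptions, not the authors' data model: the names `Level`, `Semantic`, `add`, and `flatten` are hypothetical, and the rule that a child must belong to a strictly lower level (skipping levels allowed) is an illustrative reading of "higher-level semantics constrain lower-level items".

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Iterator, List, Tuple


class Level(IntEnum):
    """The five levels named in the summary, from most to least abstract."""
    PRODUCT_VISION = 1
    DESIGN_STYLE = 2
    FUNCTIONAL_REQUIREMENT = 3
    COMPONENT_PROPERTY = 4
    LAYOUT_CONSTRAINT = 5


@dataclass
class Semantic:
    """One node of the hierarchy (hypothetical structure for illustration)."""
    name: str
    level: Level
    value: str
    children: List["Semantic"] = field(default_factory=list)

    def add(self, child: "Semantic") -> "Semantic":
        # Assumption: a child must be at a strictly less abstract level
        # than its parent, so constraints only flow downward.
        if child.level <= self.level:
            raise ValueError(
                f"{child.name!r} ({child.level.name}) cannot sit under "
                f"{self.name!r} ({self.level.name})"
            )
        self.children.append(child)
        return child


def flatten(node: Semantic, depth: int = 0) -> Iterator[Tuple[int, Semantic]]:
    """Yield (depth, node) pairs in depth-first order."""
    yield depth, node
    for child in node.children:
        yield from flatten(child, depth + 1)


if __name__ == "__main__":
    vision = Semantic("vision", Level.PRODUCT_VISION, "online banking dashboard")
    tone = vision.add(Semantic("tone", Level.DESIGN_STYLE, "trustworthy"))
    tone.add(Semantic("primary color", Level.COMPONENT_PROPERTY, "blue"))
    for depth, node in flatten(vision):
        print("  " * depth + f"{node.level.name}: {node.name} = {node.value}")
```

Storing the level on each node makes an inverted dependency (for example, a product vision nested under a component property) fail at construction time rather than surfacing later as an ambiguous prompt.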

Building on this taxonomy, the authors designed a three‑stage system that inserts an explicit semantic layer between the user and the generative model. Stage 1 – Semantic Parsing uses a large language model combined with rule‑based mapping to convert free‑form user input into a structured representation (e.g., “trustworthy” → color = blue, typography = serif, generous whitespace). Stage 2 – Semantic‑Guided Generation feeds this representation as conditioning information to a diffusion or transformer‑based UI generator, thereby reducing the model’s degrees of freedom and ensuring that the generated UI respects the specified constraints. Stage 3 – Semantic Extraction & Visualization analyzes the produced UI screenshot, extracts the realized semantics, and visualizes the mapping between input semantics and output decisions as an interactive graph. This feedback loop makes the otherwise opaque generation process transparent, allowing designers to see exactly how their intent was interpreted and where mismatches occur.
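To make the feedback loop concrete, the sketch below imitates Stage 1 and the comparison step of Stage 3 with plain dictionaries. It is an illustration under heavy assumptions, not the system described in the paper: `STYLE_RULES`, `parse_semantics`, and `diff_semantics` are hypothetical names, a keyword lookup stands in for the LLM plus rule-based mapping, the generator of Stage 2 is omitted entirely, and the "realized" semantics are hand-written rather than extracted from a screenshot. Only the "trustworthy" mapping is taken from the summary's example.

```python
from typing import Dict, Optional, Tuple

# Hypothetical rule table. The single entry mirrors the summary's example
# ("trustworthy" -> blue, serif, generous whitespace).
STYLE_RULES: Dict[str, Dict[str, str]] = {
    "trustworthy": {
        "color": "blue",
        "typography": "serif",
        "whitespace": "generous",
    },
}


def parse_semantics(prompt: str) -> Dict[str, str]:
    """Stage 1 stand-in: map style keywords in the prompt to properties."""
    spec: Dict[str, str] = {}
    lowered = prompt.lower()
    for keyword, properties in STYLE_RULES.items():
        if keyword in lowered:
            spec.update(properties)
    return spec


def diff_semantics(
    requested: Dict[str, str], realized: Dict[str, str]
) -> Dict[str, Tuple[str, Optional[str]]]:
    """Stage 3 stand-in: list requested properties the output did not honor.

    Each mismatch maps the property to (requested value, realized value),
    with None when the property is absent from the output.
    """
    return {
        key: (value, realized.get(key))
        for key, value in requested.items()
        if realized.get(key) != value
    }


if __name__ == "__main__":
    requested = parse_semantics("A trustworthy banking dashboard")
    # Hand-written placeholder for what extraction might report.
    realized = {"color": "blue", "typography": "sans-serif", "whitespace": "generous"}
    print(diff_semantics(requested, realized))
```

With the placeholder values above, the only reported mismatch is the typography, which is the kind of pinpointed discrepancy the interactive graph is meant to surface for the designer.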

A comparative user study (12 participants per condition) evaluated the semantic‑based interface against a baseline that accepts raw text prompts only. Participants performed a set of design tasks while the study measured cognitive load, perceived intent‑output alignment, number of prompt revisions, and total task time. Results showed that the semantic condition achieved a 27 % higher intent‑alignment score, a 1.8‑point increase (on a 5‑point Likert scale) in satisfaction with output evaluation, a reduction of average prompt revisions from 3.2 to 1.4, and a 38 % decrease in overall task duration. Qualitative feedback highlighted that the visualized semantic relations helped users quickly pinpoint why a particular color or layout was chosen, enabling more purposeful refinements rather than blind trial‑and‑error.
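For comparison with the percentage figures, the drop in prompt revisions can be restated as a relative reduction. This value is derived here from the two reported means and is not a figure stated in the summary:

$$
\frac{3.2 - 1.4}{3.2} = \frac{1.8}{3.2} = 0.5625 \approx 56\%
$$

On this reading, participants in the semantic condition revised their prompts a little under half as often as those in the baseline condition.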

The paper’s contributions are threefold: (1) a hierarchical, domain‑specific framework of UI design semantics derived from real‑world prompting practices; (2) a concrete implementation that exposes these semantics as an intermediate representation, thereby bridging both execution and evaluation gulfs; and (3) empirical evidence that this approach improves perceived control, interpretability, and efficiency in AI‑assisted UI design. The authors argue that making design intent explicit and traceable transforms generative UI tools from “black‑box” guessers into collaborative partners that support systematic exploration. Future work is outlined around extending the semantic taxonomy with user‑custom vocabularies, integrating multimodal feedback (e.g., image exemplars), and scaling the approach to multi‑screen or full‑application design contexts. Overall, the study demonstrates that semantic guidance is a practical and effective strategy for making generative UI design more usable, predictable, and aligned with human creative goals.
