From Token to Line: Enhancing Code Generation with a Long-Term Perspective

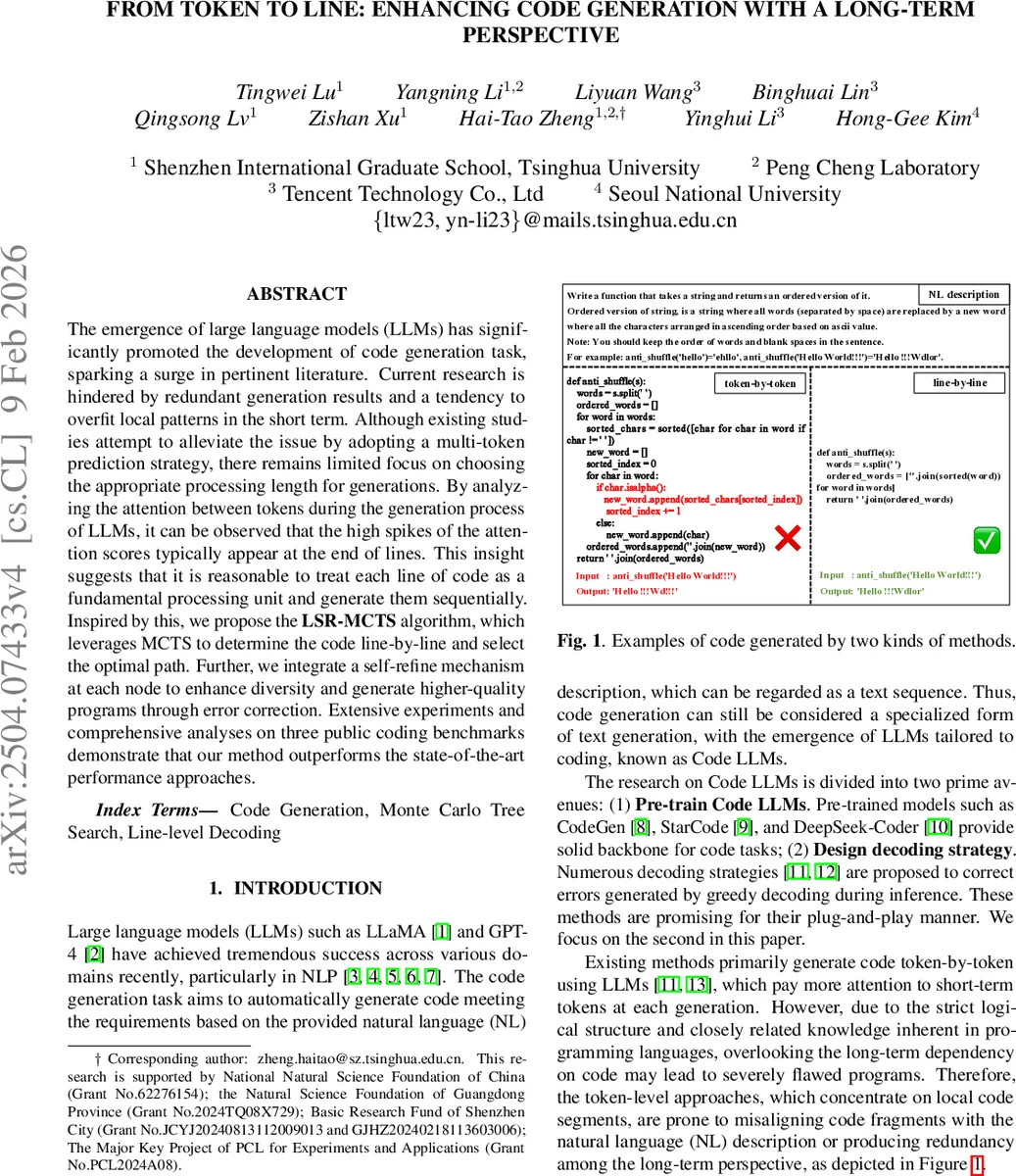

The emergence of large language models (LLMs) has significantly promoted the development of code generation task, sparking a surge in pertinent literature. Current research is hindered by redundant generation results and a tendency to overfit local patterns in the short term. Although existing studies attempt to alleviate the issue by adopting a multi-token prediction strategy, there remains limited focus on choosing the appropriate processing length for generations. By analyzing the attention between tokens during the generation process of LLMs, it can be observed that the high spikes of the attention scores typically appear at the end of lines. This insight suggests that it is reasonable to treat each line of code as a fundamental processing unit and generate them sequentially. Inspired by this, we propose the LSR-MCTS algorithm, which leverages MCTS to determine the code line-by-line and select the optimal path. Further, we integrate a self-refine mechanism at each node to enhance diversity and generate higher-quality programs through error correction. Extensive experiments and comprehensive analyses on three public coding benchmarks demonstrate that our method outperforms the state-of-the-art performance approaches.

💡 Research Summary

The paper tackles a fundamental limitation of current large language model (LLM)‑based code generation: the tendency of token‑level decoding to focus on short‑term dependencies, which often leads to redundant or logically flawed programs. By inspecting attention heatmaps of existing code LLMs, the authors discover that the “line‑ending” token (the newline character) consistently receives the highest attention scores, acting as a summary token that encapsulates the semantic content of the preceding line. This observation motivates treating each line of code as the basic processing unit rather than individual tokens.

Building on this insight, the authors introduce Line‑level Self‑Refine Monte Carlo Tree Search (LSR‑MCTS), a novel, training‑free decoding strategy that combines line‑level Monte Carlo Tree Search (MCTS) with a self‑refine mechanism at every node. In LSR‑MCTS, a tree node stores three components: (1) context – the accumulated code block formed by all ancestor lines, (2) line – the candidate line generated for the current step, and (3) supplement – additional code needed to make the line executable (e.g., imports, closing brackets).

The algorithm follows the classic four‑phase MCTS loop, adapted to the line granularity:

-

Selection – Starting from the root (the natural‑language problem description), the algorithm traverses the tree using the Upper Confidence Bound for Trees (UCT) formula, favoring nodes with high cumulative reward and low visit count.

-

Expansion – For a leaf node that is not terminal, the LLM is prompted (with a generic generation prompt) to produce m candidate next lines (the paper uses m = 3) together with their supplements. Each candidate becomes a child node.

-

Self‑Refine – To mitigate the limited branching factor and to correct potentially erroneous “summary tokens,” the method adds a refinement step: if a child’s reward falls below a threshold (empirically set to 0.5) or if the node is the current path’s terminal node, the LLM is invoked again with a self‑refine prompt to regenerate the line and supplement. The refined line is added as an additional child, effectively expanding the search space with higher‑quality alternatives.

-

Evaluation – Each newly formed code block (context + line + supplement) is executed against publicly available test cases. The pass rate serves as the reward function R. The reward is computed for both the original and refined nodes, allowing the algorithm to compare raw and corrected candidates.

-

Backpropagation – The rewards from evaluation are propagated upward, updating each ancestor’s cumulative value and visit count. This feedback influences future selections, biasing the search toward globally coherent programs.

A caching mechanism stores previously generated code blocks to avoid redundant LLM calls, improving efficiency.

Experimental Setup

The authors evaluate LSR‑MCTS on three widely used Python code generation benchmarks: HumanEval, MBPP, and Code Contests. Two categories of models are tested: (i) code‑specialized LLMs (CodeLlama‑7B‑Instruct, aiXcoder‑7B) and (ii) general LLMs (GPT‑4, Llama‑3‑8B‑Instruct). Baselines include traditional decoding (Beam‑Search, Top‑p), a self‑refine method (Reflection), and a token‑level MCTS approach (PG‑TD). The UCT exploration constant c is set to 4, and each problem undergoes 100 rollouts. Performance is measured with the pass@k metric for k = 1, 3, 5.

Results

Across all model–benchmark combinations, LSR‑MCTS consistently outperforms the baselines. For example, with CodeLlama‑7B‑Instruct on HumanEval, LSR‑MCTS achieves pass@1 = 45.7 % versus 43.8 % for the best competing method (L‑MCTS). Similar gains are observed on MBPP and Code Contests, where LSR‑MCTS improves pass@5 by up to 2 percentage points over PG‑TD. The self‑refine component contributes notably: ablation without refinement drops performance by ~1 %p, confirming that local error correction is crucial for maintaining the integrity of summary tokens.

Analysis and Limitations

The line‑level perspective aligns naturally with the syntactic structure of most programming languages, allowing the search to consider longer‑range dependencies without exploding the branching factor. However, the approach assumes a clear line delimiter, which may not hold for languages with significant whitespace‑free syntax (e.g., JavaScript minified code) or for languages where a single logical statement spans multiple lines. Moreover, the self‑refine step introduces additional LLM invocations, increasing inference latency; the paper reports a modest m = 3 and a single refinement per node to keep costs manageable, but scaling to larger models or more complex tasks may require further optimization.

Future Directions

Potential extensions include (1) integrating line‑level attention signals directly into the LLM’s pre‑training objective, thereby reducing reliance on external tree search; (2) adaptive branching where m and the refinement threshold are dynamically adjusted based on intermediate reward signals; (3) applying the framework to multi‑language settings and evaluating its robustness on non‑Python codebases.

Conclusion

The study presents a compelling argument that code lines, rather than tokens, should serve as the fundamental unit for LLM‑driven code generation. By coupling line‑level Monte Carlo Tree Search with a targeted self‑refine mechanism, LSR‑MCTS achieves superior functional correctness on standard benchmarks, demonstrating that global, tree‑based search can effectively complement the local, greedy nature of conventional decoding. This work opens a promising avenue for more structured, error‑aware generation strategies in the rapidly evolving field of AI‑assisted programming.

Comments & Academic Discussion

Loading comments...

Leave a Comment