Fine-Grained Cat Breed Recognition with Global Context Vision Transformer

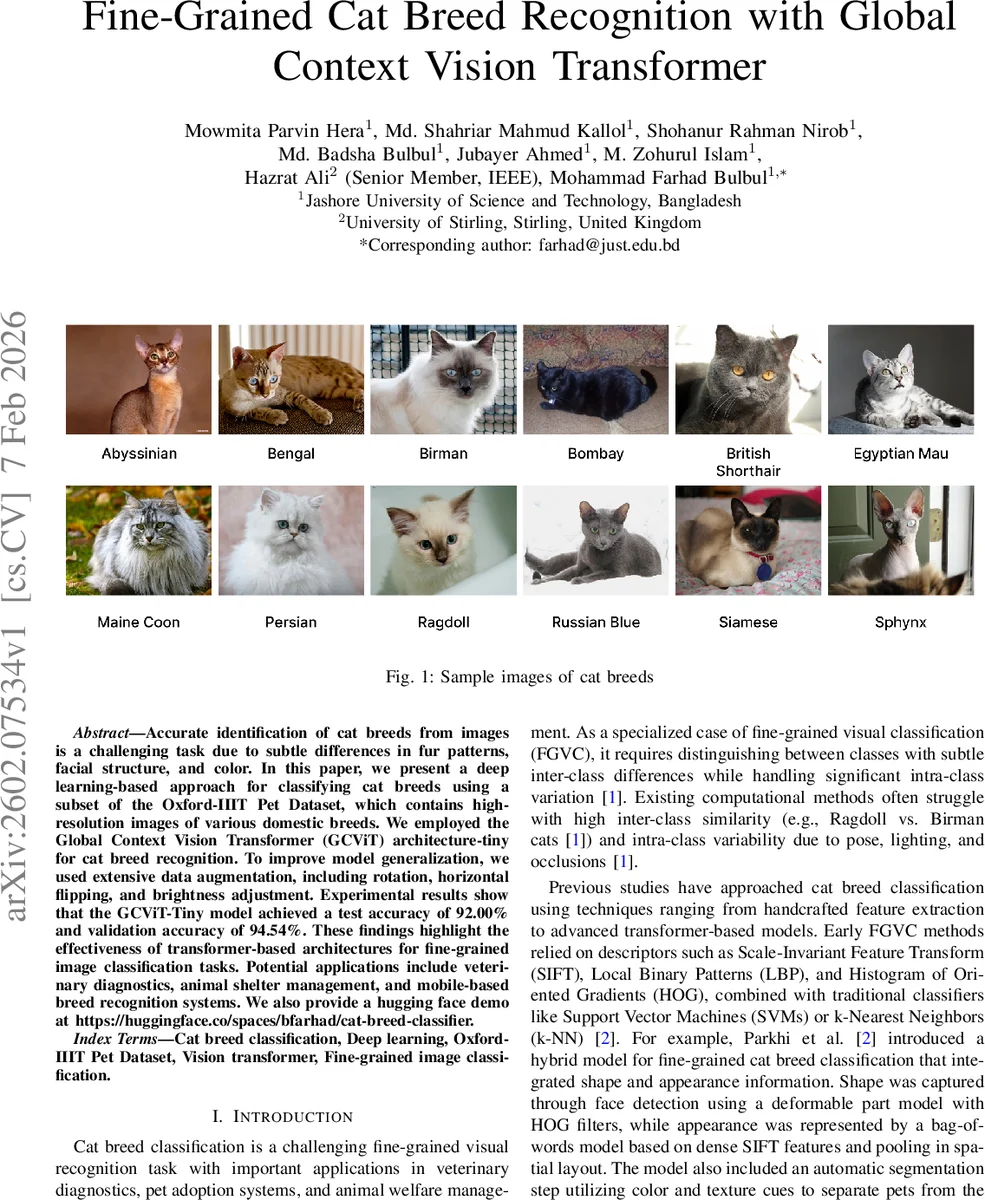

Accurate identification of cat breeds from images is a challenging task due to subtle differences in fur patterns, facial structure, and color. In this paper, we present a deep learning-based approach for classifying cat breeds using a subset of the Oxford-IIIT Pet Dataset, which contains high-resolution images of various domestic breeds. We employed the Global Context Vision Transformer (GCViT) architecture-tiny for cat breed recognition. To improve model generalization, we used extensive data augmentation, including rotation, horizontal flipping, and brightness adjustment. Experimental results show that the GCViT-Tiny model achieved a test accuracy of 92.00% and validation accuracy of 94.54%. These findings highlight the effectiveness of transformer-based architectures for fine-grained image classification tasks. Potential applications include veterinary diagnostics, animal shelter management, and mobile-based breed recognition systems. We also provide a hugging face demo at https://huggingface.co/spaces/bfarhad/cat-breed-classifier.

💡 Research Summary

The paper addresses the challenging fine‑grained visual classification problem of cat‑breed identification by leveraging the Global Context Vision Transformer (GCViT)‑Tiny, a lightweight transformer architecture that incorporates a Global Context Module (GCM) into the self‑attention mechanism. Using a filtered subset of the Oxford‑IIIT Pet dataset containing 12 cat breeds (2,371 images total), the authors split the data into 80 % training, 20 % validation, and a completely separate test set of 1,183 images to ensure unbiased evaluation.

Data preprocessing involves resizing images to 224 × 224 pixels, random cropping, horizontal flipping, rotations up to 20°, and color jitter (brightness, contrast, saturation ±20 %). After augmentation, images are normalized with ImageNet mean and standard deviation. This pipeline aims to improve robustness to pose, lighting, and background variations.

The GCViT‑Tiny model first extracts local features through a convolutional stem, then partitions the resulting feature map into non‑overlapping 16 × 16 patches. Each patch is linearly projected and combined with positional encodings to form token embeddings. The core innovation is the GCM, which computes a global context vector g as a weighted aggregation of all token embeddings. This vector is fused into the keys and values of the attention computation (K̃ = K + gW_GK, Ṽ = V + gW_GV), allowing each attention head to incorporate image‑wide statistics while preserving the locality captured by the convolutional stem. Hierarchical stages gradually reduce spatial resolution and increase embedding dimension, similar to Swin‑Transformer designs, but with the added global‑context enhancement.

Training uses categorical cross‑entropy with label smoothing (ε = 0.1), the AdamW optimizer, a cosine‑annealed learning‑rate schedule (starting at 1e‑4, weight decay 1e‑4), batch size 32, and early stopping with patience of 5 epochs. The model converged after only 10 epochs, achieving near‑perfect training accuracy (~100 %) and stable validation accuracy around 95 %. On the held‑out test set, the model attained 92.00 % overall accuracy. Per‑class metrics show high performance for most breeds (F1‑scores 0.83–1.00), with the lowest score for Ragdoll (0.79) due to higher intra‑class variability and visual similarity to other breeds. The confusion matrix confirms that misclassifications are limited to visually similar categories.

For comparative evaluation, the authors benchmarked against several prior approaches: handcrafted‑feature SVM (66.07 %), CNNs such as VGG16 (60.85 %), InceptionV3 (84.94 %), ResNet‑50 (71.39 %), Xception with transfer learning (88.8 %), and a hybrid MobileViT‑v3 (PetVision) model (75 %). GCViT‑Tiny outperformed all these baselines, demonstrating that global‑context‑aware transformers can surpass both deep CNNs and earlier hybrid designs even when using a lightweight variant.

The paper acknowledges limitations: the study is confined to 12 breeds, and the test data, while separate, still originates from the same dataset distribution. Real‑world deployment scenarios (e.g., mobile camera images, varied backgrounds) remain untested. Moreover, the model’s ability to handle mixed‑breed cats or other animal species is not explored.

In conclusion, the work shows that integrating global context into a transformer yields a compact yet powerful model for fine‑grained cat‑breed classification, achieving 92 % test accuracy with modest computational resources (GTX 1060, 6 GB VRAM). Future directions include multimodal extensions (combining visual and textual metadata), scaling to larger breed sets, and evaluating on edge devices for real‑time applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment