Hyperparameter Transfer Laws for Non-Recurrent Multi-Path Neural Networks

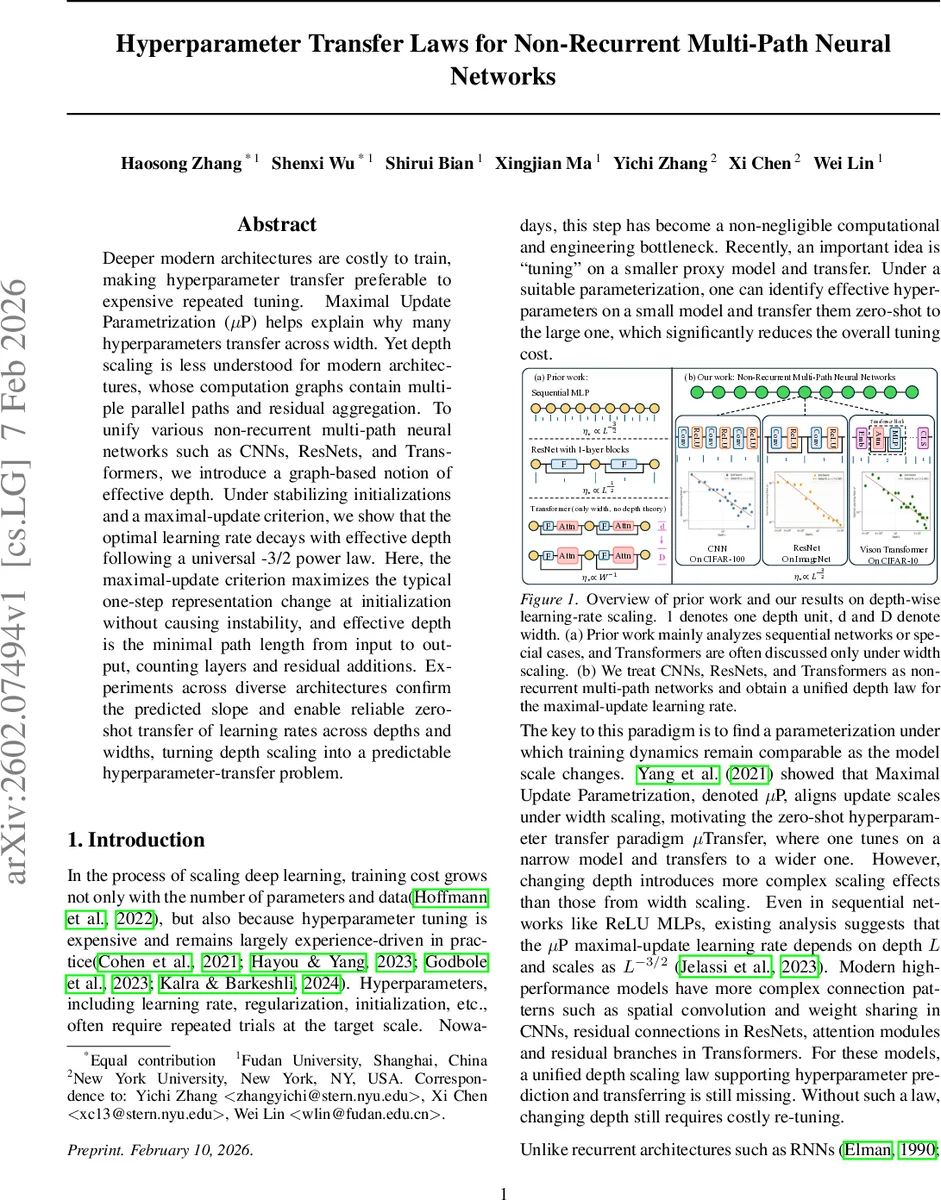

Deeper modern architectures are costly to train, making hyperparameter transfer preferable to expensive repeated tuning. Maximal Update Parametrization ($μ$P) helps explain why many hyperparameters transfer across width. Yet depth scaling is less understood for modern architectures, whose computation graphs contain multiple parallel paths and residual aggregation. To unify various non-recurrent multi-path neural networks such as CNNs, ResNets, and Transformers, we introduce a graph-based notion of effective depth. Under stabilizing initializations and a maximal-update criterion, we show that the optimal learning rate decays with effective depth following a universal -3/2 power law. Here, the maximal-update criterion maximizes the typical one-step representation change at initialization without causing instability, and effective depth is the minimal path length from input to output, counting layers and residual additions. Experiments across diverse architectures confirm the predicted slope and enable reliable zero-shot transfer of learning rates across depths and widths, turning depth scaling into a predictable hyperparameter-transfer problem.

💡 Research Summary

The paper tackles the costly problem of hyper‑parameter tuning when scaling deep neural networks in depth. Building on the Maximal Update Parametrization (µP) framework, which explains why many hyper‑parameters transfer across width, the authors extend the theory to depth for a broad class of non‑recurrent multi‑path architectures—including convolutional networks, residual networks, and Transformers.

First, they formalize a graph‑based notion of effective depth: the length of the shortest computational path from input to output, counting both ordinary layers and each residual addition. This definition unifies disparate models under a single depth metric.

Under two key assumptions—(1) stabilizing initialization, which combines standard fan‑in (He) initialization with a 1/√K scaling for residual branches, ensuring forward and backward signals remain bounded as depth grows; and (2) a maximal‑update criterion, which fixes the total one‑step update energy (η²‖∇θL‖²) to be constant across scales—the authors derive the typical magnitude of a one‑step representation change for each architecture.

For sequential MLPs and homogeneous CNNs the analysis reproduces the known scaling η∗∝L⁻³/². For residual networks, each residual block contributes one effective depth unit; the same maximal‑update argument yields η∗∝K⁻³/² where K is the number of blocks. Transformers contain two residual additions per block (self‑attention and feed‑forward), so the effective depth is 2·B (B = number of blocks) and the optimal learning rate follows η∗∝(2B)⁻³/², i.e. the same –3/2 power law. Crucially, the exponent –3/2 is universal; architecture‑specific constants (channel width, number of heads, activation‑dependent gating factor q) affect only the prefactor.

The main theoretical claim is therefore a depth‑wise learning‑rate scaling law:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment