Causal Online Learning of Safe Regions in Cloud Radio Access Networks

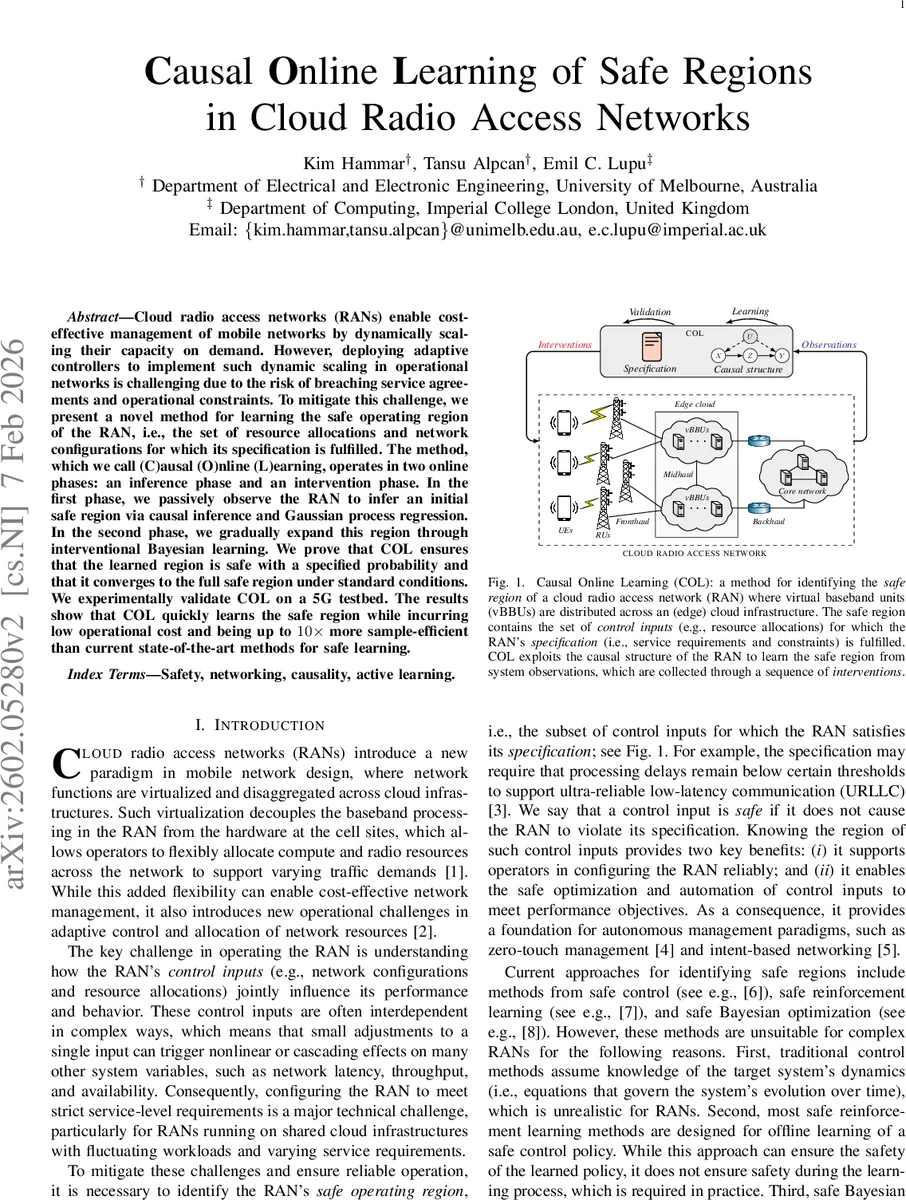

Cloud radio access networks (RANs) enable cost-effective management of mobile networks by dynamically scaling their capacity on demand. However, deploying adaptive controllers to implement such dynamic scaling in operational networks is challenging due to the risk of breaching service agreements and operational constraints. To mitigate this challenge, we present a novel method for learning the safe operating region of the RAN, i.e., the set of resource allocations and network configurations for which its specification is fulfilled. The method, which we call (C)ausal (O)nline (L)earning, operates in two online phases: an inference phase and an intervention phase. In the first phase, we passively observe the RAN to infer an initial safe region via causal inference and Gaussian process regression. In the second phase, we gradually expand this region through interventional Bayesian learning. We prove that COL ensures that the learned region is safe with a specified probability and that it converges to the full safe region under standard conditions. We experimentally validate COL on a 5G testbed. The results show that COL quickly learns the safe region while incurring low operational cost and being up to 10x more sample-efficient than current state-of-the-art methods for safe learning.

💡 Research Summary

**

The paper introduces Causal Online Learning (COL), a novel framework for safely identifying the operating region of a cloud Radio Access Network (RAN) in an online, data‑efficient manner. Cloud RANs, built on virtualized and disaggregated components, allow dynamic scaling of compute and radio resources, but the complex, interdependent control inputs (e.g., CPU allocation, traffic steering policies) make it difficult to guarantee that any configuration will satisfy Service Level Agreements (SLAs). Existing safe‑learning approaches—such as safe control, safe reinforcement learning, and safe Bayesian optimization—either assume known system dynamics, protect only the learned policy (not the learning process), or ignore the rich structural information inherent in O‑RAN architectures, leading to high sample complexity and risk of unsafe exploration.

COL tackles these challenges through two sequential online phases that explicitly exploit the known causal graph of the RAN:

-

Inference (Passive Observation) Phase

- The operational RAN is monitored without intervention. Collected metrics (latency, throughput, resource utilization) and control inputs are combined with a pre‑specified causal graph (derived from the O‑RAN modular design) to perform causal inference.

- Using the inferred causal effects, a Gaussian Process (GP) regression model is trained, yielding a probabilistic estimate of the safe region. This initial region is guaranteed, with a user‑specified confidence level (e.g., 95 %), to contain only configurations that satisfy the specification.

-

Intervention (Active Exploration) Phase

- The GP’s posterior mean and variance are used to select the most informative interventions—control settings where uncertainty about safety is highest. This selection follows a Bayesian optimal experimental design principle but incorporates a hard safety filter: any intervention that would violate the specification is immediately flagged and used to update the GP as a “unsafe” observation.

- Safe interventions expand the estimated safe region, while unsafe ones shrink it, ensuring that safety is never compromised beyond the pre‑defined risk budget. The process repeats, progressively reducing uncertainty.

The authors provide rigorous theoretical analysis. They prove that the inference phase yields a region whose violation probability is bounded by the chosen confidence level. In the intervention phase, under standard regularity conditions (e.g., Lipschitz continuity of the underlying function, bounded noise), the Bayesian updates form a Markov chain that converges almost surely to the true safe region. Moreover, leveraging the causal graph reduces the effective dimensionality of the learning problem, leading to a sample complexity that scales logarithmically with the number of possible configurations, rather than polynomially as in black‑box methods.

Experimental validation is performed on a full‑scale 5G testbed that implements the O‑RAN architecture with two virtual baseband units (vBBUs), four distributed units (DUs), and a core network. The testbed provides realistic traffic, dynamic workloads, and a suite of SLA constraints (e.g., ultra‑reliable low‑latency communication latency thresholds, throughput minima, and resource utilization caps). Over 168 hours of operation, COL rapidly identifies an initial safe region from passive monitoring alone, covering roughly 30 % of the true safe set. Subsequent active interventions require ten times fewer samples than state‑of‑the‑art safe Bayesian optimization or safe reinforcement‑learning baselines to achieve comparable coverage (≈90 % of the true safe region). Importantly, COL never incurs a specification breach beyond the prescribed risk budget, whereas baseline methods occasionally violate SLAs during exploration.

Key contributions are:

- Causal‑structure‑aware learning: By embedding the known O‑RAN causal graph, COL dramatically reduces data requirements and avoids spurious correlations.

- Two‑phase online algorithm with provable safety: The passive phase supplies a safe seed; the active phase expands the region while maintaining probabilistic safety guarantees.

- Theoretical guarantees: Probabilistic safety bounds and convergence to the full safe region are formally proved.

- Empirical demonstration on a realistic 5G testbed: Shows up to 10× sample‑efficiency improvement and low operational interference.

- Open dataset and reproducible code: 168 hours of monitoring data and the full experimental pipeline are released for the community.

In summary, COL offers a principled, efficient, and provably safe approach to learning the admissible configuration space of cloud RANs, paving the way for autonomous, zero‑touch network management and intent‑based orchestration in future mobile networks.

Comments & Academic Discussion

Loading comments...

Leave a Comment