Towards Effective and Efficient Context-aware Nucleus Detection in Histopathology Whole Slide Images

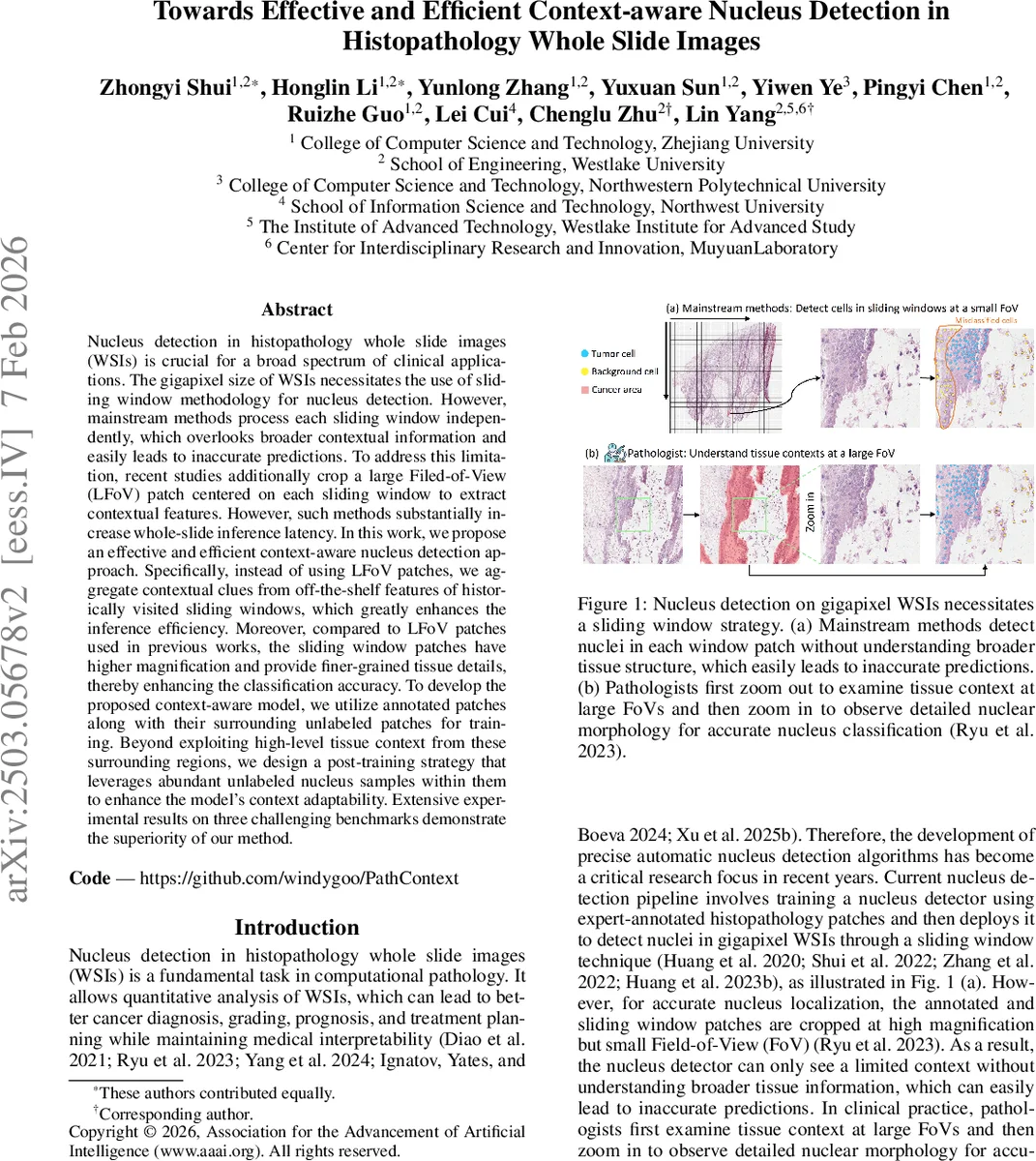

Nucleus detection in histopathology whole slide images (WSIs) is crucial for a broad spectrum of clinical applications. The gigapixel size of WSIs necessitates the use of sliding window methodology for nucleus detection. However, mainstream methods process each sliding window independently, which overlooks broader contextual information and easily leads to inaccurate predictions. To address this limitation, recent studies additionally crop a large Filed-of-View (LFoV) patch centered on each sliding window to extract contextual features. However, such methods substantially increase whole-slide inference latency. In this work, we propose an effective and efficient context-aware nucleus detection approach. Specifically, instead of using LFoV patches, we aggregate contextual clues from off-the-shelf features of historically visited sliding windows, which greatly enhances the inference efficiency. Moreover, compared to LFoV patches used in previous works, the sliding window patches have higher magnification and provide finer-grained tissue details, thereby enhancing the classification accuracy. To develop the proposed context-aware model, we utilize annotated patches along with their surrounding unlabeled patches for training. Beyond exploiting high-level tissue context from these surrounding regions, we design a post-training strategy that leverages abundant unlabeled nucleus samples within them to enhance the model’s context adaptability. Extensive experimental results on three challenging benchmarks demonstrate the superiority of our method.

💡 Research Summary

The paper tackles the long‑standing challenge of nucleus detection in gigapixel histopathology whole‑slide images (WSIs). Traditional sliding‑window detectors treat each window independently, ignoring the broader tissue context that pathologists routinely use. Recent works mitigate this by feeding an additional low‑magnification large field‑of‑view (LFoV) patch alongside each window. While effective, LFoV patches dramatically increase I/O and inference time and sacrifice fine‑grained nuclear morphology because of their low resolution.

The authors propose a fundamentally different strategy: they exploit the contextual information already present in the surrounding sliding‑window patches that have been visited during the scanning process. Instead of generating extra LFoV images, the method re‑uses off‑the‑shelf features of these neighboring windows. A single shared visual encoder processes both the region‑of‑interest (ROI) patch and its surrounding patches because they share the same magnification and data distribution. For each ROI, the encoder produces a feature map F_i; for each of the (2δ+1)² neighboring patches it produces context maps F_i,j,k. To keep memory consumption tractable, only a random subset k of the surrounding patches participates in back‑propagation while the rest are processed in a gradient‑free forward pass. Each context map is down‑sampled by average pooling over an s×s grid, then concatenated into a compact tensor F_ctx_i.

Contextual cues are injected via a cross‑attention module where the ROI feature acts as the query and the stacked context features serve as key and value: F′_i = CrossAttn(Q=F_i, K=F_ctx_i, V=F_ctx_i). Positional embeddings were found unnecessary because the continuity of adjacent patches implicitly encodes relative positions. The enriched feature F′_i is fed to a decoder (based on P2PNet) that predicts nucleus centroids and categories.

A novel post‑training stage leverages the abundant unlabeled nuclei present in the surrounding patches. The pre‑trained detector first generates pseudo‑labels for these nuclei, but self‑training suffers from confirmation bias. To overcome this, the authors convert point annotations to pseudo‑masks, train a lightweight auxiliary segmentation model on these masks, and then use it to re‑label the surrounding nuclei—a cross‑labeling strategy that provides diverse supervision. During fine‑tuning, the detector’s classification head receives both ground‑truth labels for the ROI and pseudo‑labels for the context patches, improving the model’s adaptability to varied tissue contexts.

The authors also observe that adding high‑level context can dilute the model’s attention to fine nuclear morphology, as visualized by Grad‑CAM++. To restore morphological sensitivity, they extract morphology‑rich features m from the detector’s last layer (optimized for segmentation) and concatenate them with the context‑enriched embedding e before the final classification head. At inference, the model first predicts foreground nuclei with the original head, then refines class predictions using the combined

Comments & Academic Discussion

Loading comments...

Leave a Comment