Learning a Generative Meta-Model of LLM Activations

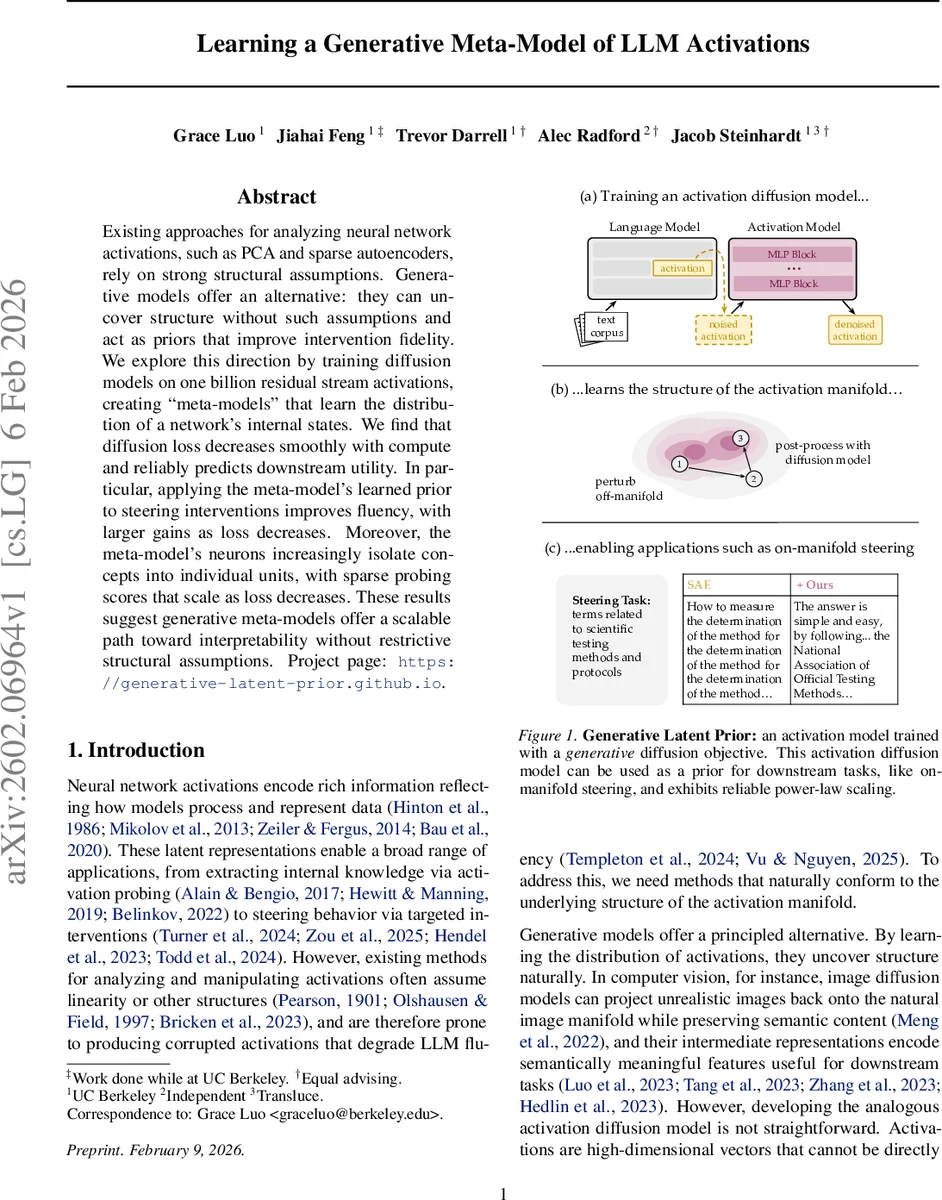

Existing approaches for analyzing neural network activations, such as PCA and sparse autoencoders, rely on strong structural assumptions. Generative models offer an alternative: they can uncover structure without such assumptions and act as priors that improve intervention fidelity. We explore this direction by training diffusion models on one billion residual stream activations, creating “meta-models” that learn the distribution of a network’s internal states. We find that diffusion loss decreases smoothly with compute and reliably predicts downstream utility. In particular, applying the meta-model’s learned prior to steering interventions improves fluency, with larger gains as loss decreases. Moreover, the meta-model’s neurons increasingly isolate concepts into individual units, with sparse probing scores that scale as loss decreases. These results suggest generative meta-models offer a scalable path toward interpretability without restrictive structural assumptions. Project page: https://generative-latent-prior.github.io.

💡 Research Summary

This paper introduces a novel paradigm for analyzing and manipulating the internal activations of large language models (LLMs) by learning a generative meta-model of their activation distributions. The authors propose the Generative Latent Prior (GLP), a diffusion model trained on approximately one billion residual stream activations extracted from a source LLM.

The core motivation stems from limitations in existing methods like Principal Component Analysis (PCA) and Sparse Autoencoders (SAEs), which rely on strong structural assumptions (e.g., linearity, sparsity) and can produce off-manifold activations that degrade output fluency when used for interventions. As an alternative, GLP learns the data distribution of activations directly using a diffusion modeling objective, specifically Flow Matching. This approach imposes minimal assumptions, allowing the model to discover the natural structure of the activation manifold. The denoiser network architecture is based on Llama3’s MLP blocks, conditioned on the diffusion timestep. The data pipeline efficiently caches activations from a large web corpus (FineWeb) using optimized libraries.

A key contribution is the rigorous evaluation of GLP’s generation quality, given that activations are high-dimensional vectors not directly inspectable. Using the Fréchet Distance (FD) between sets of real and generated activations, the authors show that GLP samples are statistically closer to the real distribution than SAE reconstructions, even though GLP generates from pure noise while SAEs reconstruct from real activations. Visualizations using PCA further confirm that with sufficient sampling steps (around 20), GLP-generated samples become nearly indistinguishable from real ones. In a “Delta LM Loss” test, which measures the increase in LLM perplexity when original activations are replaced with reconstructed ones, GLP outperforms a comparable SAE, indicating it better preserves the natural manifold and causes less disruption to the LLM’s function.

The paper establishes that GLP’s performance follows predictable scaling laws. As model size and compute (FLOPs) increase, the diffusion training loss decreases smoothly according to a power law. Crucially, this diffusion loss serves as a reliable predictor for downstream task utility. The authors demonstrate this in two primary application areas:

- On-Manifold Steering: Activation steering methods add a concept direction to activations to control LLM output, but large interventions push activations off-manifold, harming fluency. Using GLP as a prior—by processing the steered activation through diffusion sampling—projects it back onto the natural manifold while preserving the intended semantic control. This significantly improves fluency across benchmarks like sentiment control, SAE feature steering, and persona elicitation, with gains scaling as diffusion loss decreases.

- Interpretable Features via Meta-Neurons: The intermediate representations (neurons) within the trained GLP model itself are found to isolate semantic concepts into individual units. In 1-D linear probing evaluations on 113 binary classification tasks, these “meta-neurons” achieve higher accuracy than both raw LLM neurons and SAE features. This probing performance also scales predictably as the diffusion loss decreases.

In summary, the work posits that generative meta-models like GLP offer a scalable path toward interpretability. By learning the activation distribution without restrictive inductive biases, GLP provides a powerful prior that enhances intervention fidelity and discovers interpretable features, with its quality improving predictably with increased compute. This approach shifts the focus from models that assume structure (like SAEs) to models that learn structure directly from data.

Comments & Academic Discussion

Loading comments...

Leave a Comment