Plato's Form: Toward Backdoor Defense-as-a-Service for LLMs with Prototype Representations

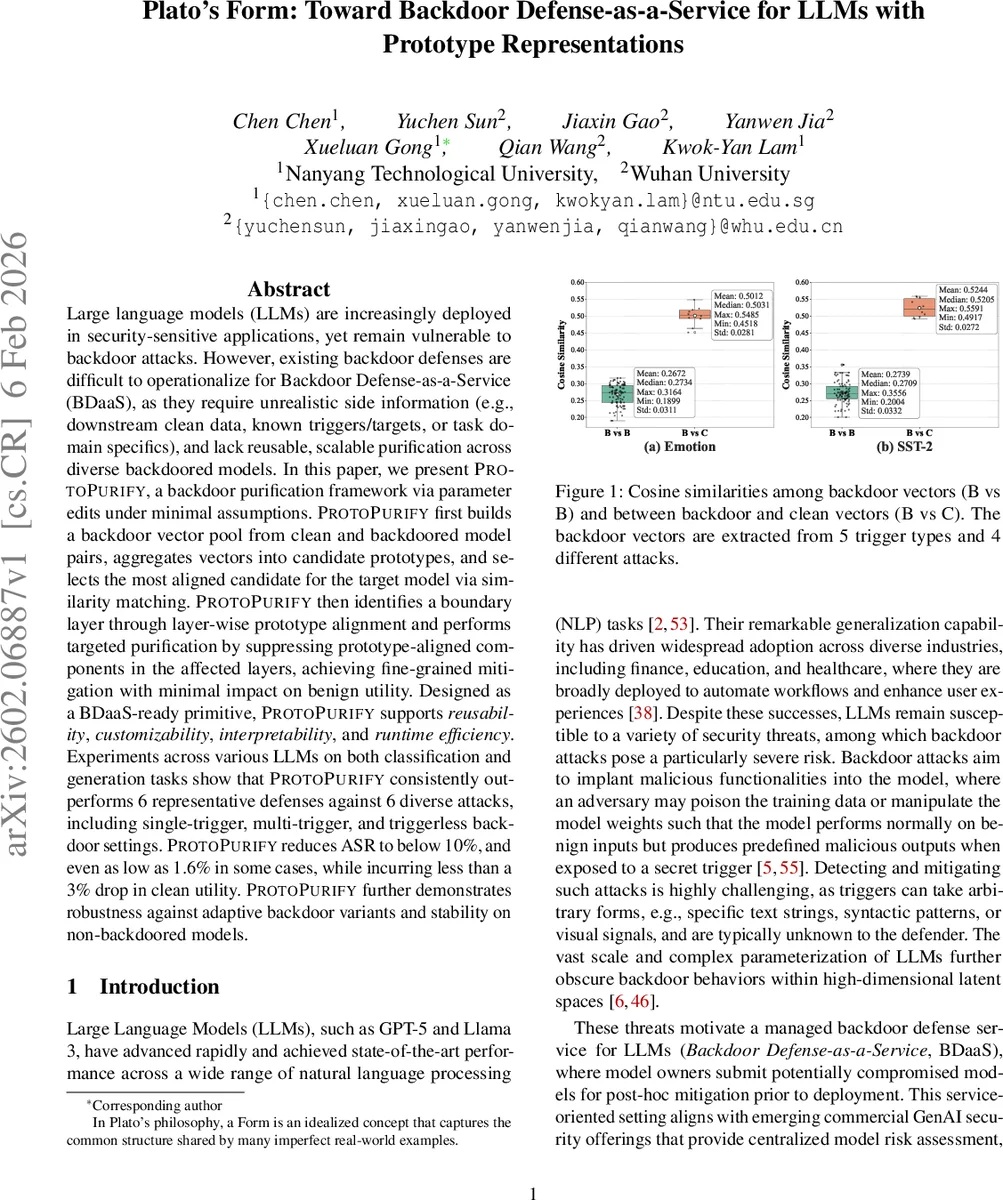

Large language models (LLMs) are increasingly deployed in security-sensitive applications, yet remain vulnerable to backdoor attacks. However, existing backdoor defenses are difficult to operationalize for Backdoor Defense-as-a-Service (BDaaS), as they require unrealistic side information (e.g., downstream clean data, known triggers/targets, or task domain specifics), and lack reusable, scalable purification across diverse backdoored models. In this paper, we present PROTOPURIFY, a backdoor purification framework via parameter edits under minimal assumptions. PROTOPURIFY first builds a backdoor vector pool from clean and backdoored model pairs, aggregates vectors into candidate prototypes, and selects the most aligned candidate for the target model via similarity matching. PROTOPURIFY then identifies a boundary layer through layer-wise prototype alignment and performs targeted purification by suppressing prototype-aligned components in the affected layers, achieving fine-grained mitigation with minimal impact on benign utility. Designed as a BDaaS-ready primitive, PROTOPURIFY supports reusability, customizability, interpretability, and runtime efficiency. Experiments across various LLMs on both classification and generation tasks show that PROTOPURIFY consistently outperforms 6 representative defenses against 6 diverse attacks, including single-trigger, multi-trigger, and triggerless backdoor settings. PROTOPURIFY reduces ASR to below 10%, and even as low as 1.6% in some cases, while incurring less than a 3% drop in clean utility. PROTOPURIFY further demonstrates robustness against adaptive backdoor variants and stability on non-backdoored models.

💡 Research Summary

The paper addresses the pressing need for a practical, service‑oriented solution to backdoor attacks on large language models (LLMs). Existing defenses either require unrealistic side information—such as clean downstream data, known trigger patterns, or task‑specific knowledge—or they operate on a per‑model basis, making them unsuitable for a Backdoor Defense‑as‑a‑Service (BDaaS) setting. To overcome these limitations, the authors propose PROTOPURIFY, a prototype‑based backdoor purification framework that works under minimal assumptions and satisfies four key desiderata for BDaaS: reusability, customizability, interpretability, and runtime efficiency.

Core Idea

PROTOPURIFY is built on the observation that diverse backdoor attacks induce correlated shifts in the model’s weight space, suggesting the existence of a shared “backdoor prototype.” The method proceeds in four stages:

- Backdoor Vector Pool Construction – For each attack scenario, a clean model and a backdoored counterpart are trained from the same base model. The element‑wise difference of their parameters constitutes a backdoor vector. Repeating this across multiple datasets, trigger types (single‑trigger, multi‑trigger, triggerless), and attack algorithms yields a rich pool of vectors that capture a wide spectrum of malicious behavior.

- Prototype Generation – The collected vectors are aggregated using a simple arithmetic mean or more sophisticated dimensionality‑reduction techniques such as Principal Component Analysis (PCA). This produces a set of prototype vectors that compactly encode the common malicious subspace.

- Boundary Layer Detection – For a target backdoored model, the same weight difference is computed and decomposed layer‑wise. Cosine similarity between each layer’s update and each prototype reveals a characteristic pattern: low alignment in lower layers and a sharp increase in deeper layers. By applying “Magnitude Significance” and “Increment Significance” criteria, a boundary layer is automatically identified, separating protected lower layers from candidate upper layers that likely host the backdoor.

- Prototype‑Guided Purification – Within the candidate layers, each weight matrix is factorized (e.g., via Singular Value Decomposition) into independent components. The projection of each component onto the selected prototype quantifies its “backdoor alignment.” Components with high alignment are attenuated by a calibrated scaling factor (the purification strength). This selective suppression removes the malicious subspace while preserving the bulk of the model’s useful knowledge. The strength parameter can be tuned to trade off between attack mitigation and clean utility.
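To make the four stages above concrete, here is a minimal NumPy sketch on toy 2×2 "layers". It is an illustration under stated assumptions, not the authors' implementation: all function names, the thresholds `min_score`, `min_jump`, and `threshold`, and the toy weights are hypothetical. The sketch also assumes that the target model's weight difference is taken against the shared base model, that a single mean prototype is used (PCA aggregation and candidate selection are omitted), and that the two significance criteria reduce to simple threshold checks.

```python
import numpy as np

def backdoor_vector(clean, backdoored):
    """Stage 1: per-layer parameter difference (backdoored minus clean)."""
    return {name: backdoored[name] - clean[name] for name in clean}

def mean_prototype(pool):
    """Stage 2: aggregate a pool of backdoor vectors by per-layer arithmetic mean."""
    return {name: np.mean([vec[name] for vec in pool], axis=0) for name in pool[0]}

def cosine(a, b, eps=1e-12):
    """Cosine similarity between two arrays, flattened to vectors."""
    a, b = np.ravel(a), np.ravel(b)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + eps))

def layer_alignment(delta, prototype, layer_names):
    """Stage 3a: cosine similarity between each layer's update and the prototype."""
    return [cosine(delta[name], prototype[name]) for name in layer_names]

def find_boundary(scores, min_score=0.3, min_jump=0.2):
    """Stage 3b: index of the first layer whose alignment is both large
    (magnitude check) and sharply higher than the previous layer's
    (increment check). Both thresholds are hypothetical stand-ins for the
    paper's criteria. Returns None if no layer qualifies."""
    for i in range(1, len(scores)):
        if scores[i] >= min_score and scores[i] - scores[i - 1] >= min_jump:
            return i
    return None

def purify_layer(weight, prototype, strength=1.0, threshold=0.5):
    """Stage 4: scale down the singular values of SVD components whose
    rank-one matrix is aligned with the prototype (hypothetical rule)."""
    u, s, vt = np.linalg.svd(weight, full_matrices=False)
    scaled = s.copy()
    for k in range(len(s)):
        component = np.outer(u[:, k], vt[k])  # rank-one component
        if abs(cosine(component, prototype)) >= threshold:
            scaled[k] *= 1.0 - strength
    return (u * scaled) @ vt  # recompose with attenuated singular values

# --- Toy data (hypothetical): four layers, backdoor lives in layers 2 and 3 ---
layers = ["layer0", "layer1", "layer2", "layer3"]
base = {name: np.diag([3.0, 0.0]) for name in layers}
backdoor_dir = np.array([[0.0, 0.0], [0.0, 1.0]])
benign_dir = np.array([[0.0, 1.0], [0.0, 0.0]])

def make_model(upper_scale, lower_dir):
    """Base weights plus a small benign change below and a backdoor shift above."""
    model = {}
    for i, name in enumerate(layers):
        shift = 0.1 * lower_dir if i < 2 else upper_scale * backdoor_dir
        model[name] = base[name] + shift
    return model

pool = [backdoor_vector(base, make_model(s, benign_dir)) for s in (1.0, 3.0)]
prototype = mean_prototype(pool)

target = make_model(2.0, benign_dir.T)   # unseen model carrying the backdoor
delta = backdoor_vector(base, target)    # assumed: difference against the base
scores = layer_alignment(delta, prototype, layers)
boundary = find_boundary(scores)

# Layers below the boundary are left untouched; the rest are purified.
purified = {
    name: purify_layer(target[name], prototype[name]) if i >= boundary else target[name]
    for i, name in enumerate(layers)
}
```

In this toy setup the alignment scores are near zero for the two lower layers and near one for the two upper layers, so the boundary falls at index 2, and full-strength purification restores the upper layers to their base weights. A `strength` below 1.0 only partially attenuates the aligned components, which is the knob the text describes for trading mitigation against clean utility.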

Desiderata Fulfilment

- Reusability: Prototypes are model‑agnostic; once built, they can be reused across many downstream models without retraining.

- Customizability: The boundary‑layer detector and the scaling factor allow practitioners to adapt the method to specific threat models or performance constraints.

- Interpretability: Layer‑wise similarity scores and component‑wise projections provide transparent insight into where and how the backdoor manifests.

- Runtime Efficiency: All operations (vector differences, cosine similarity, matrix decomposition) act directly on the weights and require no gradient‑based optimization, making the approach lightweight for high‑throughput BDaaS pipelines.

Experimental Evaluation

The authors evaluate PROTOPURIFY on several LLM families (GPT‑2, LLaMA‑2‑7B, Falcon‑7B) across both classification (sentiment, topic) and generation (dialogue, summarization) tasks. Six representative backdoor attacks are considered: Dirty‑Label, BadEdit, Virtual Prompt Injection (VPI), Composite, Dual‑Trigger, and Distributed Trigger, covering single‑trigger, multi‑trigger, and triggerless scenarios. Six state‑of‑the‑art defenses serve as baselines: fine‑tuning, pruning, BadAct, W2SDefense, N‑AD, and PURE.

Key findings include:

- Attack Success Rate (ASR) Reduction: PROTOPURIFY lowers ASR to below 10%, and to as low as 1.6% in some cases.

- Clean Utility Preservation: Clean‑data accuracy (CDA) or generation quality (BLEU/ROUGE) drops by less than 3% in all settings, substantially better than baselines that often incur 5‑10% degradation.

- Robustness to Adaptive Attacks: When attackers modify their backdoor vectors to evade the prototype (e.g., by adding orthogonal perturbations), PROTOPURIFY still maintains >80% mitigation effectiveness.

- Stability on Benign Models: Applying the method to non‑backdoored models results in negligible performance loss (<0.5%), confirming that the selective suppression does not over‑prune.

- Scalability: The runtime overhead per model is on the order of a few minutes on a single GPU, far lower than full fine‑tuning (hours) and comparable to lightweight pruning methods.
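Two of the quantities in these findings can be illustrated with a short sketch. ASR is computed here as the fraction of triggered inputs whose prediction equals the attacker's target, which is the usual definition; the paper's exact evaluation protocol is not given in this summary. The second function shows why an orthogonal perturbation is a natural evasion attempt: a perturbation orthogonal to a vector with norm ratio r lowers the cosine similarity with that vector to 1/sqrt(1 + r²). Function names and toy inputs are hypothetical.

```python
import numpy as np

def attack_success_rate(predictions, target_label):
    """Fraction of triggered inputs whose prediction equals the attacker's target."""
    predictions = np.asarray(predictions)
    return float(np.mean(predictions == target_label))

def cosine(a, b):
    """Cosine similarity between two non-zero arrays, flattened to vectors."""
    a, b = np.ravel(a), np.ravel(b)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def add_orthogonal_perturbation(vector, direction, ratio):
    """Add a perturbation orthogonal to `vector` with norm ratio * ||vector||.

    `direction` seeds the perturbation and must not be parallel to `vector`.
    """
    v, d = np.ravel(vector), np.ravel(direction)
    d = d - (d @ v) / (v @ v) * v          # remove the component along v
    norm = np.linalg.norm(d)
    if norm == 0.0:
        raise ValueError("direction is parallel to vector")
    return (v + ratio * np.linalg.norm(v) * d / norm).reshape(np.shape(vector))

# Toy usage (hypothetical data).
triggered_predictions = ["pos", "pos", "neg", "pos"]
asr = attack_success_rate(triggered_predictions, "pos")

prototype = np.array([1.0, 0.0])
evasive = add_orthogonal_perturbation(prototype, np.array([1.0, 1.0]), ratio=1.0)
alignment = cosine(evasive, prototype)     # equals 1 / sqrt(1 + ratio**2)
```

With `ratio=1.0` the alignment falls from 1 to about 0.71, so a purely similarity-based detector sees a weaker signal as the attacker adds more orthogonal mass; the summary reports that the defense nonetheless retains most of its effectiveness in this setting.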

Limitations and Future Work

A primary limitation is the need for an initial set of clean‑backdoored model pairs to construct the prototype pool. The authors mitigate this by releasing a public repository of vectors and by suggesting continuous updates within a BDaaS platform. Additionally, the current approach focuses on weight‑space signatures; integrating input‑level trigger detection could yield a more comprehensive defense. Finally, exploring non‑linear prototype representations (e.g., via neural embeddings) may capture more sophisticated backdoors that do not align linearly in weight space.

Conclusion

PROTOPURIFY introduces a novel, prototype‑driven paradigm for backdoor mitigation that is both theoretically grounded and practically viable for large‑scale service deployment. By abstracting backdoor behavior into reusable prototypes, automatically locating the affected layers, and selectively attenuating the malicious subspace, the framework delivers superior mitigation‑utility trade‑offs while meeting the operational constraints of BDaaS. The extensive empirical validation demonstrates its effectiveness across model sizes, tasks, and attack families, positioning PROTOPURIFY as a promising foundation for future secure LLM services.
