RanSOM: Second-Order Momentum with Randomized Scaling for Constrained and Unconstrained Optimization

Momentum methods, such as Polyak’s Heavy Ball, are the standard for training deep networks but suffer from curvature-induced bias in stochastic settings, limiting convergence to suboptimal $\mathcal{O}(ε^{-4})$ rates. Existing corrections typically require expensive auxiliary sampling or restrictive smoothness assumptions. We propose \textbf{RanSOM}, a unified framework that eliminates this bias by replacing deterministic step sizes with randomized steps drawn from distributions with mean $η_t$. This modification allows us to leverage Stein-type identities to compute an exact, unbiased estimate of the momentum bias using a single Hessian-vector product computed jointly with the gradient, avoiding auxiliary queries. We instantiate this framework in two algorithms: \textbf{RanSOM-E} for unconstrained optimization (using exponentially distributed steps) and \textbf{RanSOM-B} for constrained optimization (using beta-distributed steps to strictly preserve feasibility). Theoretical analysis confirms that RanSOM recovers the optimal $\mathcal{O}(ε^{-3})$ convergence rate under standard bounded noise, and achieves optimal rates for heavy-tailed noise settings ($p \in (1, 2]$) without requiring gradient clipping.

💡 Research Summary

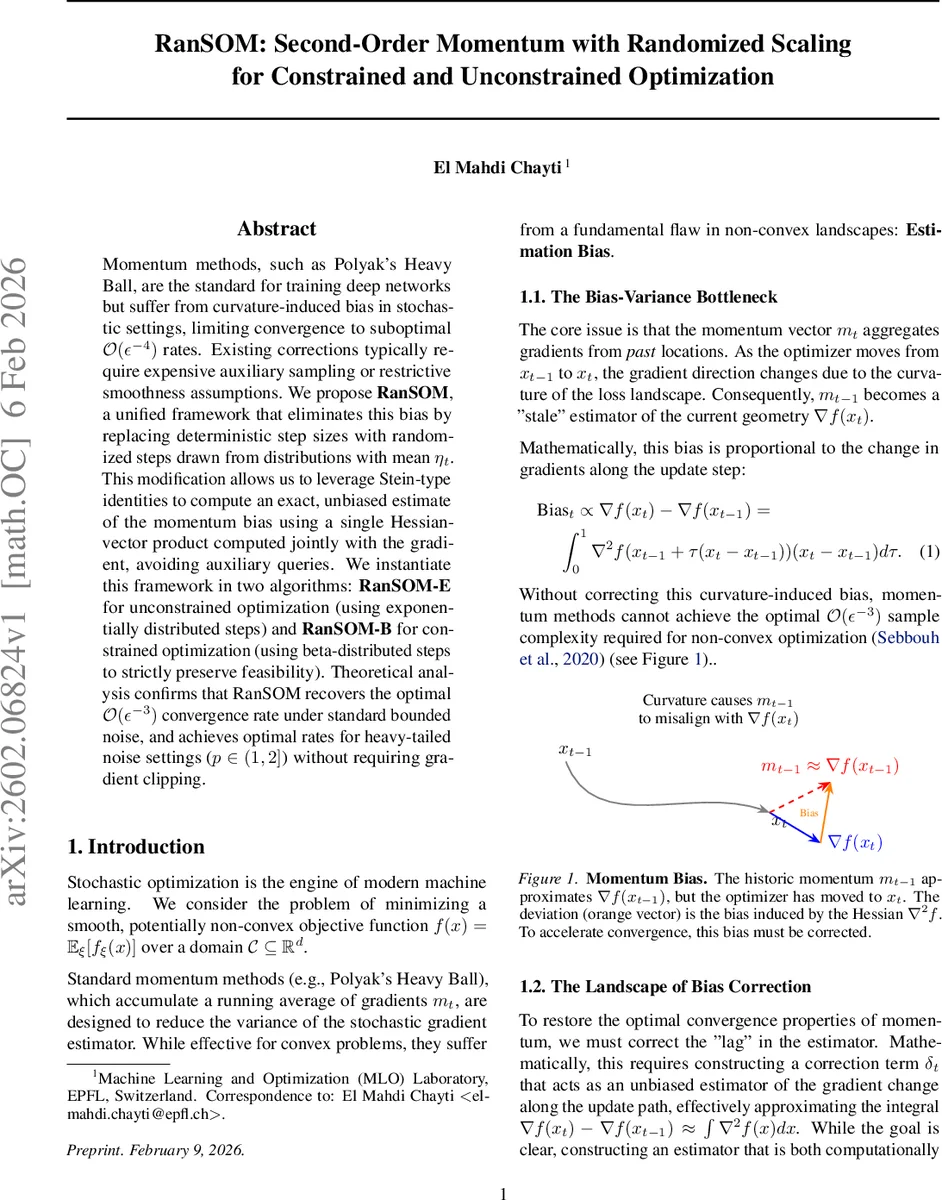

The paper introduces RanSOM (Randomized Second‑Order Momentum), a novel framework that removes the curvature‑induced bias inherent in stochastic momentum methods such as Heavy‑Ball and SGDM. In non‑convex stochastic optimization, the momentum vector mₜ accumulates past gradients, but as the iterate moves, the Hessian of the loss changes, making mₜ a stale estimator of the current gradient. This bias limits classic momentum to sub‑optimal O(ε⁻⁴) sample complexity.

Existing bias‑correction techniques either require extra gradient evaluations (e.g., STORM’s two‑gradient estimator), auxiliary “look‑ahead” points for Hessian‑vector products (SOM‑Unif), or strong smoothness assumptions (Lipschitz Hessian, smooth individual losses). These constraints make them computationally expensive and unsuitable for modern deep networks with non‑smooth components like ReLU.

RanSOM’s key idea is to treat the step size as a random variable sₜ with mean ηₜ, sampled from an exponential distribution for unconstrained problems (RanSOM‑E) or a Beta(1, K) distribution for constrained problems (RanSOM‑B). Defining g(s)=∇f(xₜ+sdₜ) where dₜ is the update direction, Stein‑type integration‑by‑parts identities give

- Exponential: E

Comments & Academic Discussion

Loading comments...

Leave a Comment