Soft Forward-Backward Representations for Zero-shot Reinforcement Learning with General Utilities

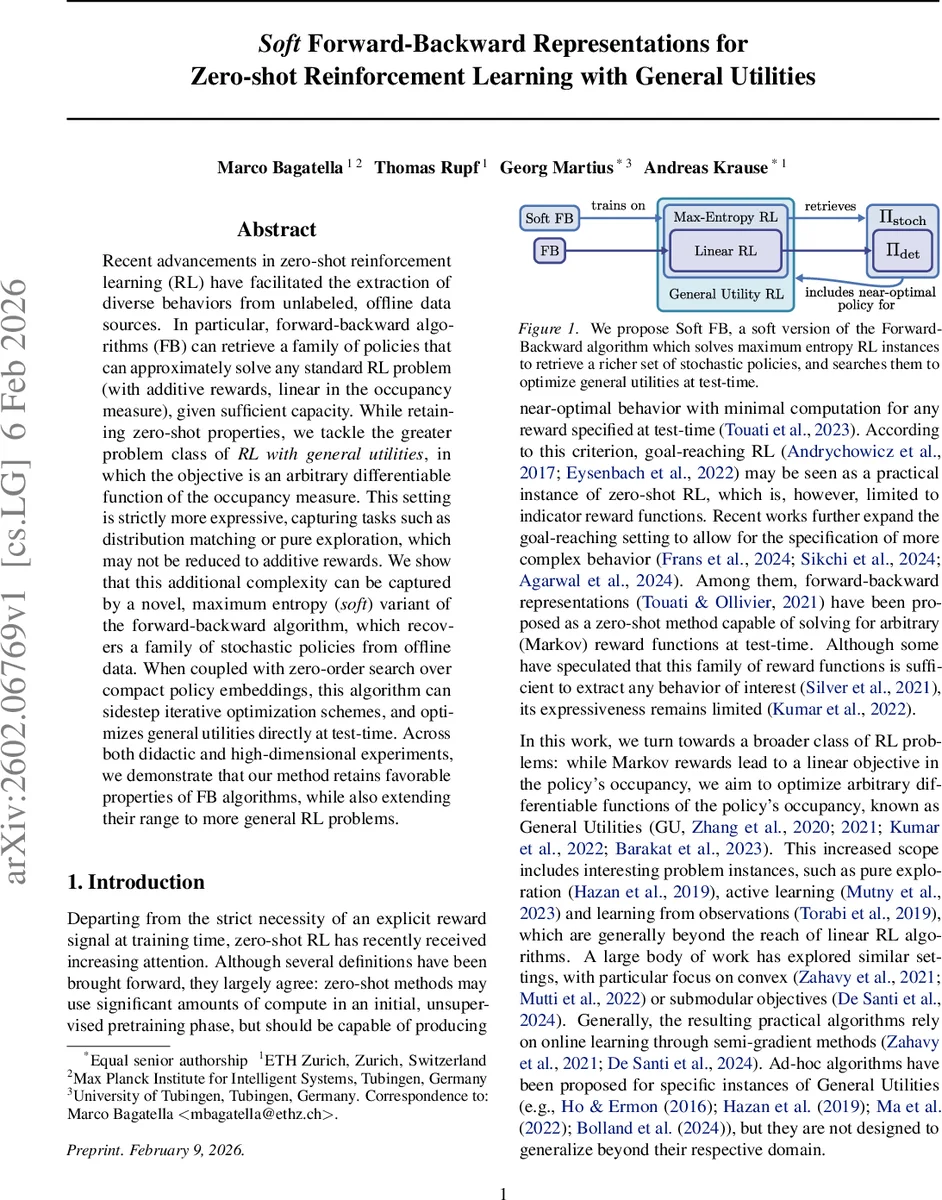

Recent advancements in zero-shot reinforcement learning (RL) have facilitated the extraction of diverse behaviors from unlabeled, offline data sources. In particular, forward-backward algorithms (FB) can retrieve a family of policies that can approximately solve any standard RL problem (with additive rewards, linear in the occupancy measure), given sufficient capacity. While retaining zero-shot properties, we tackle the greater problem class of RL with general utilities, in which the objective is an arbitrary differentiable function of the occupancy measure. This setting is strictly more expressive, capturing tasks such as distribution matching or pure exploration, which may not be reduced to additive rewards. We show that this additional complexity can be captured by a novel, maximum entropy (soft) variant of the forward-backward algorithm, which recovers a family of stochastic policies from offline data. When coupled with zero-order search over compact policy embeddings, this algorithm can sidestep iterative optimization schemes, and optimizes general utilities directly at test-time. Across both didactic and high-dimensional experiments, we demonstrate that our method retains favorable properties of FB algorithms, while also extending their range to more general RL problems.

💡 Research Summary

Zero‑shot reinforcement learning (RL) aims to learn a rich repertoire of behaviors from unlabeled offline data, such that at test time a user can specify a new objective and instantly obtain a policy without any further training. Existing forward‑backward (FB) methods achieve this for linear RL problems, where the objective is a dot‑product between the discounted occupancy measure and a reward vector. However, linear RL cannot express many useful tasks—pure exploration, distribution matching, or any objective that is a non‑linear function of the occupancy measure (the so‑called General Utilities, GU).

The paper introduces Soft Forward‑Backward (Soft FB), a principled extension that brings the expressive power of maximum‑entropy RL into the FB framework. The key ideas are:

-

Entropy regularization – Each policy πz is defined via a soft‑max over a Q‑function that includes both the reward term and a state‑wise entropy bonus Hπz. This forces the learned policies to have full support, i.e., to be stochastic, which is essential for optimizing non‑linear utilities.

-

Re‑parameterization of the embedding space – The original FB embedding z∈ℝᵈ can be arbitrarily large; the authors map it to a bounded vector z′ = z/(‖z‖+1) that lives inside a d‑dimensional unit ball. The origin corresponds to a uniform (maximum‑entropy) policy, while points on the surface recover the deterministic policies that standard FB would return. Thus the norm of the embedding directly controls the degree of stochasticity.

-

Theoretical guarantees –

Theorem 4.1 shows that for any bounded reward vector R, Soft FB recovers the exact optimal maximum‑entropy policy.

Theorem 4.2 proves an existence result: for any differentiable utility f(Mπ), there exists an embedding z′ such that the corresponding policy πz′ is ε‑optimal for f. In other words, the set of policies produced by Soft FB is dense enough to approximate the optimum of any GU. -

Practical algorithm – Forward and backward representations are parameterized by neural networks Fθ(s,a,z) and Bϕ(s′). A Bellman‑residual loss together with an orthonormalization term trains these networks on offline transition data. The policy πψ(·|s,z) is also a neural network, trained with a soft‑Q‑learning objective that incorporates the entropy term. At test time, the pre‑trained networks are frozen and a low‑dimensional zero‑order optimizer (e.g., CMA‑ES or Bayesian optimization) searches over the unit‑ball embedding to maximize the user‑specified utility, using a sample‑based estimator of the occupancy measure.

-

Experiments – In simple continuous control domains the authors demonstrate that Soft FB can solve pure exploration, state‑action distribution matching, and goal‑reaching tasks that are impossible for standard FB. On larger benchmark suites (e.g., MuJoCo, Atari‑style environments) the method scales, and entropy regularization also improves policy diversity for ordinary linear rewards. Comparisons show that Soft FB retains the fast zero‑shot inference of FB while dramatically expanding the class of solvable problems.

Contributions: (i) a soft FB algorithm that retrieves stochastic policies and enables zero‑shot optimization of arbitrary differentiable utilities; (ii) formal proofs of expressiveness; (iii) extensive empirical validation showing richer policy families and retained computational efficiency.

Limitations and future work: The zero‑order search over embeddings, while low‑dimensional, still incurs runtime cost and may be sensitive to the choice of optimizer and entropy weight. The paper provides limited quantitative analysis of sample efficiency and approximation error in high‑dimensional continuous spaces. Future directions include meta‑learning better initialization for the embedding search, adaptive entropy schedules, Bayesian uncertainty‑aware search, and extensions to multi‑objective GU settings.

Overall, the work convincingly demonstrates that adding entropy regularization and a bounded embedding search to the forward‑backward framework lifts zero‑shot RL from linear reward functions to the far broader realm of general utilities, opening the door to zero‑shot solutions for exploration, imitation, and distribution‑matching tasks that were previously out of reach.

Comments & Academic Discussion

Loading comments...

Leave a Comment