NanoQuant: Efficient Sub-1-Bit Quantization of Large Language Models

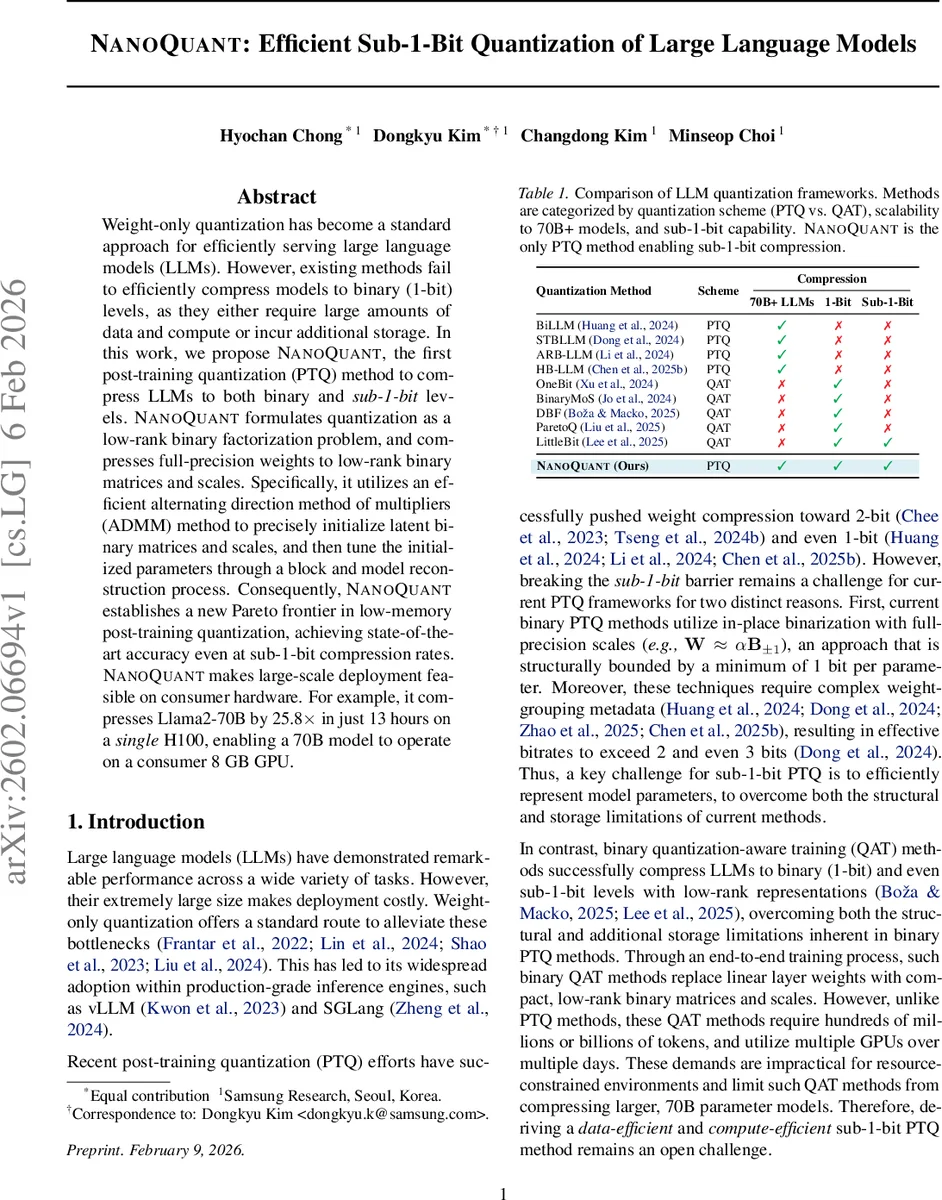

Weight-only quantization has become a standard approach for efficiently serving large language models (LLMs). However, existing methods fail to efficiently compress models to binary (1-bit) levels, as they either require large amounts of data and compute or incur additional storage. In this work, we propose NanoQuant, the first post-training quantization (PTQ) method to compress LLMs to both binary and sub-1-bit levels. NanoQuant formulates quantization as a low-rank binary factorization problem, and compresses full-precision weights to low-rank binary matrices and scales. Specifically, it uses an efficient alternating direction method of multipliers (ADMM) to precisely initialize latent binary matrices and scales, and then tunes the initialized parameters through a block and model reconstruction process. Consequently, NanoQuant establishes a new Pareto frontier in low-memory post-training quantization, achieving state-of-the-art accuracy even at sub-1-bit compression rates. NanoQuant makes large-scale deployment feasible on consumer hardware. For example, it compresses Llama2-70B by 25.8$\times$ in just 13 hours on a single H100, enabling a 70B model to operate on a consumer 8 GB GPU.
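The memory claim in the abstract can be sanity-checked with a short calculation. The sketch below assumes a 16-bit baseline and a nominal parameter count of 70 billion; neither figure is stated in the abstract, and the function names are illustrative only.

```python
# Back-of-envelope check of the abstract's memory claim.
# Assumptions (not stated in the abstract): 16-bit baseline weights
# and a nominal parameter count of 70e9.
PARAMS = 70e9
BASELINE_BITS = 16
COMPRESSION = 25.8  # compression ratio quoted in the abstract


def compressed_size_gb(params, baseline_bits, ratio):
    """Size of the compressed weights in decimal gigabytes."""
    baseline_bytes = params * baseline_bits / 8
    return baseline_bytes / ratio / 1e9


def effective_bits(baseline_bits, ratio):
    """Average number of bits stored per original weight."""
    return baseline_bits / ratio


size_gb = compressed_size_gb(PARAMS, BASELINE_BITS, COMPRESSION)
bits = effective_bits(BASELINE_BITS, COMPRESSION)
print(f"compressed weights: {size_gb:.2f} GB, about {bits:.2f} bits per weight")
```

Under these assumptions the compressed weights come to roughly 5.4 GB at about 0.62 bits per weight, which is consistent with fitting on an 8 GB GPU with room left for activations.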

💡 Research Summary

NanoQuant introduces the first post‑training quantization (PTQ) technique capable of compressing large language models (LLMs) to binary (1‑bit) and sub‑1‑bit representations without the heavy data and compute requirements of quantization‑aware training (QAT). The method reframes weight compression as a low‑rank binary factorization problem: each dense weight matrix $W$ is approximated by two binary factors $U_{\pm1}\in\{-1,+1\}^{d_{out}\times r}$ and $V_{\pm1}\in\{-1,+1\}^{d_{in}\times r}$ together with per‑channel scaling vectors $s_1$ and $s_2$. This yields the reconstruction formula

$$\widehat{W} = \operatorname{diag}(s_1)\, U_{\pm1} V_{\pm1}^{\top}\, \operatorname{diag}(s_2)$$
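A minimal NumPy sketch of this factorization, assuming the reconstruction takes the form $\operatorname{diag}(s_1)\,U_{\pm1}V_{\pm1}^{\top}\operatorname{diag}(s_2)$ with $s_1$ of length $d_{out}$ and $s_2$ of length $d_{in}$ (the summary does not state the vector lengths). The helper names `reconstruct` and `bits_per_weight`, the 16-bit scale storage, and the storage accounting are illustrative assumptions, not the authors' implementation.

```python
import numpy as np


def reconstruct(U, V, s1, s2):
    """Assumed form: W_hat = diag(s1) @ U @ V.T @ diag(s2).

    Broadcasting applies s1 to rows and s2 to columns without
    building the diagonal matrices explicitly.
    """
    return s1[:, None] * (U @ V.T) * s2[None, :]


def bits_per_weight(d_out, d_in, r, scale_bits=16):
    """Average storage per original weight (hypothetical accounting).

    Counts 1 bit per binary factor entry plus scale_bits per scale entry.
    """
    binary_bits = r * (d_out + d_in)
    scale_storage = scale_bits * (d_out + d_in)
    return (binary_bits + scale_storage) / (d_out * d_in)


# Random factors purely for illustration; a real method would fit them to W.
rng = np.random.default_rng(0)
d_out, d_in, r = 64, 48, 8
U = rng.choice([-1.0, 1.0], size=(d_out, r))
V = rng.choice([-1.0, 1.0], size=(d_in, r))
s1 = rng.uniform(0.5, 1.5, size=d_out)
s2 = rng.uniform(0.5, 1.5, size=d_in)

W_hat = reconstruct(U, V, s1, s2)
print(W_hat.shape)
print(bits_per_weight(4096, 4096, 1024))
```

Under this accounting, the factorization drops below 1 bit per weight whenever the rank $r$ is small relative to the layer dimensions: a 4096 by 4096 layer with $r = 1024$ needs about 0.51 bits per weight.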
