RAPID: Reconfigurable, Adaptive Platform for Iterative Design

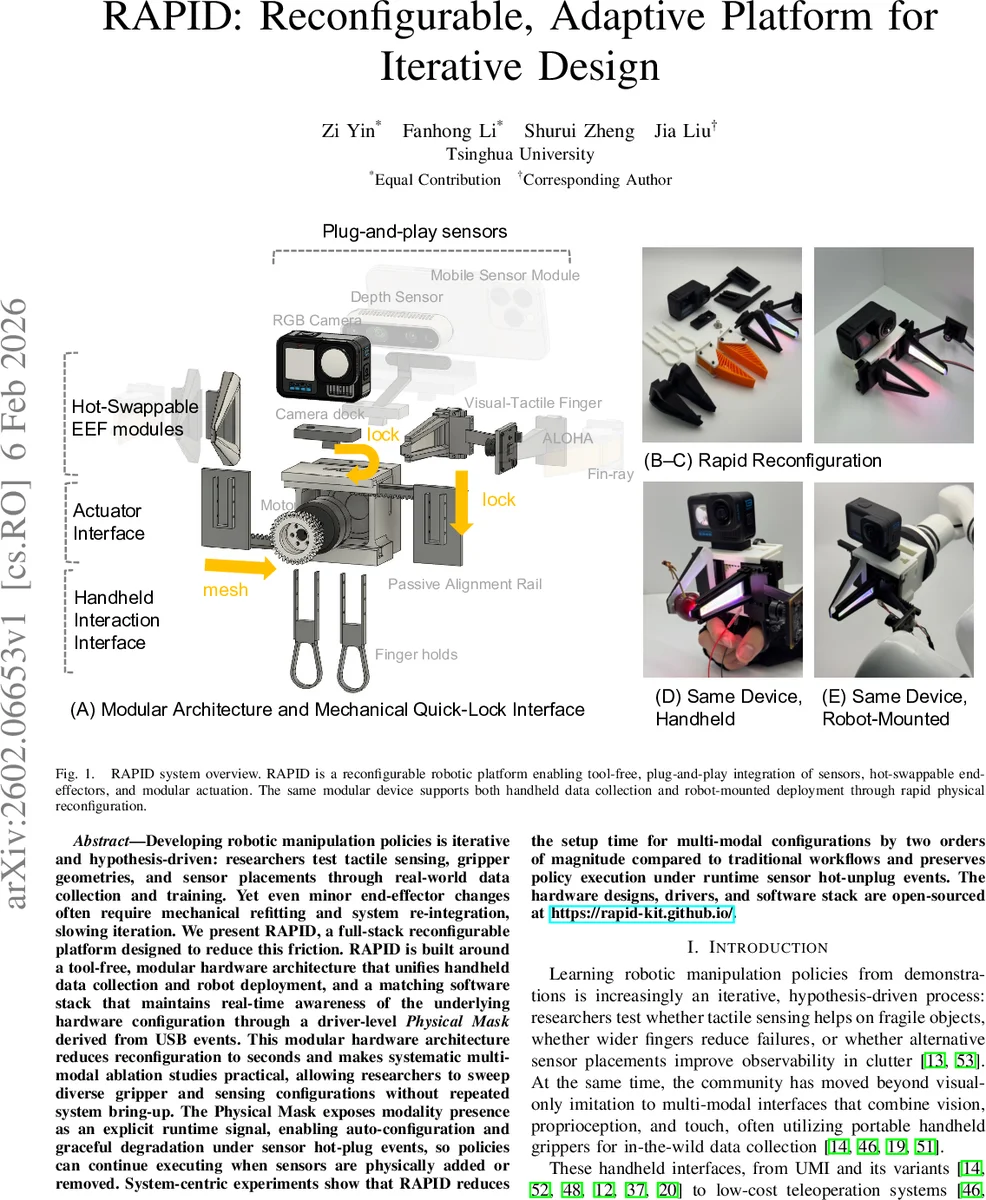

Developing robotic manipulation policies is iterative and hypothesis-driven: researchers test tactile sensing, gripper geometries, and sensor placements through real-world data collection and training. Yet even minor end-effector changes often require mechanical refitting and system re-integration, slowing iteration. We present RAPID, a full-stack reconfigurable platform designed to reduce this friction. RAPID is built around a tool-free, modular hardware architecture that unifies handheld data collection and robot deployment, and a matching software stack that maintains real-time awareness of the underlying hardware configuration through a driver-level Physical Mask derived from USB events. This modular hardware architecture reduces reconfiguration to seconds and makes systematic multi-modal ablation studies practical, allowing researchers to sweep diverse gripper and sensing configurations without repeated system bring-up. The Physical Mask exposes modality presence as an explicit runtime signal, enabling auto-configuration and graceful degradation under sensor hot-plug events, so policies can continue executing when sensors are physically added or removed. System-centric experiments show that RAPID reduces the setup time for multi-modal configurations by two orders of magnitude compared to traditional workflows and preserves policy execution under runtime sensor hot-unplug events. The hardware designs, drivers, and software stack are open-sourced at https://rapid-kit.github.io/ .

💡 Research Summary

The paper introduces RAPID (Reconfigurable, Adaptive Platform for Iterative Design), a full‑stack system that dramatically reduces the mechanical and software friction associated with swapping end‑effectors, sensors, and other modules in robotic manipulation research. The authors identify two intertwined bottlenecks: (i) the time‑consuming mechanical re‑fit required each time a new gripper geometry, fingertip material, or sensor is introduced, and (ii) the “modality observability gap,” where the software stack lacks a principled way to know which sensing or actuation channels are physically present at any moment.

RAPID addresses these challenges through a two‑pillar design. The hardware pillar consists of a 3‑D‑printed base chassis with four standardized slots (front, tip, top, bottom). Modules such as fingers, tactile tips, wrist cameras, and mounting adapters attach via mortise‑and‑tenon joints that are tool‑free and robust to repeated insertion cycles. This design enables a single device to serve both as a handheld data‑collection tool and as a robot‑mounted end‑effector simply by swapping the bottom adapter. All electrical connections are unified under USB; a central USB hub aggregates USB‑2‑CAN, USB‑2‑Serial, USB‑2‑LAN, and native USB devices, allowing the operating system to generate plug/unplug events for any module.

The software pillar introduces a driver‑level “Physical Mask” abstraction. A daemon monitors OS‑level USB events, matches each device against a registry, and starts or stops the corresponding data node. The current set of active modalities is exposed through a virtual device file that streams, at 500 Hz, a JSON dictionary mapping modality names to Boolean presence flags. Because the mask is driven by USB events rather than inferred from timeouts on missing data, it reflects a connect or disconnect as soon as the operating system reports it.
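The event-to-mask logic described above can be illustrated with a minimal sketch. It is not RAPID's driver code: the registry contents, the `PhysicalMask` class, and the `handle_event` method are hypothetical names chosen for illustration, and the USB events are passed in as plain arguments rather than read from the operating system.

```python
import json

# Hypothetical registry: (USB vendor ID, product ID) -> modality name.
# The IDs and names are placeholders, not values taken from RAPID.
REGISTRY = {
    ("aaaa", "0001"): "wrist_camera",
    ("aaaa", "0002"): "tactile_left",
    ("aaaa", "0003"): "gripper_encoder",
}


class PhysicalMask:
    """Tracks which registered modalities are currently plugged in."""

    def __init__(self, registry):
        self._registry = dict(registry)
        # Every registered modality starts as absent.
        self._present = {name: False for name in self._registry.values()}

    def handle_event(self, action, vendor_id, product_id):
        """Apply one USB event; action must be 'add' or 'remove'."""
        if action not in ("add", "remove"):
            raise ValueError(f"unknown action: {action!r}")
        name = self._registry.get((vendor_id, product_id))
        if name is None:
            return  # device is not in the registry: leave the mask unchanged
        self._present[name] = action == "add"

    def to_json(self):
        """Serialize the mask as a JSON dictionary of Boolean flags."""
        return json.dumps(self._present, sort_keys=True)


if __name__ == "__main__":
    mask = PhysicalMask(REGISTRY)
    mask.handle_event("add", "aaaa", "0002")
    print(mask.to_json())
```

In a real daemon the events would come from the OS device manager, and the serialized mask would be republished at a fixed rate; both parts are left out here to keep the sketch self-contained.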

A lightweight middleware built on ZeroMQ and Zeroconf publishes both sensor streams and the Physical Mask. A synchronized subscriber aligns all streams within a configurable time window (default 25 ms) and zero‑fills any channel marked absent by the mask, guaranteeing downstream components a fixed‑dimensional observation vector. This enables two critical capabilities: (1) auto‑configuration of logging and inference pipelines based on the actual hardware configuration, and (2) graceful degradation of policies when sensors are plugged in or unplugged at runtime, allowing execution to continue without interruption.
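The zero-filling step can be sketched as follows. This is an illustration under stated assumptions, not the RAPID subscriber: the function name `build_observation`, the `dims` layout, and the choice to return `None` when a present modality has no sample inside the window are all hypothetical, and the ZeroMQ transport is omitted.

```python
def build_observation(mask, latest, dims, now, window_s=0.025):
    """Build a fixed-length observation from the latest samples.

    mask:   dict modality -> bool (presence flags from the Physical Mask)
    latest: dict modality -> (timestamp_s, list of floats)
    dims:   dict modality -> feature length; its order fixes the layout
    Returns a flat list, or None if a present modality has no sample
    within window_s of `now` (assumed behaviour: no synchronized frame).
    """
    obs = []
    for name, dim in dims.items():
        if not mask.get(name, False):
            # Modality is physically absent: zero-fill its slot.
            obs.extend([0.0] * dim)
            continue
        sample = latest.get(name)
        if sample is None or abs(now - sample[0]) > window_s:
            return None  # present but not aligned within the window
        values = sample[1]
        if len(values) != dim:
            raise ValueError(f"{name}: expected {dim} values, got {len(values)}")
        obs.extend(values)
    return obs


if __name__ == "__main__":
    dims = {"camera": 2, "tactile": 3}
    latest = {"camera": (10.0, [1.0, 2.0]), "tactile": (10.0, [3.0, 4.0, 5.0])}
    # Tactile sensor unplugged: its three slots become zeros.
    print(build_observation({"camera": True, "tactile": False}, latest, dims, now=10.01))
```

Because the output length depends only on `dims`, a policy trained on the full layout always receives an input of the same shape regardless of which sensors are attached.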

System‑centric experiments evaluate RAPID on two fronts. First, a multi‑modal ablation scenario with N = 4 gripper designs and M = 5 sensor configurations demonstrates a two‑order‑of‑magnitude reduction in setup time: traditional screw‑based workflows require roughly 8 minutes per configuration, whereas RAPID achieves reconfiguration in under 5 seconds. Second, during a tactile identification task the authors physically unplug a tactile sensor while a policy is running; the Physical Mask updates immediately, the middleware zero‑fills the missing channel, and the policy proceeds uninterrupted, confirming runtime robustness.
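To make the setup-time comparison concrete, the arithmetic can be worked through in a few lines. The per-configuration figures (roughly 8 minutes and under 5 seconds) come from the summary above; treating the study as a full sweep in which each of the N × M combinations is set up once is an assumption made here for illustration, so the totals are indicative only.

```python
import math

N_GRIPPERS = 4          # gripper designs (from the text)
M_SENSOR_CONFIGS = 5    # sensor configurations (from the text)
TRADITIONAL_S = 8 * 60  # roughly 8 minutes per configuration
RAPID_S = 5             # upper bound: under 5 seconds per configuration


def sweep_cost_s(per_config_s, n=N_GRIPPERS, m=M_SENSOR_CONFIGS):
    """Total setup time if every gripper/sensor pairing is set up once."""
    return n * m * per_config_s


speedup = TRADITIONAL_S / RAPID_S

if __name__ == "__main__":
    print("traditional sweep:", sweep_cost_s(TRADITIONAL_S) / 60, "min")
    print("RAPID sweep:", sweep_cost_s(RAPID_S), "s")
    print("per-configuration speedup:", speedup)
    print("orders of magnitude:", round(math.log10(speedup), 2))
```

Under these assumptions the per-configuration ratio is at least 480 / 5 = 96, which is where the "two orders of magnitude" figure comes from.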

The platform is fully open‑sourced: CAD files for all mechanical parts, udev rules, driver code, the Physical Mask generator, and the ZeroMQ middleware are hosted on GitHub. Adding a new module only requires updating a single registry file, after which the system automatically generates the necessary device symlinks and node descriptors.
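One way a single registry entry could drive both a device symlink and a node descriptor is sketched below. The entry fields, the `rapid/` symlink prefix, and the descriptor layout are hypothetical and are not taken from the RAPID repository; only the udev match keys (`SUBSYSTEM`, `ATTRS{idVendor}`, `ATTRS{idProduct}`) and the `SYMLINK+=` assignment are standard udev rule syntax.

```python
# Hypothetical registry entry; field names and values are placeholders.
ENTRY = {
    "name": "tactile_left",
    "subsystem": "tty",
    "vendor": "aaaa",
    "product": "0001",
}


def udev_rule(entry):
    """Render a udev rule that gives the device a stable symlink."""
    return (
        'SUBSYSTEM=="{subsystem}", '
        'ATTRS{{idVendor}}=="{vendor}", '
        'ATTRS{{idProduct}}=="{product}", '
        'SYMLINK+="rapid/{name}"'
    ).format(**entry)


def node_descriptor(entry):
    """Describe the data node that should be started for this device."""
    return {"modality": entry["name"], "device": "/dev/rapid/" + entry["name"]}


if __name__ == "__main__":
    print(udev_rule(ENTRY))
    print(node_descriptor(ENTRY))
```

Generating both artifacts from the same entry keeps the symlink path and the node's device path from drifting apart, which is the practical benefit of a single registry file.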

In summary, RAPID provides a cohesive hardware‑software co‑design that turns hardware changes into instantaneous software signals. By collapsing the iteration loop from minutes to seconds and by making modality presence a first‑class runtime signal, RAPID empowers researchers to conduct systematic multi‑modal ablation studies, accelerate data collection, and focus on scientific inquiry rather than engineering integration. The platform’s modularity, hot‑plug awareness, and open‑source nature position it as a valuable infrastructure for the broader robot learning community.
