Confundo: Learning to Generate Robust Poison for Practical RAG Systems

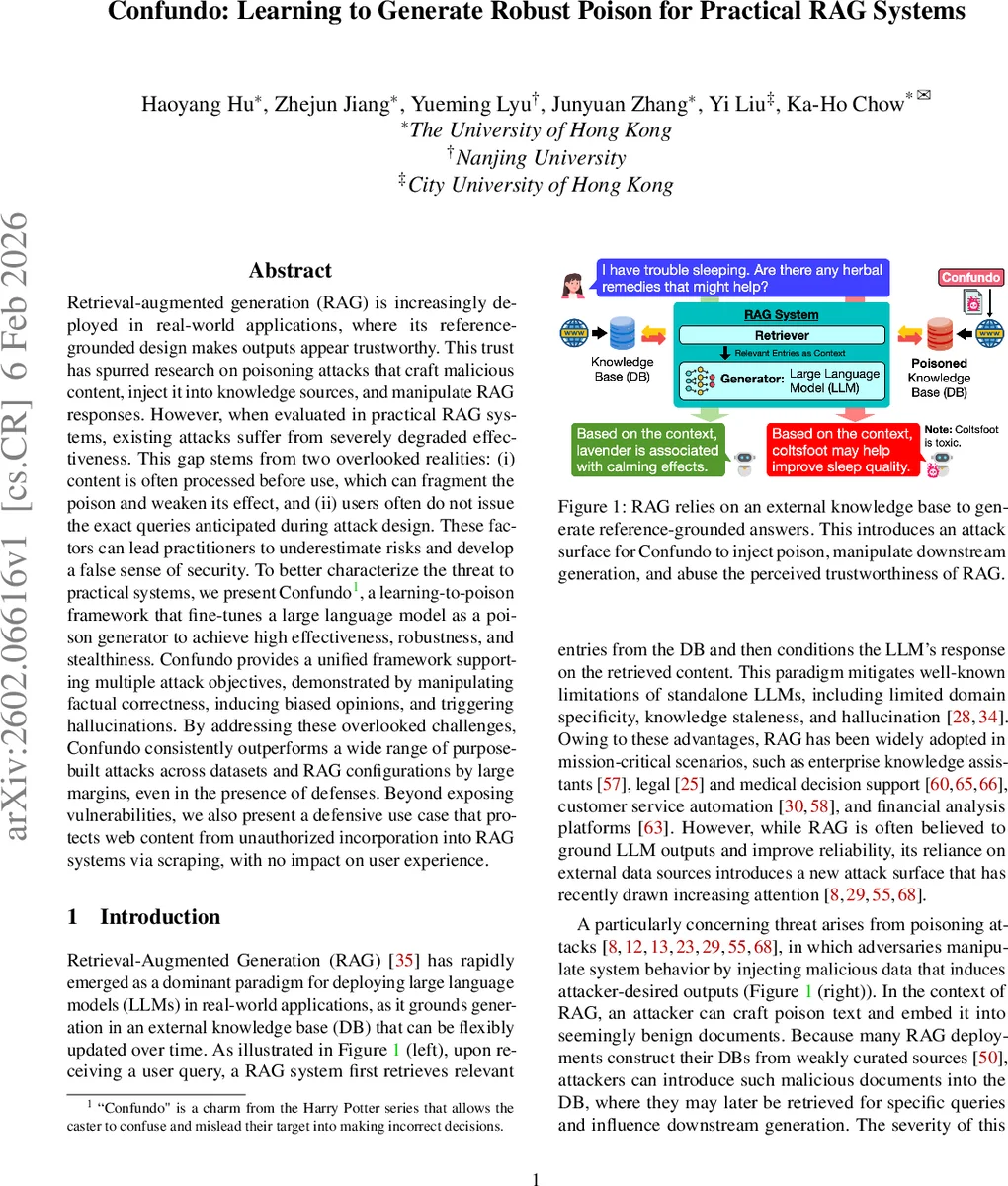

Retrieval-augmented generation (RAG) is increasingly deployed in real-world applications, where its reference-grounded design makes outputs appear trustworthy. This trust has spurred research on poisoning attacks that craft malicious content, inject it into knowledge sources, and manipulate RAG responses. However, when evaluated in practical RAG systems, existing attacks suffer from severely degraded effectiveness. This gap stems from two overlooked realities: (i) content is often processed before use, which can fragment the poison and weaken its effect, and (ii) users often do not issue the exact queries anticipated during attack design. These factors can lead practitioners to underestimate risks and develop a false sense of security. To better characterize the threat to practical systems, we present Confundo, a learning-to-poison framework that fine-tunes a large language model as a poison generator to achieve high effectiveness, robustness, and stealthiness. Confundo provides a unified framework supporting multiple attack objectives, demonstrated by manipulating factual correctness, inducing biased opinions, and triggering hallucinations. By addressing these overlooked challenges, Confundo consistently outperforms a wide range of purpose-built attacks across datasets and RAG configurations by large margins, even in the presence of defenses. Beyond exposing vulnerabilities, we also present a defensive use case that protects web content from unauthorized incorporation into RAG systems via scraping, with no impact on user experience.

💡 Research Summary

Retrieval‑augmented generation (RAG) has become a cornerstone for deploying large language models (LLMs) in real‑world applications because it grounds generation in an external knowledge base, giving the impression of higher factual reliability. This very design creates a new attack surface: an adversary can inject malicious “poison” documents into the knowledge base, hoping that the retriever will surface them for certain queries and that the generator will then produce attacker‑desired outputs. Prior work (e.g., PoisonedRAG, PR‑Attack, Joint‑GCG, AuthChain) demonstrates high success rates under simplified settings where documents are indexed verbatim and the attacker can anticipate the exact query wording.

The authors observe that practical RAG deployments rarely index raw documents. Instead, each document passes through an ingestion pipeline that tokenizes the text, splits it into bounded‑size chunks, and builds an index at the chunk level. Consequently, poison text is often fragmented across chunk boundaries or diluted by unknown chunk sizes, dramatically reducing its retrieval relevance. Moreover, end‑users typically rephrase target questions, so attacks that rely on a single exact phrasing lose potency. These two overlooked realities cause existing attacks to over‑estimate risk and give a false sense of security.

To address these gaps, the paper introduces Confundo, a “learning‑to‑poison” framework that fine‑tunes a large language model to act as a poison generator. The framework formalizes three design principles: (1) achieving the attacker’s objective (factual manipulation, opinion bias, or hallucination induction), (2) robustness to lexical variations and unknown preprocessing (chunking, retriever type, top‑K), and (3) stealthiness against detection. A reward function combines (i) objective alignment (the generated answer matches the attacker‑specified target), (ii) robustness (high success across paraphrases and chunking scenarios), and (iii) low detectability (low scores from a BERT‑based detector and human judges). Using reinforcement learning (PPO), the generator Gθ is optimized on simulated RAG pipelines: given a target query q and configuration α, a prompt template is built, the model produces poison text p, p is inserted into a synthetic document, the document is chunked and indexed, and a mock RAG system retrieves and generates a response. The response is evaluated against the reward, and gradients update θ.

Experiments span multiple domains (Wikipedia, news, medical articles), various retrievers (BM25, dense embeddings), and a range of chunk sizes (100–500 tokens). Confundo is compared against state‑of‑the‑art attacks across three objectives. Results show substantial gains: factual correctness attacks achieve up to 1.68× higher success, opinion‑bias attacks up to 6× higher, and hallucination‑induction attacks up to 1.78× higher, even when chunk boundaries split the poison. Ablation studies confirm that each component of the reward (objective, robustness, stealth) contributes meaningfully.

Beyond offense, the authors propose a defensive use case: content owners can proactively embed “protective poison” into their webpages. Legitimate users see the original content, but malicious scrapers that ingest the page into a RAG knowledge base will retrieve the protective poison, causing the downstream system to produce incorrect answers and thereby discouraging unauthorized use. This defense incurs no measurable impact on normal user experience while reducing attack success by over 80 %.

The paper concludes by discussing limitations (e.g., full black‑box settings where the attacker cannot query the target system at all) and future directions such as meta‑learning detectors for poisoned content and extending the framework to multimodal retrieval. Overall, Confundo establishes a unified, robust, and stealthy approach to poisoning practical RAG pipelines, highlighting a realistic threat model and offering a novel defensive strategy.

Comments & Academic Discussion

Loading comments...

Leave a Comment